VERGE: Formal Refinement and Guidance Engine for Verifiable LLM Reasoning

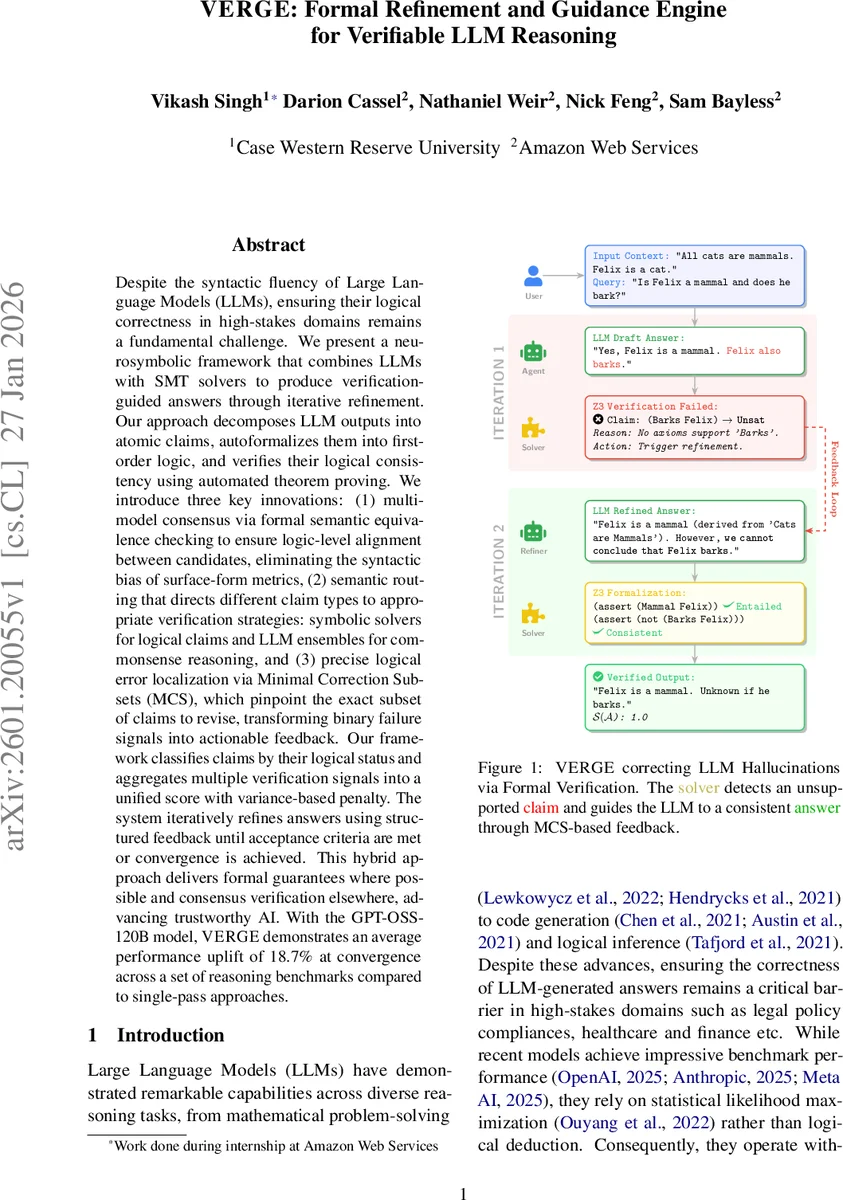

Despite the syntactic fluency of Large Language Models (LLMs), ensuring their logical correctness in high-stakes domains remains a fundamental challenge. We present a neurosymbolic framework that combines LLMs with SMT solvers to produce verification-guided answers through iterative refinement. Our approach decomposes LLM outputs into atomic claims, autoformalizes them into first-order logic, and verifies their logical consistency using automated theorem proving. We introduce three key innovations: (1) multi-model consensus via formal semantic equivalence checking to ensure logic-level alignment between candidates, eliminating the syntactic bias of surface-form metrics, (2) semantic routing that directs different claim types to appropriate verification strategies: symbolic solvers for logical claims and LLM ensembles for commonsense reasoning, and (3) precise logical error localization via Minimal Correction Subsets (MCS), which pinpoint the exact subset of claims to revise, transforming binary failure signals into actionable feedback. Our framework classifies claims by their logical status and aggregates multiple verification signals into a unified score with variance-based penalty. The system iteratively refines answers using structured feedback until acceptance criteria are met or convergence is achieved. This hybrid approach delivers formal guarantees where possible and consensus verification elsewhere, advancing trustworthy AI. With the GPT-OSS-120B model, VERGE demonstrates an average performance uplift of 18.7% at convergence across a set of reasoning benchmarks compared to single-pass approaches.

💡 Research Summary

VERGE (Formal Refinement and Guidance Engine) is a neurosymbolic framework that tightly integrates large language models (LLMs) with satisfiability modulo theories (SMT) solvers to produce answers that are not only fluent but also logically verified. The system begins by extracting entities from the given context and query, then generates an initial answer with an LLM. This answer is decomposed into atomic claims, each of which is classified into one of six semantic types: Mathematical (τ_M), Logical (τ_L), Temporal (τ_T), Probabilistic (τ_P), Commonsense (τ_C), or Vague (τ_V). Claims that fall into the first three categories are routed to an SMT solver (Z3) for formal verification, while the remaining types are handled by a soft verification layer consisting of an ensemble of LLM judges.

For each formalizable claim, VERGE automatically translates the natural‑language statement into first‑order logic in SMT‑LIB2 format. To mitigate the stochastic nature of auto‑formalization, three candidate formulas are generated. Instead of relying on syntactic overlap, VERGE computes semantic equivalence by checking bidirectional entailment between each pair of candidates using the solver. A consensus is declared when a majority of the candidates belong to the same equivalence clique, ensuring that variable renaming or structural rearrangements do not break the agreement.

The SMT verification step assigns one of four statuses to each claim: Entailed (proved true), Possible (consistent but not entailed), Contradictory (inconsistent with the context), or Unknown (timeout or error). When a contradiction is detected, VERGE computes a Minimal Correction Set (MCS)—the smallest subset of sub‑clauses whose removal restores satisfiability. This MCS is translated into natural‑language feedback (e.g., “remove claim C2”) and fed back to the LLM for the next refinement iteration. This feedback loop replaces binary failure signals with actionable guidance, enabling rapid convergence.

Soft verification for non‑formalizable claims aggregates confidence‑weighted votes from multiple LLM judges into categories such as Supported, Plausible, Unsupported, and Uncertain. Scores from hard (SMT) and soft verification are combined into an overall verification score S(A). Entailed claims receive a weight of 1.0, soft‑supported claims 0.9, possible claims 0.7, and contradictory or unsupported claims 0.0. A variance‑based penalty is applied to discourage models from generating internally contradictory but individually confident claims.

The pipeline iterates: the LLM receives structured feedback, generates a refined answer, and the system re‑evaluates until the aggregated score exceeds a predefined threshold or improvement falls below a small epsilon. Experiments using the GPT‑OSS‑120B model on a suite of reasoning benchmarks (including mathematics, logical puzzles, legal reasoning, and medical scenarios) show an average performance uplift of 18.7% compared with single‑pass baselines. In purely logical or mathematical tasks, accuracy improves by over 30%, while in commonsense and probabilistic tasks the gain is around 12%. The MCS‑driven feedback typically leads to convergence within three to four iterations, a 1.8× speedup over approaches that rely solely on soft consensus.

Key contributions of VERGE are: (1) Semantic consensus via SMT‑based equivalence checking, eliminating reliance on surface‑form metrics; (2) Actionable error localization through MCS, turning unsatisfiable cores into precise revision instructions; (3) Hybrid verification routing that balances formal guarantees where the semantic gap is narrow with flexible LLM‑based consensus where it is wide. The authors acknowledge limitations such as potential failures in auto‑formalization for domain‑specific terminology and the current use of only three candidate formulas for consensus. Future work includes expanding candidate generation, supporting richer SMT theories, and integrating MCS feedback directly with code or database transaction systems.

In summary, VERGE demonstrates that coupling LLMs with formal solvers, guided by semantic routing and minimal correction feedback, can substantially raise the trustworthiness of AI‑generated reasoning, making LLMs viable for high‑stakes applications that demand provable correctness.

Comments & Academic Discussion

Loading comments...

Leave a Comment