Size Matters: Reconstructing Real-Scale 3D Models from Monocular Images for Food Portion Estimation

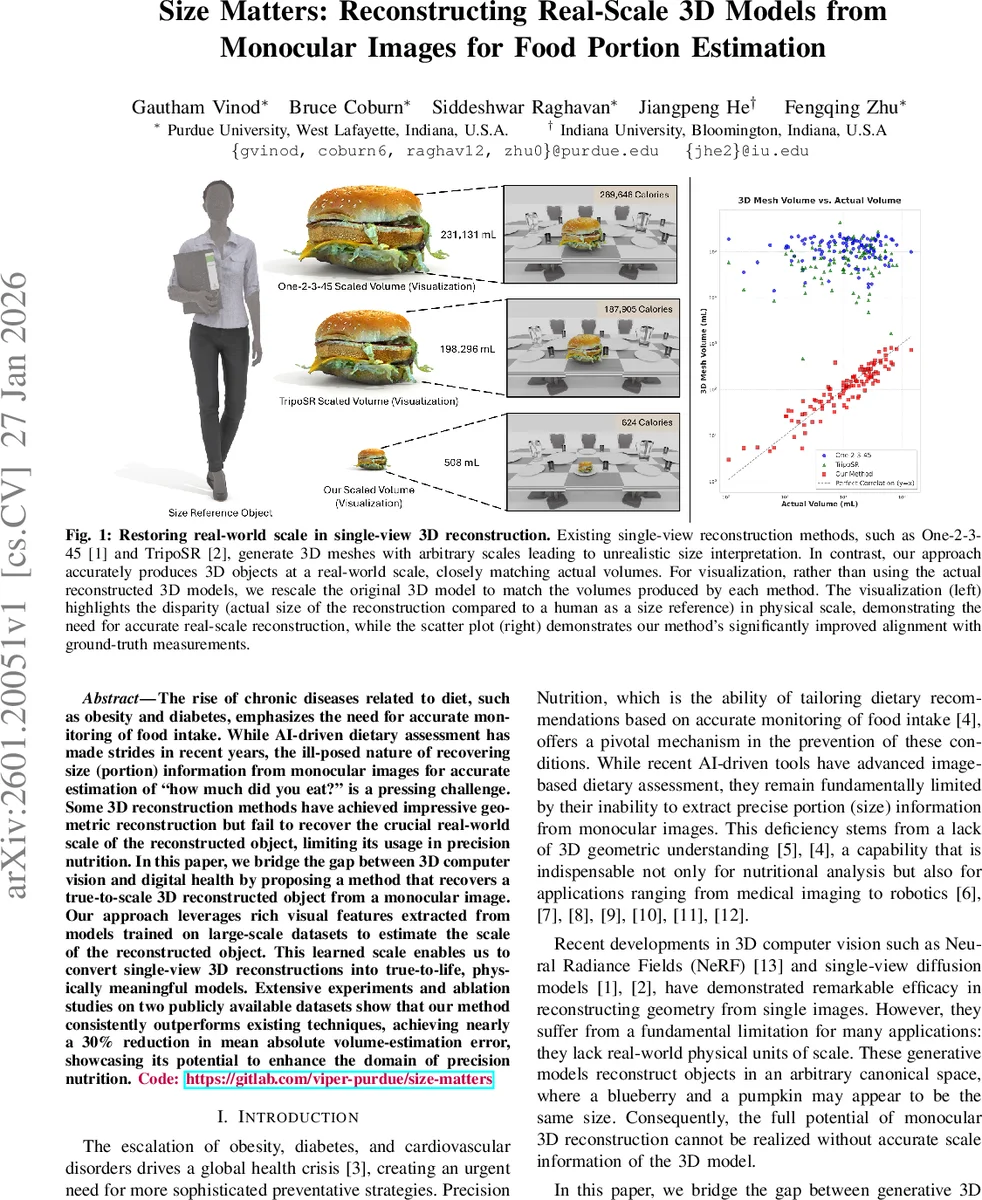

The rise of chronic diseases related to diet, such as obesity and diabetes, emphasizes the need for accurate monitoring of food intake. While AI-driven dietary assessment has made strides in recent years, the ill-posed nature of recovering size (portion) information from monocular images for accurate estimation of ``how much did you eat?’’ is a pressing challenge. Some 3D reconstruction methods have achieved impressive geometric reconstruction but fail to recover the crucial real-world scale of the reconstructed object, limiting its usage in precision nutrition. In this paper, we bridge the gap between 3D computer vision and digital health by proposing a method that recovers a true-to-scale 3D reconstructed object from a monocular image. Our approach leverages rich visual features extracted from models trained on large-scale datasets to estimate the scale of the reconstructed object. This learned scale enables us to convert single-view 3D reconstructions into true-to-life, physically meaningful models. Extensive experiments and ablation studies on two publicly available datasets show that our method consistently outperforms existing techniques, achieving nearly a 30% reduction in mean absolute volume-estimation error, showcasing its potential to enhance the domain of precision nutrition. Code: https://gitlab.com/viper-purdue/size-matters

💡 Research Summary

The paper tackles the long‑standing problem of estimating real‑world size from a single monocular image, a capability essential for accurate dietary monitoring and many other applications that require physical measurements. While recent advances in single‑view 3D reconstruction (e.g., NeRF, diffusion‑based models, and the One‑2‑3‑45 framework) can produce high‑quality geometry, they inherently output models in an arbitrary canonical space, making it impossible to directly infer volume or mass. The authors propose a two‑stage pipeline that first generates a geometry‑only mesh using a state‑of‑the‑art zero‑shot single‑view reconstruction method (One‑2‑3‑45) and then learns a scale factor that maps the mesh to real physical units.

The scale estimation stage, called the Real‑Scale Module, leverages the CLIP vision‑language model as a powerful feature extractor. The original input image and 75 rendered views of the reconstructed mesh (captured from a spherical camera arrangement at three polar angles and uniformly spaced azimuths) are each passed through a frozen CLIP ViT‑L/14 encoder, producing 1536‑dimensional embeddings. For each rendered view, the embedding is concatenated with the embedding of the original image and fed to a three‑layer MLP that regresses a single scalar: the volume scale factor ( \hat{v}{scale} ). The ground‑truth target is defined as the ratio of the true object volume ( V{gt} ) to the volume of the reconstructed mesh ( V_{recon} ). A normalized L1 loss is used to mitigate the disproportionate impact of very small objects on the loss. The final scale factor is applied to the mesh by multiplying its coordinates with the cube root of ( \hat{v}_{scale} ), thereby converting the model into milliliters (or any other real‑world unit).

The authors evaluate the approach on two publicly available datasets. MetaFood3D contains 637 food items with precise physical dimensions and nutritional information; 535 items are used for training and 102 for testing. OmniObject3D comprises 3,417 generic objects, split into 2,763 training and 654 testing samples. Metrics include Mean Absolute Error (MAE), Mean Absolute Percentage Error (MAPE), Pearson correlation coefficient (r), coefficient of determination (R²), and cosine similarity between predicted and ground‑truth volume vectors. MAPE is emphasized because many objects have very small volumes, which can inflate absolute errors.

Results demonstrate substantial improvements over a wide range of baselines: simple RGB‑only volume estimation, depth‑based reconstruction, 3D‑assisted methods, and even large vision‑language models such as GPT‑4o (with and without context). On MetaFood3D, the proposed method achieves an MAE of 59.09 mL (a 29.3 % reduction) and a MAPE of 35.83 % (a 43.3 % reduction) compared to the next‑best baseline. Correlation improves to r = 0.87 and R² to 0.75, indicating a strong linear relationship between predicted and true volumes. On OmniObject3D, the method reduces MAE to 70.49 mL (‑22.9 %) and MAPE to 85.96 % (‑2.14 %) while raising r to 0.94 and R² to 0.88. Visualizations show that previous methods often produce meshes that are dramatically over‑ or under‑scaled relative to a human reference, whereas the proposed pipeline yields volumes that align closely with the ground truth.

Key contributions include: (1) a novel integration of visual priors (via CLIP) with multi‑view geometric cues to infer absolute scale, (2) a lightweight scale‑regression network that can be trained on top of any existing single‑view reconstruction system without fine‑tuning the latter, and (3) extensive empirical validation demonstrating that real‑scale 3D reconstruction dramatically improves volume‑based dietary assessment.

The paper also discusses limitations. Rendering 75 views per object incurs significant computational and memory overhead, which may hinder real‑time or mobile deployment. Scale estimation accuracy degrades for very small or highly irregular objects where visual cues are ambiguous. Moreover, the evaluation is confined to controlled datasets; robustness to real‑world variations in lighting, occlusion, and background clutter remains to be proven. Future work is suggested in the direction of view‑sampling optimization, meta‑learning for domain adaptation, and model compression techniques to enable on‑device inference.

In summary, the work bridges a critical gap between high‑fidelity 3D shape recovery and the practical need for metric‑scale information, opening the door to more accurate, AI‑driven nutrition monitoring and other applications where physical dimensions matter.

Comments & Academic Discussion

Loading comments...

Leave a Comment