TAIGR: Towards Modeling Influencer Content on Social Media via Structured, Pragmatic Inference

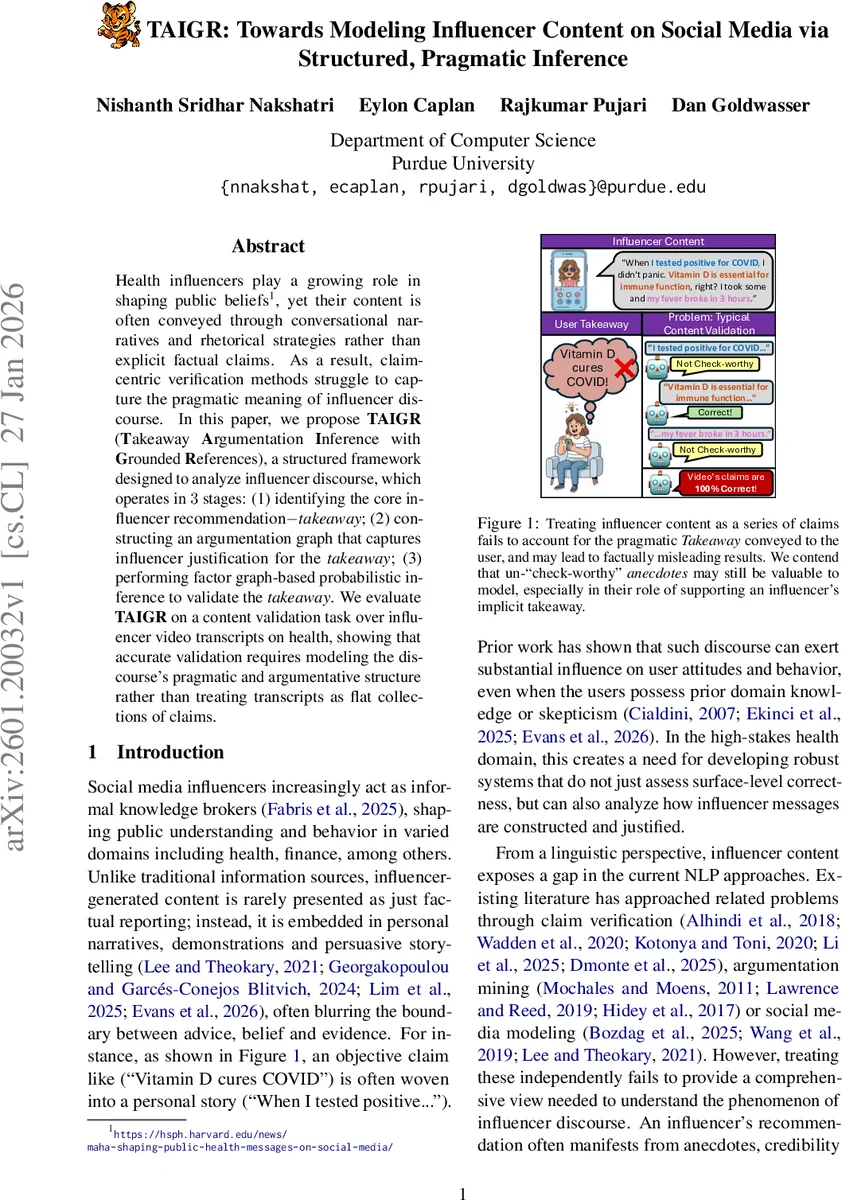

Health influencers play a growing role in shaping public beliefs, yet their content is often conveyed through conversational narratives and rhetorical strategies rather than explicit factual claims. As a result, claim-centric verification methods struggle to capture the pragmatic meaning of influencer discourse. In this paper, we propose TAIGR (Takeaway Argumentation Inference with Grounded References), a structured framework designed to analyze influencer discourse, which operates in three stages: (1) identifying the core influencer recommendation–takeaway; (2) constructing an argumentation graph that captures influencer justification for the takeaway; (3) performing factor graph-based probabilistic inference to validate the takeaway. We evaluate TAIGR on a content validation task over influencer video transcripts on health, showing that accurate validation requires modeling the discourse’s pragmatic and argumentative structure rather than treating transcripts as flat collections of claims.

💡 Research Summary

The paper addresses a critical gap in the verification of health‑related content produced by social‑media influencers. Unlike traditional news or scientific articles, influencer videos often embed recommendations within personal anecdotes, demonstrations, and persuasive storytelling rather than explicit factual statements. Consequently, claim‑centric fact‑checking pipelines, which treat a transcript as a flat list of propositions, fail to capture the pragmatic meaning that audiences actually internalize.

To overcome this limitation, the authors introduce TAIGR (Takeaway Argumentation Inference with Grounded References), a three‑stage structured framework inspired by the cognitive theory of epistemic vigilance. The first stage extracts the “takeaway”—the core actionable recommendation that a viewer is expected to adopt—from the full video transcript. Using large language model (LLM) prompting, the system identifies a single takeaway per video and classifies it as explicit (directly stated) or implicit (inferred from narrative). This step provides a semantic anchor for downstream analysis.

The second stage builds an argumentation graph that models how the influencer justifies the takeaway. The transcript is first segmented into self‑contained statements, each labeled with a rhetorical role (premise, anecdotal evidence, credibility move, emotional appeal, or none) via LLM classification. Check‑worthy claims are then extracted, and for each claim the supporting statements are identified, forming local claim‑to‑statement structures. Directed edges from claims to the takeaway (or to intermediate claims) are classified as direct support, weak support, or no support; continuous edge weights are derived from the LLM’s softmax probabilities (1.0 for direct, 0.5 for weak). The graph is iteratively expanded to capture deeper chains of reasoning, resulting in a rooted directed graph whose nodes are statements, claims, and the takeaway.

The third stage performs trust inference by grounding the argumentation graph in external scientific evidence. Only Claim and Premise nodes are considered check‑worthy; an LLM determines whether each node can be verified against biomedical literature. For each such node, the system generates up to five supporting and five opposing search queries, retrieves PubMed articles, reranks them, and classifies the evidence as supporting, refuting, or neutral. The evidence nodes are attached to the original graph, and a factor‑graph probabilistic model propagates belief scores across the augmented structure. The final trust score of the takeaway reflects the degree of alignment between the influencer’s argumentative support and the retrieved scientific consensus.

Evaluation is conducted on a newly curated dataset of 195 TikTok health videos, each paired with expert annotations from ScienceFeedback. TAIGR is compared against strong baselines from claim verification, argument mining, and plain text classification. It achieves up to a 9.7 macro‑F1 point improvement, with particular gains on implicit takeaways that traditional systems miss. A targeted human study confirms the quality of each intermediate component (takeaway extraction, argument graph construction, evidence retrieval).

A large‑scale deployment on 1,430 additional medical‑domain TikTok videos reveals two notable patterns: (i) video popularity (views, likes) is largely uncorrelated with trustworthiness, and (ii) rhetorical strategy strongly predicts trust—videos that employ logical premises and evidence‑based reasoning receive higher trust scores, whereas those relying on anecdotal narratives or emotional appeals receive lower scores.

The paper’s contributions are: (1) a novel, theory‑driven framework that jointly models pragmatic takeaway extraction, argumentative structure, and evidence‑grounded reasoning; (2) empirical evidence that structured modeling substantially outperforms claim‑centric baselines on a realistic content‑validation task; (3) large‑scale insights into how influencer rhetoric, rather than virality, shapes perceived trustworthiness in health communication.

Limitations include reliance on LLMs for multiple labeling steps, which can propagate errors; dependence on PubMed, restricting applicability to biomedical claims; and the assumption of a single takeaway per video, which may not hold for longer or multi‑topic content. Future work is suggested to handle multiple takeaways, integrate diverse evidence sources beyond biomedical literature, and explore human‑in‑the‑loop methods to improve labeling reliability.

Comments & Academic Discussion

Loading comments...

Leave a Comment