Identifying and Transferring Reasoning-Critical Neurons: Improving LLM Inference Reliability via Activation Steering

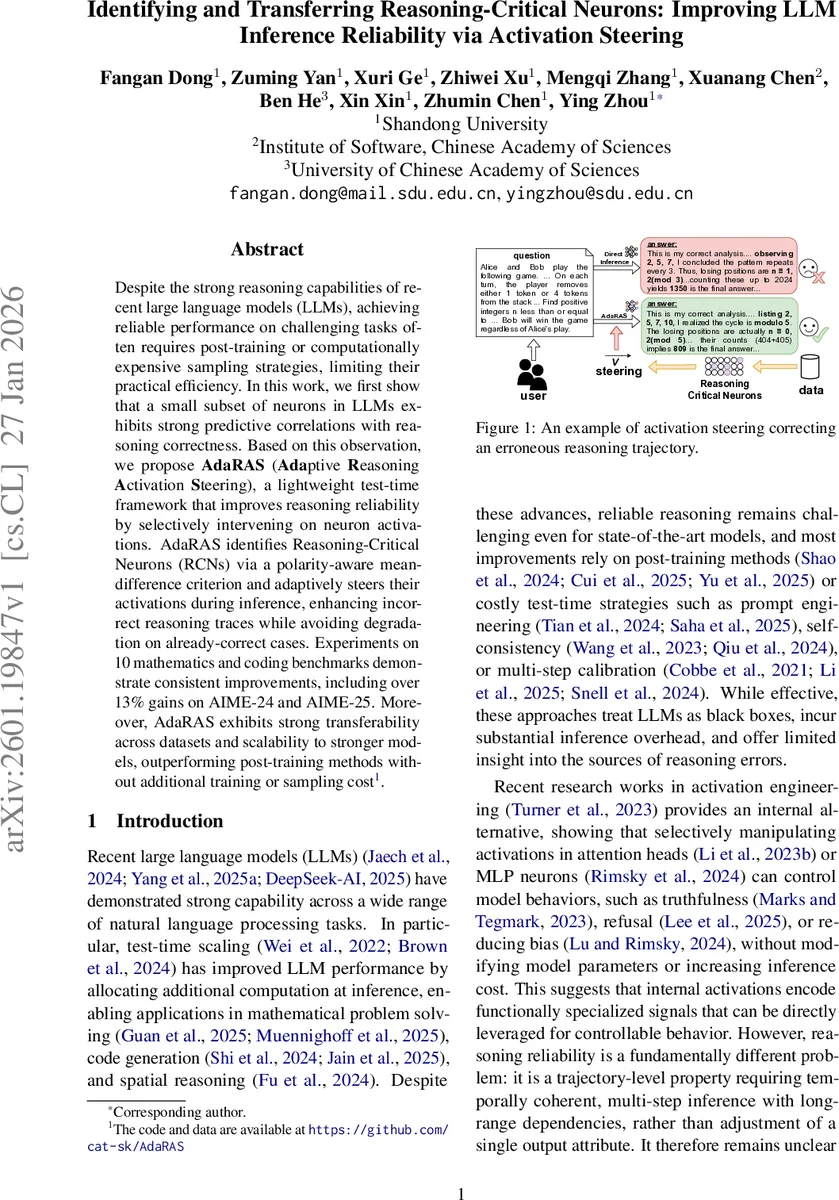

Despite the strong reasoning capabilities of recent large language models (LLMs), achieving reliable performance on challenging tasks often requires post-training or computationally expensive sampling strategies, limiting their practical efficiency. In this work, we first show that a small subset of neurons in LLMs exhibits strong predictive correlations with reasoning correctness. Based on this observation, we propose AdaRAS (Adaptive Reasoning Activation Steering), a lightweight test-time framework that improves reasoning reliability by selectively intervening on neuron activations. AdaRAS identifies Reasoning-Critical Neurons (RCNs) via a polarity-aware mean-difference criterion and adaptively steers their activations during inference, enhancing incorrect reasoning traces while avoiding degradation on already-correct cases. Experiments on 10 mathematics and coding benchmarks demonstrate consistent improvements, including over 13% gains on AIME-24 and AIME-25. Moreover, AdaRAS exhibits strong transferability across datasets and scalability to stronger models, outperforming post-training methods without additional training or sampling cost.

💡 Research Summary

The paper tackles the problem of unreliable reasoning in large language models (LLMs) when solving challenging mathematics and coding tasks. Existing solutions often rely on post‑training fine‑tuning, reinforcement learning, or expensive test‑time sampling strategies such as self‑consistency, which increase inference cost and treat the model as a black box. The authors first demonstrate that a small subset of MLP neurons inside LLMs exhibits strong predictive signals for whether a reasoning trace will end in a correct answer. By probing the last‑token activations of Qwen‑3‑1.7B/4B on AIME and AMC‑12 datasets, they train simple binary classifiers that achieve AUROC scores around 0.70–0.76, indicating that neuron activations correlate with final correctness.

Motivated by this observation, they introduce AdaRAS (Adaptive Reasoning Activation Steering), a lightweight, test‑time framework that identifies “Reasoning‑Critical Neurons” (RCNs) and selectively modifies their activations during generation. RCNs are discovered by constructing paired correct/incorrect reasoning traces for the same input, computing the mean activation difference S(l,i) for each neuron, and applying a polarity‑aware filter: only neurons whose average activation flips sign between correct and incorrect traces are retained. The top‑K neurons with the largest absolute differences form a sparse steering vector S′ₗ for each layer. During inference, a scalar α scales the addition of S′ₗ to the MLP output at every decoding step: hₗ ← hₗ₋₁ + W_down·ϕ(W_up·hₗ₋₁) + α·S′ₗ. This operation does not alter model parameters and incurs negligible computational overhead.

Because indiscriminate steering can degrade already‑correct answers, AdaRAS incorporates an adaptive gating mechanism. A lightweight failure‑prediction module is trained on early‑layer activations of the prompt, using F‑statistics for feature selection and a small attention‑based classifier. The predictor attains AUROC ≈ 0.83 on AIME, and during generation it decides whether to apply the steering. If a failure is predicted, the steering is activated; otherwise the model proceeds unchanged, preserving performance on easy cases.

The authors evaluate AdaRAS on ten benchmarks covering mathematics (AIME‑24, AIME‑25, AIME‑Extend, MATH‑500, GSM8K, AMC‑12) and coding (HumanEval, HumanEval+, MBPP, MBPP+). Baselines include vanilla chain‑of‑thought (CoT) prompting, three publicly available post‑trained reasoning models (DeepSeek‑R1‑Distill, OpenThinker, Nemotron), and a probing‑based activation steering method. AdaRAS consistently outperforms all baselines, achieving the highest accuracy on every dataset. Notably, on the difficult AIME‑25 benchmark it improves accuracy by +13.64%, surpassing the strongest post‑trained models of comparable size. Across all tasks it yields an average gain of about 5% over CoT, with larger gains (≈ 9%) on the hardest math sets. Moreover, RCNs identified on one dataset transfer to others, still delivering ≈ 4% improvements, demonstrating robustness and transferability.

Key contributions are: (1) empirical evidence that reasoning correctness can be predicted from a small set of internal neurons; (2) the AdaRAS framework, which provides a parameter‑free, test‑time activation steering method that improves reasoning reliability without extra training or sampling cost; (3) mechanistic insight that steering RCNs stabilizes latent reasoning trajectories while preserving semantic representations, enabling plug‑and‑play deployment. The work suggests that fine‑grained manipulation of internal neuron activations is a promising direction for enhancing LLM reasoning in a cost‑effective, interpretable manner.

Comments & Academic Discussion

Loading comments...

Leave a Comment