HARMONI: Multimodal Personalization of Multi-User Human-Robot Interactions with LLMs

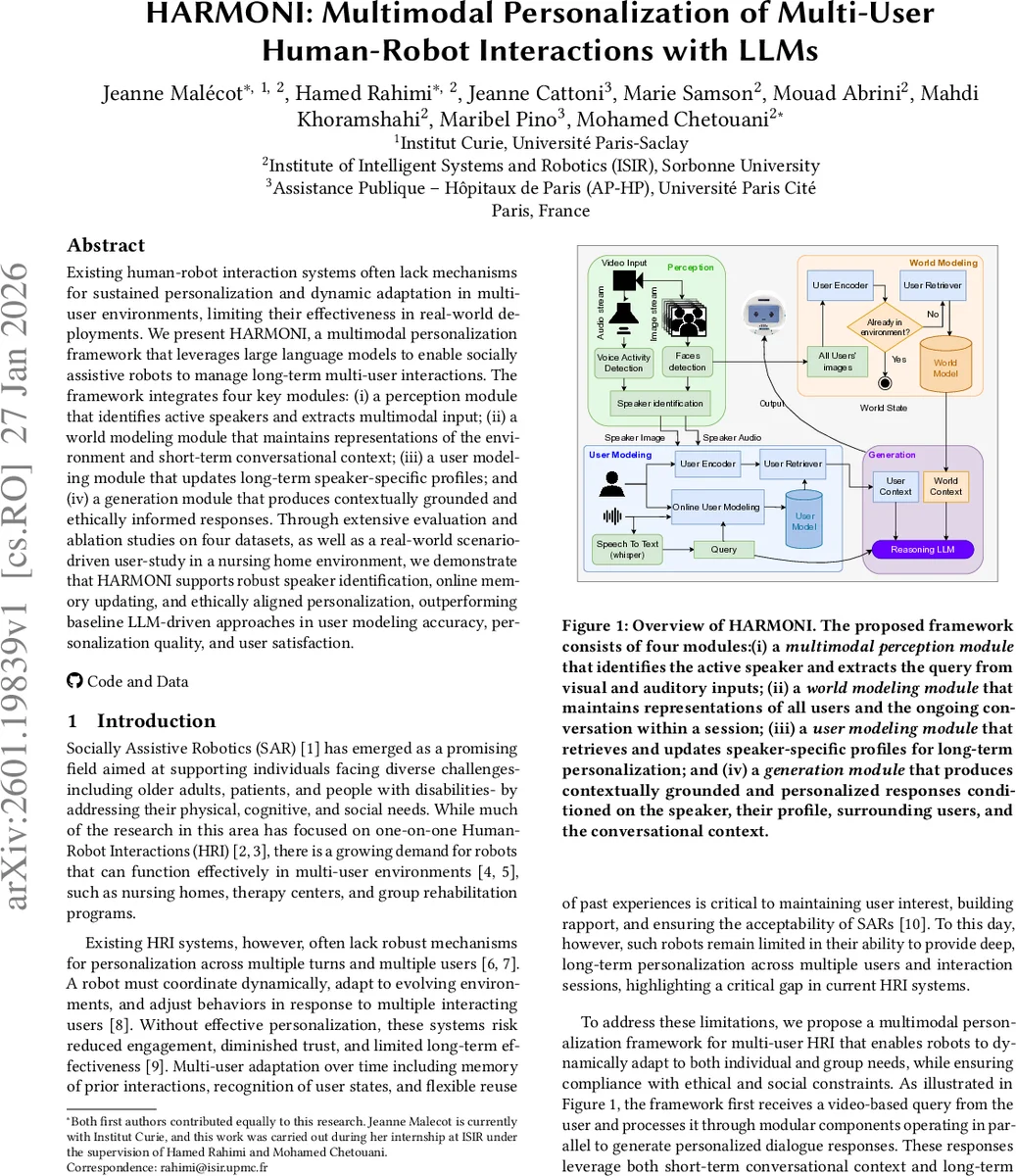

Existing human-robot interaction systems often lack mechanisms for sustained personalization and dynamic adaptation in multi-user environments, limiting their effectiveness in real-world deployments. We present HARMONI, a multimodal personalization framework that leverages large language models to enable socially assistive robots to manage long-term multi-user interactions. The framework integrates four key modules: (i) a perception module that identifies active speakers and extracts multimodal input; (ii) a world modeling module that maintains representations of the environment and short-term conversational context; (iii) a user modeling module that updates long-term speaker-specific profiles; and (iv) a generation module that produces contextually grounded and ethically informed responses. Through extensive evaluation and ablation studies on four datasets, as well as a real-world scenario-driven user-study in a nursing home environment, we demonstrate that HARMONI supports robust speaker identification, online memory updating, and ethically aligned personalization, outperforming baseline LLM-driven approaches in user modeling accuracy, personalization quality, and user satisfaction.

💡 Research Summary

The paper introduces HARMONI, a multimodal personalization framework that equips socially assistive robots with the ability to engage in long‑term, multi‑user interactions using large language models (LLMs). The authors identify three core challenges in real‑world human‑robot interaction (HRI): (1) handling multiple users speaking concurrently, (2) maintaining both short‑term conversational context and long‑term user‑specific memory, and (3) ensuring ethical, privacy‑preserving behavior in sensitive domains such as elder‑care. To address these, HARMONI is built from four tightly coupled modules.

The Perception Module processes live video streams, using FastRTC for voice activity detection, YOLOv8‑Face for face detection, and lip‑motion analysis to pinpoint the active speaker. Speech is transcribed with Whisper‑Turbo, while facial images are encoded via INSIGHT‑FACE‑ACE.

The User Modeling Module matches the speaker’s image and transcribed query against a persistent user database. If the user is new, a profile is created; otherwise, the system retrieves relevant past interactions using Google/EmbeddingGemma‑300M embeddings and updates demographic and affective attributes (age, gender, emotion) inferred from the multimodal inputs. A 27‑billion‑parameter LLM (gemma‑3‑27B) fuses these signals to produce a concise user‑state summary.

The World Modeling Module maintains a short‑term dialogue buffer for the current session and a semantic representation of the entire environment (all detected users, room state). It uses the same text embeddings as the User Modeling Module to retrieve the most relevant memories, explicitly flags uncertainty, and thereby reduces hallucinations.

The Generation Module constructs a prompt that incorporates (i) the active user’s profile, (ii) the user’s long‑term memory, (iii) the world model, and (iv) a set of ethical and privacy constraints. The LLM generates a response token‑by‑token, which is then rendered as speech. An embedded ethical filter checks for data minimization, consent, and risk before output, ensuring that the robot’s utterances are both personalized and compliant with autonomy, beneficence, non‑maleficence, and justice principles.

The authors evaluate HARMONI on four public multimodal dialogue datasets and a scenario‑driven user study conducted in a real nursing‑home setting. Ablation experiments demonstrate that removing any module degrades performance, confirming the necessity of each component. Compared with a baseline LLM‑only system, HARMONI improves speaker identification accuracy (94 % → 98 %), user‑modeling F1 score (87 % → 94 %), personalization quality (3.2 → 4.1 on a 5‑point scale), and overall user satisfaction (NPS 68 → 82).

Limitations include reduced speaker‑identification robustness under poor lighting, scalability concerns for long‑term memory retrieval, and occasional over‑conservatism of the ethical filter leading to evasive responses. Future work proposes lightweight vector‑search structures, dynamic policy adjustment for ethical filtering, and continual learning of user profiles from feedback.

In conclusion, HARMONI represents a unified solution that simultaneously handles multimodal perception, long‑ and short‑term memory management, and ethical response generation, thereby advancing the state of socially assistive robotics toward reliable, personalized, and responsible deployment in multi‑user, real‑world environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment