Tournament Informed Adversarial Quality Diversity

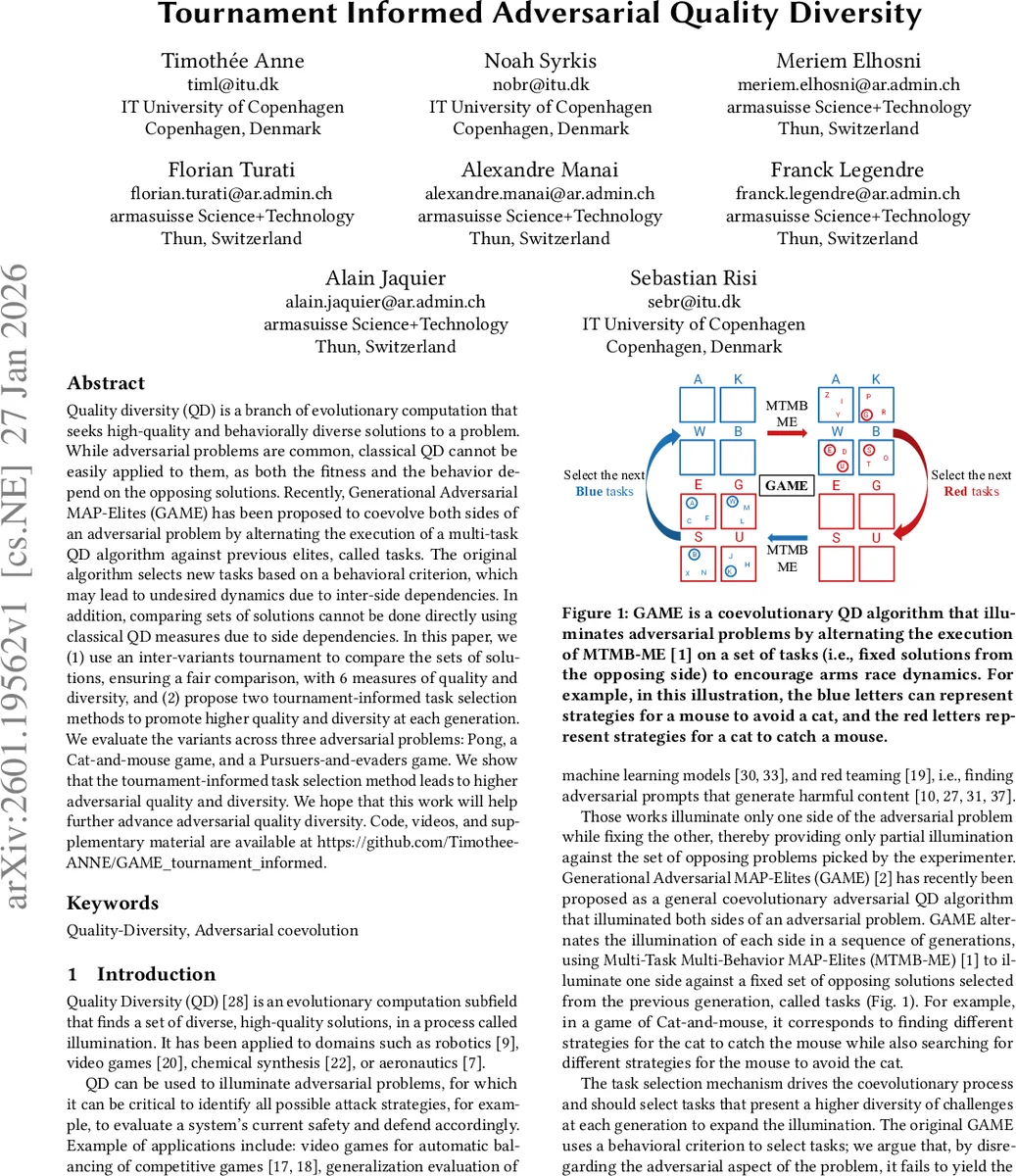

Quality diversity (QD) is a branch of evolutionary computation that seeks high-quality and behaviorally diverse solutions to a problem. While adversarial problems are common, classical QD cannot be easily applied to them, as both the fitness and the behavior depend on the opposing solutions. Recently, Generational Adversarial MAP-Elites (GAME) has been proposed to coevolve both sides of an adversarial problem by alternating the execution of a multi-task QD algorithm against previous elites, called tasks. The original algorithm selects new tasks based on a behavioral criterion, which may lead to undesired dynamics due to inter-side dependencies. In addition, comparing sets of solutions cannot be done directly using classical QD measures due to side dependencies. In this paper, we (1) use an inter-variant tournament to compare the sets of solutions, ensuring a fair comparison, with six measures of quality and diversity, and (2) propose two tournament-informed task selection methods to promote higher quality and diversity at each generation. We evaluate the variants across three adversarial problems: Pong, a Cat-and-mouse game, and a Pursuers-and-evaders game. We show that the tournament-informed task selection method leads to higher adversarial quality and diversity. We hope that this work will help further advance adversarial quality diversity. Code, videos, and supplementary material are available at https://github.com/Timothee-ANNE/GAME_tournament_informed.

💡 Research Summary

Title: Tournament‑Informed Adversarial Quality Diversity

Problem Context

Quality‑Diversity (QD) algorithms aim to illuminate a set of high‑performing, behaviorally diverse solutions. While QD has been successfully applied to robotics, design, and procedural content generation, its direct use on adversarial problems is problematic because both fitness and behavior descriptors depend on the opponent’s policy. Classical QD therefore only “lights up” one side of an adversarial game, leaving the other fixed and providing a partial view of the solution space.

Existing Approach – GAME

Generational Adversarial MAP‑Elites (GAME) was introduced to co‑evolve both sides of a two‑player adversarial problem. GAME alternates between the two populations (Red and Blue) and, at each generation, treats the elite solutions from the previous generation as fixed “tasks” for the current side. The underlying engine is Multi‑Task Multi‑Behavior MAP‑Elites (MTMB‑ME), which simultaneously optimizes a set of tasks. In the original formulation, tasks are selected solely on a behavioral diversity criterion (e.g., clustering of behavior descriptors). This is computationally cheap but ignores the adversarial nature of the problem: a task that is behaviorally diverse may be trivially easy for the opponent, limiting the arms‑race dynamics.
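For contrast, the original behavior-based task selection can be sketched as follows. This is a minimal illustration, not GAME's code: it assumes k-means (Lloyd's algorithm) on the elites' behavior descriptors with one cluster per task, after which the elite nearest each centroid becomes a task. The function names, the fixed iteration count, and the seeded initialization are hypothetical choices.

```python
import random
from typing import List, Sequence

# Sketch with hypothetical names; not the GAME reference implementation.


def _dist2(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two behavior descriptors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points: List[Sequence[float]], k: int, iters: int = 50, seed: int = 0) -> List[List[float]]:
    """Plain Lloyd's k-means; returns k centroids (requires k <= len(points))."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        clusters: List[List[Sequence[float]]] = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: _dist2(p, centroids[c]))
            clusters[nearest].append(p)
        # Recompute each centroid; an empty cluster keeps its previous centroid.
        centroids = [
            [sum(col) / len(members) for col in zip(*members)] if members else centroids[c]
            for c, members in enumerate(clusters)
        ]
    return centroids


def select_tasks_by_behavior(descriptors: List[Sequence[float]], n_tasks: int, seed: int = 0) -> List[int]:
    """Indices of the elites closest to each centroid, without duplicates."""
    chosen: List[int] = []
    for centroid in kmeans(descriptors, n_tasks, seed=seed):
        free = [i for i in range(len(descriptors)) if i not in chosen]
        chosen.append(min(free, key=lambda i: _dist2(descriptors[i], centroid)))
    return chosen
```

For example, with descriptors forming two well-separated groups and `n_tasks=2`, one elite is returned from each group. Nothing in this rule looks at how the candidates perform against the opponent, which is exactly the limitation described above.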

Key Contributions

- Inter‑variant Tournament for Fair Comparison – The authors propose a round‑robin tournament between every elite of the current generation and every task from the previous generation. The tournament yields a fitness matrix whose entry (i, j) records the performance of elite i against task j. Because all solutions are scored against the same opponents, this matrix provides a side‑aware measure of relative strength, enabling a fair comparison of solution sets that classic QD metrics cannot compare directly.

- Tournament‑Informed Task Selection – Two new mechanisms are built on top of the tournament matrix:

- Ranking‑Based Selection – For each elite, the tournament results are transformed into a ranking vector that orders the tasks from hardest to easiest for that elite. K‑means clustering is applied to these vectors, and the elites closest to the cluster centroids become the next generation's tasks, since a centroid is an average ranking rather than a playable solution. This encourages a task set with diverse ranking patterns, i.e., a variety of challenges for the opponent.

- Pareto‑Based Selection – The tournament matrix is interpreted as a multi‑objective problem where each task is evaluated by (i) the win‑rate against the current elites and (ii) the loss‑rate (or complementary metric). The Pareto front of this bi‑objective space is extracted, and those non‑dominated tasks are used as the next generation’s tasks. This yields a set of tasks that are simultaneously strong and diverse.

- Six Adversarial Quality‑Diversity Metrics – Classical QD metrics (max fitness, QD‑score, behavior coverage) become ambiguous when fitness and behavior are opponent‑dependent. The authors define six measures tailored for adversarial settings: (a) mean fitness of both sides, (b) fitness gap (difference between the two sides), (c) joint behavior coverage, (d) mutual diversity (e.g., derived from the Jaccard similarity of behavior sets), (e) tournament‑based diversity score, and (f) Pareto front area. These metrics capture both the quality of solutions and the richness of the challenges they present.
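The tournament matrix and the two tournament-informed selection rules can be sketched in plain Python. This is a minimal sketch under stated assumptions, not the authors' implementation, and every function name is hypothetical: `play(elite, task)` is an assumed callback returning the elite's fitness against a task, ties within a ranking are broken by task index, the ranking-based rule keeps the elite nearest each k-means centroid (mirroring the behavioral clustering of the original GAME, but on ranking vectors), and `pareto_front` expects the caller to supply one pair of maximized objectives per candidate, since the exact objectives are not pinned down here.

```python
import random
from typing import Callable, List, Sequence, Tuple

# Sketch with hypothetical names; not the paper's reference implementation.
Matrix = List[List[float]]


def tournament_matrix(elites: Sequence, tasks: Sequence,
                      play: Callable[[object, object], float]) -> Matrix:
    """Round-robin: entry [i][j] is the fitness of elite i against task j."""
    return [[play(e, t) for t in tasks] for e in elites]


def ranking_vectors(matrix: Matrix) -> Matrix:
    """Per elite, the rank of each task: 0 = hardest (lowest fitness)."""
    vectors = []
    for row in matrix:
        order = sorted(range(len(row)), key=lambda j: row[j])  # hardest first
        ranks = [0.0] * len(row)
        for rank, j in enumerate(order):
            ranks[j] = float(rank)
        vectors.append(ranks)
    return vectors


def _dist2(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points: Matrix, k: int, iters: int = 50, seed: int = 0) -> Matrix:
    """Plain Lloyd's k-means; an empty cluster keeps its previous centroid."""
    rng = random.Random(seed)
    cents = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        groups: List[Matrix] = [[] for _ in range(k)]
        for p in points:
            groups[min(range(k), key=lambda c: _dist2(p, cents[c]))].append(p)
        cents = [[sum(col) / len(g) for col in zip(*g)] if g else cents[c]
                 for c, g in enumerate(groups)]
    return cents


def select_by_ranking(matrix: Matrix, n_tasks: int, seed: int = 0) -> List[int]:
    """Cluster the ranking vectors; keep the elite nearest each centroid (no duplicates)."""
    vecs = ranking_vectors(matrix)
    chosen: List[int] = []
    for cent in kmeans(vecs, n_tasks, seed=seed):
        free = [i for i in range(len(vecs)) if i not in chosen]
        chosen.append(min(free, key=lambda i: _dist2(vecs[i], cent)))
    return chosen


def pareto_front(objectives: Sequence[Tuple[float, float]]) -> List[int]:
    """Indices of non-dominated candidates when both objectives are maximized."""
    def dominates(a: Tuple[float, float], b: Tuple[float, float]) -> bool:
        return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))
    return [i for i, p in enumerate(objectives)
            if not any(dominates(q, p) for j, q in enumerate(objectives) if j != i)]
```

For instance, `tournament_matrix([3.0, 1.0], [0.0, 2.0], play=lambda e, t: e - t)` returns `[[3.0, 1.0], [1.0, -1.0]]`, where row i lists elite i's margins against the two tasks. One possible (hypothetical) objective pair for `pareto_front` is each elite's mean and worst-case fitness across the tasks, i.e. `[(sum(r) / len(r), min(r)) for r in M]`.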

Methodology Overview

Algorithm 1 (GAME) proceeds as follows:

- Randomly sample N_task Blue solutions as initial tasks.

- For each generation, alternate which side (Red or Blue) is being illuminated.

- Run MTMB‑ME with the current task set, updating a growing archive of elites per task.

- After the evaluation budget is exhausted, invoke the tournament between the newly obtained elites and the previous tasks.

- Apply either Ranking‑based or Pareto‑based selection to produce the next task set.
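The outer loop above can be sketched as a short skeleton. It is illustrative only: `illuminate` stands in for a full MTMB‑ME run and `select_tasks` for the tournament plus ranking- or Pareto-based selection; both are hypothetical callbacks, and starting with Red is an inference from the initial tasks being Blue solutions.

```python
from typing import Any, Callable, List, Sequence, Tuple

# Skeleton with hypothetical callback names; not the GAME reference code.


def run_game(initial_blue_tasks: Sequence[Any], n_generations: int,
             illuminate: Callable[[str, List[Any]], List[Any]],
             select_tasks: Callable[[List[Any], List[Any]], List[Any]],
             ) -> List[Tuple[str, List[Any]]]:
    """Skeleton of the GAME outer loop; returns (side, new tasks) per generation."""
    tasks = list(initial_blue_tasks)  # generation 0: randomly sampled Blue solutions
    side = "red"                      # Red is illuminated first, against the Blue tasks
    history: List[Tuple[str, List[Any]]] = []
    for _ in range(n_generations):
        # Hypothetical stand-in for MTMB-ME, run until its evaluation budget is spent.
        elites = illuminate(side, tasks)
        # Tournament between the new elites and the previous tasks, followed by
        # ranking- or Pareto-based selection; the chosen elites become the next tasks.
        tasks = select_tasks(elites, tasks)
        history.append((side, tasks))
        side = "blue" if side == "red" else "red"  # alternate the illuminated side
    return history
```

With toy callbacks such as `illuminate=lambda side, tasks: [f"{side}-{i}" for i in range(len(tasks) + 1)]` and `select_tasks=lambda elites, tasks: elites[:2]`, three generations illuminate Red, Blue, and Red in turn, each side facing the tasks selected from the other side's latest elites.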

The tournament itself consists of three sub‑steps: (i) compute the fitness matrix, (ii) normalize scores to
