A Collaborative Extended Reality Prototype for 3D Surgical Planning and Visualization

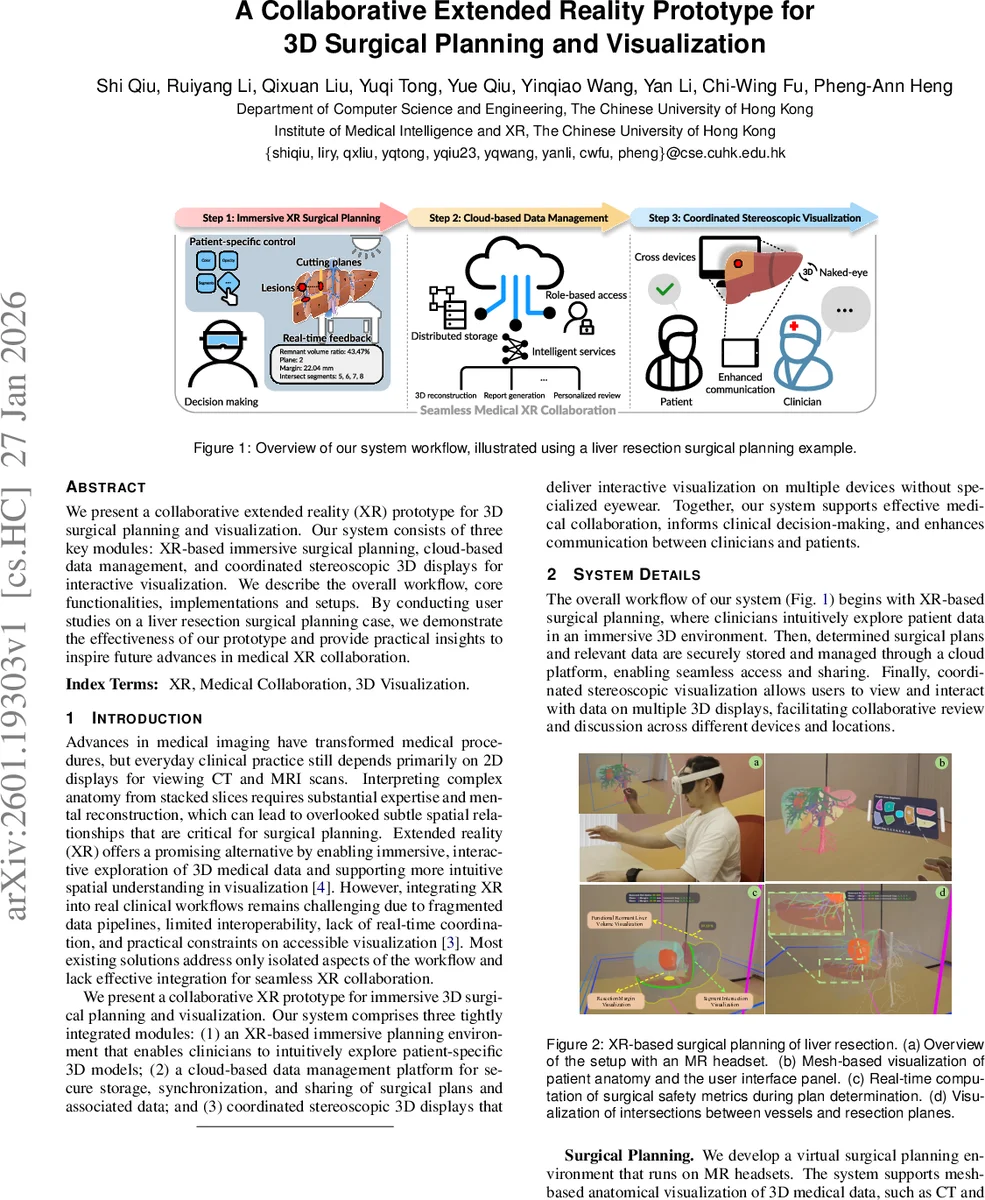

We present a collaborative extended reality (XR) prototype for 3D surgical planning and visualization. Our system consists of three key modules: XR-based immersive surgical planning, cloud-based data management, and coordinated stereoscopic 3D displays for interactive visualization. We describe the overall workflow, core functionalities, implementations and setups. By conducting user studies on a liver resection surgical planning case, we demonstrate the effectiveness of our prototype and provide practical insights to inspire future advances in medical XR collaboration.

💡 Research Summary

The paper introduces a collaborative extended reality (XR) prototype designed to support three‑dimensional (3D) surgical planning and visualization. The authors identify a gap in current clinical practice: while advanced imaging modalities such as CT and MRI are widely available, most clinicians still rely on two‑dimensional slice viewers, which demand substantial mental reconstruction and can obscure subtle spatial relationships critical for surgery. To bridge this gap, the system integrates three tightly coupled modules: (1) an immersive mixed‑reality (MR) planning environment, (2) a cloud‑based data‑management platform, and (3) a coordinated stereoscopic “naked‑eye” 3D display suite.

In the MR planning module, patient‑specific volumetric data are converted into mesh representations and rendered in real time on an MR headset. Surgeons manipulate resection planes with free‑hand gestures and immediately see where each plane intersects vascular structures and functional liver segments. The system also computes safety metrics on the fly, such as the future liver remnant (the volume of liver that remains after resection), providing immediate feedback that is not available in conventional desktop tools such as 3D Slicer.
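
As a rough illustration of the remnant-volume metric, the sketch below counts the liver voxels on the kept side of a resection plane. It is an assumption-laden example, not the prototype's implementation: the summary does not say whether the system works on meshes or voxels, and the function name `remnant_volume_ml`, its parameters, and the toy 10 × 10 × 10 "liver" are all hypothetical.

```python
from typing import Iterable, Tuple

Vec3 = Tuple[float, float, float]


def remnant_volume_ml(
    liver_voxels: Iterable[Tuple[int, int, int]],
    spacing_mm: Vec3,
    plane_point_mm: Vec3,
    plane_normal: Vec3,
) -> Tuple[float, float]:
    """Return (remnant volume in mL, remnant fraction of the whole liver).

    Hypothetical helper: a voxel counts as remnant when its centre lies on
    the side of the plane that the normal points toward.
    """
    sx, sy, sz = spacing_mm
    voxel_ml = sx * sy * sz / 1000.0  # 1 mL = 1000 mm^3
    total = 0
    kept = 0
    for i, j, k in liver_voxels:
        total += 1
        # Voxel centre in millimetres.
        cx, cy, cz = (i + 0.5) * sx, (j + 0.5) * sy, (k + 0.5) * sz
        # Signed distance (up to the normal's length) from the plane.
        signed = (
            (cx - plane_point_mm[0]) * plane_normal[0]
            + (cy - plane_point_mm[1]) * plane_normal[1]
            + (cz - plane_point_mm[2]) * plane_normal[2]
        )
        if signed >= 0.0:
            kept += 1
    if total == 0:
        raise ValueError("liver mask is empty")
    return kept * voxel_ml, kept / total


# Toy example: a 10 x 10 x 10 voxel block with 1 mm spacing,
# cut by the plane x = 3 mm, keeping the +x side.
toy_liver = [(i, j, k) for i in range(10) for j in range(10) for k in range(10)]
volume_ml, fraction = remnant_volume_ml(
    toy_liver, (1.0, 1.0, 1.0), (3.0, 0.0, 0.0), (1.0, 0.0, 0.0)
)
print(f"{volume_ml:.2f} mL, {fraction:.0%}")  # 0.70 mL, 70%
```

Recomputing this value each time the plane moves is what would make the feedback interactive; a real system would likely vectorize the loop or run it on the GPU.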

The cloud backend is built on the ThinkPHP framework and employs role‑based access control (RBAC) to secure sensitive medical data. All planning artifacts, meshes, and associated metadata are stored centrally, enabling authorized users—surgeons, researchers, or patients—to retrieve, edit, and share the latest plan from any device. This architecture eliminates version‑conflict issues and supports remote collaboration, a prerequisite for tele‑medicine and multi‑institutional case discussions.
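
The idea behind role‑based access control can be sketched in a few lines. The actual backend is built on ThinkPHP (PHP), so the Python below is only a language‑neutral illustration; the permission names and the role‑to‑permission mapping are assumptions made for the example, since the summary lists the roles but not what each may do.

```python
from enum import Enum, auto


class Permission(Enum):
    VIEW_PLAN = auto()
    EDIT_PLAN = auto()
    SHARE_PLAN = auto()


# Hypothetical mapping: the source names the roles, not their permissions.
ROLE_PERMISSIONS = {
    "surgeon": frozenset(
        {Permission.VIEW_PLAN, Permission.EDIT_PLAN, Permission.SHARE_PLAN}
    ),
    "researcher": frozenset({Permission.VIEW_PLAN, Permission.SHARE_PLAN}),
    "patient": frozenset({Permission.VIEW_PLAN}),
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Return True when the role's permission set contains the permission.

    Unknown roles receive an empty set, so access is denied by default.
    """
    return permission in ROLE_PERMISSIONS.get(role, frozenset())


print(is_allowed("surgeon", Permission.EDIT_PLAN))   # True
print(is_allowed("patient", Permission.EDIT_PLAN))   # False
print(is_allowed("visitor", Permission.VIEW_PLAN))   # False
```

Checking permissions against roles rather than individual users keeps the policy in one table, which is the property that makes RBAC attractive for shared medical data.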

The third module addresses the need for shared, high‑fidelity visualization without requiring each participant to wear specialized eyewear. A light‑field 3D standing screen and a stereoscopic panel equipped with viewpoint tracking are synchronized through a Unity application. The panel delivers high‑resolution, perspective‑correct images to individual observers, while the standing screen provides binocular disparity and motion parallax for a group of viewers. Interaction on either device propagates instantly to the other, allowing a surgeon to adjust a resection plane on the MR headset while a patient or colleague watches the updated anatomy on the naked‑eye display.
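
One simple way to picture this propagation is a shared scene object that broadcasts every change to all registered displays. The sketch below is a hypothetical publish/subscribe illustration in Python, not the Unity application described in the paper; `SharedScene`, `Display`, and `PlaneState` are invented names, and networking, latency, and conflict handling are left out.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class PlaneState:
    """Resection plane described by a point on the plane and its normal."""
    point: Vec3
    normal: Vec3


class SharedScene:
    """Broadcasts each published plane state to every subscriber."""

    def __init__(self) -> None:
        self._listeners: List[Callable[[PlaneState], None]] = []

    def subscribe(self, listener: Callable[[PlaneState], None]) -> None:
        self._listeners.append(listener)

    def publish(self, state: PlaneState) -> None:
        for listener in self._listeners:
            listener(state)


class Display:
    """Stand-in for a headset, stereoscopic panel, or light-field screen."""

    def __init__(self, name: str, scene: SharedScene) -> None:
        self.name = name
        self.plane: Optional[PlaneState] = None
        self._scene = scene
        scene.subscribe(self._on_update)

    def _on_update(self, state: PlaneState) -> None:
        # A real display would re-render here; the sketch only stores it.
        self.plane = state

    def move_plane(self, state: PlaneState) -> None:
        # Local interaction is published so every display sees it.
        self._scene.publish(state)


scene = SharedScene()
headset = Display("mr_headset", scene)
panel = Display("stereo_panel", scene)
screen = Display("light_field_screen", scene)

headset.move_plane(PlaneState((40.0, 0.0, 0.0), (1.0, 0.0, 0.0)))
print(panel.plane == screen.plane == headset.plane)  # True
```

Because every device both publishes and subscribes, an interaction on any one of them reaches the others through the same path.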

User experience was evaluated in two studies. The first involved eight liver surgeons (average age 27.9 years) who compared the XR system with a desktop baseline (3D Slicer). The System Usability Scale (SUS) score rose from 38.44 ± 16.90 with the desktop baseline to 76.25 ± 13.43 with the XR system, a relative increase of about 98%, and overall satisfaction averaged 4.75 / 5. The second study recruited ten engineering graduate students to assess three visualization setups: desktop, 3D panel only, and the coordinated platform (panel + standing screen). SUS scores increased progressively across the three setups (63.60 → 72.20 → 81.00), and a repeated‑measures ANOVA confirmed a significant effect (F(2,18)=4.119, p=0.034). Post‑hoc tests showed that the coordinated platform outperformed the desktop configuration (p=0.036). These results suggest that immersive XR planning and shared stereoscopic displays substantially enhance perceived usability and collaborative effectiveness.
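
For readers unfamiliar with SUS, the sketch below applies the standard scoring rule for the ten‑item questionnaire and reproduces the relative increase quoted for the first study. The summary does not describe the authors' own analysis pipeline, so the function names are hypothetical and the sample responses are made up; only the two reported means (38.44 and 76.25) come from the text.

```python
from typing import Sequence


def sus_score(responses: Sequence[int]) -> float:
    """Score one SUS questionnaire (ten items, each rated 1 to 5).

    Standard rule: odd-numbered items contribute (rating - 1),
    even-numbered items contribute (5 - rating); the sum is scaled by 2.5
    to give a score between 0 and 100.
    """
    if len(responses) != 10:
        raise ValueError("SUS has exactly ten items")
    total = 0
    for item_number, rating in enumerate(responses, start=1):
        if not 1 <= rating <= 5:
            raise ValueError("ratings must be between 1 and 5")
        total += (rating - 1) if item_number % 2 == 1 else (5 - rating)
    return total * 2.5


def relative_change_percent(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


# Made-up questionnaire: neutral answers on every item.
print(sus_score([3] * 10))  # 50.0

# Reported means from the first study (desktop baseline vs. XR system).
print(f"{relative_change_percent(38.44, 76.25):.1f}%")  # 98.4%
```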

The authors acknowledge several limitations. The hardware stack—high‑end MR headsets and proprietary light‑field displays—poses cost and scalability challenges. The cloud component, while functional, lacks integration with standard health‑information protocols such as HL7/FHIR, limiting interoperability with existing hospital information systems. The participant pools are small and skewed toward young, tech‑savvy users, and the studies do not address long‑term clinical workflow integration, fatigue, or error rates.

Future work will focus on lowering hardware barriers by exploring consumer‑grade AR devices, extending the cloud service to support HL7/FHIR, and incorporating AI‑driven planning assistance (e.g., automatic resection‑plane suggestion, risk prediction). The authors envision a fully integrated AI‑XR ecosystem that streamlines surgical decision‑making, enhances multidisciplinary education, and ultimately improves patient outcomes through more intuitive, collaborative visual communication.
