GLOVE: Global Verifier for LLM Memory-Environment Realignment

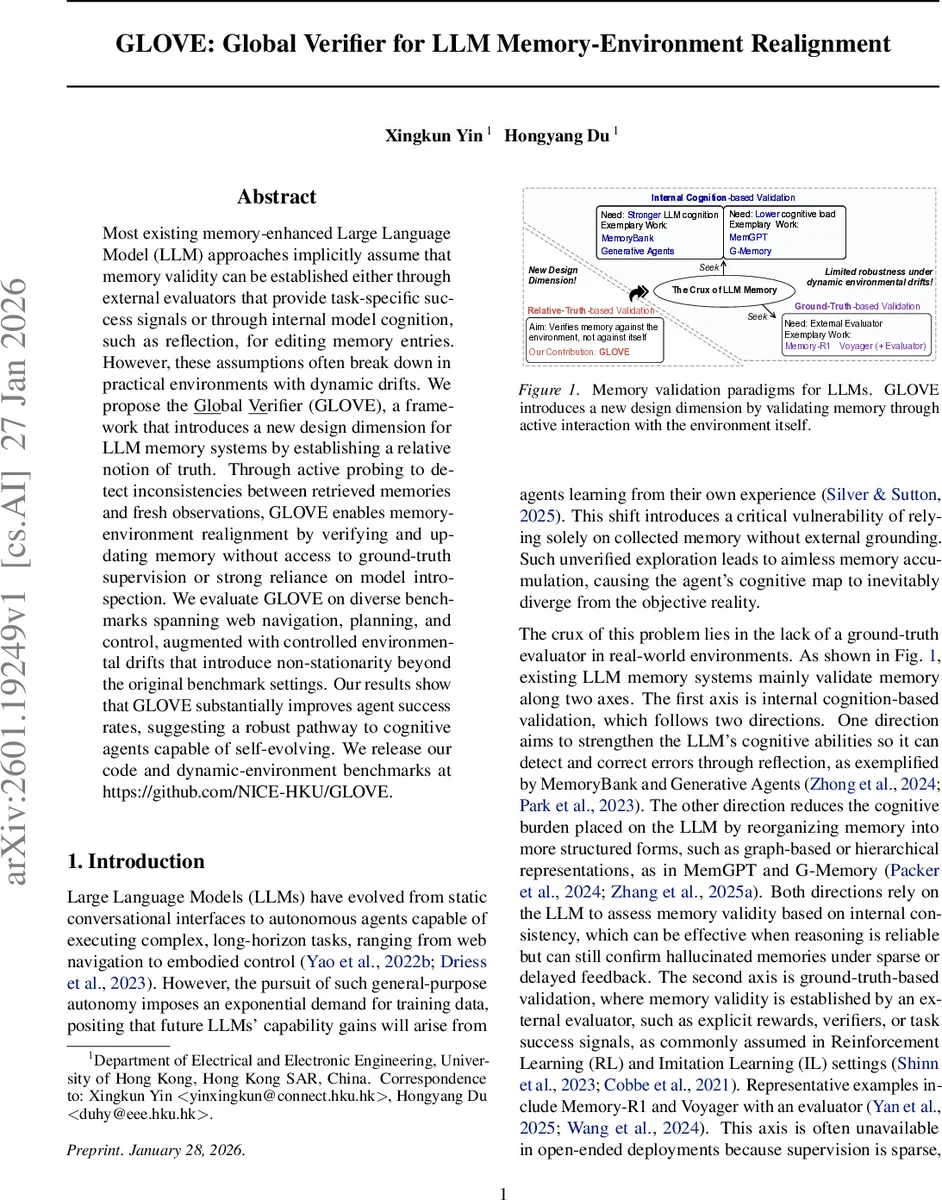

Most existing memory-enhanced Large Language Model (LLM) approaches implicitly assume that memory validity can be established either through external evaluators that provide task-specific success signals or through internal model cognition, such as reflection, for editing memory entries. However, these assumptions often break down in practical environments with dynamic drifts. We propose the Global Verifier (GLOVE), a framework that introduces a new design dimension for LLM memory systems by establishing a relative notion of truth. Through active probing to detect inconsistencies between retrieved memories and fresh observations, GLOVE enables memory-environment realignment by verifying and updating memory without access to ground-truth supervision or strong reliance on model introspection. We evaluate GLOVE on diverse benchmarks spanning web navigation, planning, and control, augmented with controlled environmental drifts that introduce non-stationarity beyond the original benchmark settings. Our results show that GLOVE substantially improves agent success rates, suggesting a robust pathway to cognitive agents capable of self-evolving.

💡 Research Summary

The paper tackles a fundamental weakness of current memory‑augmented large language model (LLM) agents: the assumption that stored experiences remain valid indefinitely. In real‑world deployments, the environment’s response distribution for a given state‑action pair can change without any explicit signal—a phenomenon the authors term “environmental drift.” Existing validation strategies fall into two camps. The first relies on internal cognition: the LLM reflects on its own reasoning or uses structured memory (graphs, hierarchies) to detect inconsistencies. This approach only checks coherence with past beliefs and fails when past memories are internally consistent yet externally obsolete. The second depends on external evaluators such as reward signals or success/failure feedback. These signals are typically sparse, delayed, and only assess terminal outcomes, making it impossible to pinpoint which intermediate memory entries have become stale.

To bridge this “epistemic gap,” the authors introduce the Global Verifier (GLO VE), a framework that treats memory as a hypothesis about the environment and continuously validates that hypothesis through active probing. The core idea is “relative truth”: when a retrieved experience conflicts with a fresh observation, the agent re‑executes the same state‑action pair a limited number of times (budget α) to gather fresh outcomes. By comparing the distribution of these new outcomes with the historical distribution stored for the same pre‑condition, GLO VE detects cognitive dissonance and constructs an updated transition model ˆQₜ(·|s,a). The outdated records are pruned from the experience bank and replaced with the verified transition summary, ensuring that future retrievals reflect the current dynamics.

Algorithmically, GLO VE operates in three phases within the standard LLM‑agent loop: (1) Cognitive Dissonance Detection – retrieve counterpart experiences N(eₜ) matching the current (sₜ,aₜ) and compute a statistical surprise metric Φ_surp; (2) Relative Truth Formation – if Φ_surp exceeds a threshold, actively probe the environment α times to collect a set V of new next‑states; (3) Memory‑Environment Realignment – replace N(eₜ) with the newly estimated distribution. The framework is model‑agnostic and can be plugged into any retrieval‑augmented generation system, regardless of whether the underlying memory is flat, graph‑based, or hierarchical.

Theoretical analysis shows that, under mild assumptions, the empirical distribution from the α probes converges to the true current transition distribution as α grows, and that the expected additional computational cost scales linearly with the number of probed state‑action pairs, which is bounded by the probing budget.

Empirically, the authors evaluate GLO VE on three distinct domains: (i) web navigation (e.g., automated shopping cart manipulation), (ii) discrete planning (puzzle solving, routing), and (iii) continuous control (robotic arm and simulated drone tasks). For each benchmark they introduce controlled drifts—such as UI layout changes, obstacle relocations, or physics parameter shifts—affecting 3–5 % of interactions. Baselines include state‑of‑the‑art memory‑augmented agents (MemoryBank, MemGPT, G‑Memory) and evaluator‑driven agents (Memory‑R1, Voyager). When GLO VE is added as a plug‑in, success rates improve by an average of 12.4 percentage points, with gains exceeding 20 pp in the most volatile scenarios. Ablation studies reveal that indiscriminate probing (probing every step) inflates cost without proportional performance gains, confirming the importance of the surprise‑driven detection step.

The authors acknowledge limitations: active probing assumes the ability to repeat the same action in the same state, which may be costly or unsafe in physical robots; the current matching relies on exact or near‑exact state embeddings, which could be refined with meta‑learning similarity metrics. Nonetheless, GLO VE establishes a new design dimension—relative‑truth‑based validation—that enables LLM agents to maintain up‑to‑date long‑term memory without external supervision or perfect introspection. The code and the dynamically‑drifted benchmarks are released publicly, inviting further research into lifelong, self‑evolving AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment