AgenticSCR: An Autonomous Agentic Secure Code Review for Immature Vulnerabilities Detection

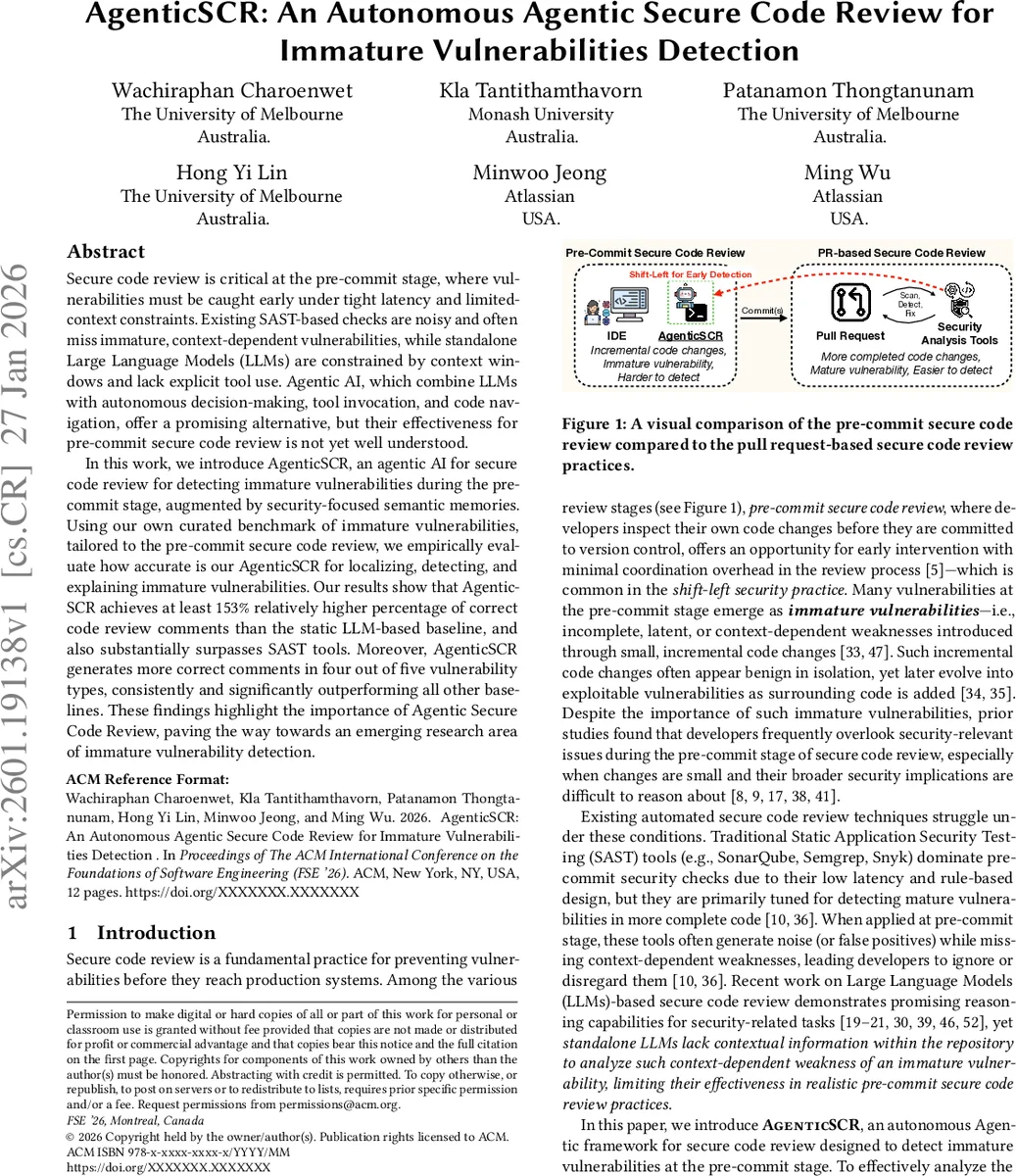

Secure code review is critical at the pre-commit stage, where vulnerabilities must be caught early under tight latency and limited-context constraints. Existing SAST-based checks are noisy and often miss immature, context-dependent vulnerabilities, while standalone Large Language Models (LLMs) are constrained by context windows and lack explicit tool use. Agentic AI, which combine LLMs with autonomous decision-making, tool invocation, and code navigation, offer a promising alternative, but their effectiveness for pre-commit secure code review is not yet well understood. In this work, we introduce AgenticSCR, an agentic AI for secure code review for detecting immature vulnerabilities during the pre-commit stage, augmented by security-focused semantic memories. Using our own curated benchmark of immature vulnerabilities, tailored to the pre-commit secure code review, we empirically evaluate how accurate is our AgenticSCR for localizing, detecting, and explaining immature vulnerabilities. Our results show that AgenticSCR achieves at least 153% relatively higher percentage of correct code review comments than the static LLM-based baseline, and also substantially surpasses SAST tools. Moreover, AgenticSCR generates more correct comments in four out of five vulnerability types, consistently and significantly outperforming all other baselines. These findings highlight the importance of Agentic Secure Code Review, paving the way towards an emerging research area of immature vulnerability detection.

💡 Research Summary

The paper addresses a critical gap in modern software development: the detection of “immature” security vulnerabilities during the pre‑commit stage, when developers review their own incremental code changes before they are committed to version control. Immature vulnerabilities are incomplete, latent, or highly context‑dependent flaws that may appear benign in isolation but can evolve into exploitable weaknesses as surrounding code is added. Existing static application security testing (SAST) tools (e.g., SonarQube, Semgrep, Snyk) are rule‑based, low‑latency solutions optimized for mature vulnerabilities in more complete code. When applied to pre‑commit changes they generate substantial noise (false positives) and miss context‑dependent issues. Recent large language model (LLM)‑based secure code review approaches improve reasoning capabilities but suffer from limited context windows and lack of explicit tool use, making them ill‑suited for the nuanced, repository‑wide analysis required for immature vulnerability detection.

To overcome these limitations, the authors propose AgenticSCR, an autonomous, agentic AI framework that combines LLM reasoning with explicit decision‑making, tool invocation, repository navigation, and a security‑focused memory system. The architecture is formally defined as a tuple ⟨E, A, G, M, T, Π⟩ where:

- E is the CLI‑based environment (filesystem, git repository, toolchain).

- A consists of two coordinated sub‑agents: a detector (ad) and a validator (av).

- G encodes high‑level goals (localization, detection, explanation).

- M is a structured memory comprising long‑term semantic memory (SAST rules) and short‑term episodic memory.

- T is the set of executable tools (git, grep, bash, etc.).

- Π is the coordination policy governing task decomposition, agent collaboration, and tool selection.

The detector sub‑agent extracts the diff (git diff) to focus on modified files and line ranges, then uses an LLM (Claude) together with a long‑term semantic memory of SAST rules to perform line‑level localization, generate a security review comment, and predict the vulnerability type. The validator sub‑agent leverages a CWE‑based validation framework (a tree of common weakness patterns) to verify and filter the detector’s output, thereby reducing false positives. The two agents iteratively exchange observations, plan actions, and call tools, with the memory system preserving context across iterations.

To evaluate AgenticSCR, the authors curated SCR‑Bench, a repository‑aware, human‑annotated line‑level benchmark of immature vulnerabilities specifically for the pre‑commit stage. SCR‑Bench includes the full repository context (dependencies, configuration files, etc.) and line‑level ground truth for localization, comment relevance, and vulnerability type. Experiments compare AgenticSCR against (1) a static LLM‑based baseline (the “CodeReviewer” approach from Liu et al.) and (2) traditional SAST tools.

Key results:

- AgenticSCR produces correct comments (localization + relevance + type) for 17.5 % of generated comments, an absolute improvement of 10.6 percentage points over the static LLM baseline.

- It generates only 32 % as many comments as the baseline, indicating a substantial reduction in noise.

- Relative to the baseline, AgenticSCR achieves at least 153 % higher correct‑comment rate.

- For four out of five vulnerability categories, AgenticSCR yields the highest correct‑comment proportion.

- Ablation studies show that both the semantic SAST memory (detector) and the CWE‑based validation (validator) contribute positively; the combination yields the best performance, improving localization and relevance by 10.2 % while cutting comment volume by 81 %.

The paper’s contributions are threefold:

- Introduction of AgenticSCR, a security‑augmented, autonomous agentic framework for pre‑commit secure code review.

- Construction and public release (upon acceptance) of SCR‑Bench, the first line‑level, repository‑aware benchmark for immature vulnerability detection.

- Empirical demonstration that agentic AI with explicit memory and validation mechanisms can outperform both rule‑based SAST tools and monolithic LLM approaches in a realistic, low‑latency development workflow.

In discussion, the authors argue that the success of AgenticSCR highlights the importance of security‑focused semantic memory and CWE‑driven validation for autonomous code review agents. They suggest future work on (a) domain‑specific skill‑sets for agents, (b) scaling to larger codebases with more sophisticated tool orchestration, and (c) integration into continuous integration pipelines for real‑time pre‑commit security enforcement.

Overall, the study establishes a promising new research direction—immature vulnerability detection via autonomous, memory‑enhanced agents—and provides concrete evidence that such systems can deliver higher precision, lower noise, and better contextual reasoning than existing static analysis or plain LLM‑based solutions.

Comments & Academic Discussion

Loading comments...

Leave a Comment