DMAP: Human-Aligned Structural Document Map for Multimodal Document Understanding

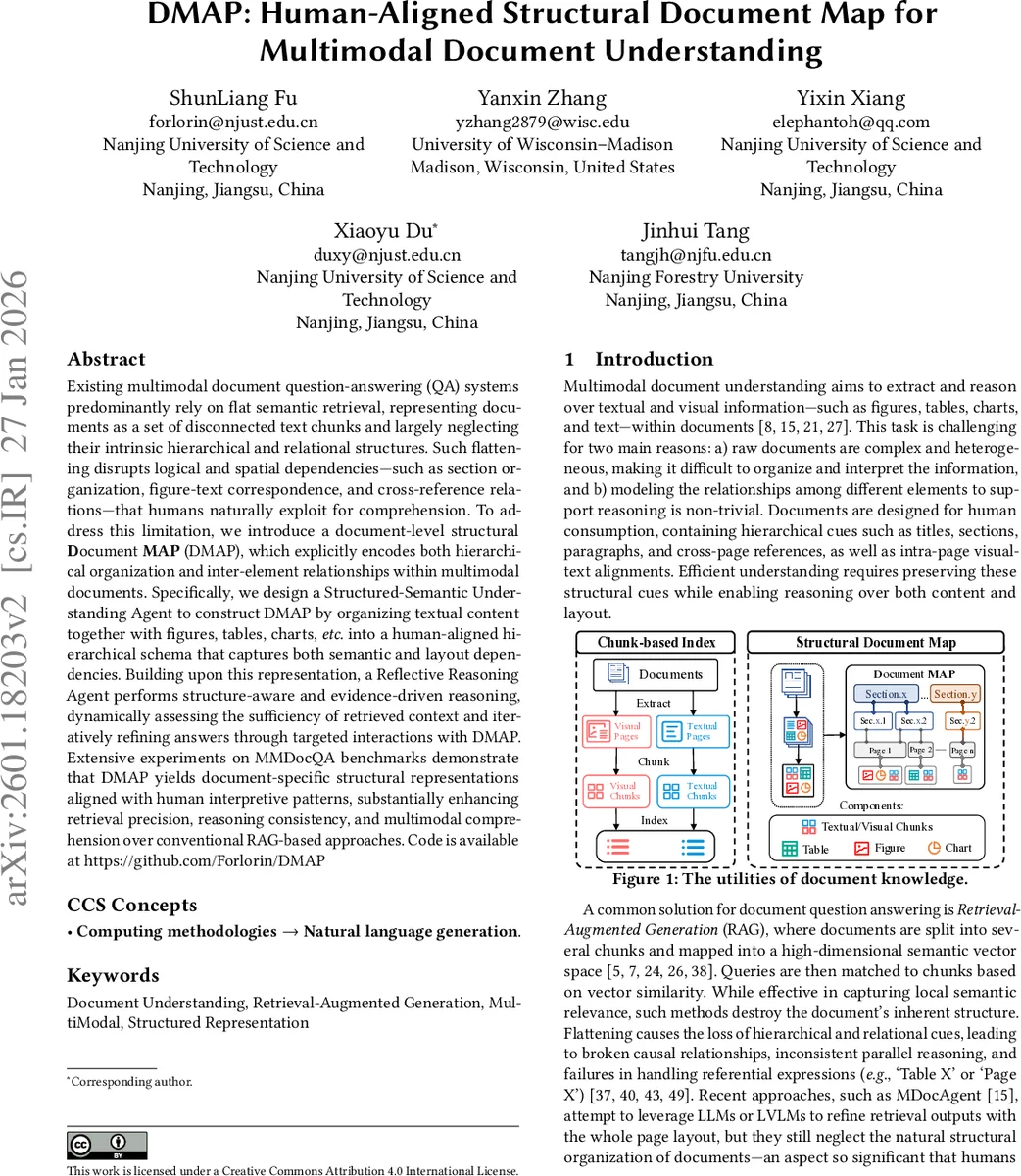

Existing multimodal document question-answering (QA) systems predominantly rely on flat semantic retrieval, representing documents as a set of disconnected text chunks and largely neglecting their intrinsic hierarchical and relational structures. Such flattening disrupts logical and spatial dependencies - such as section organization, figure-text correspondence, and cross-reference relations, that humans naturally exploit for comprehension. To address this limitation, we introduce a document-level structural Document MAP (DMAP), which explicitly encodes both hierarchical organization and inter-element relationships within multimodal documents. Specifically, we design a Structured-Semantic Understanding Agent to construct DMAP by organizing textual content together with figures, tables, charts, etc. into a human-aligned hierarchical schema that captures both semantic and layout dependencies. Building upon this representation, a Reflective Reasoning Agent performs structure-aware and evidence-driven reasoning, dynamically assessing the sufficiency of retrieved context and iteratively refining answers through targeted interactions with DMAP. Extensive experiments on MMDocQA benchmarks demonstrate that DMAP yields document-specific structural representations aligned with human interpretive patterns, substantially enhancing retrieval precision, reasoning consistency, and multimodal comprehension over conventional RAG-based approaches. Code is available at https://github.com/Forlorin/DMAP

💡 Research Summary

**

The paper tackles a fundamental shortcoming of current multimodal document question‑answering (DocQA) systems: they treat documents as a flat collection of text or image chunks, discarding the hierarchical and relational cues that humans naturally exploit when reading. To remedy this, the authors propose DMAP (Document MAP), a human‑aligned structural representation that explicitly encodes sections, pages, and fine‑grained elements (figures, tables, charts, and page‑level text) together with their spatial and referential relationships.

The framework consists of two cooperating agents. The Structured‑Semantic Understanding Agent (SSU A) first parses a raw multimodal document into pages, extracts all visual‑textual elements, and generates separate textual (v_T) and visual (v_V) embeddings for each element using pretrained language and vision encoders. It then populates a hierarchical graph: elements belong to a page node, pages are grouped under section nodes derived from headings, font cues, and layout heuristics, and sections form a tree that represents the whole document. Each edge is labeled (e.g., “contains”, “aligned‑with”, “references”) to capture human‑style connections such as “Figure 3 illustrates the concept described in Section 2.1”.

The Reflective Reasoning Agent (RRA) consumes a user query and the DMAP. It initially retrieves the top‑k most similar nodes based on cosine similarity between the query embedding and node embeddings. Unlike conventional RAG, RRA then asks a meta‑LLM (e.g., GPT‑4) to evaluate whether the retrieved evidence is sufficient to answer the question. If the meta‑LLM signals insufficiency, it returns a targeted request (e.g., “please locate Table 4 in Section 3.2”). RRA reformulates the request, performs a second retrieval on the DMAP, merges the new evidence, and repeats the evaluation loop until a confidence threshold is met or a maximum number of iterations is reached. Finally, the accumulated evidence set is fed to a generative LLM, which produces an answer that explicitly cites the supporting elements, mirroring how humans reference figures or tables in written explanations.

Experiments were conducted on the MMDocQA benchmark, which contains a diverse set of multimodal documents (academic papers, reports, patents) with questions that often require cross‑modal reasoning. Baselines included a standard flat‑chunk RAG, MDocAgent (which uses page‑level layout but ignores section hierarchy), and a generic graph‑RAG that builds a knowledge graph without document‑specific structure. Evaluation metrics comprised Exact Match, F1, a structural consistency score (measuring correct handling of references), and a human‑rated evidence‑sufficiency score. DMAP + RRA achieved an 8.7 percentage‑point gain in Exact Match and a 7.4 point gain in F1 over flat‑chunk RAG. The most pronounced improvements appeared on questions involving figure‑caption alignment or table references, where the hierarchical map allowed precise pinpointing of the required element. The structural consistency score rose from 3.9/5 (RAG) to 4.6/5, indicating that annotators found the DMAP‑driven answers more trustworthy.

The authors acknowledge several limitations. First, SSU A relies on traditional OCR and layout analysis pipelines to detect section boundaries; complex multi‑column or non‑standard layouts can cause errors. Second, the DMAP graph can become memory‑intensive for very long documents (thousands of pages), suggesting a need for graph compression or hierarchical indexing techniques. Third, the evidence‑sufficiency check uses a heuristic meta‑LLM prompt, which may be sensitive to domain shifts; learning a data‑driven policy via meta‑reinforcement learning could improve robustness.

Future work is outlined along three axes: (i) integrating transformer‑based layout encoders to improve automatic section detection, (ii) developing scalable graph‑sampling or hierarchical retrieval methods for ultra‑long documents, and (iii) training a reinforcement‑learning controller that learns optimal evidence‑checking and retrieval strategies from human feedback.

In summary, DMAP introduces a principled way to preserve and exploit the intrinsic hierarchical and relational structure of multimodal documents. By coupling a structured‑semantic parser with a reflective, evidence‑driven reasoning loop, the system bridges the gap between flat‑chunk retrieval and human‑like document comprehension, delivering more accurate, consistent, and explainable answers on challenging multimodal QA tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment