A Systemic Evaluation of Multimodal RAG Privacy

The growing adoption of multimodal Retrieval-Augmented Generation (mRAG) pipelines for vision-centric tasks (e.g. visual QA) introduces important privacy challenges. In particular, while mRAG provides a practical capability to connect private datasets to improve model performance, it risks the leakage of private information from these datasets during inference. In this paper, we perform an empirical study to analyze the privacy risks inherent in the mRAG pipeline observed through standard model prompting. Specifically, we implement a case study that attempts to infer the inclusion of a visual asset, e.g. image, in the mRAG, and if present leak the metadata, e.g. caption, related to it. Our findings highlight the need for privacy-preserving mechanisms and motivate future research on mRAG privacy.

💡 Research Summary

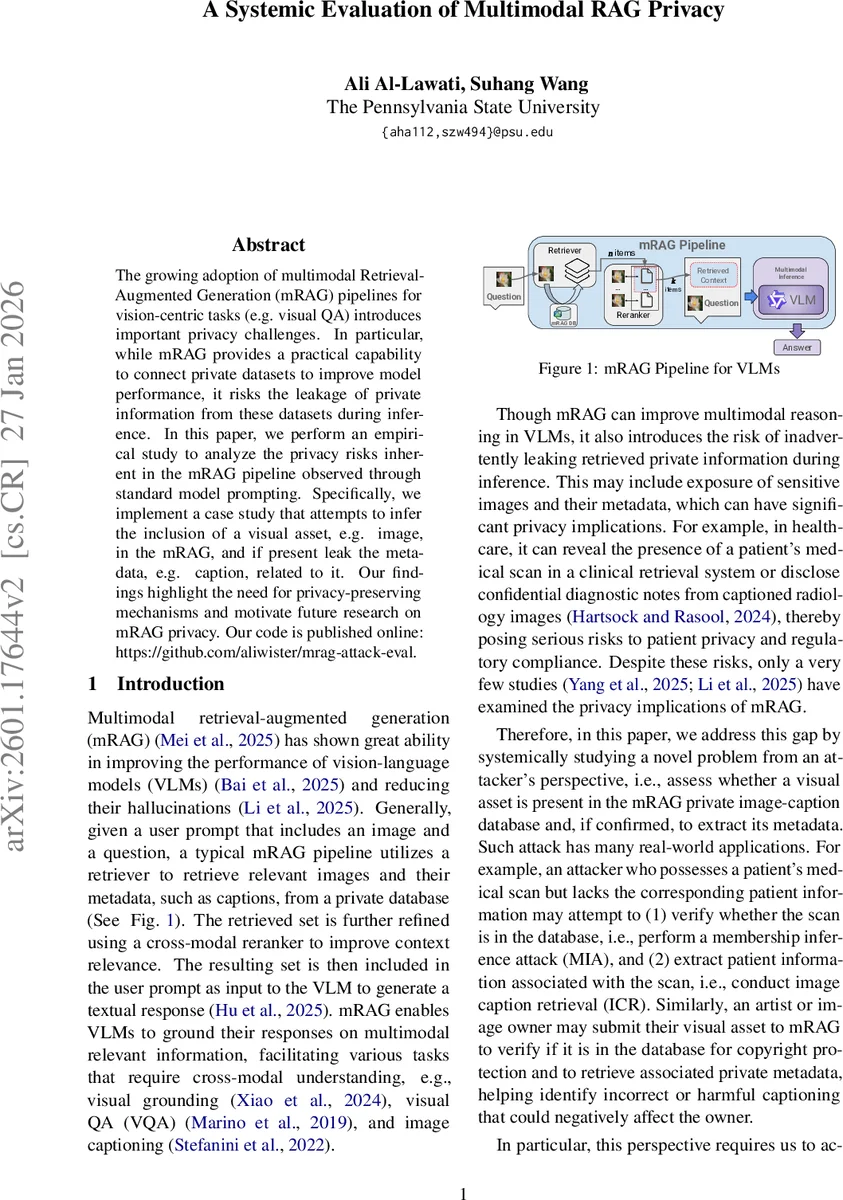

The paper presents a systematic empirical study of privacy risks in multimodal Retrieval‑Augmented Generation (mRAG) pipelines that are used for vision‑centric tasks such as visual question answering. An mRAG system typically retrieves image‑caption pairs from a private database, reranks them, and feeds the top results together with the user’s query image into a vision‑language model (VLM) that generates a textual answer. While this architecture improves performance and reduces hallucinations, it also creates a potential leakage channel for private visual assets and their associated metadata.

To quantify this risk, the authors define two attack scenarios. The first, Membership Inference Attack (MIA), asks whether a given image (or a transformed version of it) is present in the private database. The second, Image Caption Retrieval (ICR), attempts to extract the exact caption linked to that image if it is present. Both attacks are performed in a black‑box setting: the adversary can only send an image and a textual prompt to the system’s API and observe the generated answer. The prompts are deliberately simple (“Is the input image identical to any retrieved image?” for MIA and “Return the caption verbatim for the matching image” for ICR) to isolate the intrinsic privacy leakage of the pipeline rather than the effect of sophisticated adversarial optimization.

The experimental setup mirrors a realistic mRAG pipeline. Images are encoded with CLIP, and cosine similarity is used to retrieve the top n = 20 candidates from a multimodal database. A Jina‑Reranker (image‑image configuration) then selects the top k = 5 pairs for generation. Three state‑of‑the‑art VLMs—Qwen2.5‑VL, Cosmos‑Reason1, and InternVL3.5—are evaluated across four datasets: medical scans, generic COCO‑v2 images, a Pokémon image collection, and the mRAG‑Bench suite. For each image, four transformations are applied: the original, 90°/180° rotation, central cropping, and masking.

Results for MIA show near‑perfect performance on original images (average F1 ≈ 0.993). Even under transformations, the attack remains effective: average F1 ranges from 0.96 for cropping to 0.60 for rotation, indicating that simple visual perturbations do not fully mitigate leakage. ICR performance is more variable. Exact‑match caption recovery on original images reaches 0.75 on generic datasets but drops to 0.41 on medical data, reflecting the higher semantic complexity of clinical images. Transformations further degrade success, with up to a 72 % reduction under rotation.

Beyond the core attacks, the authors conduct ablation studies on prompt structure and retrieval‑rerank configurations. Placing the query image before the retrieved set in the prompt dramatically reduces MIA success, suggesting that ordering influences the model’s attention to private content. The reranking step consistently dampens ICR leakage, though its effectiveness depends on dataset characteristics and the size of the retrieved set. Larger candidate pools (e.g., increasing n to 40) increase attack success, highlighting a trade‑off between retrieval richness and privacy.

The paper’s contributions are threefold: (i) a comprehensive evaluation of both MIA and ICR attacks on image‑centric mRAG under realistic visual transformations; (ii) extensive ablations revealing how prompt design, candidate‑set size, and reranking affect privacy exposure; and (iii) empirical insights that motivate the development of privacy‑preserving mRAG mechanisms such as differential privacy, encrypted retrieval, or transformation‑robust prompting.

Limitations include the focus on text‑only VLMs (excluding multimodal LLMs that generate images) and the assumption of a purely black‑box adversary with limited computational resources. Defensive strategies are discussed conceptually but not implemented or benchmarked. Future work should explore concrete mitigation techniques, extend analysis to generative multimodal models, and evaluate defenses under adaptive adversaries.

Overall, the study demonstrates that mRAG pipelines remain vulnerable to privacy attacks even when images are altered, underscoring the urgent need for privacy‑by‑design considerations in the deployment of multimodal retrieval‑augmented systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment