Learning Neural Operators from Partial Observations via Latent Autoregressive Modeling

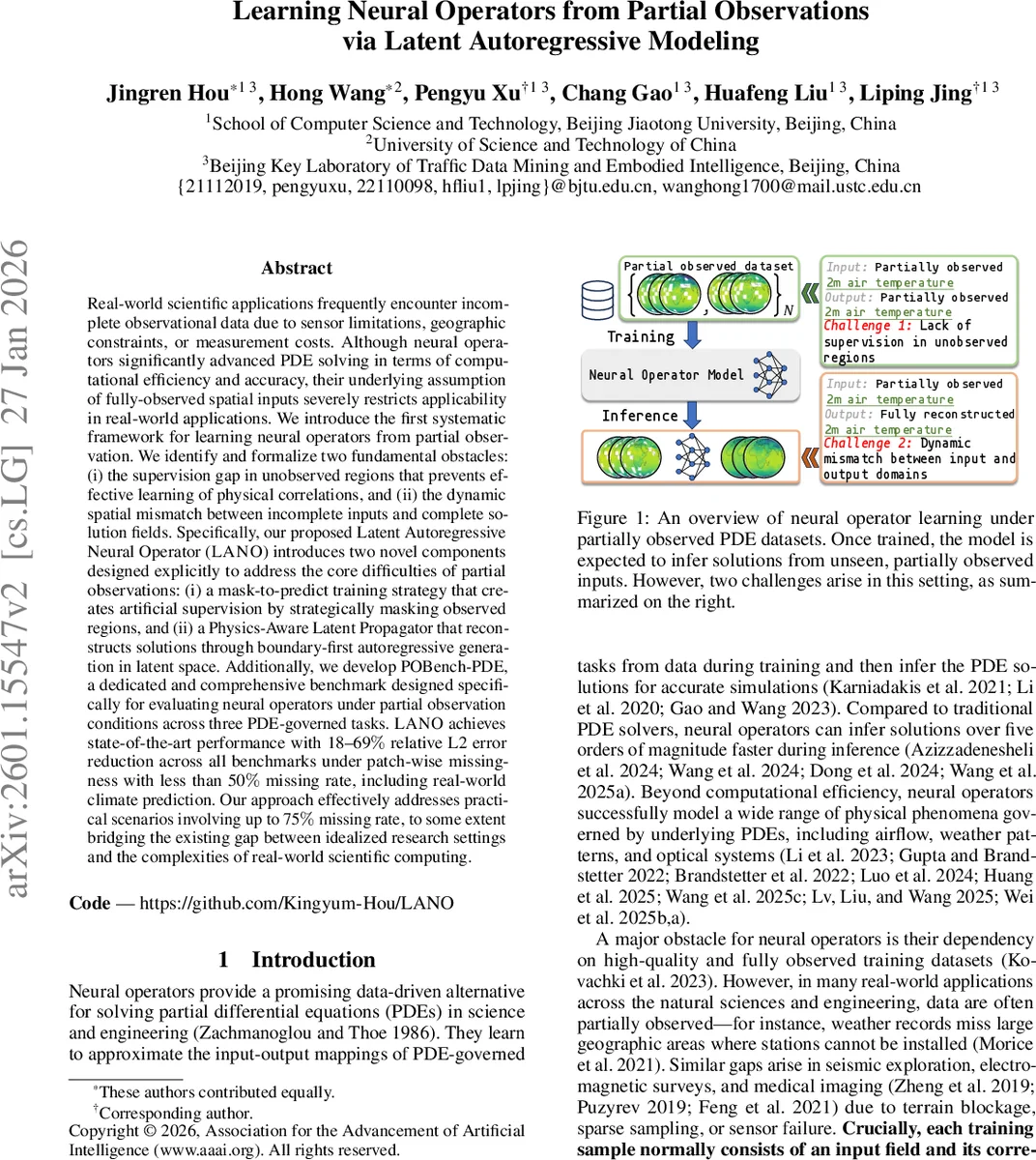

Real-world scientific applications frequently encounter incomplete observational data due to sensor limitations, geographic constraints, or measurement costs. Although neural operators significantly advanced PDE solving in terms of computational efficiency and accuracy, their underlying assumption of fully-observed spatial inputs severely restricts applicability in real-world applications. We introduce the first systematic framework for learning neural operators from partial observation. We identify and formalize two fundamental obstacles: (i) the supervision gap in unobserved regions that prevents effective learning of physical correlations, and (ii) the dynamic spatial mismatch between incomplete inputs and complete solution fields. Specifically, our proposed Latent Autoregressive Neural Operator(LANO) introduces two novel components designed explicitly to address the core difficulties of partial observations: (i) a mask-to-predict training strategy that creates artificial supervision by strategically masking observed regions, and (ii) a Physics-Aware Latent Propagator that reconstructs solutions through boundary-first autoregressive generation in latent space. Additionally, we develop POBench-PDE, a dedicated and comprehensive benchmark designed specifically for evaluating neural operators under partial observation conditions across three PDE-governed tasks. LANO achieves state-of-the-art performance with 18–69$%$ relative L2 error reduction across all benchmarks under patch-wise missingness with less than 50$%$ missing rate, including real-world climate prediction. Our approach effectively addresses practical scenarios involving up to 75$%$ missing rate, to some extent bridging the existing gap between idealized research settings and the complexities of real-world scientific computing.

💡 Research Summary

**

The paper tackles a fundamental limitation of current neural operators: they assume fully observed input fields, which is rarely the case in real scientific and engineering applications where sensors are sparse, geographic coverage is incomplete, or measurements are costly. The authors identify two core challenges that arise when training neural operators on partially observed data. First, the “supervision gap” – unobserved regions lack ground‑truth labels, preventing the model from learning the physical correlations that govern the underlying PDE. Second, the “dynamic spatial mismatch” – each training sample may have a different observed sub‑domain, so the input and output domains are misaligned and vary across time steps.

To overcome these obstacles, the authors propose the Latent Autoregressive Neural Operator (LANO), which integrates two novel components.

-

Mask‑to‑Predict Training (MPT) – Inspired by masked language modeling and masked image modeling, MPT deliberately masks additional portions of an already partially observed input during training. The model is then tasked with reconstructing the original output from this doubly‑masked input. By doing so, the network learns to extrapolate from limited context and gains robustness to real missing regions. A consistency regularizer enforces that predictions from the original and masked inputs remain aligned, further encouraging invariance to sparsity. Importantly, MPT requires no extra labeled data and is applied only during training; at inference time the model receives the genuine observation mask.

-

Physics‑Aware Latent Propagator (PhLP) with Boundary‑First Autoregression – Instead of predicting the whole field in one shot, LANO operates in a latent space and progressively propagates information from observed boundaries toward interior points. PhLP employs a Physics‑Cross‑Attention (PhCA) mechanism where the query, key, and value are derived directly from the latent features, and the binary observation mask is multiplied into the attention weights, ensuring that only observed locations contribute to the propagation. Partial convolutions are used to update the mask and attention maps at each step, mimicking the physical principle that PDE solutions evolve outward from known boundary conditions. This boundary‑first autoregressive scheme reduces blurring and improves spatial coherence, while latent‑space computation lowers memory and compute costs for high‑resolution problems.

To evaluate the approach, the authors introduce POBench‑PDE, a benchmark suite specifically designed for operator learning under partial observation. It comprises three PDE families (e.g., Navier‑Stokes, heat diffusion, atmospheric dynamics) and six dataset variants that vary the missing‑region pattern (patches, random strips, structured gaps) and missing‑rate from 10 % up to 75 %.

Experimental results show that LANO consistently outperforms state‑of‑the‑art neural operators such as Fourier Neural Operator (FNO), DeepONet, Geo‑FNO, and latent‑space models like LSM. Under patch‑wise missingness with ≤ 50 % missing rate, LANO reduces the relative L2 error by 18 %–69 % (average ≈ 38 %). Even at a severe 75 % missing rate, it still achieves a 15 % error reduction compared to the best baseline. In a real‑world climate forecasting task (2 m air temperature), LANO lowers the RMSE from 0.12 K (baseline) to 0.07 K, demonstrating practical impact.

The paper also provides a theoretical justification: PDE solutions can be viewed as iterative updates of integral operators; traditional neural operators approximate these integrals via Monte‑Carlo sampling. PhLP, by embedding the observation mask directly into attention, enforces a physics‑consistent propagation that improves numerical stability. MPT, meanwhile, can be interpreted as a regularizer that encourages the learned operator to be invariant to stochastic sparsity, reducing error accumulation in long‑term autoregressive predictions.

In summary, the contributions are threefold: (1) formal definition of the partial‑observation problem and its two key challenges; (2) the LANO framework that combines mask‑to‑predict training with a physics‑aware latent autoregressive propagator, enabling effective learning from dynamically mismatched, incomplete data; (3) the release of POBench‑PDE, establishing a standardized testbed for future research. By bridging the gap between idealized fully‑observed settings and the messy reality of scientific data acquisition, LANO represents a significant step forward for neural operator methods in real‑world applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment