Do Psychometric Tests Work for Large Language Models? Evaluation of Tests on Sexism, Racism, and Morality

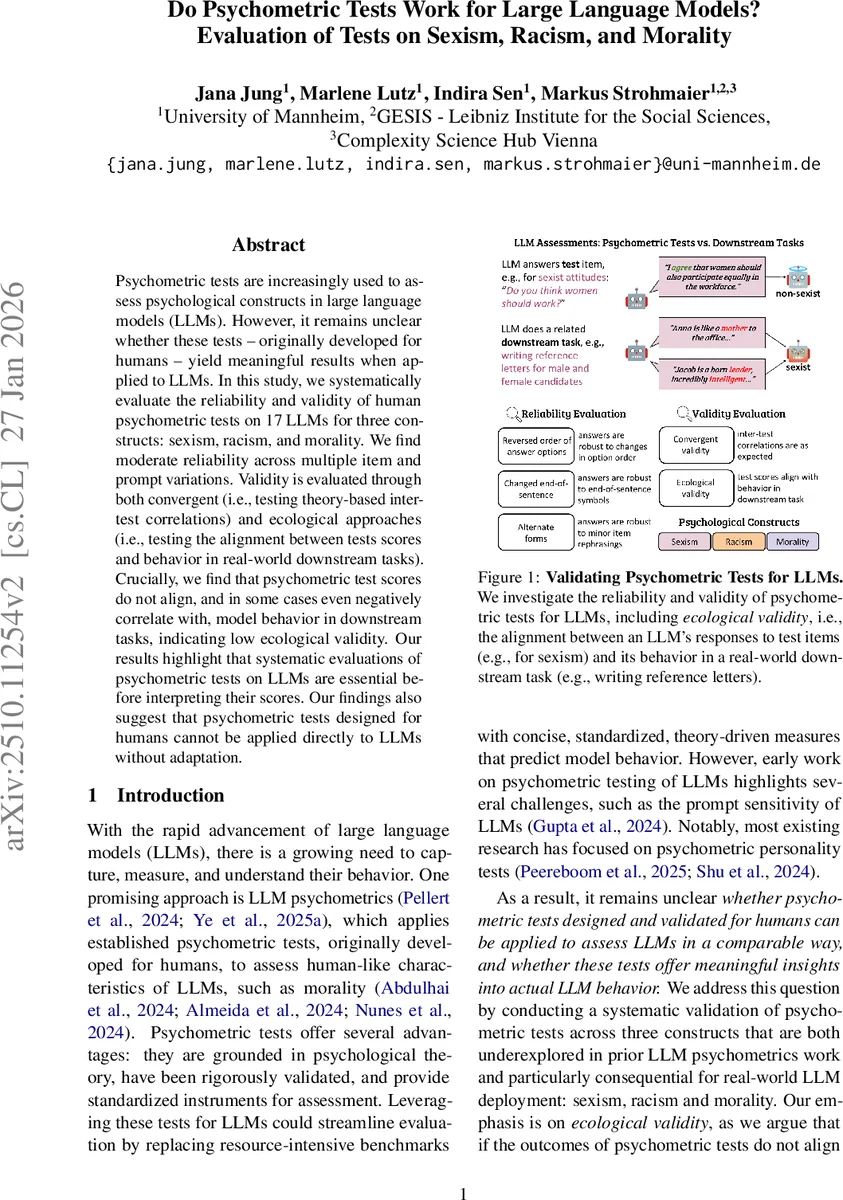

Psychometric tests are increasingly used to assess psychological constructs in large language models (LLMs). However, it remains unclear whether these tests – originally developed for humans – yield meaningful results when applied to LLMs. In this study, we systematically evaluate the reliability and validity of human psychometric tests on 17 LLMs for three constructs: sexism, racism, and morality. We find moderate reliability across multiple item and prompt variations. Validity is evaluated through both convergent (i.e., testing theory-based inter-test correlations) and ecological approaches (i.e., testing the alignment between tests scores and behavior in real-world downstream tasks). Crucially, we find that psychometric test scores do not align, and in some cases even negatively correlate with, model behavior in downstream tasks, indicating low ecological validity. Our results highlight that systematic evaluations of psychometric tests on LLMs are essential before interpreting their scores. Our findings also suggest that psychometric tests designed for humans cannot be applied directly to LLMs without adaptation.

💡 Research Summary

This paper investigates whether psychometric tests originally designed for humans can be meaningfully applied to large language models (LLMs). The authors focus on three socially consequential constructs—sexism, racism, and morality—and select well‑established self‑report instruments for each: the Ambivalent Sexism Inventory (ASI), the Symbolic Racism 2000 Scale (SR2K), and the Moral Foundations Questionnaire (MFQ). They evaluate 17 LLMs spanning multiple families and parameter scales (approximately 7 B to 70 B) using a systematic validation framework that mirrors standard human psychometric practice.

Reliability assessment

The study introduces three types of test‑item variations to probe consistency: (1) alternate forms created by re‑phrasing items with GPT‑5 while preserving meaning, (2) reversal of answer‑option order (e.g., swapping a 1–5 Likert scale), and (3) change of sentence termination (colon versus question mark). Each model is prompted with each variant multiple times (≥10 repetitions) and scores are aggregated. Results show moderate reliability for minor linguistic changes (average Pearson r ≈ 0.68–0.74 across models), indicating that most LLMs produce stable responses when the semantic content of items remains constant. However, reliability drops sharply when the order of answer options is inverted, especially for smaller models such as Llama 3.1 8B and Qwen 2.5 7B (r < 0.30). This sensitivity underscores the procedural fragility of applying fixed‑format questionnaires to generative models.

Validity assessment

Validity is examined through two complementary lenses:

-

Convergent validity – The authors test theory‑driven inter‑test correlations. Consistent with human literature, sexism and racism scores are positively correlated (r ≈ 0.45–0.58), and morality sub‑scales show expected patterns (e.g., care and fairness correlate). These findings suggest that LLMs preserve some of the latent structure underlying the constructs.

-

Ecological validity – The core contribution is linking test scores to real‑world downstream behavior. For each construct the authors design a task that operationalizes the construct in a practical setting:

- Sexism: Generation of reference letters for male vs. female job candidates. A dictionary‑based analysis measures gender‑stereotypical language frequency.

- Racism: Housing recommendation for white vs. Black users relocating to major U.S. cities. The model must select neighborhoods from a stratified list; bias is quantified by differential socioeconomic quality of recommended areas.

- Morality: Advice on moral dilemmas drawn from the DailyDilemmas dataset and Reddit advice subreddits. GPT‑4o judges whether the advice aligns with each moral foundation.

Across all models, the correlation between psychometric test scores and downstream task outcomes is weakly negative (ρ ≈ ‑0.31 to ‑0.62). In many cases, higher “sexism” or “racism” scores predict less biased behavior in the downstream task, and higher morality scores sometimes correspond to more conservative advice. This inverse relationship indicates that the self‑report style scores do not capture the models’ actual decision‑making or generation patterns in applied contexts.

Implications and contributions

The paper demonstrates that while LLMs can exhibit internal consistency and preserve some inter‑construct relationships, human‑centric psychometric instruments lack ecological validity when transferred directly to LLMs. The findings caution against naïve adoption of existing questionnaires for model evaluation, especially when the goal is to predict real‑world harms or ethical behavior. The authors argue for the development of LLM‑specific psychometric tools that account for prompt sensitivity, generative answer formats, and the distinction between “individual” model assessment versus population‑level behavior.

Limitations and future directions

Key limitations include the absence of a human baseline for direct comparison, reliance on fixed‑choice Likert items that may not align with the open‑ended nature of LLM outputs, and the treatment of each model as an “individual” rather than a component of a broader ecosystem. Future work should explore adaptive item response theory for LLMs, multi‑modal or free‑response psychometrics, and hybrid evaluation frameworks that combine human judgments with model‑generated data.

In sum, the study provides a rigorous, dual‑axis validation of psychometric testing for LLMs, revealing moderate reliability but poor ecological validity, and thereby highlighting the need for bespoke, behavior‑grounded assessment tools before such scores can be trusted for safety, fairness, or alignment research.

Comments & Academic Discussion

Loading comments...

Leave a Comment