TextMineX: Data, Evaluation Framework and Ontology-guided LLM Pipeline for Humanitarian Mine Action

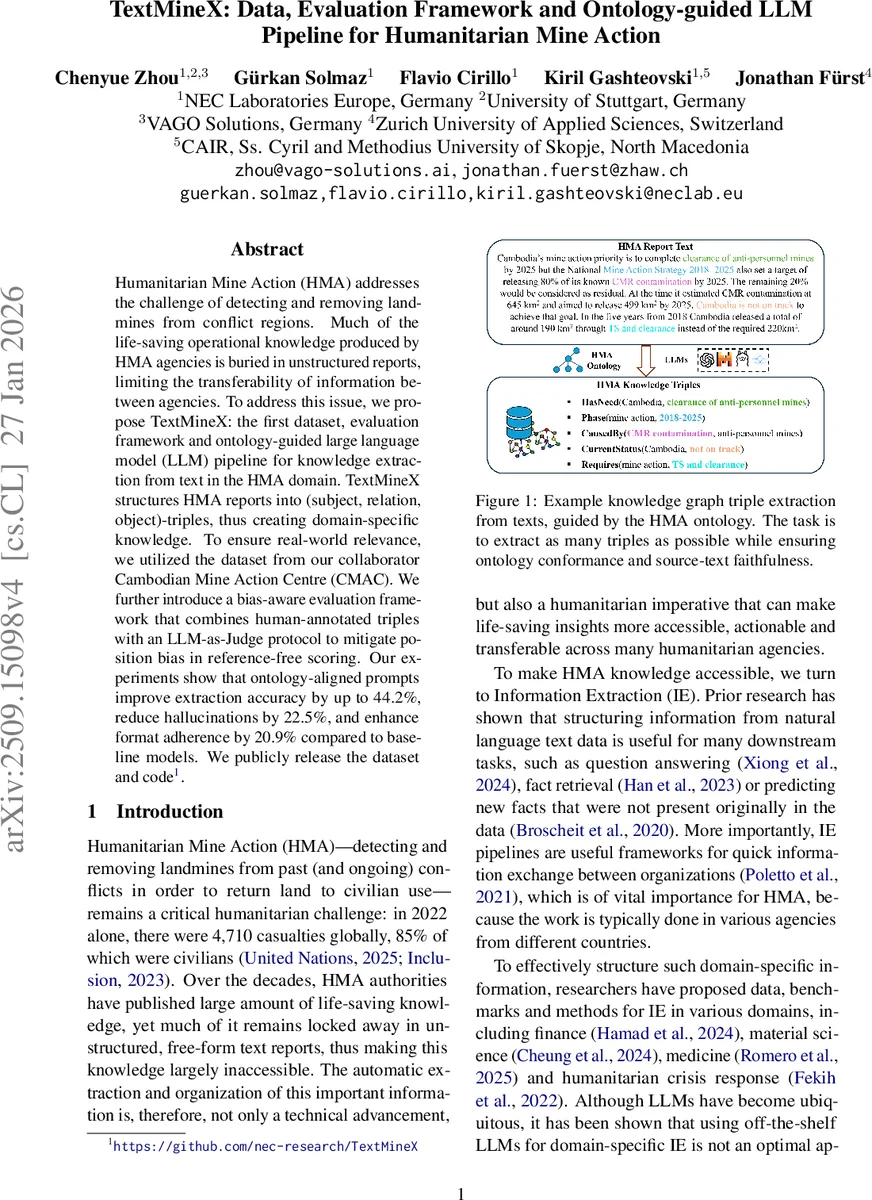

Humanitarian Mine Action (HMA) addresses the challenge of detecting and removing landmines from conflict regions. Much of the life-saving operational knowledge produced by HMA agencies is buried in unstructured reports, limiting the transferability of information between agencies. To address this issue, we propose TextMineX: the first dataset, evaluation framework and ontology-guided large language model (LLM) pipeline for knowledge extraction from text in the HMA domain. TextMineX structures HMA reports into (subject, relation, object)-triples, thus creating domain-specific knowledge. To ensure real-world relevance, we utilized the dataset from our collaborator Cambodian Mine Action Centre (CMAC). We further introduce a bias-aware evaluation framework that combines human-annotated triples with an LLM-as-Judge protocol to mitigate position bias in reference-free scoring. Our experiments show that ontology-aligned prompts improve extraction accuracy by up to 44.2%, reduce hallucinations by 22.5%, and enhance format adherence by 20.9% compared to baseline models. We publicly release the dataset and code.

💡 Research Summary

TextMineX addresses a critical gap in humanitarian mine action (HMA) by providing the first domain‑specific dataset, evaluation framework, and ontology‑guided large language model (LLM) pipeline for extracting structured knowledge from unstructured mine‑action reports. The authors collected 120 publicly available technical PDFs from the Cambodian Mine Action Centre (CMAC) and the Geneva International Centre for Humanitarian Demining (GICHD), filtered them for relevance, and focused on the five most recent CMAC reports (233 pages). From these, 1,095 high‑quality (subject, relation, object) triples were curated through a three‑stage process: automatic chunk‑based prompt generation, initial annotation by GPT‑4o and Llama‑3‑70B, and expert human verification. Inter‑annotator agreement scores (Jaccard 0.89, Dice 0.94, Overlap 0.97) confirm the reliability of the ground‑truth set.

The ontology backbone integrates six IMSMA core models with a general humanitarian ontology (Empathi), yielding 160 entity types and 86 relation types that capture the full spectrum of HMA concepts such as MineEvent, ContaminatedArea, and ClearanceMethod. This comprehensive schema guides both annotation and model prompting.

The pipeline consists of four stages. First, a layout‑aware parser (Open‑Parse) splits PDFs into semantically coherent paragraph chunks (average 127 words), preserving contextual information while fitting within typical LLM context windows. Second, five prompting strategies are evaluated: zero‑shot, one‑shot with random sentence, one‑shot with random paragraph, one‑shot with ontology‑aligned sentence, and one‑shot with ontology‑aligned paragraph. Ontology‑aligned demonstrations share the exact entity and relation schema of the target chunk, thereby constraining the label space and encouraging domain‑specific reasoning. Third, the selected prompts are fed to LLMs (GPT‑4o, Llama‑3‑70B) using greedy decoding (temperature 0) to produce deterministic triples. Fourth, a multi‑perspective evaluation combines reference‑based metrics (BLEU, ROUGE, METEOR, BERTScore) with a reference‑free LLM‑as‑Judge approach that scores factuality, format conformance, and ontology compliance. To mitigate position bias, the authors employ sampling and ensure that demonstration examples do not overlap with test inputs.

Experimental results demonstrate that ontology‑aligned prompts (OS/OP) improve extraction accuracy by up to 44.2% compared with random demonstrations, reduce hallucination rates by 22.5%, and increase format adherence by 20.9%. The bias‑aware evaluation reveals that rankings can shift substantially depending on whether reference‑based or reference‑free scores are used, underscoring the importance of accounting for evaluation bias in high‑stakes domains.

Key insights include: (1) embedding a domain‑specific ontology directly into prompts dramatically enhances LLM performance on structured extraction tasks; (2) paragraph‑level chunking preserves essential context without exceeding model limits; (3) combining human annotations with LLM‑as‑Judge provides a robust evaluation pipeline when gold references are scarce. Limitations noted are the dependence on a well‑maintained ontology, potential information loss in very large tables or figures, and the computational cost of LLM‑as‑Judge scoring.

The paper concludes that TextMineX offers a practical, reproducible solution for turning free‑form HMA reports into queryable knowledge graphs, facilitating cross‑agency information sharing and improving operational decision‑making. Future work will explore automatic ontology expansion, multimodal chunking (including tables and images), lightweight judge models, and deployment of an API service for real‑time field use.

Comments & Academic Discussion

Loading comments...

Leave a Comment