A Gift from the Integration of Discriminative and Diffusion-based Generative Learning: Boundary Refinement Remote Sensing Semantic Segmentation

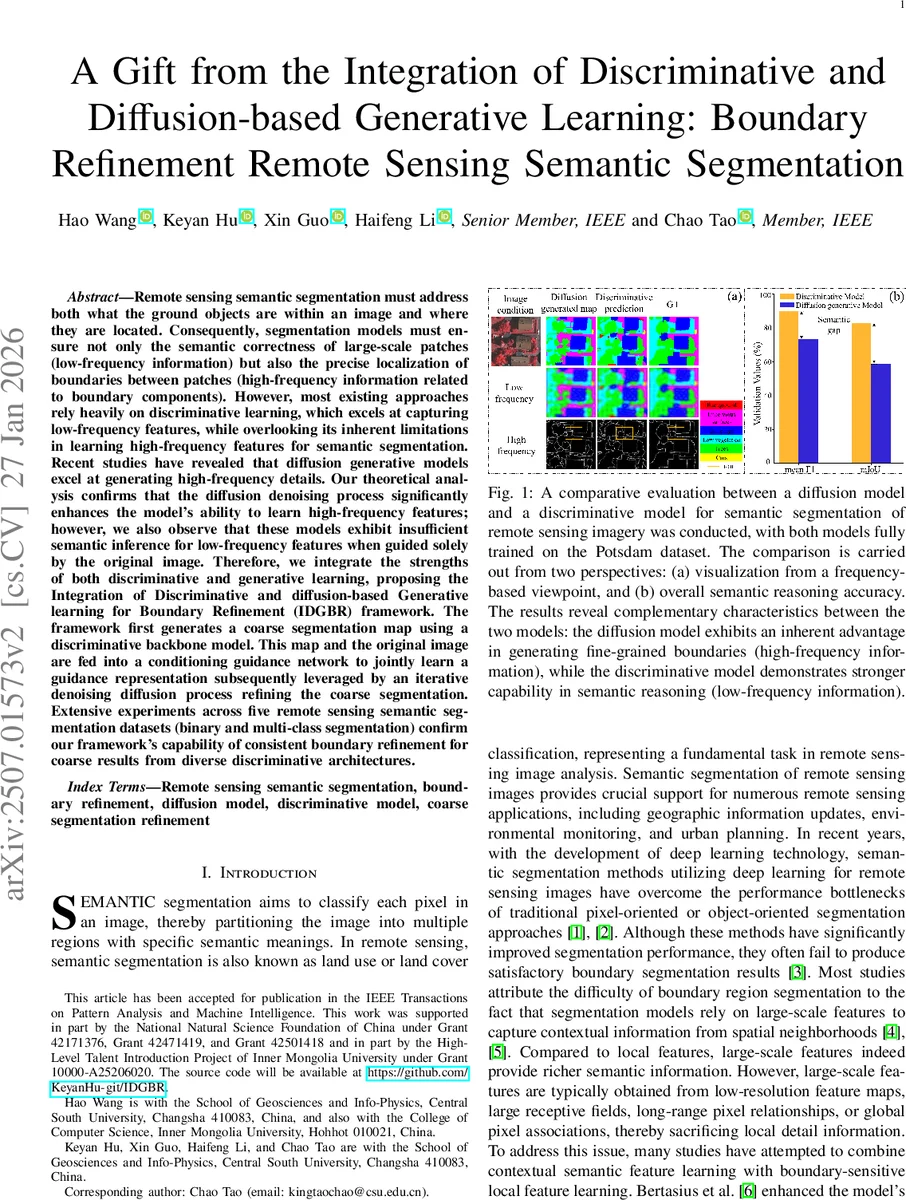

Remote sensing semantic segmentation must address both what the ground objects are within an image and where they are located. Consequently, segmentation models must ensure not only the semantic correctness of large-scale patches (low-frequency information) but also the precise localization of boundaries between patches (high-frequency information). However, most existing approaches rely heavily on discriminative learning, which excels at capturing low-frequency features, while overlooking its inherent limitations in learning high-frequency features for semantic segmentation. Recent studies have revealed that diffusion generative models excel at generating high-frequency details. Our theoretical analysis confirms that the diffusion denoising process significantly enhances the model’s ability to learn high-frequency features; however, we also observe that these models exhibit insufficient semantic inference for low-frequency features when guided solely by the original image. Therefore, we integrate the strengths of both discriminative and generative learning, proposing the Integration of Discriminative and diffusion-based Generative learning for Boundary Refinement (IDGBR) framework. The framework first generates a coarse segmentation map using a discriminative backbone model. This map and the original image are fed into a conditioning guidance network to jointly learn a guidance representation, which an iterative denoising diffusion process then uses to refine the coarse segmentation. Extensive experiments across five remote sensing semantic segmentation datasets (binary and multi-class segmentation) confirm that our framework consistently refines the boundaries of coarse results from diverse discriminative architectures.

💡 Research Summary

The paper addresses a fundamental dilemma in remote‑sensing semantic segmentation: the need to capture low‑frequency, large‑scale semantic information (“what”) while simultaneously preserving high‑frequency boundary details (“where”). Existing discriminative segmentation networks excel at the former because their loss functions prioritize overall pixel‑wise accuracy, which biases learning toward low‑frequency components that dominate the image area. Consequently, high‑frequency boundary cues are suppressed, leading to blurry or inaccurate object borders.
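To make the area imbalance concrete, the following minimal sketch counts how many pixels of a toy mask touch a class boundary. The 64x64 tile, the 32x32 square object, and the function name `boundary_fraction` are illustrative assumptions, not taken from the paper.

```python
def boundary_fraction(mask):
    """Fraction of pixels with at least one 4-neighbour of a different label."""
    h, w = len(mask), len(mask[0])
    boundary = 0
    for y in range(h):
        for x in range(w):
            for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                ny, nx = y + dy, x + dx
                if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] != mask[y][x]:
                    boundary += 1
                    break  # count each pixel at most once
    return boundary / (h * w)


# Hypothetical tile: a 32x32 object centred in a 64x64 background.
size, lo, hi = 64, 16, 48
mask = [[1 if lo <= y < hi and lo <= x < hi else 0 for x in range(size)]
        for y in range(size)]
frac = boundary_fraction(mask)
print(f"boundary pixels: {frac:.1%} of the tile")
```

In this toy case only about 6% of the pixels lie on a boundary, so a loss averaged uniformly over pixels is dominated by interior regions.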

Recent advances in diffusion probabilistic models have demonstrated a remarkable ability to reconstruct high‑frequency details during the reverse denoising process. The authors first provide a theoretical and empirical analysis showing that diffusion models indeed generate sharper boundary structures (as evidenced by Fourier‑domain visualizations) but suffer from poor semantic reasoning when conditioned only on the raw image. In other words, diffusion models capture fine‑grained edge information but lack the low‑frequency contextual understanding that discriminative models provide.
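The link between boundary sharpness and high-frequency content can be illustrated on a one-dimensional edge profile. This is only a sketch of the general idea, not the authors' Fourier analysis; the cutoff of 4, the box blur, and the helper names are assumptions chosen for illustration.

```python
import cmath


def dft_energy(signal):
    """Squared magnitude of each bin of a naive discrete Fourier transform."""
    n = len(signal)
    return [
        abs(sum(signal[t] * cmath.exp(-2j * cmath.pi * k * t / n)
                for t in range(n))) ** 2
        for k in range(n)
    ]


def high_freq_ratio(signal, cutoff):
    """Share of non-DC spectral energy at folded frequencies above `cutoff`."""
    n = len(signal)
    energy = dft_energy(signal)
    total = sum(energy[k] for k in range(1, n))
    high = sum(energy[k] for k in range(1, n) if min(k, n - k) > cutoff)
    return high / total


def circular_box_blur(signal, radius):
    """Moving average with wrap-around, matching the DFT's periodic view."""
    n = len(signal)
    width = 2 * radius + 1
    return [sum(signal[(i + d) % n] for d in range(-radius, radius + 1)) / width
            for i in range(n)]


sharp = [0.0] * 32 + [1.0] * 32          # crisp class boundary
blurred = circular_box_blur(sharp, 4)    # same layout, smeared boundary
sharp_ratio = high_freq_ratio(sharp, cutoff=4)
blurred_ratio = high_freq_ratio(blurred, cutoff=4)
print(sharp_ratio, blurred_ratio)
```

Both profiles encode the same layout, but the blurred one carries a much smaller share of its energy above the cutoff, which is the kind of difference a Fourier-domain comparison of segmentation outputs exposes.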

To exploit the complementary strengths of both paradigms, the authors propose the Integration of Discriminative and diffusion‑based Generative learning for Boundary Refinement (IDGBR). The framework consists of three main components:

- Coarse Segmentation Backbone – Any off‑the‑shelf discriminative network (e.g., DeepLabV3+, UNet, SegFormer) produces an initial segmentation map that reliably encodes low‑frequency semantic content but contains coarse or inaccurate borders.

- Conditional Guidance Network – This module receives both the original remote‑sensing image and the coarse segmentation map, learning a joint representation that fuses semantic context with visual cues. The resulting guidance embedding is designed to steer the diffusion process.

- Diffusion Refinement Module – A diffusion model (based on Stable Diffusion) performs an iterative denoising process on the segmentation label space. The guidance embedding is injected via residual connections at each denoising step, allowing the model to refine boundaries while preserving the semantic layout supplied by the coarse map.
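The control flow of the three components can be sketched as follows. This is a structural illustration only: `refine` and the `toy_*` stand-ins are hypothetical names, the denoiser here is a simple interpolation rather than a trained network, and the sketch operates directly on a small label grid without any latent encoding.

```python
import random


def refine(image, coarse_model, guidance_net, denoiser, num_steps=10, seed=0):
    """Coarse map -> guidance representation -> iterative denoising."""
    rng = random.Random(seed)
    coarse = coarse_model(image)               # stage 1: discriminative backbone
    guidance = guidance_net(image, coarse)     # stage 2: image + coarse map
    # Stage 3: start from Gaussian noise shaped like the label map.
    x = [[rng.gauss(0.0, 1.0) for _ in row] for row in coarse]
    for step in reversed(range(num_steps)):
        x = denoiser(x, guidance, step)        # guidance is used at every step
    return x


# Toy stand-ins so the loop is executable (assumptions, not the paper's models).
def toy_coarse_model(image):
    return [[1.0 if v > 0.5 else 0.0 for v in row] for row in image]


def toy_guidance_net(image, coarse):
    return {"image": image, "coarse": coarse}


def toy_denoiser(x, guidance, step):
    target = guidance["coarse"]
    return [[0.5 * a + 0.5 * b for a, b in zip(x_row, t_row)]
            for x_row, t_row in zip(x, target)]


image = [[0.1, 0.2, 0.9], [0.0, 0.8, 0.7]]
refined = refine(image, toy_coarse_model, toy_guidance_net, toy_denoiser)
```

Because every stage is passed in as a callable, the coarse model can be swapped without touching the refinement loop, which mirrors the backbone-agnostic design described in the summary.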

To stabilize training and align the generative latent space with the guidance features, the authors introduce a regularization term that matches intermediate diffusion features to those of a pre‑trained vision transformer. This alignment mitigates the notorious instability of diffusion training in the early stages and encourages semantic coherence throughout the refinement trajectory.
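One common way to express such a feature-matching term is a cosine-distance penalty between paired feature vectors. The summary does not state the exact form the authors use, so the cosine distance, the weight of 0.5, and all function names below are assumptions for illustration.

```python
import math


def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-8)  # epsilon guards against zero vectors


def alignment_loss(diffusion_tokens, vit_tokens):
    """Mean (1 - cosine similarity) over paired token features."""
    if len(diffusion_tokens) != len(vit_tokens):
        raise ValueError("token sequences must have the same length")
    distances = [1.0 - cosine_similarity(d, v)
                 for d, v in zip(diffusion_tokens, vit_tokens)]
    return sum(distances) / len(distances)


def total_loss(denoising_loss, diffusion_tokens, vit_tokens, weight=0.5):
    """Denoising objective plus the weighted alignment regularizer."""
    return denoising_loss + weight * alignment_loss(diffusion_tokens, vit_tokens)


# Hypothetical 2-D features: the first pair is aligned, the second orthogonal.
diff_feats = [[1.0, 0.0], [0.0, 2.0]]
vit_feats = [[3.0, 0.0], [1.0, 0.0]]
reg = alignment_loss(diff_feats, vit_feats)
```

The penalty is zero when the intermediate features point in the same direction as the reference features and grows as they diverge, independent of feature magnitude.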

The authors evaluate IDGBR on five publicly available remote‑sensing segmentation datasets, covering both binary and multi‑class scenarios. They test multiple discriminative backbones to demonstrate the framework’s backbone‑agnostic nature. Performance is measured with standard metrics (mIoU, F1‑score) and a boundary‑sensitive metric, Weighted F‑measure (WFm), which emphasizes errors near object edges. Results show consistent improvements: mIoU gains of 2–4 percentage points and WFm gains of 5–9 percentage points over strong baselines. Qualitative visualizations reveal that IDGBR restores crisp, geometrically accurate borders, even for small or thin structures that typical discriminative models tend to blur.
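For reference, the two region-level metrics can be computed from flattened label lists as in the minimal sketch below. The toy labels are invented for illustration, and WFm is deliberately omitted because its definition is not given in this summary.

```python
def per_class_iou(pred, target, num_classes):
    """IoU per class, skipping classes absent from both pred and target."""
    ious = []
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        union = sum(1 for p, t in zip(pred, target) if p == c or t == c)
        if union > 0:
            ious.append(inter / union)
    return ious


def mean_iou(pred, target, num_classes):
    ious = per_class_iou(pred, target, num_classes)
    return sum(ious) / len(ious)


def f1_score(pred, target, positive=1):
    tp = sum(1 for p, t in zip(pred, target) if p == positive and t == positive)
    fp = sum(1 for p, t in zip(pred, target) if p == positive and t != positive)
    fn = sum(1 for p, t in zip(pred, target) if p != positive and t == positive)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Toy example: one boundary pixel is misassigned to the foreground.
target = [0, 0, 0, 0, 1, 1, 1, 1]
pred = [0, 0, 0, 1, 1, 1, 1, 1]
miou = mean_iou(pred, target, num_classes=2)   # (3/4 + 4/5) / 2
f1 = f1_score(pred, target, positive=1)        # 8 / 9
```

A single misplaced boundary pixel shifts these scores only slightly on a realistic image size, which is why a boundary-sensitive measure is reported alongside them.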

The paper also critiques current evaluation practices, arguing that pixel‑wise metrics overlook boundary quality, and advocates for the adoption of boundary‑aware measures like WFm. Finally, the authors discuss future directions, including multi‑scale guidance strategies, lightweight diffusion refinements for real‑time applications, and cross‑domain extensions to medical imaging or autonomous driving.

In summary, IDGBR successfully marries discriminative semantic reasoning with diffusion‑based high‑frequency reconstruction, delivering a versatile and effective solution for boundary refinement in remote‑sensing semantic segmentation.
