A Comprehensive Survey of Deep Learning for Multivariate Time Series Forecasting: A Channel Strategy Perspective

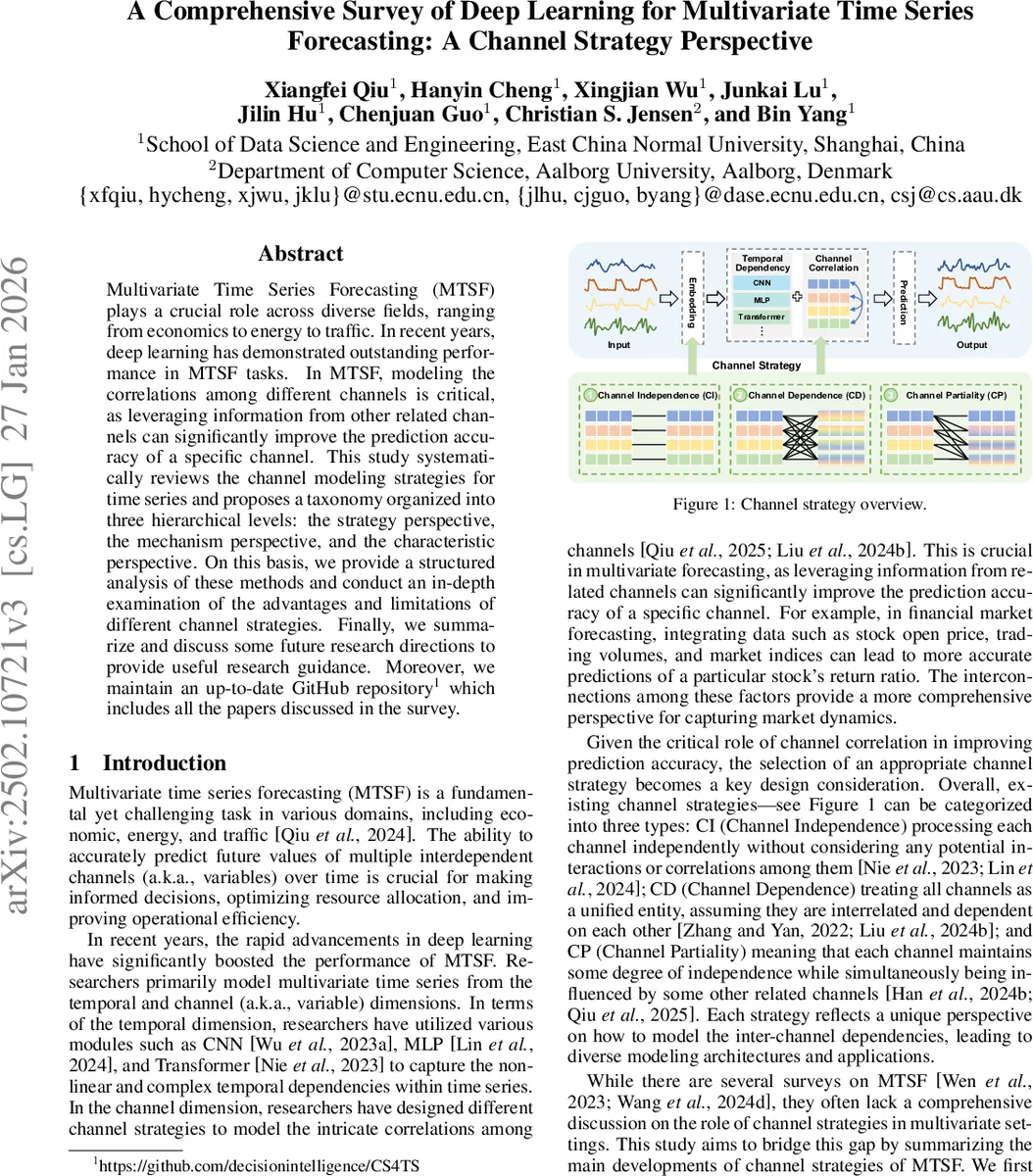

Multivariate Time Series Forecasting (MTSF) plays a crucial role across diverse fields, ranging from economic, energy, to traffic. In recent years, deep learning has demonstrated outstanding performance in MTSF tasks. In MTSF, modeling the correlations among different channels is critical, as leveraging information from other related channels can significantly improve the prediction accuracy of a specific channel. This study systematically reviews the channel modeling strategies for time series and proposes a taxonomy organized into three hierarchical levels: the strategy perspective, the mechanism perspective, and the characteristic perspective. On this basis, we provide a structured analysis of these methods and conduct an in-depth examination of the advantages and limitations of different channel strategies. Finally, we summarize and discuss some future research directions to provide useful research guidance. Moreover, we maintain an up-to-date Github repository (https://github.com/decisionintelligence/CS4TS) which includes all the papers discussed in the survey.

💡 Research Summary

The paper presents a comprehensive survey of deep learning approaches for multivariate time series forecasting (MTSF) with a focus on how different methods handle the relationships among multiple channels (variables). Recognizing that channel correlations are pivotal for accurate forecasting, the authors propose a three‑level taxonomy: (1) Strategy Perspective, (2) Mechanism Perspective, and (3) Characteristic Perspective.

In the Strategy Perspective, three fundamental paradigms are defined: Channel Independence (CI), Channel Dependence (CD), and Channel Partiality (CP). CI treats each channel separately, exemplified by models such as PatchTST, DLinear, and CycleNet; it offers low model complexity and easy scalability but ignores potentially useful inter‑channel information. CD assumes all channels are interdependent and processes them as a unified entity. CD methods are further split into Embedding Fusion (e.g., Informer, Autoformer, TimesNet) where convolutional layers mix channel embeddings, and Explicit Correlation (e.g., iTransformer, TSMixer) where dedicated modules such as self‑attention or MLP mixing explicitly capture cross‑channel dependencies. While CD often yields higher accuracy, it suffers from quadratic computational cost with respect to the number of channels and can be vulnerable to noisy channels. CP occupies a middle ground, allowing each channel to retain some independence while interacting with a selected subset of related channels. CP is divided into Fixed Partial Channels (e.g., MTGNN, which builds a K‑regular graph) and Dynamic Partial Channels (e.g., DUET, which learns a frequency‑domain similarity mask, and CCM, which dynamically clusters channels).

The Mechanism Perspective details the concrete architectural components used to realize these strategies. Transformer‑based mechanisms include Naïve Attention (global channel‑wise attention), Router Attention (a small set of “router” tokens aggregates information to reduce O(N²) complexity to O(N)), Frequency Attention (operating in the spectral domain), and Mask Attention (applying learned masks to suppress irrelevant channels). CNN‑based mechanisms rely on 1‑D or 2‑D convolutions to capture local channel interactions, while MLP‑based mechanisms employ channel‑wise mixing layers that can be stacked to model global dependencies efficiently. Graph Neural Network (GNN) mechanisms explicitly construct a graph over channels, enabling both local and global relational modeling; examples include MTGNN, GTS, FourierGNN, and several recent GNN variants.

The Characteristic Perspective introduces six orthogonal attributes that differentiate models beyond the basic strategy: Asymmetry (non‑reciprocal influence), Lag (temporal offset in dependencies), Polarity (sign of influence), Group‑wise (clusters of channels processed together), Dynamism (ability to adapt the channel graph over time), and Multi‑scale (handling patterns at different temporal resolutions). The authors populate a large table (Table 1) indicating which models exhibit each attribute, providing a quick reference for practitioners.

A systematic comparison of CI, CD, and CP highlights trade‑offs: CI excels in computational efficiency and robustness to noise but may underperform when strong cross‑channel signals exist; CD achieves state‑of‑the‑art accuracy at the cost of higher computation and potential over‑fitting; CP offers a balanced solution, especially when dynamic partial connections are employed, allowing models to focus on the most informative channels while discarding noise.

The survey concludes with several forward‑looking research directions. First, automated strategy selection via meta‑learning could relieve practitioners from manually choosing between CI, CD, and CP. Second, self‑supervised or contrastive learning techniques may pre‑train channel interaction modules without labeled data. Third, hybrid architectures that combine the global receptive field of Transformers, the relational expressiveness of GNNs, and the efficiency of MLP mixers are promising for large‑scale, real‑time forecasting. Finally, model compression, quantization, and sparsity‑inducing mechanisms are needed to make CD‑heavy models viable in edge or streaming scenarios.

Overall, the paper makes three key contributions: (1) a unified, up‑to‑date survey of deep learning models for MTSF with an emphasis on channel strategies; (2) a novel three‑level taxonomy that clarifies the relationships among strategy, mechanism, and characteristic dimensions; and (3) a roadmap of open challenges and opportunities that can guide future research. By providing a structured lens through which to view the rapidly expanding literature, the work serves as a valuable reference for both newcomers and seasoned researchers aiming to design more effective multivariate forecasting systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment