EmoBench-M: Benchmarking Emotional Intelligence for Multimodal Large Language Models

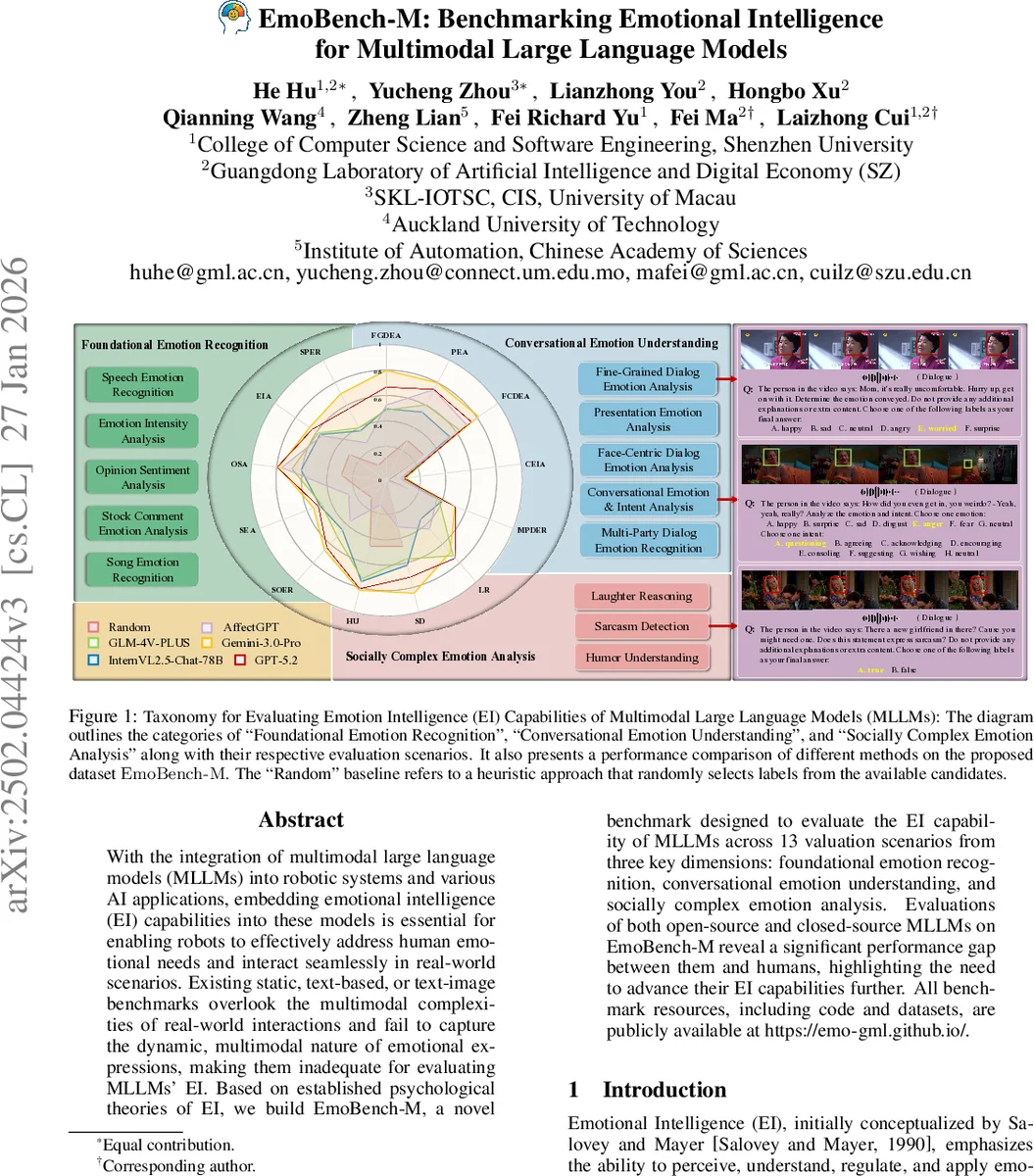

With the integration of Multimodal large language models (MLLMs) into robotic systems and various AI applications, embedding emotional intelligence (EI) capabilities into these models is essential for enabling robots to effectively address human emotional needs and interact seamlessly in real-world scenarios. Existing static, text-based, or text-image benchmarks overlook the multimodal complexities of real-world interactions and fail to capture the dynamic, multimodal nature of emotional expressions, making them inadequate for evaluating MLLMs’ EI. Based on established psychological theories of EI, we build EmoBench-M, a novel benchmark designed to evaluate the EI capability of MLLMs across 13 valuation scenarios from three key dimensions: foundational emotion recognition, conversational emotion understanding, and socially complex emotion analysis. Evaluations of both open-source and closed-source MLLMs on EmoBench-M reveal a significant performance gap between them and humans, highlighting the need to further advance their EI capabilities. All benchmark resources, including code and datasets, are publicly available at https://emo-gml.github.io/.

💡 Research Summary

EmoBench‑M introduces a comprehensive benchmark for assessing emotional intelligence (EI) in multimodal large language models (MLLMs). Recognizing that existing benchmarks are largely text‑only or static text‑image and thus fail to capture the dynamic, multimodal nature of real‑world emotional expression, the authors ground their design in established psychological theories—Salovey and Mayer’s EI framework and Ekman’s basic emotions. The benchmark is organized into three hierarchical dimensions: (1) Foundational Emotion Recognition, (2) Conversational Emotion Understanding, and (3) Socially Complex Emotion Analysis. Across these dimensions, 13 evaluation scenarios are defined, covering tasks such as song and speech emotion recognition, opinion sentiment analysis, fine‑grained dialog emotion analysis, multi‑party dialog emotion recognition, humor understanding, sarcasm detection, and laughter reasoning.

Data are drawn from public multimodal datasets (e.g., RAVDESS, CMU‑MOSEI, MELD) and undergo rigorous quality control: three expert annotators re‑label each sample, and only items where the original label matches the majority‑vote consensus are retained. Class imbalance is mitigated by down‑sampling majority classes to a maximum of 500 samples per task, ensuring balanced evaluation across emotion categories.

Evaluation uses accuracy for classification tasks and an LLM‑based evaluator (Qwen2.5‑72B‑Instruct) for generative tasks. The authors test a diverse set of models—including open‑source Qwen2‑Audio, MiniCPM‑V, InternVL2.5, Video‑LLaMA2, Emotion‑LLaMA, and closed‑source GLM‑4V and Gemini—in a zero‑shot setting. Results reveal a substantial gap between model performance and human baselines (≈90%+), with especially poor results on socially complex tasks (often below 30% accuracy). Scaling model size yields limited gains, indicating current MLLMs struggle with multimodal alignment and high‑level emotional reasoning.

EmoBench‑M thus provides the first systematic, multimodal benchmark for EI, offering clear metrics and diverse scenarios that can guide future research toward more emotionally aware AI, crucial for human‑robot interaction, affective computing services, and therapeutic applications. The paper also suggests future extensions such as cross‑cultural datasets, real‑time interaction testing, and evaluation of emotion regulation and generation capabilities.

Comments & Academic Discussion

Loading comments...

Leave a Comment