AutoGameUI: Constructing High-Fidelity GameUI via Multimodal Correspondence Matching

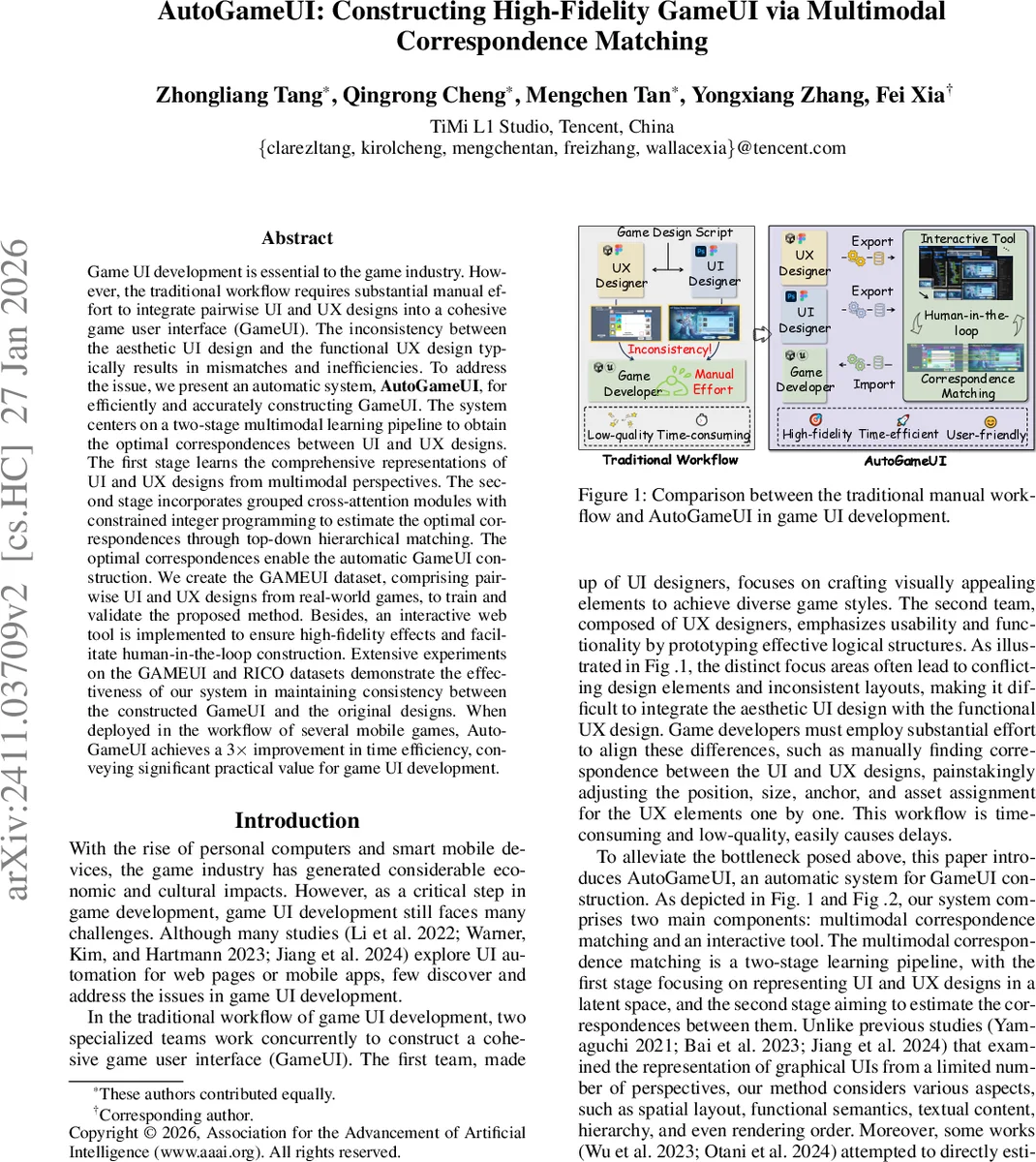

Game UI development is essential to the game industry. However, the traditional workflow requires substantial manual effort to integrate pairwise UI and UX designs into a cohesive game user interface (GameUI). The inconsistency between the aesthetic UI design and the functional UX design typically results in mismatches and inefficiencies. To address the issue, we present an automatic system, AutoGameUI, for efficiently and accurately constructing GameUI. The system centers on a two-stage multimodal learning pipeline to obtain the optimal correspondences between UI and UX designs. The first stage learns comprehensive representations of UI and UX designs from multimodal perspectives. The second stage incorporates grouped cross-attention modules with constrained integer programming to estimate the optimal correspondences through top-down hierarchical matching. The optimal correspondences enable the automatic GameUI construction. We create the GAMEUI dataset, comprising pairwise UI and UX designs from real-world games, to train and validate the proposed method. In addition, we implement an interactive web tool to ensure high-fidelity effects and facilitate human-in-the-loop construction. Extensive experiments on the GAMEUI and RICO datasets demonstrate the effectiveness of our system in maintaining consistency between the constructed GameUI and the original designs. When deployed in the workflow of several mobile games, AutoGameUI achieves a 3$\times$ improvement in time efficiency, demonstrating significant practical value for game UI development.

💡 Research Summary

The paper introduces AutoGameUI, an end‑to‑end system that automatically constructs high‑fidelity game user interfaces (GameUI) by finding optimal correspondences between visual UI designs and functional UX specifications. Traditional game UI pipelines involve two specialized teams: UI designers, who craft aesthetically appealing assets, and UX designers, who define interaction flows and logical structures. Because these teams work in parallel, the resulting UI and UX artifacts often disagree in layout, size, anchors, and asset assignments, forcing developers to spend considerable manual effort aligning them. AutoGameUI addresses this bottleneck with a two‑stage multimodal learning pipeline and an interactive web tool.

Stage 1 – Multimodal Representation Learning

Both UI and UX designs are modeled as rooted trees in which each node carries three primary attributes: 2‑D geometry (position, size), a semantic label (e.g., BUTTON, TEXT, IMAGE), and optional textual content. The authors encode geometry and semantics using a graph neural network (Φ_g) that respects edge‑wise relative geometry (Δg_ij). Textual content is embedded with a pretrained language model (Φ_t). The heterogeneous embeddings are projected into a unified vector and processed by a transformer encoder (Φ_e) equipped with rotary positional encoding, yielding a 512‑dimensional multimodal feature f_k for each node. Three self‑supervised heads (semantic classification, bounding‑box regression, and contrastive text similarity) are trained jointly with weighted losses (λ_s = 0.5, λ_g = 1.0, λ_t = 0.1). This stage produces rich, modality‑balanced representations for all matchable nodes: leaf nodes in UI designs, and both leaf and non‑leaf nodes in UX designs.
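The tree model and the weighted objective described above can be illustrated with a minimal Python sketch. The `Node` class, its field names, and the helper functions are hypothetical and chosen only for illustration. The sketch assumes that the three head losses are combined as a plain weighted sum and that every UX node, including the root, counts as matchable; the summary does not confirm either detail.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Optional, Tuple

# Loss weights quoted in the summary.
LAMBDA_S, LAMBDA_G, LAMBDA_T = 0.5, 1.0, 0.1


@dataclass
class Node:
    """One design-tree node (hypothetical field names)."""
    label: str                               # semantic label, e.g. "BUTTON"
    box: Tuple[float, float, float, float]   # (x, y, width, height)
    text: Optional[str] = None               # optional textual content
    children: List["Node"] = field(default_factory=list)


def iter_nodes(root: Node) -> Iterator[Node]:
    """Yield the root and all of its descendants in pre-order."""
    yield root
    for child in root.children:
        yield from iter_nodes(child)


def matchable_nodes(root: Node, kind: str) -> List[Node]:
    """UI trees contribute leaves only; UX trees contribute every node (assumption)."""
    nodes = list(iter_nodes(root))
    if kind == "ui":
        return [n for n in nodes if not n.children]
    return nodes


def joint_loss(loss_semantic: float, loss_geometry: float, loss_text: float) -> float:
    """Weighted sum of the three head losses (assumed linear combination)."""
    return LAMBDA_S * loss_semantic + LAMBDA_G * loss_geometry + LAMBDA_T * loss_text
```

For a tree with a root, two children, and one grandchild, `matchable_nodes(root, "ui")` returns the two leaves, while `matchable_nodes(root, "ux")` returns all four nodes.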

Stage 2 – Hierarchical Correspondence Matching

Directly computing a full m × n similarity matrix (where m and n are the numbers of matchable nodes) is prohibitively expensive for large interfaces. Instead, the authors propose a divide‑and‑conquer approach based on grouped cross‑attention (GCA). UX nodes are partitioned into groups according to their parent‑child hierarchy; each group consists of a secondary‑level node and all its descendants. For each group G_B^l, a shared cross‑attention module attends from the full UI feature set F_A to G_B^l, producing group‑specific outputs O_{G_B}^l and node‑specific outputs O_{F_A}^l. An MLP head (Θ_m) then maps the collection of O_{F_A}^l into a probability matrix M ∈ ℝ^{L×m}, where L is the number of UX groups. After a sigmoid activation, the entry of M at row l and column i encodes the likelihood that UI node i matches any node in group l. The cost matrix for the assignment problem is defined as C = 1 − Mᵀ.
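The grouping rule and the cost definition can be sketched in plain Python. This is an illustration under stated assumptions, not the authors' implementation: the tree is given as a hypothetical `children` mapping from node id to child ids, "secondary‑level node" is read as a direct child of the root, and the cross‑attention and MLP stages are replaced by a precomputed L × m array of logits.

```python
import math
from typing import Dict, List


def group_by_second_level(children: Dict[str, List[str]], root: str) -> Dict[str, List[str]]:
    """Map each child of the root to itself plus all of its descendants (pre-order)."""
    groups: Dict[str, List[str]] = {}
    for top in children.get(root, []):
        members: List[str] = []
        stack = [top]
        while stack:
            node = stack.pop()
            members.append(node)
            # Push children reversed so they are visited left to right.
            stack.extend(reversed(children.get(node, [])))
        groups[top] = members
    return groups


def cost_from_logits(logits: List[List[float]]) -> List[List[float]]:
    """Given L x m logits, return C = 1 - sigmoid(logits)^T with shape m x L."""
    probs = [[1.0 / (1.0 + math.exp(-z)) for z in row] for row in logits]
    num_groups, num_ui = len(probs), len(probs[0])
    return [[1.0 - probs[l][i] for l in range(num_groups)] for i in range(num_ui)]
```

Each group can then be matched against the UI nodes independently, which is what keeps the problem smaller than a single full m × n comparison.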

The matching problem is formalized as a binary integer program:

$$
\min_{P \in \{0,1\}^{m \times n}} \; \sum_{i,i'} (P \odot C)_{ii'} + \Omega(P, C)
\quad \text{subject to} \quad P\,\mathbf{1}_n = r, \quad P^{\top}\mathbf{1}_m = c,
$$

where P is the assignment matrix, the element‑wise product P ⊙ C is summed over all entries to give the total assignment cost, r and c encode row/column feasibility, and Ω is a regularization term penalizing hierarchical or rendering‑order violations. Ω is expressed as

$$
\Omega(P, C) = \tau \sum_{i,i',j,j'} P_{ii'}\, P_{jj'} \,\bigl(C_{ii'} + C_{jj'}\bigr),
$$

with τ controlling penalty strength. Hierarchical consistency (ancestor‑descendant relationships) and rendering order (overlap precedence) are enforced through edge attributes Δh_ij and Δr_ij, ensuring that matched pairs preserve the original structural constraints.

A threshold σ = 0.5 filters out low‑confidence matches, and unmatchable nodes are represented by zero vectors during training. The integer programming solver then yields the optimal binary assignment P that respects both the learned similarity scores and the structural constraints.
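To make the assignment step concrete, the following sketch solves a tiny instance by exhaustive search and then applies the confidence threshold. It is a stand‑in, not the paper's solver: it omits the regularizer Ω and the structural constraints, assumes each UI node is assigned to a distinct column with m ≤ n, and treats 1 − C as the match probability. The function name `brute_force_match` is hypothetical.

```python
from itertools import permutations
from typing import Dict, List

SIGMA = 0.5  # confidence threshold quoted in the summary


def brute_force_match(cost: List[List[float]], sigma: float = SIGMA) -> Dict[int, int]:
    """Return {ui_index: ux_index} for the min-cost one-to-one assignment.

    Enumerates every injective assignment, so it is only usable for toy sizes.
    Matches whose probability (1 - cost) falls below sigma are dropped.
    """
    m, n = len(cost), len(cost[0])
    if m > n:
        raise ValueError("this sketch assumes m <= n")
    best = min(
        permutations(range(n), m),
        key=lambda cols: sum(cost[i][j] for i, j in enumerate(cols)),
    )
    return {i: j for i, j in enumerate(best) if 1.0 - cost[i][j] >= sigma}
```

With `cost = [[0.1, 0.9], [0.2, 0.8]]`, the cheapest assignment pairs row 0 with column 0 and row 1 with column 1, but the second pair has probability 0.2 and is filtered out, leaving only `{0: 0}`. A production system would replace the enumeration with an integer programming solver that also handles Ω.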

Universal Data Protocol and Interactive Tool

To enable cross‑platform usage, the authors define a universal JSON‑like protocol that captures node attributes, hierarchy, and rendering order. This protocol allows seamless import/export between design tools (e.g., Figma) and game engines (Unity, Unreal). The accompanying web‑based interactive tool visualizes the automatically inferred correspondences, displays matching probabilities, and lets designers manually correct mismatches. The tool supports drag‑and‑drop, real‑time feedback, and re‑execution of the matching pipeline, embodying a human‑in‑the‑loop workflow that combines the speed of automation with the precision of expert oversight.
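The summary does not give the protocol's schema, so the following sketch only illustrates what a JSON‑like record carrying node attributes, hierarchy, and rendering order might look like. Every field name here (`id`, `label`, `box`, `text`, `render_order`, `children`) is an assumption made for illustration, not part of the published protocol.

```python
import json

# Hypothetical node record: attributes, hierarchy (children), and rendering order.
start_button = {
    "id": "btn_start",
    "label": "BUTTON",
    "box": {"x": 120, "y": 640, "width": 240, "height": 80},
    "text": "Start",
    "render_order": 1,
    "children": [],
}

screen = {
    "id": "root",
    "label": "PANEL",
    "box": {"x": 0, "y": 0, "width": 480, "height": 800},
    "text": None,
    "render_order": 0,
    "children": [start_button],
}


def dump(tree: dict) -> str:
    """Serialize a design tree to JSON text for exchange between tools."""
    return json.dumps(tree, indent=2, sort_keys=True)


def load(payload: str) -> dict:
    """Parse JSON text back into a nested dictionary."""
    return json.loads(payload)
```

Because the record is plain JSON, `load(dump(screen))` reproduces the original structure, which is the property a cross‑tool exchange format needs.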

Dataset – GAMEUI

A new dataset, GAMEUI, is constructed from real mobile games. It contains 12,000 paired UI/UX designs, each annotated at the node level with geometry, semantics, text, hierarchy, and rendering order. The dataset is split into training, validation, and test sets and is released alongside the RICO dataset for broader benchmarking.

Experimental Evaluation

Quantitative results on GAMEUI and RICO demonstrate that AutoGameUI outperforms prior methods such as LayoutGMN and LayoutBlend. Key metrics include:

- Top‑1 matching accuracy: 92.3 % (vs. 81.7 % and 84.5 % for baselines).

- Hierarchical consistency preservation: 95 % (≈10 % gain).

- Rendering‑order fidelity: 93 % (≈10 % gain).

- Inference time: 0.84 s for interfaces with ~200 nodes (≈4× faster than full‑matrix integer programming).
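The summary does not define how top‑1 matching accuracy is computed. One plausible reading, sketched below as an assumption, is the fraction of UI nodes whose predicted UX match equals the ground‑truth match, with unmatchable nodes represented by `None`. The function and argument names are hypothetical.

```python
from typing import Dict, Optional


def top1_accuracy(
    predicted: Dict[str, Optional[str]],
    truth: Dict[str, Optional[str]],
) -> float:
    """Fraction of ground-truth UI nodes whose predicted UX match is correct.

    A UI node missing from `predicted` is treated as predicted-unmatched (None),
    so it counts as correct only when the ground truth is also None.
    """
    if not truth:
        raise ValueError("truth must not be empty")
    correct = sum(1 for ui_id, ux_id in truth.items() if predicted.get(ui_id) == ux_id)
    return correct / len(truth)
```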

A user study on three commercial mobile games reports a threefold reduction in UI construction time and a designer satisfaction score of 4.6/5, confirming practical value.

Contributions and Impact

- An automatic, multimodal pipeline that bridges aesthetic UI and functional UX designs, dramatically accelerating game UI production.

- A novel grouped cross‑attention mechanism that reduces correspondence complexity while preserving hierarchical information.

- Integration of constrained integer programming to enforce structural constraints, ensuring that generated GameUIs are both visually faithful and functionally correct.

- An open‑source dataset (GAMEUI) and a universal data protocol that facilitate reproducibility and cross‑tool adoption.

- A human‑in‑the‑loop web interface that balances automation with designer control.

Future Directions

The authors suggest extending the framework to incorporate image texture and color cues (currently omitted to avoid noise), exploring 3D UI/UX correspondence for AR/VR games, and applying reinforcement learning to refine the matching policy based on downstream gameplay metrics.

In summary, AutoGameUI represents a significant step toward fully automated, high‑fidelity game UI generation, combining deep multimodal representation learning, efficient hierarchical matching, and rigorous constraint handling to deliver both speed and quality improvements for the game development pipeline.
