SituFont: A Just-in-Time Adaptive Intervention System for Enhancing Mobile Readability in Situational Visual Impairments

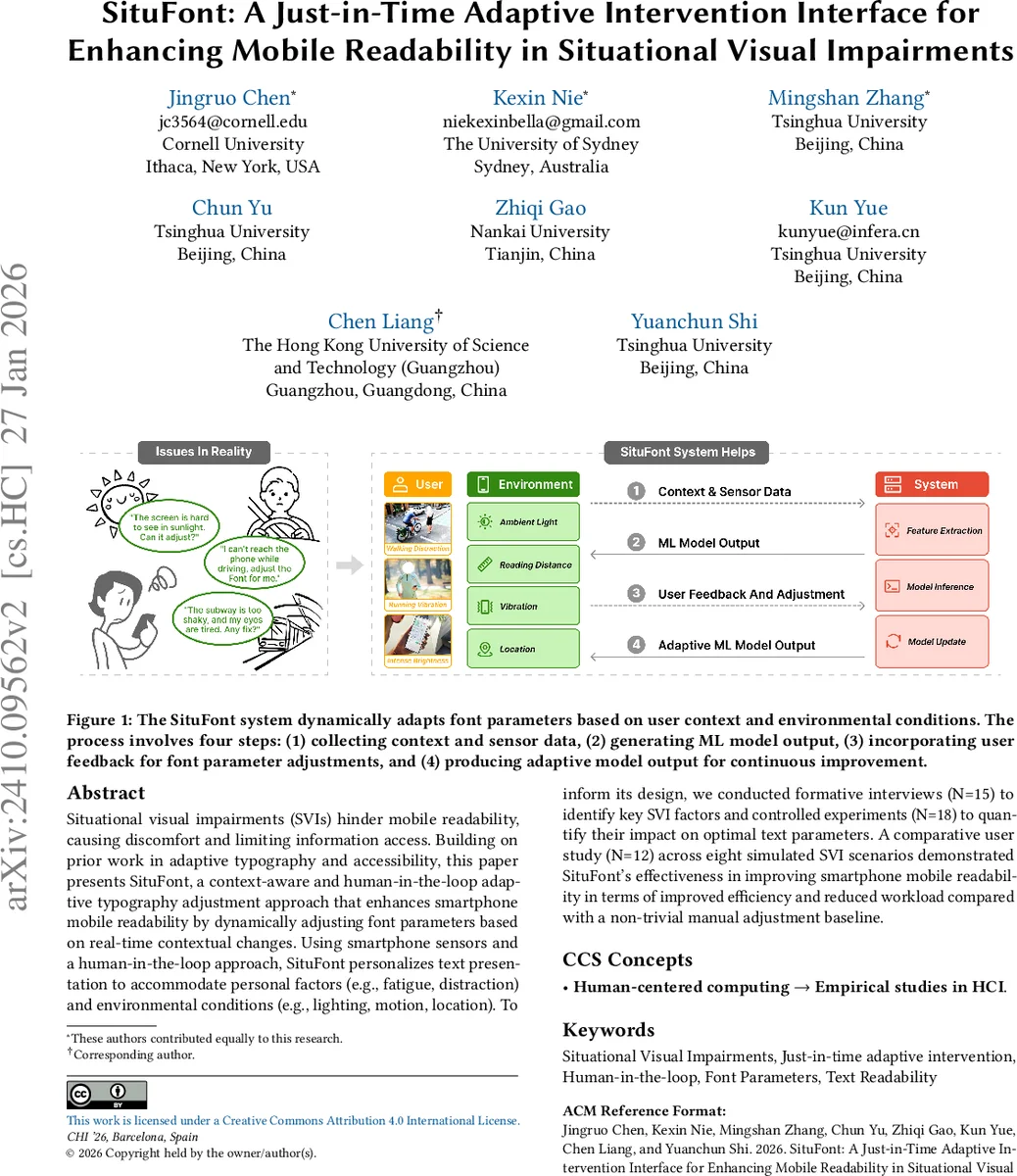

Situational visual impairments (SVIs) hinder mobile readability, causing discomfort and limiting information access. Building on prior work in adaptive typography and accessibility, this paper presents SituFont, a context-aware, human-in-the-loop adaptive typography system that enhances smartphone readability by dynamically adjusting font parameters in response to real-time contextual changes. Using smartphone sensors and user feedback, SituFont personalizes text presentation to accommodate personal factors (e.g., fatigue, distraction) and environmental conditions (e.g., lighting, motion, location). To inform its design, we conducted formative interviews (N=15) to identify key SVI factors and controlled experiments (N=18) to quantify their impact on optimal text parameters. A comparative user study (N=12) across eight simulated SVI scenarios demonstrated SituFont’s effectiveness in improving smartphone readability, with higher efficiency and lower workload than a non-trivial manual adjustment baseline.

💡 Research Summary

SituFont is a context‑aware, human‑in‑the‑loop adaptive typography system designed to improve mobile readability when users experience situational visual impairments (SVIs) such as low lighting, motion, glare, or fatigue. The authors begin by highlighting that mobile reading occurs in highly dynamic environments where multiple factors can simultaneously degrade legibility, and that existing solutions—manual font adjustments, static accessibility settings, or rule‑based automatic changes—are insufficient because they either place a constant burden on users or fail to consider the interaction of several contextual variables.

To ground the design, the authors conducted three empirical studies. Study 1 involved semi‑structured interviews with 15 participants (students and professionals with a range of visual conditions). Thematic analysis revealed three broad categories of SVI‑inducing factors: environmental (e.g., ambient light, vibration, viewing distance), personal (e.g., fatigue, divided attention), and informational (e.g., text density). Participants reported that adjusting font size, weight, character spacing, and line spacing was the most common coping strategy, but they found repeated manual adjustments disruptive.

Study 2 was a controlled laboratory experiment with 18 participants. The researchers manipulated three key environmental variables—ambient illumination, device vibration (captured via accelerometer), and visual clutter—and asked participants to select preferred font parameters for each condition. Quantitative results showed systematic trends: low‑light conditions prompted increases in font size (≈15 %–20 %) and weight; high vibration encouraged wider character spacing; and combined low light with high motion led to a simultaneous increase in line spacing. These findings supplied concrete parameter‑adjustment rules that could be learned by a machine‑learning model.
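The qualitative trends from Study 2 can be illustrated as a small rule set. The following is a minimal sketch, not the authors' implementation: the `FontParams` fields, the default values, and every increment except the font-size factor are hypothetical placeholders, and the 1.15 factor is simply the lower end of the reported 15 %–20 % range.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class FontParams:
    # Hypothetical defaults chosen for illustration only.
    size_pt: float = 16.0
    weight: int = 400
    char_spacing_em: float = 0.0
    line_spacing: float = 1.5


def adjust_for_context(base: FontParams, low_light: bool, high_vibration: bool) -> FontParams:
    """Apply the direction of each Study 2 trend to a base configuration."""
    p = base
    if low_light:
        # Low light: larger and heavier text (size factor from the reported range;
        # the +100 weight step is an assumption).
        p = replace(p, size_pt=p.size_pt * 1.15, weight=p.weight + 100)
    if high_vibration:
        # High vibration: wider character spacing (increment is an assumption).
        p = replace(p, char_spacing_em=p.char_spacing_em + 0.05)
    if low_light and high_vibration:
        # Combined condition: line spacing increases as well (increment is an assumption).
        p = replace(p, line_spacing=p.line_spacing + 0.2)
    return p
```

For example, `adjust_for_context(FontParams(), low_light=True, high_vibration=True)` returns a configuration in which all four parameters are increased relative to the defaults.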

The core of SituFont consists of (1) a hierarchical “label‑tree” that encodes the current context (e.g., “indoor‑low‑light‑medium‑vibration”), populated from real‑time sensor streams (ambient light sensor, accelerometer, GPS, microphone) and editable by the user; and (2) a predictive model (implemented as a Gradient Boosting Regressor) that maps the label representation to optimal values for four font parameters: size, weight, character spacing, and line spacing. Crucially, the system keeps the user in the loop: after each automatic adjustment, users can rate the readability (“good / okay / bad”) and optionally fine‑tune the parameters via a lightweight UI. These ratings and adjustments are used to refine the model online, enabling incremental personalization while preserving transparency.
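The two components described above, context labelling and feedback-driven refinement, can be sketched as follows. This is an illustration under stated assumptions rather than the system's actual code: the sensor thresholds, the function and class names, and the learning rate are hypothetical, and a simple per-label moving average stands in for the Gradient Boosting Regressor so that the example needs only the standard library.

```python
from dataclasses import dataclass, field


def context_label(lux: float, accel_rms: float, indoor: bool) -> str:
    """Build a flat context label from sensor readings (thresholds are assumptions)."""
    place = "indoor" if indoor else "outdoor"
    light = "low-light" if lux < 50 else "normal-light" if lux < 1000 else "bright-light"
    if accel_rms < 0.5:
        motion = "low-vibration"
    elif accel_rms < 2.0:
        motion = "medium-vibration"
    else:
        motion = "high-vibration"
    return f"{place}-{light}-{motion}"


@dataclass
class PersonalizedFontModel:
    """Stand-in for the predictive model: one parameter set per context label."""
    defaults: dict
    learning_rate: float = 0.5  # hypothetical step size for online refinement
    per_label: dict = field(default_factory=dict)

    def predict(self, label: str) -> dict:
        # Fall back to the population-level defaults for unseen contexts.
        return dict(self.per_label.get(label, self.defaults))

    def update(self, label: str, user_choice: dict) -> None:
        # Move the stored parameters part of the way toward the user's fine-tuned values.
        current = self.predict(label)
        self.per_label[label] = {
            key: current[key] + self.learning_rate * (user_choice[key] - current[key])
            for key in current
        }
```

A typical cycle would call `context_label(...)` on fresh sensor readings, render text with `predict(label)`, and call `update(label, user_choice)` whenever the user fine-tunes the result, so that later predictions for that context drift toward the user's preference.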

Evaluation (Study 3) compared SituFont against a “static optimal” baseline derived from Study 2, in which participants manually set a single best‑fit font configuration for each of eight simulated SVI scenarios (e.g., walking outdoors in bright sunlight, commuting in a moving vehicle, reading in a crowded subway). Twelve participants performed reading tasks while objective metrics (reading speed, error rate) and subjective measures (NASA‑TLX workload, perceived readability) were recorded. SituFont yielded a 22 % increase in reading speed, a 12 % reduction in errors, and a 15 % drop in workload scores, all statistically significant. Participants also reported that the system’s automatic, context‑driven adjustments reduced interruptions to their reading flow and lessened visual fatigue.

The authors claim three primary contributions: (1) a mixed‑methods empirical characterization of how environmental, personal, and informational factors jointly affect mobile reading under SVIs; (2) the design and implementation of a population‑informed, human‑in‑the‑loop JIT adaptive typography framework that supports continuous personalization; and (3) design guidelines emphasizing the timing, activation triggers, and seamless integration of adaptive interventions into the reading workflow.

Limitations are acknowledged. The prototype focuses on Chinese characters, which have dense visual structure; extending the approach to Latin alphabets or bidirectional scripts may require different parameter sensitivities. Privacy and battery‑consumption concerns associated with continuous sensor sampling were not fully explored, and long‑term user acceptance remains an open question. Future work is outlined to (a) test cross‑lingual generalization, (b) incorporate on‑device privacy‑preserving learning, and (c) develop explanatory interfaces that increase user trust in automated adjustments.

In sum, SituFont demonstrates that a sensor‑driven, user‑feedback‑enhanced adaptive typography system can meaningfully mitigate situational visual impairments on smartphones, delivering measurable gains in efficiency and user comfort while maintaining user control over the adaptation process.
