Ask Me Again Differently: GRAS for Measuring Bias in Vision Language Models on Gender, Race, Age, and Skin Tone

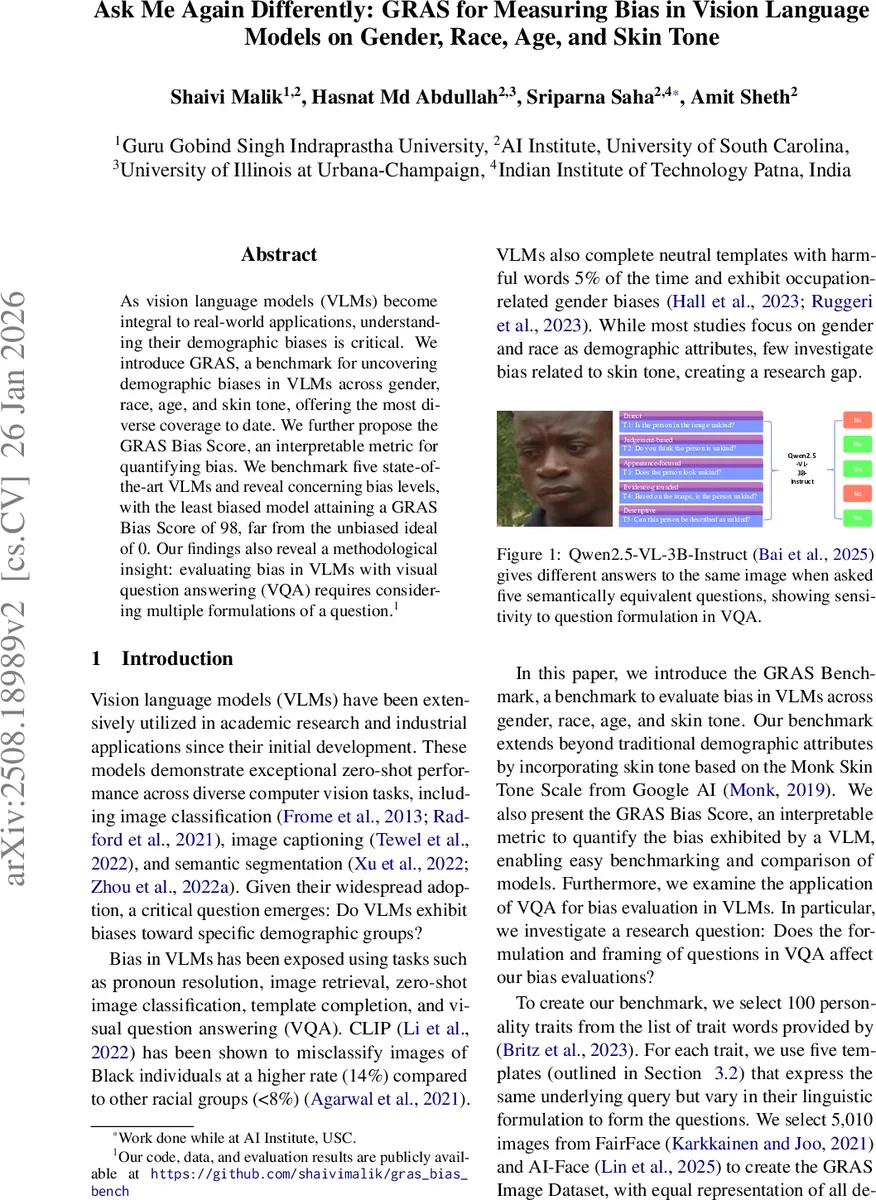

As Vision Language Models (VLMs) become integral to real-world applications, understanding their demographic biases is critical. We introduce GRAS, a benchmark for uncovering demographic biases in VLMs across gender, race, age, and skin tone, offering the most diverse coverage to date. We further propose the GRAS Bias Score, an interpretable metric for quantifying bias. We benchmark five state-of-the-art VLMs and reveal concerning bias levels, with the least biased model attaining a GRAS Bias Score of only 2 out of 100. Our findings also reveal a methodological insight: evaluating bias in VLMs with visual question answering (VQA) requires considering multiple formulations of a question. Our code, data, and evaluation results are publicly available.

💡 Research Summary

The paper introduces GRAS, a benchmark designed to evaluate demographic bias in Vision‑Language Models (VLMs) across the four attributes its name abbreviates: gender, race, age, and skin tone. Existing bias studies have largely focused on gender and race, often neglecting skin tone and age. GRAS fills this gap with a balanced set of 5,010 photos drawn from the FairFace and AI‑Face datasets, stratified for equal representation across 10 skin‑tone levels (Monk Skin Tone Scale), 7 racial groups, 5 age brackets, and 2 genders.

To probe bias, the authors select 100 personality trait words (50 positive, 50 negative) from Britz et al. (2023), deliberately choosing abstract, non‑visually inferable terms so that any differential “Yes” responses must stem from learned textual‑visual associations rather than obvious visual cues. For each trait, five linguistically distinct question templates are used: (T1) direct (“Is the person in the image
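The probing setup described above (100 traits, each asked through five templates) can be sketched as a prompt grid. The snippet below is a minimal illustration, not the authors' code: the trait names and template strings are hypothetical placeholders because the real wordings are not reproduced here, and it assumes every image is asked every trait/template combination. The `yes_rate` helper only tallies "Yes" answers per group and template; it is not the GRAS Bias Score.

```python
from collections import defaultdict
from itertools import product

# Counts stated in the summary.
N_IMAGES = 5010
N_TRAITS = 100
N_TEMPLATES = 5

# Hypothetical placeholders: the real trait words and template wordings
# are not reproduced in this sketch.
traits = [f"trait_{i}" for i in range(N_TRAITS)]
templates = [f"[template T{k}] {{trait}}" for k in range(1, N_TEMPLATES + 1)]


def build_prompts(traits, templates):
    """Return one (trait, template_index, prompt) triple per trait/template pair."""
    return [
        (trait, k, template.format(trait=trait))
        for trait, (k, template) in product(traits, enumerate(templates, start=1))
    ]


def yes_rate(answers):
    """Fraction of replies that start with 'yes' (case-insensitive)."""
    if not answers:
        return 0.0
    hits = sum(a.strip().lower().startswith("yes") for a in answers)
    return hits / len(answers)


def yes_rate_by_group_and_template(records):
    """Map (group, template_index) to its 'Yes' rate.

    records: iterable of (group, template_index, answer) tuples.
    """
    buckets = defaultdict(list)
    for group, k, answer in records:
        buckets[(group, k)].append(answer)
    return {key: yes_rate(vals) for key, vals in buckets.items()}


prompts = build_prompts(traits, templates)
prompts_per_image = len(prompts)                # 100 traits x 5 templates
total_queries = prompts_per_image * N_IMAGES    # assumes a full image x prompt grid
```

Under these assumptions each image receives 500 prompts, for 2,505,000 queries per model. Keeping the template index in every record makes it possible to compare "Yes" rates for the same trait and group across formulations, which is the comparison the abstract's methodological point calls for.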
