Collab: Fostering Critical Identification of Deepfake Videos on Social Media via Synergistic Annotation

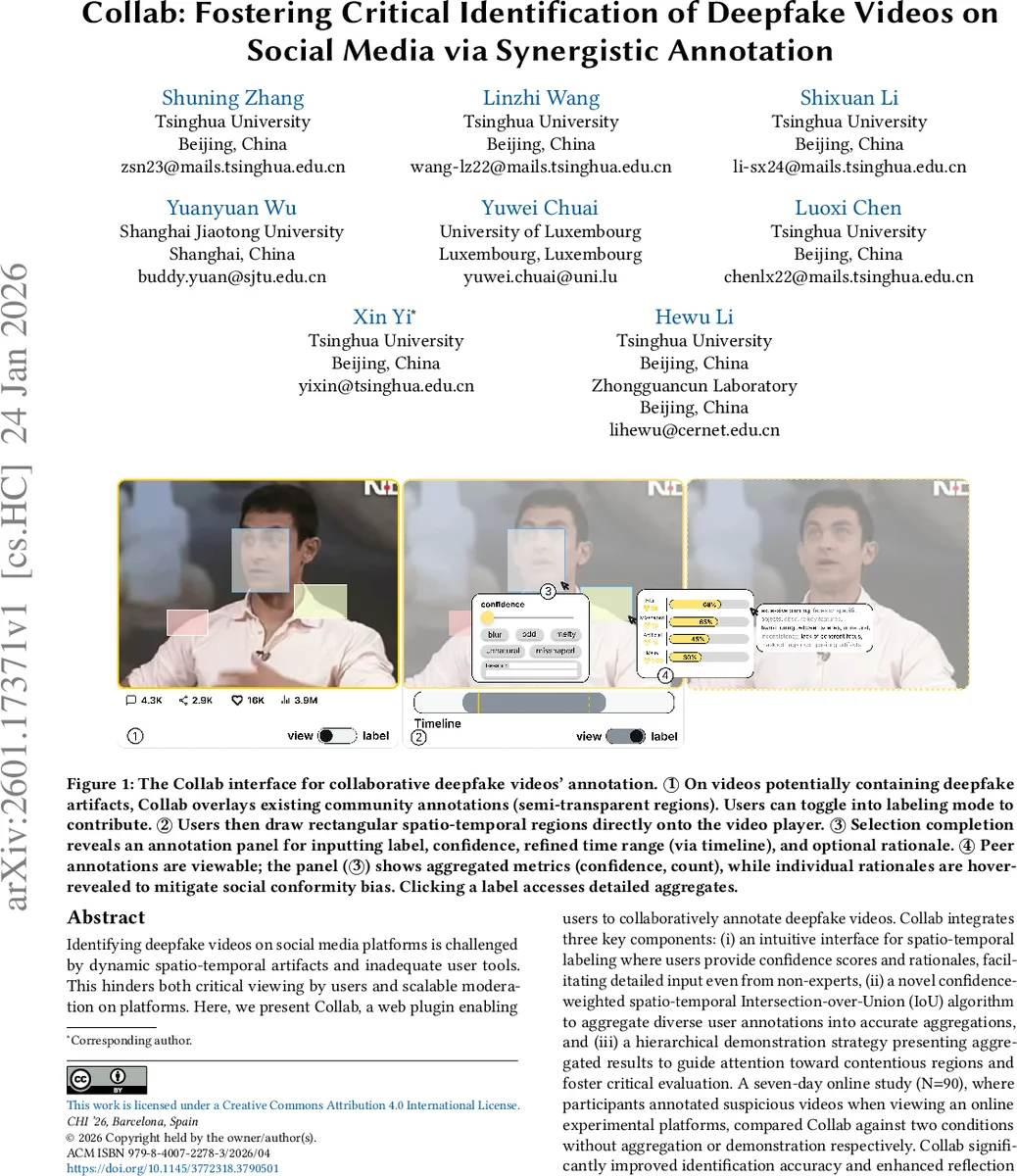

Identifying deepfake videos on social media platforms is challenged by dynamic spatio-temporal artifacts and inadequate user tools. This hinders both critical viewing by users and scalable moderation on platforms. Here, we present Collab, a web plugin enabling users to collaboratively annotate deepfake videos. Collab integrates three key components: (i) an intuitive interface for spatio-temporal labeling where users provide confidence scores and rationales, facilitating detailed input even from non-experts, (ii) a novel confidence-weighted spatio-temporal Intersection-over-Union (IoU) algorithm to aggregate diverse user annotations into accurate aggregations, and (iii) a hierarchical demonstration strategy presenting aggregated results to guide attention toward contentious regions and foster critical evaluation. A seven-day online study (N=90), where participants annotated suspicious videos when viewing an online experimental platforms, compared Collab against two conditions without aggregation or demonstration respectively. Collab significantly improved identification accuracy and enhanced reflection compared to non-demonstration condition, while outperforming non-aggregation condition for its novelty and effectiveness.

💡 Research Summary

The paper presents Collab, a web‑based plugin that enables crowdsourced, fine‑grained annotation of deepfake videos on social media. Recognizing that deepfakes contain dynamic spatio‑temporal artifacts that are difficult for both automated detectors and casual users to spot, the authors design a three‑stage system: (1) Labeling, where users draw rectangular spatio‑temporal boxes directly onto the video player, assign a predefined or custom label (e.g., “real” or “fake”), provide a confidence score (0‑100), and optionally write a short rationale; (2) Aggregation, which fuses the many individual annotations using a novel confidence‑weighted 3‑D Intersection‑over‑Union (IoU) algorithm. Each annotation is represented as a 3‑D bounding volume (time + 2‑D space). Annotations whose IoU exceeds a threshold are clustered; within each cluster, user confidence and a pre‑computed reliability metric are multiplied to produce a weighted average confidence, and the label with the highest weighted support becomes the cluster’s representative label. This approach preserves minority but high‑confidence inputs while preventing noisy contributions from dominating. (3) Demonstration, a hierarchical visualization that returns the aggregated results to users. Initially, only a semi‑transparent color overlay and an aggregated confidence value are shown. When a user hovers over a region, detailed information—dominant label, aggregated confidence, and a concise summary of rationales—appears in a tooltip. Individual peer rationales are revealed only on hover, deliberately limiting social conformity bias.

The design is grounded in Collective Intelligence (CI) and Social Influence (SI) theories. CI informs the labeling and aggregation stages, emphasizing diversity, independence, and motivation. SI guides the demonstration stage, controlling normative, informational, and comparative influences through selective visibility, density, and social cues. The authors articulate four high‑level goals: leveraging human temporal reasoning, mitigating bias, optimizing workflow, and fostering critical engagement.

A seven‑day online user study with 90 participants compared three conditions: (1) Collab (full system), (2) No Agg (annotation + demonstration but without aggregation), and (3) No Label (annotation + aggregation but without demonstration). Participants annotated 12 videos (mix of authentic and deepfake content) on a custom experimental platform. Primary outcomes included detection accuracy, reflection scores, perceived smoothness, novelty, and effectiveness. Collab achieved an average accuracy of 88.1 %, significantly higher than No Agg’s 79.7 % (p < 0.01). Subjective measures also favored Collab across all dimensions, with participants reporting higher critical thinking, reduced conformity, and greater satisfaction with the hierarchical feedback. Qualitative feedback highlighted that the spatio‑temporal boxes helped users pinpoint manipulation zones, while the aggregated rationales prompted deeper contemplation rather than blind acceptance of peer judgments.

The paper contributes: (i) a theoretically‑driven design framework that explicitly links labeling, aggregation, and demonstration; (ii) the Collab system itself, featuring an intuitive spatio‑temporal UI, a confidence‑weighted 3‑D IoU aggregation algorithm, and a bias‑aware hierarchical visualization; and (iii) empirical evidence that such a system can substantially improve deepfake detection accuracy and promote reflective, critical engagement compared to simpler crowdsourcing approaches.

Limitations are acknowledged. The label taxonomy is binary, restricting nuanced classifications (e.g., “partially manipulated”). User reliability is modeled simply via past accuracy, lacking a dynamic, longitudinal trust model that could adapt to adversarial behavior. The study was conducted in a controlled lab‑like environment; real‑world deployment on large platforms may encounter scalability, latency, and privacy challenges.

Future work proposes extending the label set, integrating continuous reliability learning (e.g., Bayesian trust models), exploring multimodal cues (audio, facial micro‑expressions), and testing Collab in live social media ecosystems to assess its impact on misinformation spread at scale. Overall, Collab demonstrates that a well‑designed human‑centric collaborative pipeline can meaningfully augment deepfake detection and empower users to become more discerning consumers of video content.

Comments & Academic Discussion

Loading comments...

Leave a Comment