ECCO: Evidence-Driven Causal Reasoning for Compiler Optimization

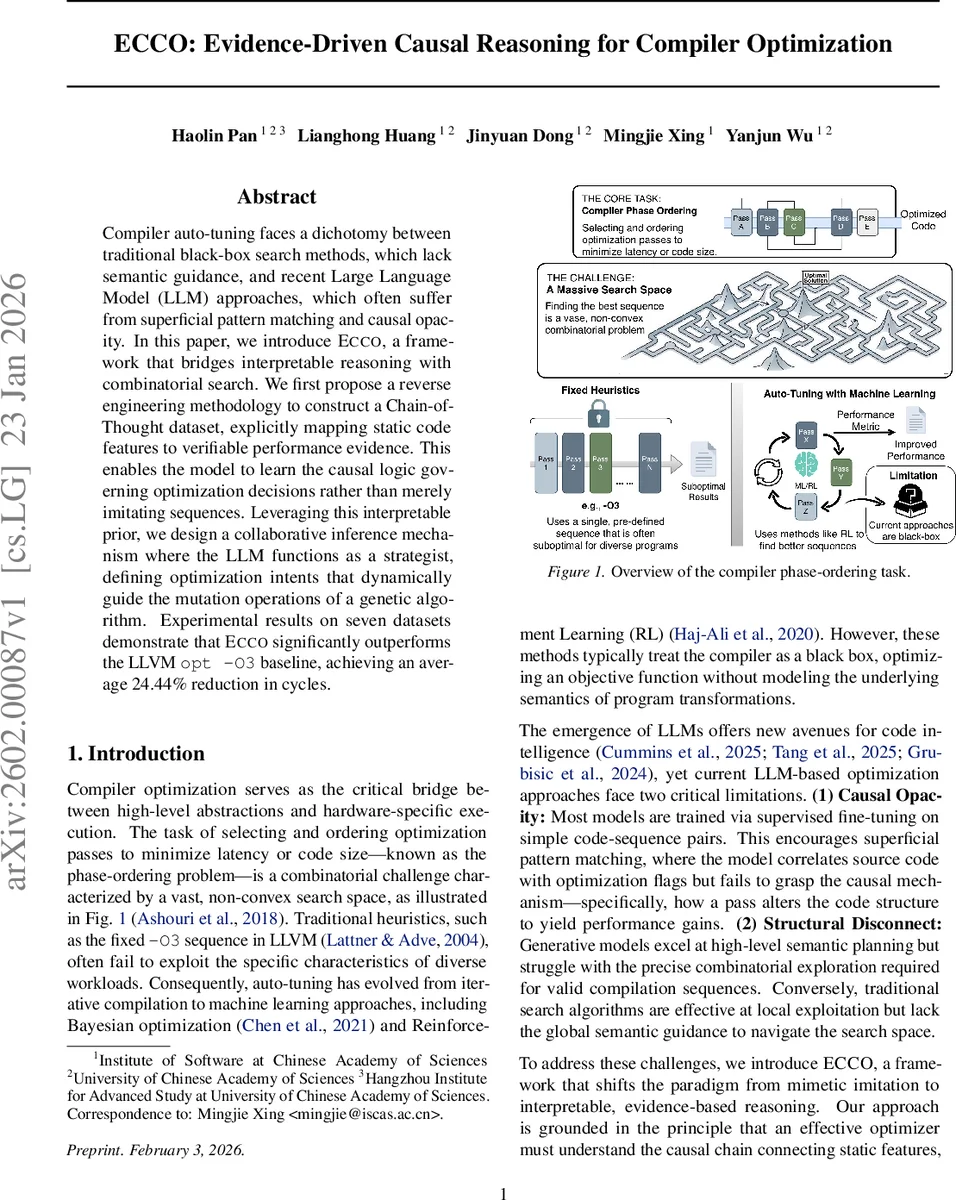

Compiler auto-tuning faces a dichotomy between traditional black-box search methods, which lack semantic guidance, and recent Large Language Model (LLM) approaches, which often suffer from superficial pattern matching and causal opacity. In this paper, we introduce ECCO, a framework that bridges interpretable reasoning with combinatorial search. We first propose a reverse engineering methodology to construct a Chain-of-Thought dataset, explicitly mapping static code features to verifiable performance evidence. This enables the model to learn the causal logic governing optimization decisions rather than merely imitating sequences. Leveraging this interpretable prior, we design a collaborative inference mechanism where the LLM functions as a strategist, defining optimization intents that dynamically guide the mutation operations of a genetic algorithm. Experimental results on seven datasets demonstrate that ECCO significantly outperforms the LLVM opt -O3 baseline, achieving an average 24.44% reduction in cycles.

💡 Research Summary

The paper tackles two fundamental shortcomings of current compiler auto‑tuning: (1) traditional black‑box search methods ignore program semantics, and (2) recent large language model (LLM) approaches rely on superficial pattern matching, offering little insight into the causal relationship between a pass and its performance impact. To bridge this gap, the authors introduce ECCO – Evidence‑Driven Causal Reasoning for Compiler Optimization – a three‑stage framework that couples interpretable reasoning with combinatorial search.

First, they construct a Chain‑of‑Thought (CoT) dataset by reverse‑engineering high‑performance optimization trajectories. Using an iterative greedy pruning algorithm, raw pass sequences generated by a state‑of‑the‑art tuner (CFSA‑T) are trimmed from an average length of 18.5 passes down to 4.5, preserving only the critical passes that actually drive performance gains. For each retained pass, three evidence types are recorded: (i) structural evidence (δ_struct) – the IR diff before and after the pass, (ii) feature evidence (δ_feat) – high‑dimensional static feature deltas extracted via Autophase, and (iii) performance evidence (g_t) – the marginal cycle reduction. Additionally, the authors perform a synergy analysis by swapping adjacent passes to label ordering constraints.
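The pruning, marginal-gain, and swap steps described above can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: the `cost` callable stands in for "compile with this pass sequence and measure cycles", and `toy_cost` with its pass effects is invented purely so the example runs without LLVM.

```python
from typing import Callable, List, Sequence, Tuple

# Assumption: cost(passes) returns measured cycles for a pass sequence.
CostFn = Callable[[Sequence[str]], float]


def greedy_prune(passes: Sequence[str], cost: CostFn, tol: float = 0.0) -> List[str]:
    """Repeatedly drop any single pass whose removal does not worsen the cost."""
    current = list(passes)
    best = cost(current)
    changed = True
    while changed:
        changed = False
        for i in range(len(current)):
            candidate = current[:i] + current[i + 1:]
            c = cost(candidate)
            if c <= best + tol:  # pass i is not needed for the gain
                current, best = candidate, c
                changed = True
                break  # restart the scan on the shorter sequence
    return current


def marginal_gains(passes: Sequence[str], cost: CostFn) -> List[float]:
    """g_t: cycle reduction obtained by appending pass t to the prefix before it."""
    gains, prev = [], cost([])
    for t in range(len(passes)):
        cur = cost(passes[: t + 1])
        gains.append(prev - cur)
        prev = cur
    return gains


def ordering_constraints(passes: Sequence[str], cost: CostFn) -> List[Tuple[str, str]]:
    """Label (a, b) as order-sensitive when swapping adjacent a, b increases cost."""
    base = cost(passes)
    constraints = []
    for i in range(len(passes) - 1):
        swapped = list(passes)
        swapped[i], swapped[i + 1] = swapped[i + 1], swapped[i]
        if cost(swapped) > base:
            constraints.append((passes[i], passes[i + 1]))
    return constraints


def toy_cost(passes: Sequence[str]) -> float:
    """Hypothetical cost model: loop-unroll only pays off after mem2reg."""
    cycles, applied = 100.0, set()
    for p in passes:
        if p == "mem2reg" and p not in applied:
            cycles -= 20.0
        elif p == "loop-unroll" and "mem2reg" in applied and p not in applied:
            cycles -= 10.0
        applied.add(p)
    return cycles


raw = ["instcombine", "mem2reg", "dce", "loop-unroll", "gvn"]
critical = greedy_prune(raw, toy_cost)
print(critical)
print(marginal_gains(critical, toy_cost))
print(ordering_constraints(critical, toy_cost))
```

In a real pipeline each `cost` call is a compile-and-measure step, so the quadratic number of evaluations in `greedy_prune` would be the dominant expense; caching results per sequence is one plausible mitigation.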

Because dynamic evidence is unavailable at inference time, the authors employ a “simulated predictive reasoning” step. A teacher model (Claude‑4.5‑Sonnet) is given the full evidence packet and asked to generate a rationale R that predicts the observed deltas and gains solely from the initial static feature vector Φ_initial. The resulting (Φ_initial, R ⊕ S_opt) pairs form a CoT training set that forces the target LLM (Qwen2.5‑Instruct) to internalize a causal simulator: during inference it must infer the intermediate states and performance improvements from static features alone.
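One way to picture the construction of a (Φ_initial, R ⊕ S_opt) pair is the sketch below. It is a hedged illustration only: the class names, the feature keys, the prompt wording, and the `prompt`/`completion` layout are all assumptions, and the teacher is passed in as a plain callable rather than a real model API. The point it shows is the asymmetry: the teacher sees the full evidence, while the student's input contains only the initial static features.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class PassEvidence:
    name: str                      # pass kept after pruning
    ir_diff: str                   # structural evidence (delta_struct)
    feature_delta: Dict[str, int]  # feature evidence (delta_feat)
    gain: float                    # performance evidence (g_t), in cycles


@dataclass
class EvidencePacket:
    phi_initial: Dict[str, int]    # static features of the unoptimized program
    steps: List[PassEvidence]      # pruned sequence S_opt with its evidence


# Assumption: the teacher is any function mapping a prompt string to a rationale.
Teacher = Callable[[str], str]


def teacher_prompt(packet: EvidencePacket) -> str:
    """Full evidence packet, visible to the teacher only."""
    lines = [f"Initial features: {packet.phi_initial}"]
    for t, s in enumerate(packet.steps, 1):
        lines.append(
            f"Step {t}: pass={s.name} gain={s.gain} "
            f"feature_delta={s.feature_delta}\n{s.ir_diff}"
        )
    lines.append("Explain, from the initial features alone, why these passes help.")
    return "\n".join(lines)


def build_example(packet: EvidencePacket, teacher: Teacher) -> Dict[str, str]:
    """Student input is Phi_initial only; target is rationale R followed by S_opt."""
    rationale = teacher(teacher_prompt(packet))
    s_opt = " ".join(s.name for s in packet.steps)
    return {
        "prompt": f"Features: {packet.phi_initial}",
        "completion": f"{rationale}\n{s_opt}",  # R concatenated with S_opt
    }


# Usage with a stub teacher and made-up evidence values.
packet = EvidencePacket(
    phi_initial={"num_loads": 12},
    steps=[PassEvidence("mem2reg", "- %1 = alloca i32", {"num_loads": -8}, 20.0)],
)
example = build_example(packet, lambda prompt: "Many loads suggest promotable stack slots.")
print(example["prompt"])
print(example["completion"])
```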

Training proceeds in two stages. Stage 1 is supervised fine‑tuning (SFT) on the evidence‑driven dataset, enforcing a strict output format with

The most novel contribution is the Strategist‑Tactician collaborative inference. The fine‑tuned LLM acts as a Strategist, producing a high‑level intent distribution I over optimization categories (e.g., loop unrolling, vectorization). A global pass‑benefit prior E
