Dimensional Peeking for Low-Variance Gradients in Zeroth-Order Discrete Optimization via Simulation

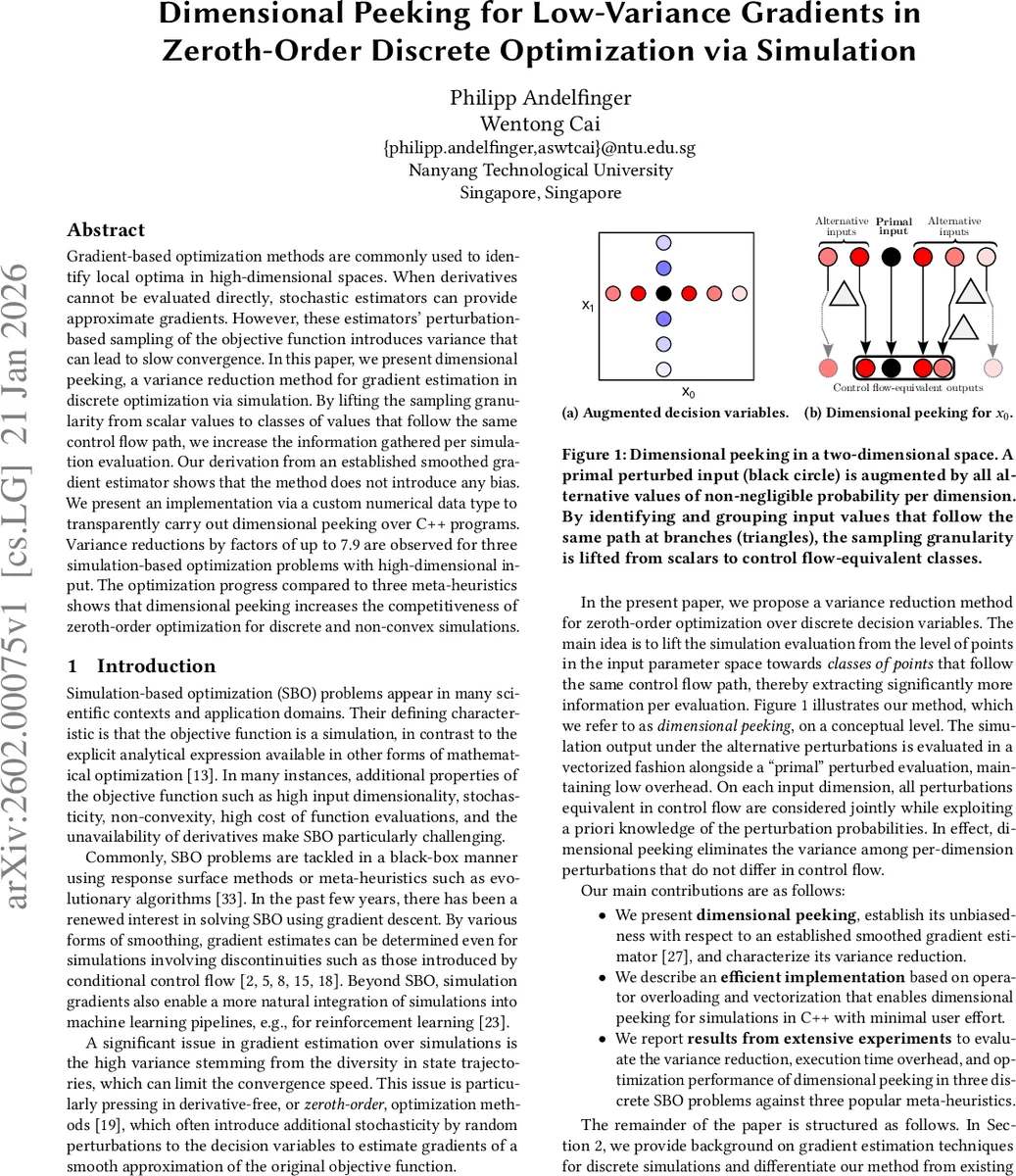

Gradient-based optimization methods are commonly used to identify local optima in high-dimensional spaces. When derivatives cannot be evaluated directly, stochastic estimators can provide approximate gradients. However, these estimators’ perturbation-based sampling of the objective function introduces variance that can lead to slow convergence. In this paper, we present dimensional peeking, a variance reduction method for gradient estimation in discrete optimization via simulation. By lifting the sampling granularity from scalar values to classes of values that follow the same control flow path, we increase the information gathered per simulation evaluation. Our derivation from an established smoothed gradient estimator shows that the method does not introduce any bias. We present an implementation via a custom numerical data type to transparently carry out dimensional peeking over C++ programs. Variance reductions by factors of up to 7.9 are observed for three simulation-based optimization problems with high-dimensional input. The optimization progress compared to three meta-heuristics shows that dimensional peeking increases the competitiveness of zeroth-order optimization for discrete and non-convex simulations.

💡 Research Summary

The paper introduces a variance‑reduction technique called Dimensional Peeking for zeroth‑order (derivative‑free) optimization of high‑dimensional discrete simulations. Traditional stochastic gradient estimators, such as Polyak’s Gradient‑Free Oracle (PGO), perturb each decision variable independently, evaluate the simulation for each perturbed point, and compute a finite‑difference estimate. In discrete, branching‑heavy simulations this approach suffers from high variance because many perturbations follow the same control‑flow path yet are treated as independent samples, wasting information and slowing convergence.
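To make the baseline concrete, here is a minimal C++ sketch of a generic Gaussian‑smoothing gradient estimator of the kind the summary attributes to PGO. It samples u ~ N(0, I), runs the simulation once at x + σu, and weights the change in output by u. The function names (`smoothed_gradient`, `step_cost`), the forward‑difference form with one baseline run f(x), and the toy branching objective are illustrative assumptions, not the paper's code or its exact estimator.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <random>
#include <vector>

using Vec = std::vector<double>;

// Generic Gaussian-smoothing (PGO-style) gradient estimate of f at x:
//   g = 1/(S*sigma) * sum_s (f(x + sigma*u_s) - f(x)) * u_s,  u_s ~ N(0, I).
// Unbiased for the gradient of the smoothed objective E[f(x + sigma*u)],
// even when f itself is piecewise constant.
Vec smoothed_gradient(const std::function<double(const Vec&)>& f, const Vec& x,
                      double sigma, int samples, std::mt19937& rng) {
  assert(sigma > 0.0 && samples > 0);
  std::normal_distribution<double> normal(0.0, 1.0);
  const double f0 = f(x);  // baseline run, used as a control variate
  Vec grad(x.size(), 0.0), u(x.size()), xp(x.size());
  for (int s = 0; s < samples; ++s) {
    for (std::size_t i = 0; i < x.size(); ++i) {
      u[i] = normal(rng);  // independent perturbation per dimension
      xp[i] = x[i] + sigma * u[i];
    }
    const double df = f(xp) - f0;  // one simulation run per sample
    for (std::size_t i = 0; i < x.size(); ++i) grad[i] += df * u[i];
  }
  for (double& g : grad) g /= samples * sigma;
  return grad;
}

// Hypothetical toy "simulation": cost 1 for every x_i below the threshold 0.
double step_cost(const Vec& x) {
  double cost = 0.0;
  for (double xi : x) {
    if (xi < 0.0) cost += 1.0;  // discrete branch: piecewise-constant output
  }
  return cost;
}
```

For example, with `std::mt19937 rng(42);` the call `smoothed_gradient(step_cost, Vec{0.0, 0.0}, 1.0, 200000, rng)` should return components close to the smoothed derivative -φ(0) ≈ -0.399, although `step_cost` has zero derivative almost everywhere. Each sample treats u_i as a single point, which is the source of the variance the paper targets.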

Dimensional Peeking addresses this inefficiency by lifting the sampling granularity from individual scalar perturbations to equivalence classes of perturbations that share the same control‑flow path. Two perturbations are considered equivalent if, when applied to the program, they traverse the identical sequence of conditional branches, i.e., the same path through the program. Because the simulation’s control structure is identical for all members of a class, a single simulation run can be used to evaluate the objective for every perturbation in that class. The estimator aggregates the contributions of all class members, weighting each by its probability under the perturbation distribution and normalizing by the total probability of the class. The authors prove that this aggregation preserves the unbiasedness of the original PGO estimator while eliminating the intra‑class variance component. Consequently, the per‑dimension variance is reduced by a factor of …
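The class‑level aggregation can be sketched as a Rao‑Blackwellized version of the estimator above. For dimension i, the sampled u_i is replaced by the mean of u_i over the interval of values that keep the same control‑flow path, E[u_i | u_i ∈ class]. Since the output is constant on that interval, this keeps the mean unchanged and removes the within‑class spread. The sketch below is hypothetical, not the authors' data type: `Peek` tracks values as affine functions of the perturbation so that each branch (`peek_less`) can record where its outcome would flip. It assumes that branch conditions are affine in the inputs and that the simulation's output depends only on the path taken. `Path`, `pair_cost`, `truncated_mean`, and `estimate` are illustrative names.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <random>
#include <vector>

using Vec = std::vector<double>;
constexpr double kInf = std::numeric_limits<double>::infinity();
constexpr double kInvSqrt2Pi = 0.3989422804014327;

// Per dimension i: interval [lo[i], hi[i]] of u_i (other u_j held fixed) that keeps the path.
struct Path {
  Vec lo, hi;
  explicit Path(std::size_t n) : lo(n, -kInf), hi(n, kInf) {}
};

// Hypothetical peeking number: concrete value at the sampled u plus the affine
// coefficients d(val)/d(u_i). Assumes only affine arithmetic on the inputs.
struct Peek {
  double val;
  Vec c;
};

Peek operator+(const Peek& a, const Peek& b) {
  Peek r{a.val + b.val, a.c};
  for (std::size_t i = 0; i < r.c.size(); ++i) r.c[i] += b.c[i];
  return r;
}

// Branch "a < t": returns the concrete outcome and, per dimension, keeps only the
// side of the flip point b (where a == t) on which the sampled u_i lies.
bool peek_less(const Peek& a, double t, const Vec& u, Path& path) {
  for (std::size_t i = 0; i < u.size(); ++i) {
    if (a.c[i] == 0.0) continue;  // branch does not depend on u_i
    const double b = u[i] + (t - a.val) / a.c[i];
    if (u[i] < b) path.hi[i] = std::min(path.hi[i], b);
    else path.lo[i] = std::max(path.lo[i], b);
  }
  return a.val < t;
}

// Toy simulation: pays 1 whenever x_i + x_{i+1} < 0 (indices cyclic). The output
// depends only on the branches taken, so it is constant within each class.
double pair_cost(const Vec& x, double sigma, const Vec& u, Path& path) {
  const std::size_t n = x.size();
  std::vector<Peek> v(n, Peek{0.0, Vec(n, 0.0)});
  for (std::size_t i = 0; i < n; ++i) {
    v[i].val = x[i] + sigma * u[i];
    v[i].c[i] = sigma;  // d(x_i + sigma * u_i) / d(u_i)
  }
  double cost = 0.0;
  for (std::size_t i = 0; i < n; ++i)
    if (peek_less(v[i] + v[(i + 1) % n], 0.0, u, path)) cost += 1.0;
  return cost;
}

// E[Z | lo <= Z <= hi] for Z ~ N(0, 1); falls back to u if the mass underflows.
double truncated_mean(double lo, double hi, double u) {
  auto pdf = [](double z) { return kInvSqrt2Pi * std::exp(-0.5 * z * z); };
  auto cdf = [](double z) { return 0.5 * std::erfc(-z / std::sqrt(2.0)); };
  const double mass = cdf(hi) - cdf(lo);
  return mass > 1e-12 ? (pdf(lo) - pdf(hi)) / mass : u;
}

struct Stats { Vec mean_naive, var_naive, mean_peek, var_peek; };

// Draws u ~ N(0, I) per sample; one run yields both the single-point estimate
// (f - f0) * u_i / sigma and the class-averaged (f - f0) * E[u_i | class] / sigma.
Stats estimate(const Vec& x, double sigma, int samples, std::mt19937& rng) {
  assert(sigma > 0.0 && samples > 1);
  const std::size_t n = x.size();
  std::normal_distribution<double> normal(0.0, 1.0);
  Path scratch(n);
  const double f0 = pair_cost(x, sigma, Vec(n, 0.0), scratch);  // unperturbed run
  Vec sn(n), qn(n), sp(n), qp(n), u(n);  // running sums and sums of squares
  for (int s = 0; s < samples; ++s) {
    for (double& ui : u) ui = normal(rng);
    Path path(n);
    const double df = pair_cost(x, sigma, u, path) - f0;
    for (std::size_t i = 0; i < n; ++i) {
      const double gn = df * u[i] / sigma;
      const double gp = df * truncated_mean(path.lo[i], path.hi[i], u[i]) / sigma;
      sn[i] += gn; qn[i] += gn * gn;
      sp[i] += gp; qp[i] += gp * gp;
    }
  }
  Stats st{Vec(n), Vec(n), Vec(n), Vec(n)};
  for (std::size_t i = 0; i < n; ++i) {
    st.mean_naive[i] = sn[i] / samples;
    st.var_naive[i] = (qn[i] - samples * st.mean_naive[i] * st.mean_naive[i]) / (samples - 1);
    st.mean_peek[i] = sp[i] / samples;
    st.var_peek[i] = (qp[i] - samples * st.mean_peek[i] * st.mean_peek[i]) / (samples - 1);
  }
  return st;
}
```

With `estimate(Vec{0.0, 0.0, 0.0}, 1.0, 200000, rng)`, both means should approach the smoothed derivative -√2·φ(0) ≈ -0.564 in each dimension. Because conditioning on the class cannot increase variance, `var_peek` should stay below `var_naive`. Both estimates come from the same simulation runs, so the reduction costs no extra evaluations in this toy setting.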
