Tracking the Limits of Knowledge Propagation: How LLMs Fail at Multi-Step Reasoning with Conflicting Knowledge

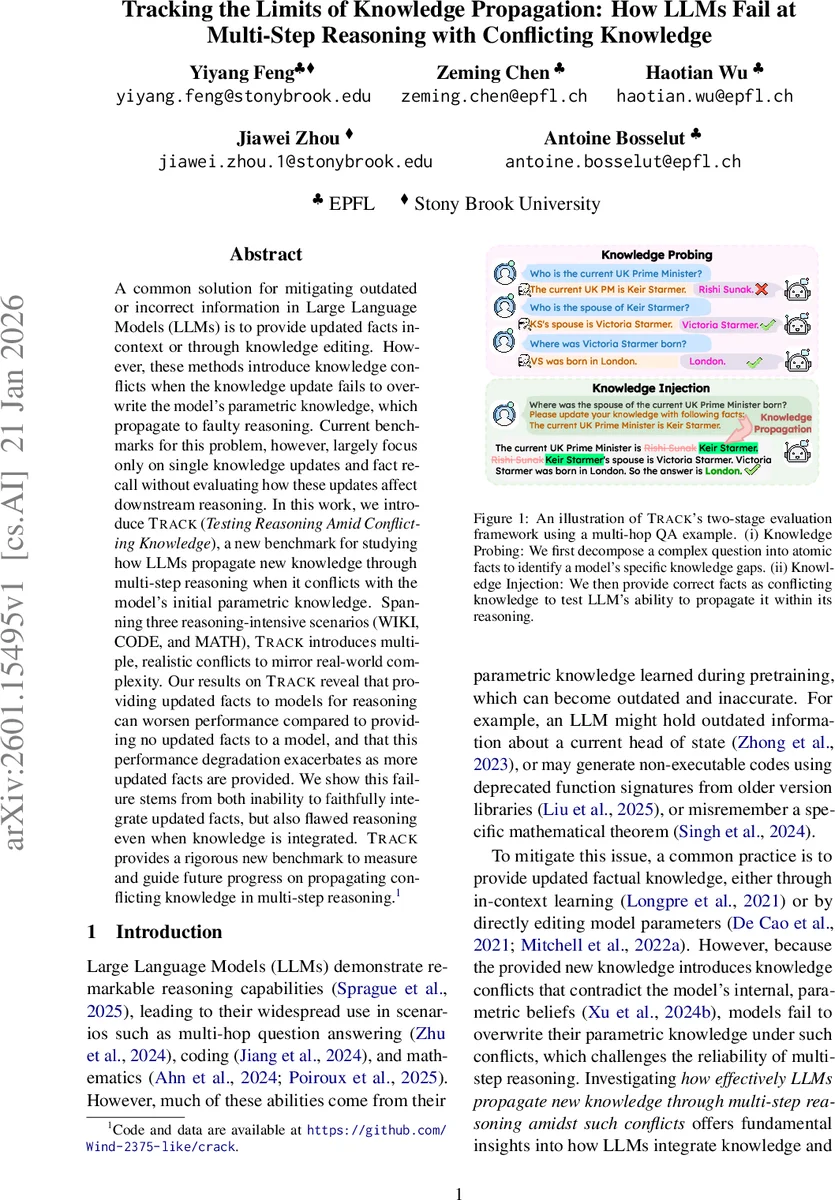

A common solution for mitigating outdated or incorrect information in Large Language Models (LLMs) is to provide updated facts in-context or through knowledge editing. However, these methods introduce knowledge conflicts when the knowledge update fails to overwrite the model’s parametric knowledge, which propagate to faulty reasoning. Current benchmarks for this problem, however, largely focus only on single knowledge updates and fact recall without evaluating how these updates affect downstream reasoning. In this work, we introduce TRACK (Testing Reasoning Amid Conflicting Knowledge), a new benchmark for studying how LLMs propagate new knowledge through multi-step reasoning when it conflicts with the model’s initial parametric knowledge. Spanning three reasoning-intensive scenarios (WIKI, CODE, and MATH), TRACK introduces multiple, realistic conflicts to mirror real-world complexity. Our results on TRACK reveal that providing updated facts to models for reasoning can worsen performance compared to providing no updated facts to a model, and that this performance degradation exacerbates as more updated facts are provided. We show this failure stems from both inability to faithfully integrate updated facts, but also flawed reasoning even when knowledge is integrated. TRACK provides a rigorous new benchmark to measure and guide future progress on propagating conflicting knowledge in multi-step reasoning.

💡 Research Summary

**

The paper addresses a critical gap in the evaluation of large language models (LLMs) when they are supplied with updated factual information that conflicts with their internal, parametric knowledge. While prior work has focused on single‑fact updates and simple recall, it has largely ignored how such updates affect multi‑step reasoning pipelines. To fill this void, the authors introduce TRACK (Testing Reasoning Amid Conflicting Knowledge), a benchmark specifically designed to measure the propagation of conflicting knowledge through multi‑hop reasoning tasks.

TRACK follows a two‑stage evaluation protocol. First, a Knowledge Probing phase decomposes each complex query into a set of atomic facts (Kq). The model is queried on each fact, and any fact it fails to answer correctly is marked as a knowledge gap (Kg). Second, a Knowledge Injection phase supplies the correct, up‑to‑date facts corresponding to Kg as in‑context information (open‑book setting). The model’s performance is then compared against a closed‑book baseline that receives only the original question. This design isolates the effect of the injected facts on downstream reasoning.

Three realistic domains are instantiated: (i) WIKI – multi‑hop question answering over recent Wikipedia revisions, (ii) CODE – code generation tasks that require the use of newly‑released APIs or updated library signatures, and (iii) MATH – multi‑step mathematical problems that depend on recently revised theorems or formulas. Each domain contains multiple, interacting conflicts per instance, reflecting real‑world complexity where several facts may need to be updated simultaneously.

The authors propose three evaluation metrics: Answer Pass (AP) checks whether the final answer is correct; Full Knowledge Entailment (FKE) verifies that every atomic fact in Kq is explicitly referenced in the model’s reasoning chain; Holistic Pass (HP) requires both AP and FKE to be satisfied, providing the strictest measure of faithful reasoning. These metrics go beyond simple fact recall by demanding that updated knowledge be used as premises in a reasoning chain.

Experiments span a wide range of models, including closed‑source (GPT‑4, Claude) and open‑source (Llama‑2, Mistral) families, as well as both chain‑of‑thought and direct‑answer variants. The results are strikingly consistent: (1) providing correct, conflicting facts often yields little to no gain in AP, and in many cases degrades performance relative to the closed‑book baseline; (2) performance on FKE and HP deteriorates sharply as the number of injected facts increases; (3) error analysis reveals two primary failure modes. First, models struggle to overwrite their parametric knowledge, leading to blended or contradictory reasoning. Second, even when the new fact is correctly incorporated, the subsequent reasoning steps are frequently flawed—e.g., mis‑applying an updated API, or failing to correctly chain mathematical deductions.

The benchmark also includes a Knowledge Aggregation Scope (KAS) parameter that controls how many questions’ gaps are aggregated into a single context. Larger KAS values simulate environments where multiple updates must be handled concurrently. As KAS grows, models exhibit pronounced difficulty in selecting relevant facts, further reducing FKE and HP scores. This highlights a practical challenge for deployed systems that must ingest streams of updates.

In summary, the study demonstrates that current LLMs are ill‑suited for propagating conflicting knowledge through multi‑step reasoning. TRACK provides a rigorous, multi‑domain testbed that quantifies this limitation and offers fine‑grained diagnostics. The authors suggest future directions such as (i) integrating parametric knowledge editing with in‑context updates, (ii) developing meta‑reasoning mechanisms that can assess fact reliability and resolve conflicts, and (iii) designing training objectives that explicitly encourage faithful knowledge propagation across reasoning steps. TRACK thus establishes a new standard for evaluating and improving LLM robustness in the face of evolving real‑world information.

Comments & Academic Discussion

Loading comments...

Leave a Comment