GuideTouch: An Obstacle Avoidance Device with Tactile Feedback for Visually Impaired

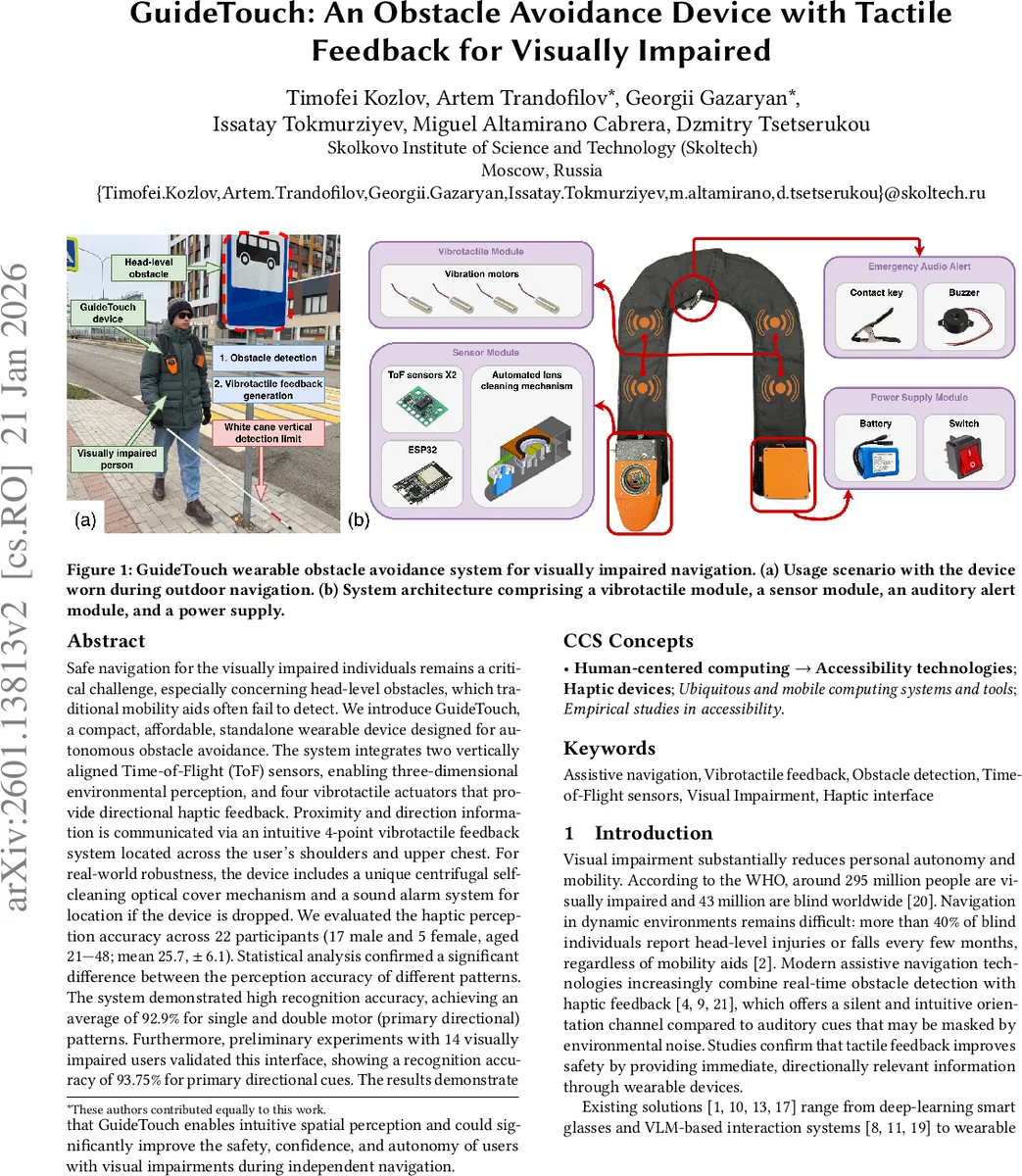

Safe navigation for visually impaired individuals remains a critical challenge, especially concerning head-level obstacles, which traditional mobility aids often fail to detect. We introduce GuideTouch, a compact, affordable, standalone wearable device designed for autonomous obstacle avoidance. The system integrates two vertically aligned Time-of-Flight (ToF) sensors, enabling three-dimensional environmental perception, and four vibrotactile actuators that provide directional haptic feedback. Proximity and direction information is communicated via an intuitive 4-point vibrotactile feedback system located across the user’s shoulders and upper chest. For real-world robustness, the device includes a unique centrifugal self-cleaning optical cover mechanism and an audible alarm that helps locate the device if it is dropped. We evaluated haptic perception accuracy with 22 participants (17 male and 5 female, aged 21-48, mean 25.7, SD 6.1). Statistical analysis confirmed a significant difference in perception accuracy between patterns. The system demonstrated high recognition accuracy, achieving an average of 92.9% for single and double motor (primary directional) patterns. Furthermore, preliminary experiments with 14 visually impaired users validated this interface, showing a recognition accuracy of 93.75% for primary directional cues. The results demonstrate that GuideTouch enables intuitive spatial perception and could significantly improve the safety, confidence, and autonomy of users with visual impairments during independent navigation.

💡 Research Summary

The paper presents GuideTouch, a compact, affordable, standalone wearable designed to help visually impaired individuals avoid head‑level obstacles that conventional mobility aids often miss. The hardware integrates two vertically aligned Time‑of‑Flight (ToF) sensors (VL53L5CX) that provide an 8 × 8 distance matrix per sensor with a combined vertical field of view of 90°. By mounting the sensors at a 30° relative angle, the device can detect obstacles ranging from knee height (≈30 cm) to head height (≈160 cm) at distances up to 50 cm, and can sense objects as small as 4 cm at 1 m.

Distance data are sampled every 0.1 s by an ESP32 microcontroller, filtered for outliers, and segmented into zones (left, right, top, bottom). Each zone is mapped to one of four vibration motors embedded in a scarf‑like garment positioned on the shoulders and upper chest. Single‑motor patterns convey primary directional cues, while combinations of two, three, or four motors indicate more complex obstacle geometries (e.g., overhanging hazards). The haptic feedback is intended to be intuitive, hands‑free, and robust to environmental noise that can mask auditory cues.
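The zone-to-motor mapping described above can be illustrated with a minimal Python sketch. It is not the authors' firmware: the column split for left and right, the assignment of the upper sensor to "top" and the lower sensor to "bottom", the `MAX_VALID_MM` outlier bound, and all function names are assumptions made for illustration. Only the 8 × 8 frame size, the four zone names, and the 50 cm range come from the summary.

```python
"""Sketch: map two 8x8 ToF frames to four vibration motors (assumed layout)."""

GRID = 8              # each sensor reports an 8 x 8 distance matrix
ALERT_MM = 500        # 50 cm detection range quoted in the summary
MAX_VALID_MM = 4000   # assumed outlier bound; larger readings are discarded


def valid(frame, cols):
    """Collect plausible readings (in mm) from the given columns of one frame."""
    return [frame[r][c] for r in range(GRID) for c in cols
            if 0 < frame[r][c] <= MAX_VALID_MM]


def nearest(readings):
    """Return the closest reading, or None when the zone has no valid data."""
    return min(readings) if readings else None


def active_motors(upper, lower, alert_mm=ALERT_MM):
    """Return the zone names whose nearest obstacle is closer than alert_mm."""
    left_cols, right_cols, all_cols = range(0, 4), range(4, 8), range(GRID)
    zones = {
        # Hypothetical zone layout: columns split left/right across both sensors,
        # upper sensor feeds "top", lower sensor feeds "bottom".
        "left": nearest(valid(upper, left_cols) + valid(lower, left_cols)),
        "right": nearest(valid(upper, right_cols) + valid(lower, right_cols)),
        "top": nearest(valid(upper, all_cols)),
        "bottom": nearest(valid(lower, all_cols)),
    }
    return {name for name, dist in zones.items()
            if dist is not None and dist < alert_mm}


# Example: an obstacle 30 cm away in the upper-left part of the upper sensor.
clear = [[2000] * GRID for _ in range(GRID)]
upper = [row[:] for row in clear]
upper[0][1] = 300
print(sorted(active_motors(upper, clear)))
```

In this sketch one close reading in the upper-left region activates both the "left" and "top" motors, which corresponds to a double-motor pattern. A real device would call such a function once per 0.1 s sampling period and would likely smooth the result over several frames.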

To ensure reliable operation in rain or snow, the authors devised a centrifugal self‑cleaning mechanism: a brushless DC motor spins an infrared‑transparent glass cover at 3000 rpm, removing droplets in 18 of 20 trials (90%). The cleaning system produces an average sound level of 70 dB, which the summary describes as within safe exposure limits. A passive piezo buzzer (3–4 kHz) linked to a detachable clip provides a “lost‑device” alarm if the wearable becomes dislodged.

The prototype weighs less than 500 g, costs roughly 100 USD, and offers up to 12 hours of operation (4 hours while cleaning).

User evaluation was conducted in two phases. In the first phase, 22 sighted participants (17 M, 5 F, mean age ≈ 26) were divided into two groups. Group A experienced 15 vibrotactile patterns (including single, double, triple, and quadruple motor combinations) while Group B experienced only the 10 simpler patterns (single and double motors). Each pattern was presented five times in random order. Group A achieved an average recognition accuracy of 78.4%, whereas Group B reached 92.9%. Statistical analysis (one‑way repeated‑measures ANOVA) revealed a significant effect of pattern complexity (F(14,150)=7.53, p < 0.000001). Errors were primarily due to confusion of simple patterns with more complex ones, and two female participants showed lower performance, which the authors attribute to discomfort affecting contact with the device.
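The pattern counts for the two groups follow from choosing subsets of four motors: 4 single plus 6 double combinations give 10 patterns, and adding 4 triple and 1 quadruple combination gives 15. The short Python sketch below enumerates them. The motor labels and the helper name `patterns` are illustrative assumptions, and the sketch assumes every subset of a given size was used as a pattern.

```python
from itertools import combinations

MOTORS = ("left", "right", "top", "bottom")  # assumed labels for the four motors
REPEATS = 5                                  # each pattern was presented five times


def patterns(max_motors):
    """List every motor combination that uses between 1 and max_motors motors."""
    return [combo
            for size in range(1, max_motors + 1)
            for combo in combinations(MOTORS, size)]


group_b = patterns(2)  # single and double motor patterns
group_a = patterns(4)  # adds triple and quadruple combinations

print(len(group_b), len(group_b) * REPEATS)  # 10 patterns, 50 trials per participant
print(len(group_a), len(group_a) * REPEATS)  # 15 patterns, 75 trials per participant
```

With five repetitions per pattern, this corresponds to 50 trials per participant in Group B and 75 in Group A.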

In the second phase, 14 visually impaired participants (8 M, 6 F, ages 16–60) performed a similar recognition test focusing on primary directional cues (single and double motor patterns). The average accuracy was 93.75%, confirming that the target user group can reliably interpret the haptic signals.

The authors conclude that GuideTouch successfully delivers intuitive spatial information through a four‑point tactile interface, achieving high recognition rates for the most critical directional cues. Limitations include testing only under static conditions and with first‑time users; dynamic navigation, long‑term training, and integration of higher‑resolution ToF sensors with computer‑vision processing are identified as future work. The proposed system thus represents a promising step toward affordable, hands‑free assistive navigation for the visually impaired.
