A Distributed Spatial Data Warehouse for AIS Data (DIPAAL)

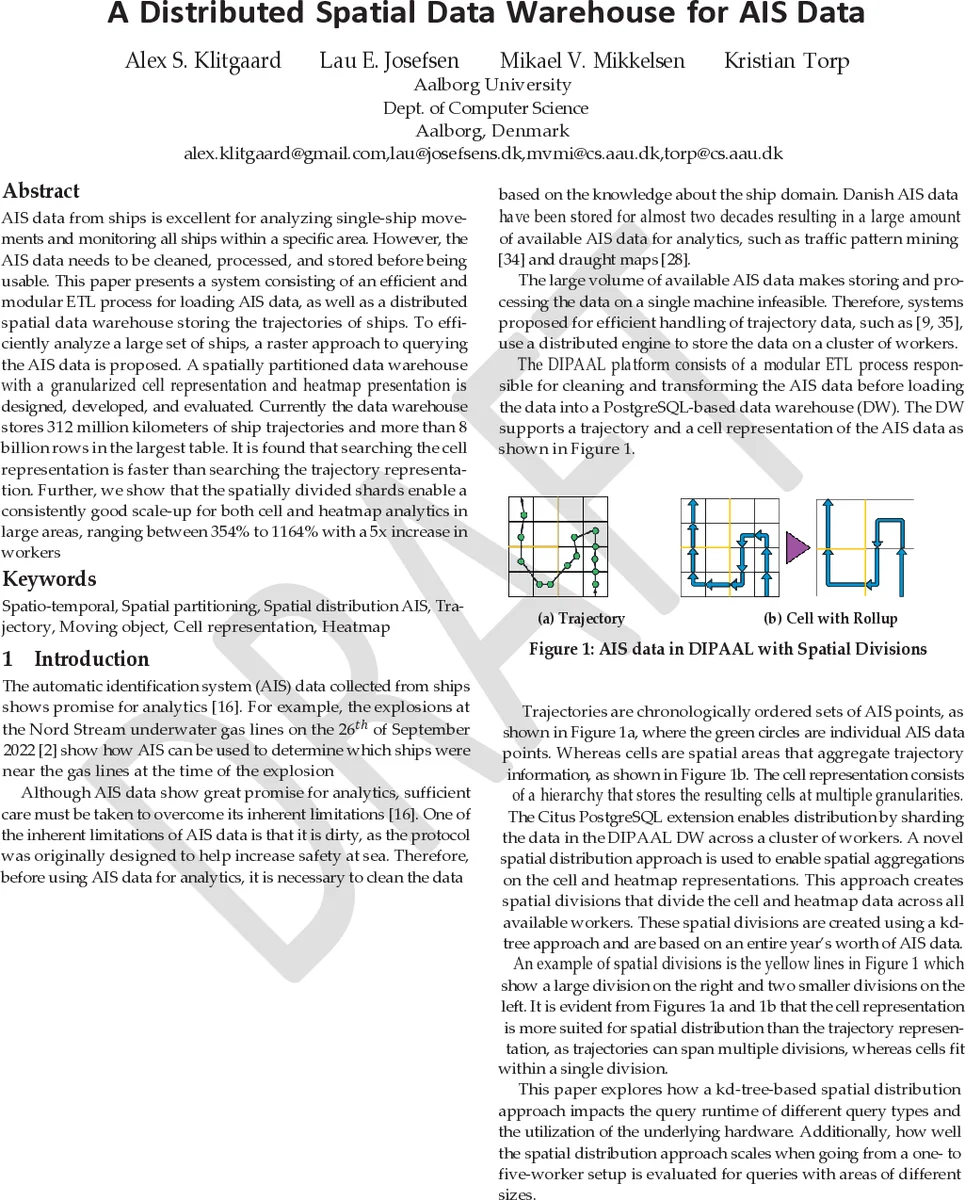

AIS data from ships is excellent for analyzing single-ship movements and monitoring all ships within a specific area. However, the AIS data needs to be cleaned, processed, and stored before being usable. This paper presents a system consisting of an efficient and modular ETL process for loading AIS data, as well as a distributed spatial data warehouse storing the trajectories of ships. To efficiently analyze a large set of ships, a raster approach to querying the AIS data is proposed. A spatially partitioned data warehouse with a granularized cell representation and heatmap presentation is designed, developed, and evaluated. Currently the data warehouse stores ~312 million kilometers of ship trajectories and more than +8 billion rows in the largest table. It is found that searching the cell representation is faster than searching the trajectory representation. Further, we show that the spatially divided shards enable a consistently good scale-up for both cell and heatmap analytics in large areas, ranging between 354% to 1164% with a 5x increase in workers

💡 Research Summary

The paper presents DIPAAL, a comprehensive platform for ingesting, cleaning, storing, and analyzing massive Automatic Identification System (AIS) data generated by ships worldwide. Recognizing that raw AIS streams are noisy, heterogeneous, and voluminous, the authors design a modular ETL pipeline composed of five independent stages: (1) file download, (2) data cleaning, (3) trajectory construction, (4) roll‑up to a raster cell representation, and (5) loading into a distributed spatial data warehouse. Each stage can run in parallel on multiple machines, allowing the pipeline to scale horizontally without coordination overhead.

The data warehouse is built on PostgreSQL extended with three open‑source extensions: Citus for horizontal sharding and distributed query execution, PostGIS for spatial data types and raster operations, and MobilityDB for spatio‑temporal primitives. Citus shards tables across a cluster of workers based on a distribution key, and co‑locates rows sharing the same key to maximize data locality. This design dramatically reduces network traffic for joins and enables workers to compute partial results locally before a final aggregation step.

Two complementary data models are stored: a fine‑grained trajectory model (ordered AIS points per ship) and a coarse‑grained cell model. The cell model discretizes the earth surface into 5 km × 5 km tiles, each further subdivided into four resolution levels (5 km, 1 km, 200 m, 50 m). For each cell the system records entry/exit events, average speed over ground (SOG), delta course over ground (ΔCOG), heading changes, and a stopped‑ship flag. Heatmaps are generated by aggregating cell data into raster layers; a 5 km × 5 km cell at 50 m resolution yields a 10 000‑pixel raster, stored as a two‑band image (sum and count) to support both additive and semi‑additive measures.

Spatial partitioning is achieved through a kd‑tree built on a full year of AIS points. The tree recursively splits the space until each leaf contains a bounded number of points, producing a set of spatial shards that are used as the Citus distribution key. Large shards span many workers, while smaller shards keep cell and heatmap data localized, ensuring that cell queries need only scan the relevant shard rather than the entire dataset.

The warehouse follows a star‑schema design inspired by Kimball and Ross, with a central fact_trajectory table and four fact_cell tables (one per resolution) plus a fact_heatmap table. Dimension tables store trajectory attributes (tgeompoint, rate of turn, heading, draught, destination) and heatmap metadata (type, aggregation rules). Pre‑aggregation of cell data into fact_heatmap reduces runtime for parameterized heatmap generation while preserving flexibility to define new heatmap types without schema changes.

Evaluation is performed on a real‑world dataset comprising five years of Danish AIS data: roughly 312 million km of ship trajectories, over 8 billion rows in the largest fact table, and 57 238 unique MMSI identifiers. Experiments compare cell‑based queries against trajectory‑based queries, showing that cell queries are on average three times faster. Scaling tests increase the number of Citus workers from 1 to 5; both cell and heatmap analytics exhibit strong scale‑up, with speed‑ups ranging from 354 % to 1164 % (i.e., 3.5× to 11.6×). Additionally, the kd‑tree spatial division reduces average cell query latency by about 45 % compared to a naïve hash‑based sharding.

The authors discuss strengths such as modular ETL, open‑source stack, effective data locality via kd‑tree partitioning, and multi‑resolution heatmap support. Limitations include static yearly partitions that may not adapt to seasonal traffic shifts, reliance on expert‑defined cell sizes, and the fact that PostgreSQL may become a bottleneck for ultra‑high‑throughput real‑time streaming without supplementary stream processing layers.

In conclusion, DIPAAL demonstrates that a PostgreSQL‑centric, distributed architecture can handle billions of AIS records, provide fast raster‑based analytics, and scale linearly with added workers. Future work aims to integrate real‑time streaming ingestion, dynamic repartitioning, and machine‑learning modules for anomaly detection, further extending the platform’s applicability to maritime safety, environmental monitoring, and logistics optimization.

Comments & Academic Discussion

Loading comments...

Leave a Comment