Small Updates, Big Doubts: Does Parameter-Efficient Fine-tuning Enhance Hallucination Detection ?

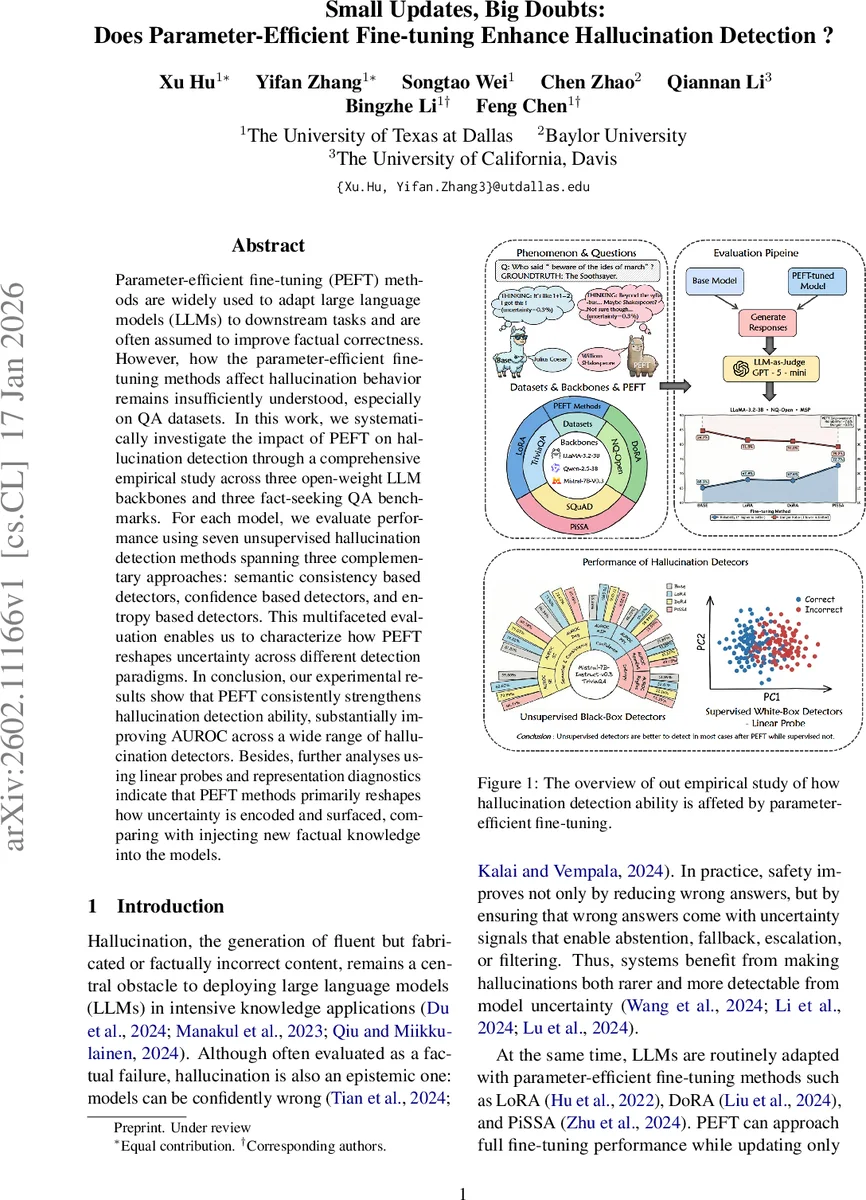

Parameter-efficient fine-tuning (PEFT) methods are widely used to adapt large language models (LLMs) to downstream tasks and are often assumed to improve factual correctness. However, how the parameter-efficient fine-tuning methods affect hallucination behavior remains insufficiently understood, especially on QA datasets. In this work, we systematically investigate the impact of PEFT on hallucination detection through a comprehensive empirical study across three open-weight LLM backbones and three fact-seeking QA benchmarks. For each model, we evaluate performance using seven unsupervised hallucination detection methods spanning three complementary approaches: semantic consistency based detectors, confidence based detectors, and entropy based detectors. This multifaceted evaluation enables us to characterize how PEFT reshapes uncertainty across different detection paradigms. In conclusion, our experimental results show that PEFT consistently strengthens hallucination detection ability, substantially improving AUROC across a wide range of hallucination detectors. Besides, further analyses using linear probes and representation diagnostics indicate that PEFT methods primarily reshapes how uncertainty is encoded and surfaced, comparing with injecting new factual knowledge into the models.

💡 Research Summary

This paper investigates how parameter‑efficient fine‑tuning (PEFT) influences hallucination detection in large language models (LLMs). While PEFT methods such as LoRA, DoRA, and PiSSA are widely adopted for adapting LLMs with a tiny fraction of trainable parameters, their effect on the epistemic signals that underpin unsupervised hallucination detectors has not been systematically studied, especially in fact‑seeking question‑answering (QA) settings.

Experimental setup

Three open‑weight instruction‑tuned backbones are used: LLaMA‑3.2‑3B‑Instruct, Mistral‑7B‑Instruct‑v0.3, and Qwen2.5‑3B‑Instruct. For each backbone, the three PEFT variants are fine‑tuned on the same QA dataset (TriviaQA, Natural Questions‑Open, or SQuAD) using identical hyper‑parameters (rank = 32, scaling α = 64, dropout = 0.05, AdamW lr = 2e‑5, batch = 64, one epoch, BF16). Only the attention projection matrices (q_proj, k_proj, v_proj, o_proj) are updated.

The study evaluates two axes: (1) standard QA accuracy and (2) hallucination detection performance measured primarily by AUROC (and AUPR for SQuAD due to class imbalance). Seven unsupervised detectors are examined, grouped into (i) semantic‑consistency methods (Semantic Entropy, SelfCheckGPT, Degree of Uncertainty), (ii) confidence‑based methods (Maximum Sequence Probability, Perplexity), and (iii) entropy‑based methods (Predictive Entropy, Mean Token Entropy). In addition, a white‑box linear probe is trained on hidden states to probe how PEFT reshapes internal representations.

Key findings

-

Modest accuracy gains – Across all backbones and datasets, PEFT improves QA accuracy by only 0.1 %–3 %, indicating that the primary benefit is not a large increase in factual knowledge.

-

Consistent AUROC improvement – All semantic‑consistency and confidence‑based detectors show substantial AUROC lifts after PEFT, ranging from ~4 % to over 12 % relative improvement. For example, on LLaMA‑3.2‑3B‑Instruct, SelfCheckGPT’s AUROC rises by 7.73 % and the Maximum Sequence Probability (MSP) detector by 11.18 % on SQuAD. This suggests that PEFT moves model outputs away from the over‑confident regime and makes uncertainty signals more discriminative.

-

Entropy‑based detectors are unstable – Predictive Entropy and Mean Token Entropy sometimes improve but often show negligible or even negative changes. Token‑level entropy appears sensitive to lexical diversity and syntactic variation rather than factual correctness, explaining the inconsistency.

-

White‑box linear probes are not uniformly better – Although PEFT reshapes hidden representations (especially in the attention projection layers), the resulting features are not consistently more linearly separable for correctness. In several configurations the linear probe’s AUROC drops, indicating that the epistemic regularization introduced by PEFT is not captured by a simple linear classifier.

-

Representation diagnostics – PCA and linear‑probe analyses reveal that PEFT primarily alters how uncertainty is encoded rather than injecting new factual knowledge. The changes are concentrated in the attention projection matrices, supporting the authors’ claim that PEFT acts as an “epistemic regularizer” that aligns the model’s internal notion of being wrong with observable uncertainty cues.

Conclusions and implications

PEFT does not dramatically reduce hallucinations by making the model know more facts; instead, it makes the model’s internal uncertainty more coherent and easier to surface to downstream detectors. Consequently, pairing PEFT with semantic‑consistency or confidence‑based detection pipelines can yield safer, more reliable LLM deployments, allowing systems to abstain, fallback, or flag uncertain answers more effectively. The authors release code for full reproducibility and suggest future work on domain‑specific PEFT effects (e.g., medical or legal QA) and on designing detection pipelines that explicitly exploit the epistemic regularization introduced by PEFT.

Comments & Academic Discussion

Loading comments...

Leave a Comment