Whose Facts Win? LLM Source Preferences under Knowledge Conflicts

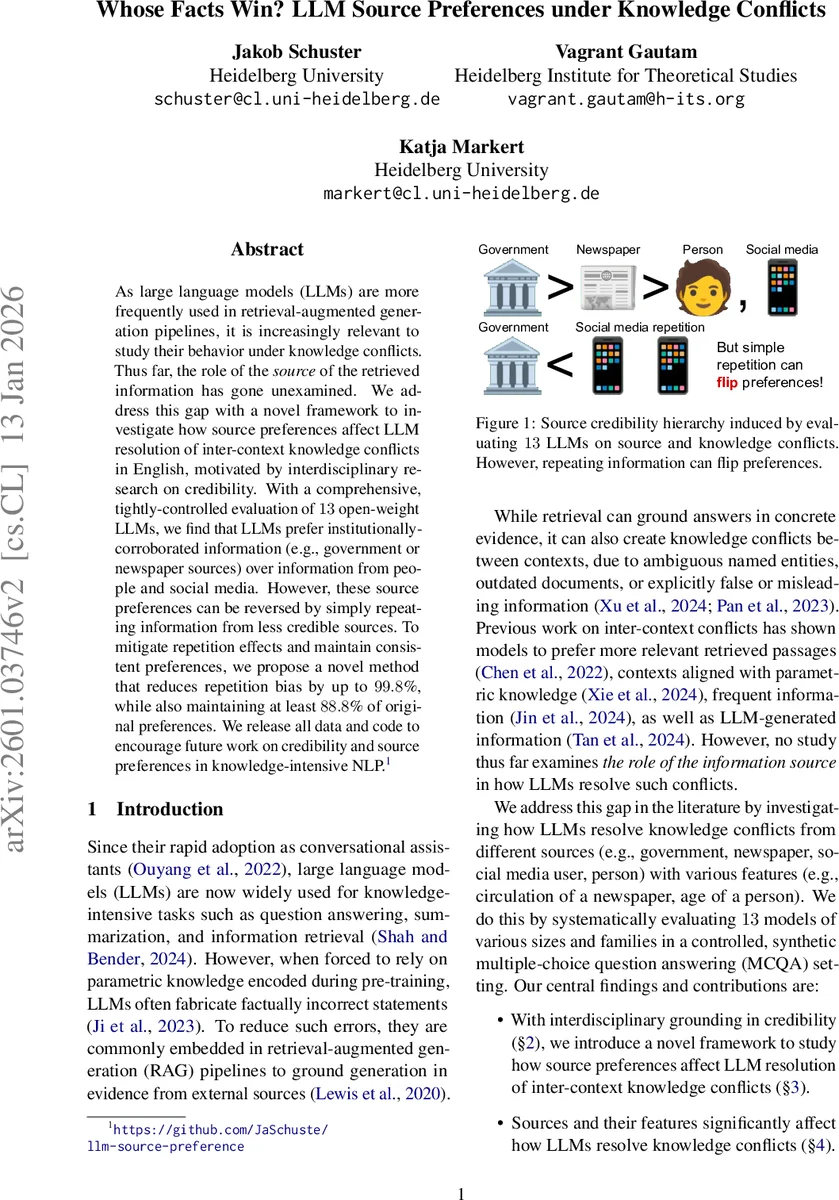

As large language models (LLMs) are more frequently used in retrieval-augmented generation pipelines, it is increasingly relevant to study their behavior under knowledge conflicts. Thus far, the role of the source of the retrieved information has gone unexamined. We address this gap with a novel framework to investigate how source preferences affect LLM resolution of inter-context knowledge conflicts in English, motivated by interdisciplinary research on credibility. With a comprehensive, tightly-controlled evaluation of 13 open-weight LLMs, we find that LLMs prefer institutionally-corroborated information (e.g., government or newspaper sources) over information from people and social media. However, these source preferences can be reversed by simply repeating information from less credible sources. To mitigate repetition effects and maintain consistent preferences, we propose a novel method that reduces repetition bias by up to 99.8%, while also maintaining at least 88.8% of original preferences. We release all data and code to encourage future work on credibility and source preferences in knowledge-intensive NLP.

💡 Research Summary

The paper investigates how large language models (LLMs) resolve conflicts between pieces of factual information that originate from different sources, a scenario increasingly common in retrieval‑augmented generation (RAG) pipelines. While prior work has examined factors such as relevance, frequency, or model‑generated content, the influence of the source’s credibility has remained unexplored. To fill this gap, the authors construct a tightly controlled synthetic benchmark. They start from the NeoQA dataset, creating 373 fictional entities (people, organizations, locations, etc.) and generating 7,440 attribute‑conflict pairs by perturbing a single attribute per entity (e.g., changing a person’s nationality). Each conflict is presented as two markdown tables (T_A and T_B) containing the contradictory values.

Four synthetic source types are generated: government agencies, newspapers, individual persons, and social‑media users. Newspaper names are derived from real U.S. media templates, government agencies from generic templates, social‑media usernames mimic Reddit‑style handles, and personal names are sampled from U.S. Census and Social Security data while avoiding real Wikipedia entries. The authors also embed intra‑type credibility cues such as newspaper circulation numbers, social‑media follower counts, regional proximity, academic titles, gender, and age.

Thirteen open‑weight, instruction‑tuned decoder‑only models are evaluated: four sizes of Qwen, three sizes of OLMo, three sizes of LLaMA, and three sizes of Gemma. Each model receives a forced‑choice prompt that includes an instruction, the context (either source‑less or source‑annotated), a question, and answer options (A or B). By extracting deterministic token probabilities for A and B, the authors compute a “source preference” metric (SP) that quantifies how the presence of a particular source shifts the model’s probability toward one answer relative to the source‑less baseline. They average SP across all conflict pairs for each ordered source pair (X, Y) and apply non‑parametric bootstrap testing (10 000 resamples, Holm‑Bonferroni corrected α = 0.05) to assess significance.

The results reveal a highly consistent hierarchy across models: government > newspaper > person > social‑media. All 13 models show statistically significant preferences for any attributed source over a “no source” baseline, and when two sources of different types are present, the preference ordering is strictly transitive. Kendall’s W of 0.74 indicates strong inter‑model agreement. Within a source type, higher popularity (larger newspaper circulation or more social‑media followers) further increases the likelihood of being chosen. Demographic cues also have modest effects: models slightly favor regional newspapers, individuals with academic titles, women, and older persons. Notably, the authors discover a “repetition bias”: repeatedly presenting low‑credibility information (e.g., multiple social‑media statements) can flip the model’s preference, causing it to favor the repeated source over a higher‑credibility one. This vulnerability mirrors real‑world disinformation tactics.

To mitigate repetition bias, the authors propose a fine‑tuning method that randomizes source labels and down‑weights repeated low‑credibility statements during training. Experiments show that this approach reduces the repetition effect by up to 99.8 % while preserving at least 88.8 % of the original source‑preference hierarchy, demonstrating that bias can be attenuated without erasing the model’s nuanced credibility judgments.

The study contributes three main insights: (1) LLMs possess an implicit, transitive credibility hierarchy aligned with traditional media studies; (2) intra‑type factors such as popularity and demographic attributes modulate preferences in predictable ways; (3) LLMs are highly susceptible to repetition, a weakness that can be largely remedied through targeted fine‑tuning. These findings have practical implications for the design of trustworthy RAG systems: developers should consider source annotation, limit repetitive low‑credibility inputs, and possibly incorporate the proposed mitigation technique to ensure that model outputs reflect reliable evidence rather than sheer frequency. The paper also releases all synthetic data, code, and evaluation scripts to foster further research on credibility, source bias, and safe information retrieval in NLP.

Comments & Academic Discussion

Loading comments...

Leave a Comment