"Newspaper Eat" Means "Not Tasty": A Taxonomy and Benchmark for Coded Languages in Real-World Chinese Online Reviews

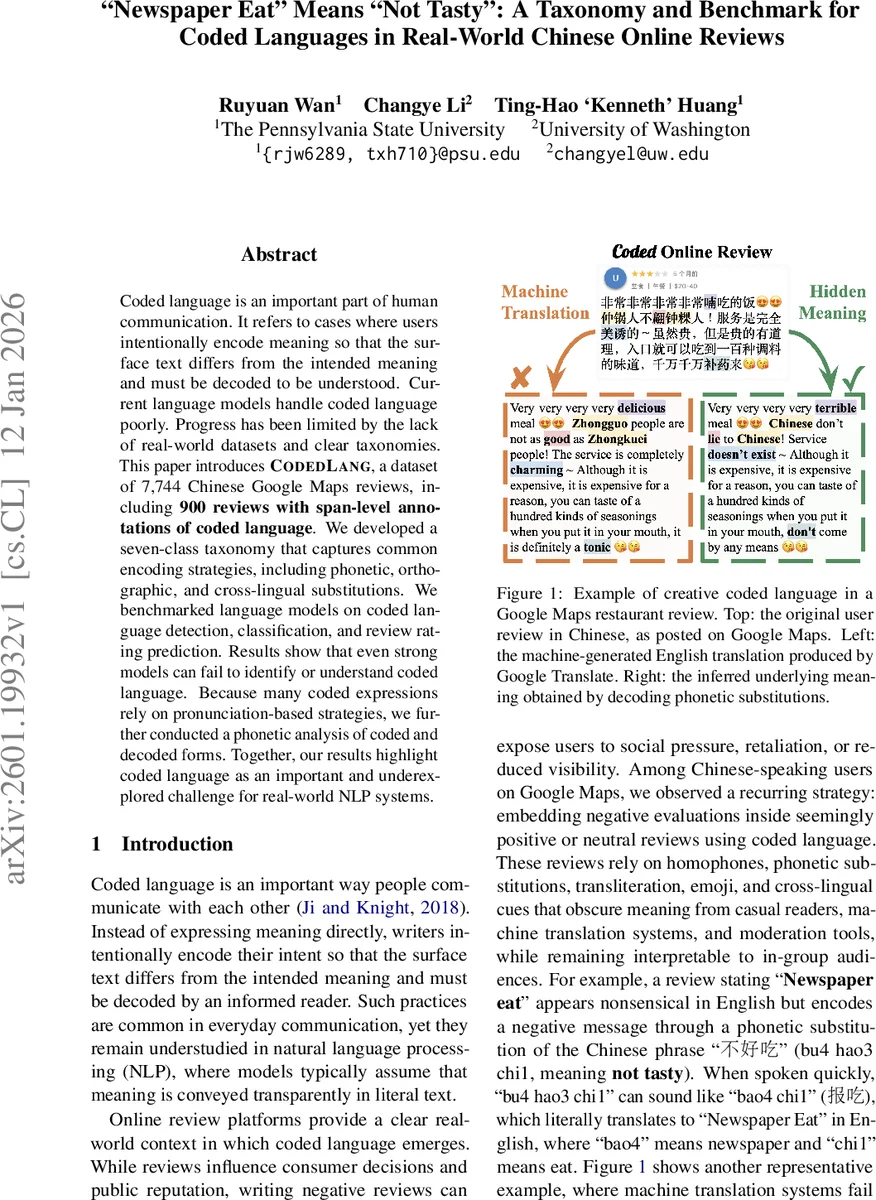

Coded language is an important part of human communication. It refers to cases where users intentionally encode meaning so that the surface text differs from the intended meaning and must be decoded to be understood. Current language models handle coded language poorly. Progress has been limited by the lack of real-world datasets and clear taxonomies. This paper introduces CodedLang, a dataset of 7,744 Chinese Google Maps reviews, including 900 reviews with span-level annotations of coded language. We developed a seven-class taxonomy that captures common encoding strategies, including phonetic, orthographic, and cross-lingual substitutions. We benchmarked language models on coded language detection, classification, and review rating prediction. Results show that even strong models can fail to identify or understand coded language. Because many coded expressions rely on pronunciation-based strategies, we further conducted a phonetic analysis of coded and decoded forms. Together, our results highlight coded language as an important and underexplored challenge for real-world NLP systems.

💡 Research Summary

The paper tackles the understudied problem of coded language—deliberate alterations of surface text that hide the true intent—in real‑world Chinese online reviews. The authors introduce CodedLang, a new dataset comprising 7,744 Google Maps reviews (both restaurant and local business) collected from the United States, of which 900 reviews contain at least one instance of coded language. Each coded instance is annotated at the span level with one of seven categories that the authors define after an iterative, human‑in‑the‑loop mining process.

The seven categories are: (1) Ambiguous Homophone – a legitimate lexical phrase whose meaning is altered via a homophonic substitution (e.g., “炒鸡” → “超级”), (2) Non‑Lexical Homophone – a string of characters that does not form a valid word but sounds like a normal phrase (e.g., “灰常” → “非常”), (3) Phonetic Substitution – use of Pinyin, Roman letters, or Zhuyin to replace characters based on pronunciation (e.g., “tm” → “他妈”), (4) Emoji Substitution – emojis that stand for a word or phrase (e.g., “🌶️” → “辣鸡”), (5) Orthographic Substitution – characters that look similar to the target (e.g., “口乞” → “吃”), (6) Cross‑Lingual Phonetic Encoding – borrowing phonetic similarity from another language (e.g., “Newspaper eat” → “报吃” → “不好吃”), and (7) Cipher – strings that are essentially gibberish without a direct literal meaning.

Data collection began with a seed set of 23 examples harvested from news articles and social‑media posts that discussed coded reviews. The authors then built a dictionary of 100 initial spans, performed large‑scale string matching across 1.77 M restaurant reviews and 666 M local reviews, and manually validated candidates. They iteratively expanded the dictionary using validated spans and an external homophone dictionary derived from Weibo and Tieba, stopping when no new spans were found. The final corpus includes 184 unique coded spans, and inter‑annotator agreement is extremely high (Cohen’s κ = 0.99 for review‑level detection, 98 % span overlap).

Statistical analysis shows that homophone‑based strategies dominate (Ambiguous 292, Non‑Lexical 200), followed by phonetic substitution (184) and orthographic substitution (48). Cross‑lingual and cipher categories are rare (34 and 7 instances respectively).

The authors benchmark four contemporary large language models—GPT‑5‑mini, Gemini‑2.5‑Flash, DeepSeek‑V3.2, and Qwen2.5‑7B‑Instruct—on three tasks: (i) binary detection of coded language, (ii) multi‑label classification of the seven categories, and (iii) review rating prediction. All models are prompted with a definition of coded language and few‑shot examples drawn from the taxonomy. Detection performance peaks at an F1 of 0.77 (Gemini) and drops to 0.56 (Qwen). Classification results (shown for DeepSeek‑V3.2) reveal a pattern: precision is generally higher than recall, indicating models tend to miss many coded spans. Orthographic substitution achieves a recall of 0.94 but a precision of only 0.32, suggesting over‑generation. Cross‑lingual encoding attains perfect recall (1.00) but very low precision (0.25), reflecting many false positives. The cipher class, despite high precision and recall, suffers from insufficient data for reliable conclusions.

In the rating‑prediction task, models trained on raw reviews systematically overestimate scores for reviews containing coded language, because the negative sentiment is masked. The authors report a root‑mean‑square error increase of 0.45 when coded reviews are left untreated, underscoring the practical impact on downstream applications such as recommendation systems and reputation management.

A dedicated phonetic analysis compares coded spans with their decoded counterparts. The authors compute syllable length, tone‑matching rates, and consonant‑vowel alignment. Coded forms are on average 1.3 × shorter in syllable count, and tone agreement exceeds 85 % for non‑lexical homophones and phonetic substitutions. These findings suggest that phonological similarity, rather than visual similarity, is the primary driver of successful encoding in Chinese online discourse.

The paper’s contributions are threefold: (1) a publicly released, span‑annotated dataset of real‑world coded language; (2) a rigorously defined seven‑class taxonomy that captures the breadth of encoding strategies; (3) a comprehensive benchmark revealing that even state‑of‑the‑art LLMs struggle with detection, classification, and downstream sentiment inference when faced with coded language. The authors discuss limitations, notably the geographic focus on U.S. businesses and the relatively small number of cross‑lingual and cipher examples, and propose future work: expanding to other regions and languages, developing bidirectional “code‑decode” models, integrating phonetic features into LLMs, and building lightweight real‑time detectors for moderation platforms.

Overall, the study highlights coded language as a critical blind spot for current NLP pipelines and provides concrete resources and analyses to stimulate research toward more culturally and linguistically aware language understanding systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment