Demystifying Multi-Agent Debate: The Role of Confidence and Diversity

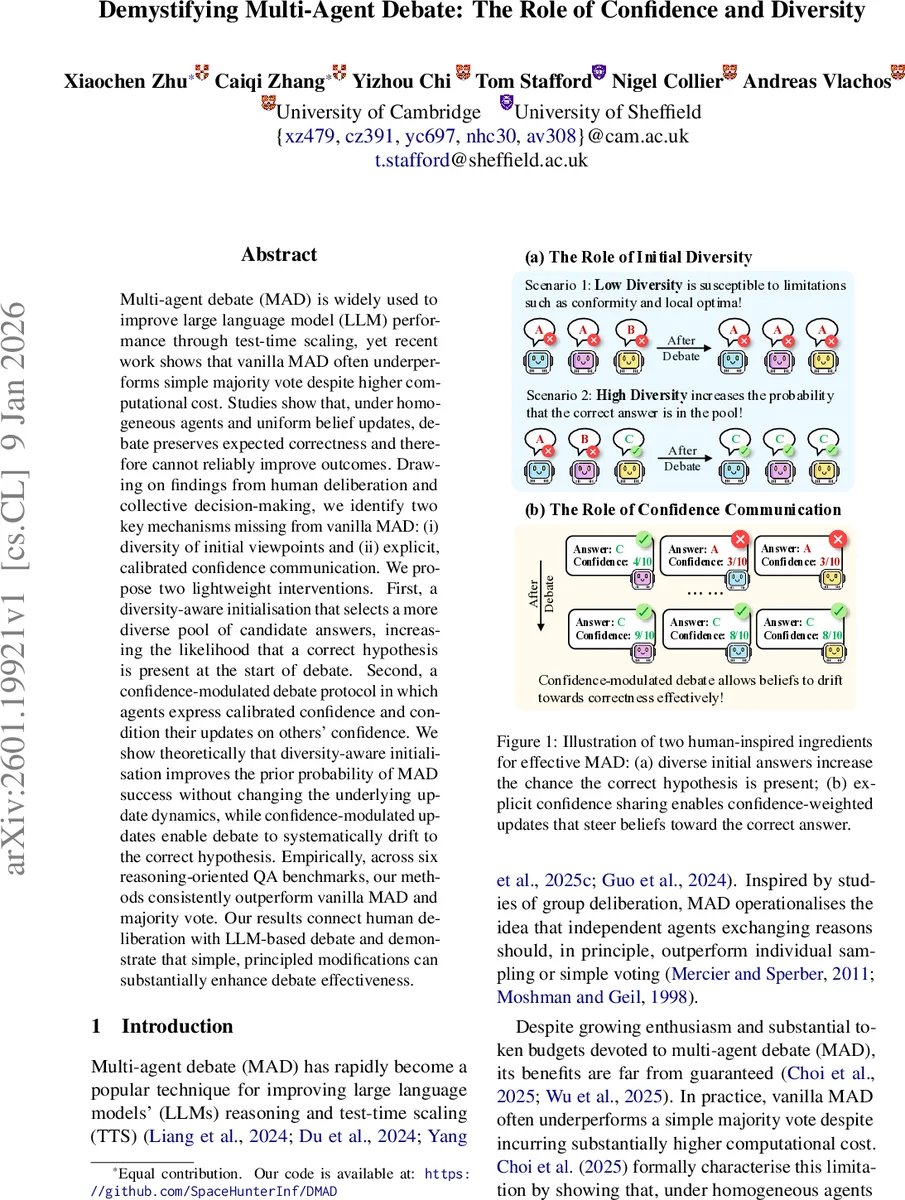

Multi-agent debate (MAD) is widely used to improve large language model (LLM) performance through test-time scaling, yet recent work shows that vanilla MAD often underperforms simple majority vote despite higher computational cost. Studies show that, under homogeneous agents and uniform belief updates, debate preserves expected correctness and therefore cannot reliably improve outcomes. Drawing on findings from human deliberation and collective decision-making, we identify two key mechanisms missing from vanilla MAD: (i) diversity of initial viewpoints and (ii) explicit, calibrated confidence communication. We propose two lightweight interventions. First, a diversity-aware initialisation that selects a more diverse pool of candidate answers, increasing the likelihood that a correct hypothesis is present at the start of debate. Second, a confidence-modulated debate protocol in which agents express calibrated confidence and condition their updates on others’ confidence. We show theoretically that diversity-aware initialisation improves the prior probability of MAD success without changing the underlying update dynamics, while confidence-modulated updates enable debate to systematically drift to the correct hypothesis. Empirically, across six reasoning-oriented QA benchmarks, our methods consistently outperform vanilla MAD and majority vote. Our results connect human deliberation with LLM-based debate and demonstrate that simple, principled modifications can substantially enhance debate effectiveness.

💡 Research Summary

The paper investigates why Multi‑Agent Debate (MAD), a popular test‑time scaling technique for large language models (LLMs), often fails to outperform a simple majority vote despite its higher computational cost. Building on recent theoretical work (Choi et al., 2025) that shows homogeneous agents with uniform belief updates produce a martingale over belief trajectories—meaning the expected correctness does not improve over rounds—the authors identify two human‑deliberation mechanisms missing from standard MAD: (i) diversity of initial viewpoints and (ii) explicit, calibrated confidence communication.

To address these gaps, they propose two lightweight, training‑free interventions. The first, Diversity‑Aware Initialization, samples a larger pool of candidate answers (N_cand > N) and selects N of them to start the debate by maximizing the number of distinct answers. A greedy marginal‑gain algorithm is used, incurring only extra sampling cost but no model retraining. This increases the prior probability that at least one correct hypothesis is present in the initial debate state.

The second intervention, Confidence‑Modulated Debate, extends each agent’s output at every round to a pair (answer, confidence score) where confidence is a discrete integer from 0 to 10. Agents receive the full set of peers’ (answer, confidence) tuples and use the confidence scores to weight the influence of others when revising their own answer. Because LLMs are known to be over‑confident, the authors train agents to produce calibrated confidence via reinforcement learning: the reward encourages high confidence when the answer is correct and low confidence otherwise. This calibrated, shared confidence breaks the martingale limitation, allowing belief trajectories to drift toward the correct answer.

Theoretical analysis shows that diversity‑aware initialization raises the prior success probability without altering the update dynamics, while confidence‑weighted updates provide a formal guarantee that the expected correctness can increase over rounds.

Empirically, the authors evaluate their methods on six reasoning‑oriented QA benchmarks (GSM‑8K, MATH, ARC‑Easy, ARC‑Challenge, OpenBookQA, BoolQ). Baselines include single‑model sampling, majority vote, and vanilla MAD. Results demonstrate that (1) diversity‑aware initialization alone improves accuracy by ~1.5 percentage points on average, (2) confidence‑modulated debate alone yields comparable gains, and (3) combining both yields the largest boost, typically 3–4 pp over vanilla MAD and majority vote. Gains are especially pronounced on harder datasets where correct answers are less likely to appear in a homogeneous initial pool. Ablation studies confirm that larger candidate pools and well‑calibrated confidence are essential; poorly calibrated confidence can hurt performance.

The discussion acknowledges that the proposed methods increase test‑time cost (more initial samples and RL‑based confidence training) and that the current theory assumes homogeneous agents. Future work is suggested on cost‑effective diversity extraction, extending the framework to heterogeneous ensembles, and exploring richer confidence signals beyond discrete scores.

In summary, by importing two key ingredients from human group deliberation—initial viewpoint diversity and explicit confidence sharing—the paper provides both theoretical justification and practical algorithms that substantially improve the effectiveness of multi‑agent debate for LLMs, closing the gap between human‑style reasoning and current AI debate protocols.

Comments & Academic Discussion

Loading comments...

Leave a Comment