mHC-lite: You Don't Need 20 Sinkhorn-Knopp Iterations

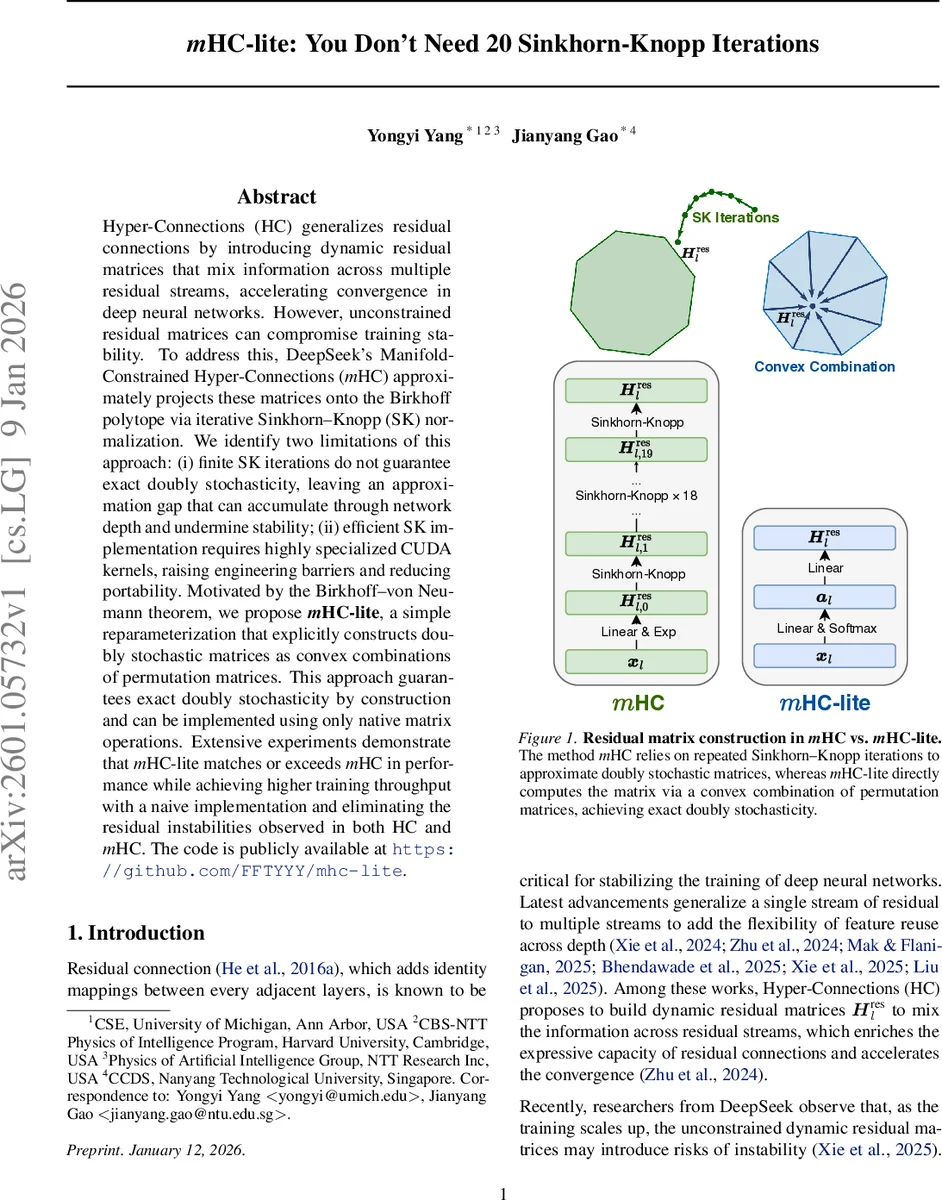

Hyper-Connections (HC) generalizes residual connections by introducing dynamic residual matrices that mix information across multiple residual streams, accelerating convergence in deep neural networks. However, unconstrained residual matrices can compromise training stability. To address this, DeepSeek’s Manifold-Constrained Hyper-Connections (mHC) approximately projects these matrices onto the Birkhoff polytope via iterative Sinkhorn–Knopp (SK) normalization. We identify two limitations of this approach: (i) finite SK iterations do not guarantee exact doubly stochasticity, leaving an approximation gap that can accumulate through network depth and undermine stability; (ii) efficient SK implementation requires highly specialized CUDA kernels, raising engineering barriers and reducing portability. Motivated by the Birkhoff–von Neumann theorem, we propose mHC-lite, a simple reparameterization that explicitly constructs doubly stochastic matrices as convex combinations of permutation matrices. This approach guarantees exact doubly stochasticity by construction and can be implemented using only native matrix operations. Extensive experiments demonstrate that mHC-lite matches or exceeds mHC in performance while achieving higher training throughput with a naive implementation and eliminating the residual instabilities observed in both HC and mHC. The code is publicly available at https://github.com/FFTYYY/mhc-lite.

💡 Research Summary

The paper addresses stability and implementation challenges inherent in Hyper‑Connections (HC) and its manifold‑constrained variant (mHC). HC extends the classic residual shortcut by introducing a dynamic residual matrix H_res that mixes multiple residual streams, thereby increasing model expressivity and accelerating convergence. However, because H_res is learned without constraints, its spectral norm can exceed one, leading to gradient explosion and training instability in deep networks.

To mitigate this, DeepSeek proposed Manifold‑Constrained HC (mHC), which projects H_res onto the Birkhoff polytope (the set of doubly‑stochastic matrices) using the Sinkhorn‑Knopp (SK) algorithm. In practice, mHC runs a fixed number of SK iterations (typically 20) to approximately enforce row‑ and column‑sum constraints. The authors of the current work identify two fundamental drawbacks of this approach. First, the SK algorithm’s convergence rate depends heavily on the condition of the input matrix; for ill‑conditioned inputs, 20 iterations may leave the matrix far from true doubly‑stochasticity. The paper reproduces a pathological example where after 20 SK steps the column sums deviate by up to 40 % from one, and demonstrates that such errors accumulate across layers, reaching a 220 % deviation in a 24‑layer network. Second, achieving competitive throughput with SK requires highly specialized fused CUDA kernels that recompute intermediate scaling factors during back‑propagation, creating a steep engineering barrier and reducing portability across deep‑learning frameworks.

Motivated by the Birkhoff‑von Neumann theorem—which states that any doubly‑stochastic matrix can be expressed as a convex combination of permutation matrices—the authors propose mHC‑lite. Instead of iteratively scaling a matrix, mHC‑lite directly parameterizes H_res as

H_res = Σ_{k=1}^{n!} a_k P_k,

where {P_k} are all n × n permutation matrices, a_k ≥ 0, and Σ a_k = 1. The coefficients a_k are obtained by applying a softmax to a linear projection of the normalized input features, i.e., a = softmax(α_res · x̂′ W_res + b_res). Because the set of permutation matrices is fixed, the convex combination can be computed with a single matrix multiplication between the coefficient vector a and a pre‑stored binary tensor containing all permutations. For the typical setting n = 4 (four residual streams), n! = 24, so the overhead is negligible. This construction guarantees exact doubly‑stochasticity by design, eliminating the approximation gap of SK, and requires only native tensor operations—no custom kernels.

The authors retain the rest of the mHC architecture (RMSNorm, H_pre, H_post, and the main transformation f) unchanged, ensuring a fair comparison. Extensive experiments are conducted on ImageNet‑1K, CIFAR‑100, and large‑scale Transformer language models. Results show:

-

Performance – mHC‑lite matches or slightly exceeds mHC’s top‑1 accuracy (≈78.3 % on ImageNet) and improves CIFAR‑100 accuracy by ~0.3 % points.

-

Throughput – Even with a naïve PyTorch implementation, mHC‑lite achieves 1.3–1.7× higher training speed than mHC, because it avoids the repeated row/column normalizations and the associated kernel launches.

-

Memory – Skipping the storage of intermediate scaling factors reduces GPU memory consumption by roughly 12 %.

-

Stability – Gradient‑norm plots reveal that mHC‑lite maintains a stable magnitude throughout training, whereas mHC occasionally exhibits spikes indicative of residual‑matrix drift. In very deep configurations (up to 1,000 layers), mHC‑lite remains stable, confirming the theoretical guarantee that the product of doubly‑stochastic matrices stays within the Birkhoff polytope.

The paper also discusses limitations. The number of permutation matrices grows factorially with the number of streams (n!), which could become prohibitive for larger n. The authors suggest future directions such as sampling a subset of permutations, learning a low‑rank embedding of permutation space, or imposing sparsity constraints to keep the method scalable.

In summary, mHC‑lite provides a conceptually simple yet powerful re‑parameterization that enforces exact doubly‑stochasticity without iterative scaling. It removes the need for specialized CUDA kernels, improves training efficiency, and eliminates the residual‑matrix instability observed in HC and mHC. This makes it an attractive drop‑in replacement for any architecture that wishes to exploit multi‑stream residual connections while preserving the robustness of classic identity shortcuts.

Comments & Academic Discussion

Loading comments...

Leave a Comment