MAGA-Bench: Machine-Augment-Generated Text via Alignment Detection Benchmark

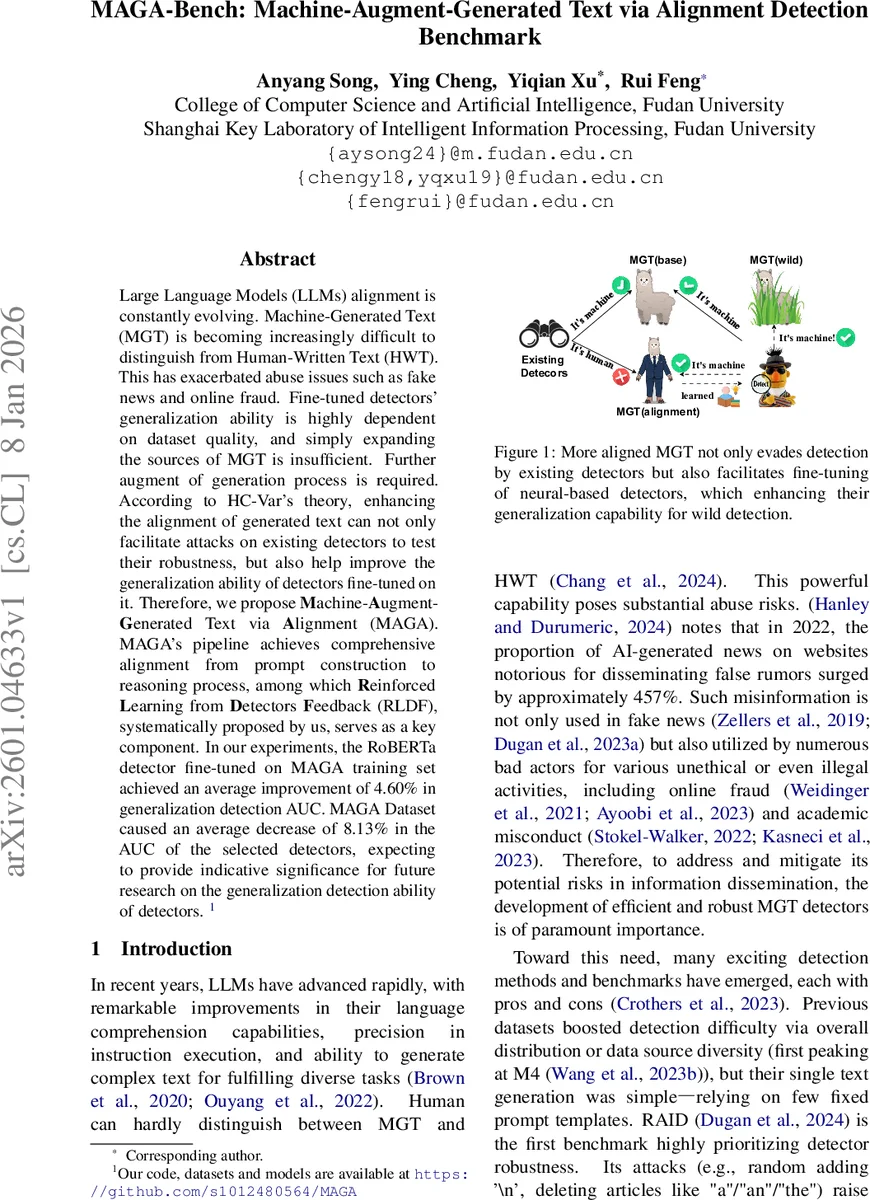

Large Language Models (LLMs) alignment is constantly evolving. Machine-Generated Text (MGT) is becoming increasingly difficult to distinguish from Human-Written Text (HWT). This has exacerbated abuse issues such as fake news and online fraud. Fine-tuned detectors’ generalization ability is highly dependent on dataset quality, and simply expanding the sources of MGT is insufficient. Further augment of generation process is required. According to HC-Var’s theory, enhancing the alignment of generated text can not only facilitate attacks on existing detectors to test their robustness, but also help improve the generalization ability of detectors fine-tuned on it. Therefore, we propose \textbf{M}achine-\textbf{A}ugment-\textbf{G}enerated Text via \textbf{A}lignment (MAGA). MAGA’s pipeline achieves comprehensive alignment from prompt construction to reasoning process, among which \textbf{R}einforced \textbf{L}earning from \textbf{D}etectors \textbf{F}eedback (RLDF), systematically proposed by us, serves as a key component. In our experiments, the RoBERTa detector fine-tuned on MAGA training set achieved an average improvement of 4.60% in generalization detection AUC. MAGA Dataset caused an average decrease of 8.13% in the AUC of the selected detectors, expecting to provide indicative significance for future research on the generalization detection ability of detectors.

💡 Research Summary

The paper addresses the growing difficulty of distinguishing machine‑generated text (MGT) from human‑written text (HWT) as large language models (LLMs) become increasingly capable. Existing detectors suffer from limited generalization, which is largely tied to the quality and diversity of the training data; merely adding more MGT sources does not suffice. Building on the HC‑Var theory, the authors argue that making MGT more “aligned” with HWT—i.e., sharing the same salient linguistic features—both challenges current detectors (useful for robustness testing) and provides better training material for fine‑tuning detectors, thereby improving their out‑of‑distribution performance.

To this end, they introduce MAGA‑Bench (Machine‑Augment‑Generated Text via Alignment), a benchmark that systematically augments the generation pipeline with four complementary alignment techniques:

- Role‑Playing – concise, coarse‑grained system‑role prompts that steer the LLM toward a human‑like persona without the brittleness of overly detailed role descriptions.

- BPO (Prompt‑Optimization) – a dedicated model that appends alignment‑enhancing information to the original prompt, ensuring the generated output stays closer to human‑written characteristics.

- Self‑Refine – a reasoning‑process optimization where the LLM first generates a draft, then critiques its own output and refines it, improving coherence and reducing obvious machine artifacts.

- RLDF (Reinforced Learning from Detectors Feedback) – the core contribution. Existing detectors (primarily RoBERTa‑based) are used as reward models (RMs). The LLM is fine‑tuned via reinforcement learning (GRPO) to produce text that is harder for the detector to flag and more human‑aligned. RLDF is split into two cross‑regularization variants:

- RLDF‑CD (Cross‑Domain) – detectors are fine‑tuned on domain‑specific MGT, then cross‑applied to other domains for reward calculation, encouraging domain‑agnostic alignment.

- RLDF‑CM (Cross‑Model) – detectors are fine‑tuned on model‑specific MGT and then cross‑applied across models, mitigating over‑fitting to a single generator’s quirks.

The dataset construction proceeds as follows: 72 k human texts (titles included) are sampled uniformly from ten diverse domains (Reddit, Wikipedia, news, reviews, Q&A, etc.). For each human text, matching MGT is generated using twelve contemporary LLMs (GPT‑4o‑mini, Llama‑3.1‑8B, Gemini‑2.0‑flash, DeepSeek‑V3, Qwen‑3, Mistral‑8B, Hunyuan‑TurboS, etc.). Two parallel corpora are produced:

- MGB (Machine‑Generated‑Base) – generated with the original, unaugmented prompt template.

- MAGA – generated through the full alignment pipeline (role‑playing + BPO + RLDF + Self‑Refine).

Both corpora contain 936 k entries (60 k training, 12 k validation per domain), yielding a 1:1 pairing of HWT and MGT. The authors emphasize that MAGA is the only publicly available benchmark that simultaneously offers multi‑domain, multi‑generator, multilingual, adversarial, and multi‑sampling‑parameter coverage while also applying alignment augmentation.

Evaluation: Ten detectors selected from the RAID suite (excluding costly commercial models) are evaluated on both MGB and MAGA. Metrics include AUC, ACC, TPR, TNR, and AUC@FPR=5 %. Results show:

- When detectors are attacked with MAGA data (i.e., applied without retraining), their average AUC drops by 8.13 %, confirming that MAGA‑generated text successfully evades existing systems.

- When a RoBERTa detector is fine‑tuned on MAGA training data, its average AUC on four external benchmark datasets (SemEval‑2024‑M4 test set, M4, RAID, etc.) improves by 4.60 % over a model fine‑tuned on MGB. Gains are especially pronounced for R‑B GPT2, R‑L GPT2, and R‑B CGPT detectors (≈5‑6 % absolute increase).

Table 1 compares MAGA‑Bench to prior datasets (M4, RAID, RealDet, etc.) across dimensions such as domain coverage, model coverage, multilinguality, adversarial attacks, sampling diversity, and alignment augmentation. MAGA scores “✓” in all categories, highlighting its comprehensiveness.

The authors discuss practical challenges of RLDF: using a detector as a reward model can lead to over‑fitting, as detectors quickly achieve near‑perfect training accuracy and rely on spurious shortcuts. To mitigate this, they employ cross‑domain and cross‑model reward signals, and limit RLDF to a single criticism‑refinement round, which empirically yields stable improvements.

Limitations & Future Work: The study relies heavily on automatic metrics; human evaluation of perceived naturalness and alignment is absent. RLDF’s computational cost is non‑trivial, especially when scaling to larger LLMs. The authors suggest extending RLDF to multi‑round iterations, incorporating more languages and cultural contexts, and exploring joint training of detectors and generators.

Conclusion: MAGA‑Bench demonstrates that alignment‑focused augmentation can simultaneously produce harder‑to‑detect MGT (useful for robustness testing) and higher‑quality training data that boosts detector generalization. The pipeline—particularly the novel RLDF mechanism—offers a promising direction for the co‑evolution of LLM generation and detection technologies.

Comments & Academic Discussion

Loading comments...

Leave a Comment