MMErroR: A Benchmark for Erroneous Reasoning in Vision-Language Models

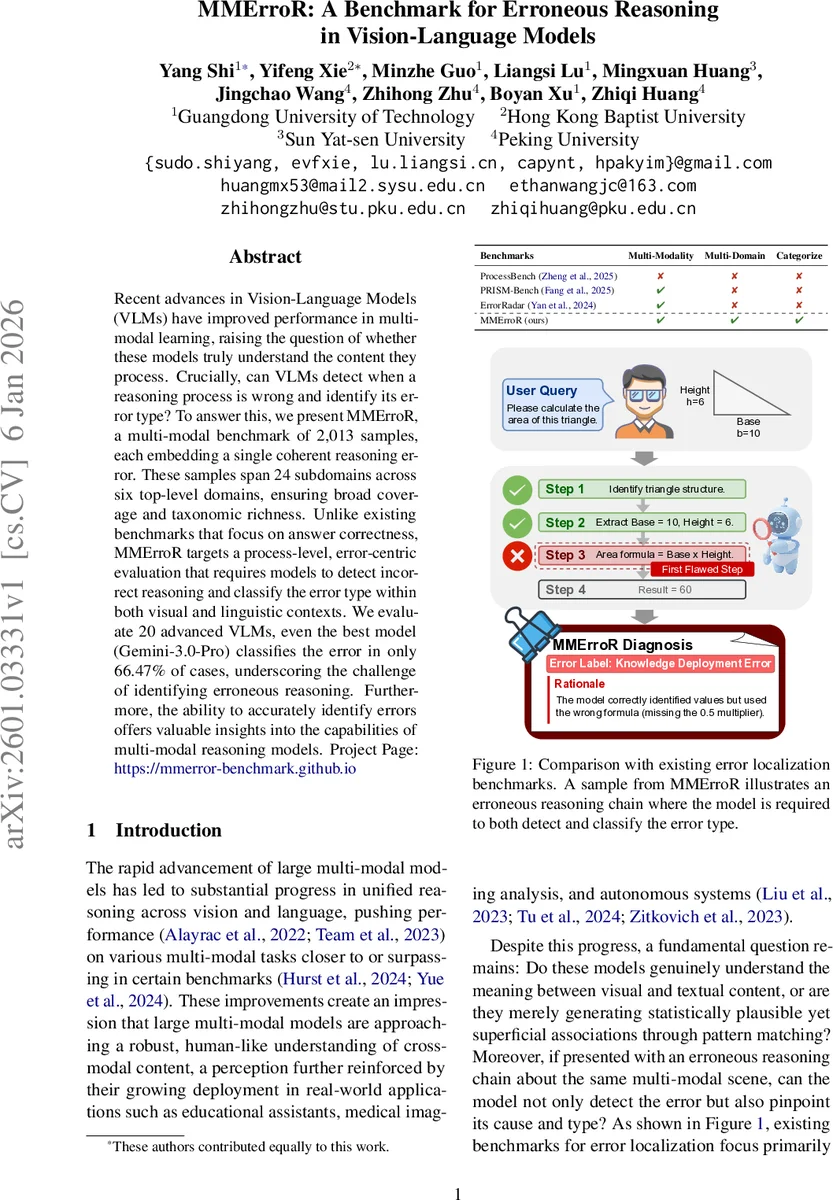

Recent advances in Vision-Language Models (VLMs) have improved performance in multi-modal learning, raising the question of whether these models truly understand the content they process. Crucially, can VLMs detect when a reasoning process is wrong and identify its error type? To answer this, we present MMErroR, a multi-modal benchmark of 2,013 samples, each embedding a single coherent reasoning error. These samples span 24 subdomains across six top-level domains, ensuring broad coverage and taxonomic richness. Unlike existing benchmarks that focus on answer correctness, MMErroR targets a process-level, error-centric evaluation that requires models to detect incorrect reasoning and classify the error type within both visual and linguistic contexts. We evaluate 20 advanced VLMs, even the best model (Gemini-3.0-Pro) classifies the error in only 66.47% of cases, underscoring the challenge of identifying erroneous reasoning. Furthermore, the ability to accurately identify errors offers valuable insights into the capabilities of multi-modal reasoning models. Project Page: https://mmerror-benchmark.github.io

💡 Research Summary

The paper introduces MMErroR, a novel benchmark designed to evaluate Vision‑Language Models (VLMs) on their ability to detect and classify erroneous reasoning steps in multimodal contexts. While recent VLMs have achieved impressive performance on tasks that require generating correct answers, there has been little work on assessing whether these models can recognize when their own reasoning is flawed and pinpoint the nature of the flaw. MMErroR fills this gap by providing 2,013 carefully curated samples, each consisting of an image, a question, and a multi‑step chain‑of‑thought (CoT) that contains exactly one deliberately injected error.

Dataset construction draws from established multimodal corpora (MMMU, MathVista, ScienceQA, AI2D, etc.) to ensure diverse domains. A stratified sampling strategy balances six top‑level domains (Physics & Engineering, Data & Analytics, Mathematics & Logic, Earth & Environment, Biology & Healthcare, Chemistry & Materials). After filtering for complexity, GPT‑5 is used to inject a single error into each CoT, constrained to one of four taxonomic categories: Visual Perception Error (VPE), Knowledge Deployment Error (KDE), Question Comprehension Error (QCE), and Reasoning Error (RE). Human verification proceeds in three rounds with 20 domain experts, achieving a Cohen’s κ of 0.794 and a final agreement of 97.19 %. An additional scoring phase on coherence, step clarity, error localizability, and semantic consistency further prunes the set to the final 2,013 high‑quality instances.

Evaluation protocol defines two complementary tasks. (1) Error‑Type Classification (ETC) assumes the presence of an error and asks the model to select the correct error type from the four‑class taxonomy. (2) Error‑Presence Detection (EPD) first requires the model to decide whether any error exists; if it answers “yes,” it may then provide the error type. This separation lets researchers measure pure detection ability versus full diagnostic capability.

Experimental setup evaluates 20 state‑of‑the‑art VLMs, split into “thinking‑less” (direct response) models such as GPT‑4o mini, Qwen‑VL‑Max, LLaMA‑4‑Maverick, and “thinking‑enabled” models that generate explicit reasoning, including the Gemini series, Claude‑4‑Sonnet, GPT‑5.2, Grok‑4, and Qwen‑VL‑Thinking. Baselines include random choice and human experts (low and high proficiency).

Results show that even the best model, Gemini‑3.0‑Pro, attains only 66.67 % accuracy on ETC and roughly 70 % on EPD, far below human expert performance (>90 %). Knowledge Deployment Errors dominate the dataset (48.39 % of samples) and are the hardest for models, with accuracies hovering around 55 %. Visual Perception Errors are relatively easier (~70 % accuracy), while Question Comprehension and Reasoning Errors remain challenging (~60 %). The gap between “thinking‑enabled” and “thinking‑less” models is modest, indicating that simply prompting models to reason does not automatically confer robust self‑diagnostic abilities.

Analysis highlights three key insights: (i) VLMs excel at surface‑level perception‑language alignment but lack meta‑cognitive mechanisms to audit their own inference chains; (ii) error‑type performance differentials expose specific weaknesses—e.g., insufficient grounding of external knowledge and limited question‑parsing fidelity; (iii) the benchmark’s fine‑grained taxonomy enables targeted diagnostics, offering a roadmap for future model improvements such as dedicated error‑detection modules, self‑correction loops, or hybrid symbolic‑neural architectures.

Conclusion positions MMErroR as the first large‑scale, process‑oriented benchmark for multimodal error detection and classification. By quantifying VLMs’ introspective capabilities across diverse domains, the work not only reveals a substantial reliability gap between current models and human reasoning but also provides a concrete evaluation framework to drive the next generation of trustworthy, self‑aware multimodal AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment