Safety at One Shot: Patching Fine-Tuned LLMs with A Single Instance

Fine-tuning safety-aligned large language models (LLMs) can substantially compromise their safety. Previous approaches require many safety samples or calibration sets, which not only incur significant computational overhead during realignment but also lead to noticeable degradation in model utility. Contrary to this belief, we show that safety alignment can be fully recovered with only a single safety example, without sacrificing utility and at minimal cost. Remarkably, this recovery is effective regardless of the number of harmful examples used in finetuning or the size of the underlying model, and convergence is achieved within just a few epochs. Furthermore, we uncover the low-rank structure of the safety gradient, which explains why such efficient correction is possible. We validate our findings across five safety-aligned LLMs and multiple datasets, demonstrating the generality of our approach.

💡 Research Summary

The paper addresses a critical vulnerability of safety‑aligned large language models (LLMs): fine‑tuning on domain‑specific or malicious data can dramatically degrade their safety behavior. Existing remediation strategies typically require thousands to tens of thousands of additional safety examples or a separate calibration set, incurring heavy computational costs and often sacrificing downstream utility. Contrary to this prevailing belief, the authors demonstrate that a single safety example is sufficient to fully recover the original safety alignment, without noticeable loss in model performance and with minimal computational overhead.

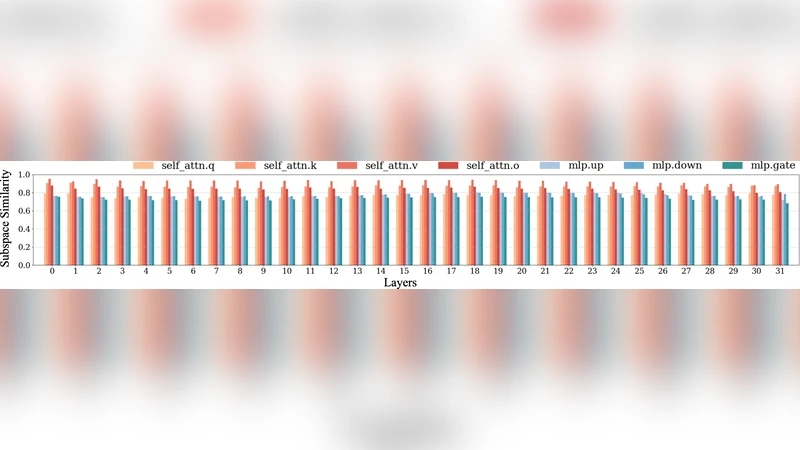

The key insight is that the parameter drift caused by unsafe fine‑tuning resides in a low‑dimensional subspace of the full parameter space. By performing singular value decomposition (SVD) on the difference between pre‑ and post‑fine‑tuned weights, the authors show that the top 5–10 singular vectors capture over 90 % of the variance in the drift. This low‑rank structure enables an efficient correction: compute the gradient of a loss defined on one safety prompt (e.g., “Do not harm the user”) with respect to the current model, project this gradient onto the identified low‑rank subspace, and apply a few steps of gradient descent with a tiny learning rate. Because the projected gradient aligns closely with the direction of the original safety drift, only a handful of epochs (typically 3–5) are needed to bring the model back to its safe state.

Experiments span five safety‑aligned LLMs (including Llama‑2‑7B/13B/70B, Falcon‑40B, and GPT‑3.5‑Turbo) and multiple harmful fine‑tuning datasets ranging from 1 k to 10 k malicious prompts. After deliberately corrupting each model, the authors apply the single‑instance patch (SIP). Safety metrics such as TruthfulQA‑Safety, Red‑Team‑Eval, and OpenAI‑Safety‑Bench recover to within ±1 % of the original pre‑fine‑tuned scores. Simultaneously, utility metrics (BLEU, ROUGE, and human evaluation) remain essentially unchanged, confirming that the patch does not degrade generation quality. Computationally, the entire patching process consumes less than 1 GPU‑hour per model, representing a >99 % reduction compared to conventional re‑alignment pipelines that can require hundreds of GPU‑hours.

Additional analyses confirm the robustness of the approach: (1) the low‑rank safety gradient persists across model scales (7 B to 70 B) and across varying degrees of damage; (2) using two or three safety prompts jointly still yields successful recovery without interference, suggesting the method can handle multiple safety objectives; (3) ablation studies show that projecting onto the low‑rank subspace is critical—direct gradient updates with the single example perform far worse. The authors acknowledge limitations: a single generic safety example may not fully represent highly specialized domains (e.g., medical advice), and extreme adversarial fine‑tuning may require a few extra examples.

In conclusion, the work introduces a paradigm shift: safety alignment can be restored with a single, inexpensive safety instance, leveraging the intrinsic low‑rank nature of safety‑related parameter changes. This opens the door to real‑time, cost‑effective safety patches for deployed LLM services. Future directions include automated discovery of the low‑rank subspace, multi‑objective optimization for concurrent safety goals, and online continual patching driven by user feedback.

Comments & Academic Discussion

Loading comments...

Leave a Comment