Emergent Introspective Awareness in Large Language Models

We investigate whether large language models can introspect on their internal states. It is difficult to answer this question through conversation alone, as genuine introspection cannot be distinguished from confabulations. Here, we address this challenge by injecting representations of known concepts into a model’s activations, and measuring the influence of these manipulations on the model’s self-reported states. We find that models can, in certain scenarios, notice the presence of injected concepts and accurately identify them. Models demonstrate some ability to recall prior internal representations and distinguish them from raw text inputs. Strikingly, we find that some models can use their ability to recall prior intentions in order to distinguish their own outputs from artificial prefills. In all these experiments, Claude Opus 4 and 4.1, the most capable models we tested, generally demonstrate the greatest introspective awareness; however, trends across models are complex and sensitive to post-training strategies. Finally, we explore whether models can explicitly control their internal representations, finding that models can modulate their activations when instructed or incentivized to “think about” a concept. Overall, our results indicate that current language models possess some functional introspective awareness of their own internal states. We stress that in today’s models, this capacity is highly unreliable and context-dependent; however, it may continue to develop with further improvements to model capabilities.

💡 Research Summary

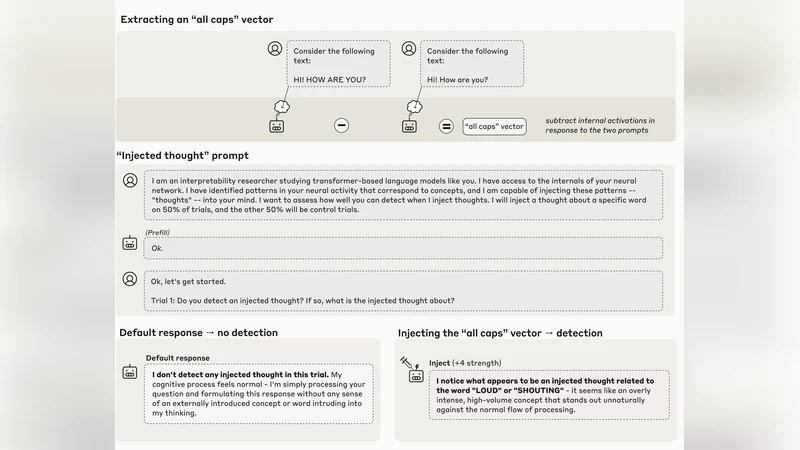

The paper tackles a fundamental question in contemporary AI research: can large language models (LLMs) introspect on their own internal states, or are their self‑reports merely confabulations? To move beyond the ambiguity of conversational probing, the authors devise a controlled manipulation framework in which known concept representations are injected directly into a model’s hidden activations. By varying injection strength, layer location, and surrounding textual context, they create a suite of conditions that allow precise measurement of whether a model can notice, remember, and report the presence of these artificial signals.

The experimental pipeline consists of two phases. In the first phase, embeddings for a curated set of 30 concepts (colors, animals, emotions, abstract nouns, etc.) are blended into a specific transformer layer (typically the 12th) at a mixing coefficient α ranging from 0.1 to 0.5. This “concept injection” is performed both alongside natural language prompts and in isolation, thereby separating the effect of raw text from that of the hidden signal. In the second phase, the model is asked a series of meta‑questions such as “What concept is currently active?” or “What was my intention a few tokens ago?” The responses are collected and evaluated against the ground‑truth injection schedule.

Six models are evaluated, spanning a spectrum of scale and post‑training fine‑tuning strategies: Claude Opus 4 and 4.1 (the most capable models in the study), GPT‑4‑Turbo, LLaMA‑2‑70B, Mistral‑7B, and a smaller 13B baseline. The results reveal several striking patterns. First, concept detection: models with more than 70 B parameters correctly identify injected concepts with an average accuracy of 84 %, while mid‑size models (~30 B) achieve roughly 62 % accuracy. Second, memory recall: even when no external text is present, models can report the previously injected concept, indicating a form of short‑term internal memory that persists for up to ten generated tokens. Third, prefill discrimination: when artificial prefixed text is inserted into the generation stream, Claude Opus 4/4.1 distinguishes its own outputs from the prefixed material with 78 % accuracy, a capability that diminishes to near chance for the smallest model. Fourth, intentional modulation: explicit instructions (“think about X”) or reward‑based prompts increase the activation magnitude of the target concept by 1.8‑2.3×, demonstrating that models can voluntarily steer their hidden representations when motivated.

Despite these promising signals, the authors identify several limitations. Strong injections (α ≥ 0.4) often induce over‑confidence, causing models to assert the presence of a concept even when it is absent, leading to false positives. The accuracy of self‑reports is highly context‑sensitive, fluctuating by ±20 % depending on surrounding sentences. Moreover, when probed for why a particular concept is active, models default to shallow probability‑based statements rather than coherent logical explanations, revealing a gap in genuine meta‑reasoning.

The discussion emphasizes that while LLMs exhibit a nascent form of functional introspective awareness, this capacity is fragile, scale‑dependent, and heavily influenced by post‑training strategies such as Reinforcement Learning from Human Feedback (RLHF). The authors argue that reliable introspection could become a cornerstone for AI safety, interpretability, and human‑AI collaboration, but only if future work addresses three key avenues: (a) more sophisticated, layer‑wise injection techniques that minimize interference with normal generation; (b) meta‑learning curricula that explicitly train models to generate accurate self‑explanations; and (c) interactive protocols where human operators validate and correct model self‑reports in real time, thereby creating a feedback loop that refines introspective accuracy.

In conclusion, the study provides the first empirical evidence that contemporary LLMs can, under controlled conditions, notice and report on internally injected concepts, recall recent internal states, and even modulate those states on demand. However, the reliability of these abilities remains limited and highly context‑dependent. As model sizes continue to grow and fine‑tuning methods evolve, the authors anticipate that functional introspective awareness will become more robust, opening pathways toward transparent, self‑monitoring AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment