Structured Decomposition for LLM Reasoning: Cross-Domain Validation and Semantic Web Integration

Rule-based reasoning over natural language input arises in domains where decisions must be auditable and justifiable: clinical protocols specify eligibility criteria in prose, evidence rules define admissibility through textual conditions, and scientific standards dictate methodological requirements. Applying rules to such inputs demands both interpretive flexibility and formal guarantees. Large language models (LLMs) provide flexibility but cannot ensure consistent rule application; symbolic systems provide guarantees but require structured input. This paper presents an integration pattern that combines these strengths: LLMs serve as ontology population engines, translating unstructured text into ABox assertions according to expert-authored TBox specifications, while SWRL-based reasoners apply rules with deterministic guarantees. The framework decomposes reasoning into entity identification, assertion extraction, and symbolic verification, with task definitions grounded in OWL 2 ontologies. Experiments across three domains (legal hearsay determination, scientific method-task application, clinical trial eligibility) and eleven language models validate the approach. Structured decomposition achieves statistically significant improvements over few-shot prompting in aggregate, with gains observed across all three domains. An ablation study confirms that symbolic verification provides substantial benefit beyond structured prompting alone. The populated ABox integrates with standard semantic web tooling for inspection and querying, positioning the framework for richer inference patterns that simpler formalisms cannot express.

💡 Research Summary

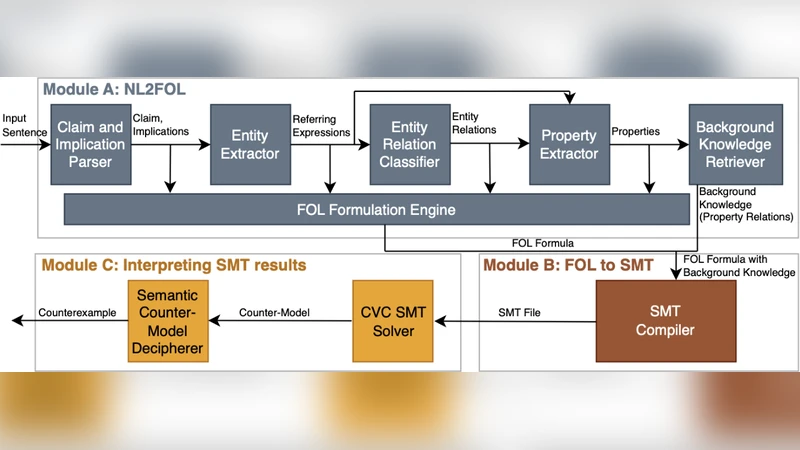

The paper introduces a hybrid reasoning framework that combines the natural-language flexibility of large language models (LLMs) with the deterministic guarantees of symbolic Semantic Web technologies. The core idea is to decompose the reasoning task into three well-defined stages: (1) Entity Identification, in which an LLM, prompted with ontology-aware templates, locates and labels key concepts in unstructured text according to a pre-specified OWL 2 TBox; (2) Assertion Extraction, in which the identified entities are transformed into ABox assertions (triples) expressed in OWL 2 DL, with the model generating multiple candidates and confidence scores; and (3) Symbolic Verification, in which a SWRL-based reasoner applies expert-authored rules to the populated ABox, delivering deterministic, auditable conclusions.
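One way to keep stage 2 output within the ontology is to check each extracted assertion against the TBox's property signatures before any rule fires. The Python sketch below is illustrative and not taken from the paper: the TBox fragment (`Statement`, `Person`, `madeBy`, `madeOutOfCourt`, `offeredToProveTruth`) and the `validate` helper are hypothetical. The check is also closed-world, so it is closer to SHACL-style validation than to OWL semantics, where a domain or range axiom would infer class membership rather than reject an assertion.

```python
from dataclasses import dataclass

# Hypothetical TBox fragment: property -> (domain class, range).
# A range of "xsd:boolean" means the object must be a boolean literal;
# any other range names a class the object individual must belong to.
TBOX_PROPERTIES = {
    "madeBy": ("Statement", "Person"),
    "madeOutOfCourt": ("Statement", "xsd:boolean"),
    "offeredToProveTruth": ("Statement", "xsd:boolean"),
}


@dataclass(frozen=True)
class Assertion:
    subject: str
    predicate: str
    obj: object  # individual name (str) or literal value (bool)


def validate(entities: dict, assertions: list) -> tuple:
    """Split LLM-extracted assertions into TBox-conformant ones and error messages.

    `entities` maps each individual found in stage 1 to its class.
    Closed-world check: a subject or object without an explicit class is rejected.
    """
    accepted, errors = [], []
    for a in assertions:
        if a.predicate not in TBOX_PROPERTIES:
            errors.append(f"unknown property {a.predicate!r}")
            continue
        domain, rng = TBOX_PROPERTIES[a.predicate]
        if entities.get(a.subject) != domain:
            errors.append(f"{a.subject} is not a {domain}")
            continue
        if rng == "xsd:boolean":
            ok = isinstance(a.obj, bool)
        else:
            ok = isinstance(a.obj, str) and entities.get(a.obj) == rng
        if not ok:
            errors.append(f"{a.obj!r} violates range {rng} of {a.predicate}")
            continue
        accepted.append(a)
    return accepted, errors


# Hypothetical stage 1 and stage 2 outputs for a single passage.
entities = {"s1": "Statement", "alice": "Person"}
raw = [
    Assertion("s1", "madeBy", "alice"),
    Assertion("s1", "madeOutOfCourt", True),
    Assertion("s1", "offeredToProveTruth", "yes"),  # wrong datatype
    Assertion("alice", "madeOutOfCourt", False),    # domain violation
    Assertion("s1", "spokenOn", "monday"),          # not in the TBox
]
accepted, errors = validate(entities, raw)
```

Here only the first two assertions survive; the rejected ones come back as messages that could be fed into a re-prompt, though whether the paper does anything of the kind is not stated.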

To validate the approach, the authors conduct cross-domain experiments in three areas: legal hearsay determination, scientific method-task application, and clinical trial eligibility assessment. Eleven language models of varying size and pre-training data are evaluated using accuracy, precision, and recall. Structured decomposition outperforms standard few-shot prompting, with statistically significant gains in aggregate and improvements in every domain; the average accuracy gain across all domains is 7.3 percentage points. The most pronounced improvement appears in the clinical eligibility scenario, where accuracy rises by more than 12 percentage points.
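The reported gains are differences in accuracy expressed in percentage points, not relative percentages. A minimal sketch of the three metrics for a binary decision (for example, eligible versus not eligible), plus the percentage-point difference. The gold labels and predictions are invented toy values, not data from the paper, and returning 0.0 when precision or recall has an empty denominator is a convention assumed here.

```python
from typing import Sequence


def metrics(gold: Sequence[bool], pred: Sequence[bool]) -> dict:
    """Accuracy, precision, and recall, treating True as the positive class."""
    if len(gold) != len(pred) or not gold:
        raise ValueError("gold and pred must be non-empty and of equal length")
    tp = sum(1 for g, p in zip(gold, pred) if g and p)
    fp = sum(1 for g, p in zip(gold, pred) if not g and p)
    fn = sum(1 for g, p in zip(gold, pred) if g and not p)
    tn = len(gold) - tp - fp - fn
    return {
        "accuracy": (tp + tn) / len(gold),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


def pp_gain(baseline: float, treatment: float) -> float:
    """Difference of two proportions in percentage points."""
    return 100.0 * (treatment - baseline)


# Invented toy labels for eight cases.
gold     = [True, True, False, False, True, False, True, False]
few_shot = [True, False, False, True, True, False, False, False]
decomp   = [True, True, False, False, True, False, False, False]

few = metrics(gold, few_shot)   # accuracy 5/8
dec = metrics(gold, decomp)     # accuracy 7/8
gain = pp_gain(few["accuracy"], dec["accuracy"])
```

On these toy labels accuracy moves from 62.5% to 87.5%, a gain of 25 percentage points; expressed as a relative improvement the same change would be 40%, which is why the unit matters when comparing reported numbers.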

An ablation study isolates the contribution of the symbolic verification stage. With structured prompting alone (stages 1 and 2), performance improves modestly (≈3 percentage points), indicating that better-structured LLM output is beneficial on its own. The full pipeline, which adds SWRL verification, delivers the bulk of the gain, confirming that deterministic rule application is essential for reliable reasoning in high-stakes contexts.
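Symbolic verification means the final label is derived by rules from the extracted ABox instead of being read off the model's answer. A minimal sketch of that step as naive forward chaining over triples, under loud assumptions: the single hearsay rule is a hypothetical simplification of the legal doctrine, and the engine is a stand-in for a SWRL reasoner, not an implementation of SWRL or OWL semantics.

```python
# A term starting with "?" is a variable; everything else is a constant.
def is_var(term) -> bool:
    return isinstance(term, str) and term.startswith("?")


def match(pattern, fact, binding):
    """Extend `binding` so that `pattern` equals `fact`, or return None."""
    new = dict(binding)
    for p, f in zip(pattern, fact):
        if is_var(p):
            if p in new and new[p] != f:
                return None
            new[p] = f
        elif p != f:
            return None
    return new


def solve(body, facts, binding):
    """Yield every binding that satisfies all body atoms against `facts`."""
    if not body:
        yield binding
        return
    for fact in facts:
        extended = match(body[0], fact, binding)
        if extended is not None:
            yield from solve(body[1:], facts, extended)


def forward_chain(facts, rules):
    """Apply rules until no new triple is derived (a fixpoint)."""
    facts = set(facts)
    while True:
        new = set()
        for body, head in rules:
            for b in solve(body, facts, {}):
                derived = tuple(b[t] if is_var(t) else t for t in head)
                if derived not in facts:
                    new.add(derived)
        if not new:
            return facts
        facts |= new


# Hypothetical rule, in SWRL-like notation:
# Statement(?s) ^ madeOutOfCourt(?s, true) ^ offeredToProveTruth(?s, true) -> Hearsay(?s)
RULES = [
    (
        [("?s", "rdf:type", "Statement"),
         ("?s", "madeOutOfCourt", True),
         ("?s", "offeredToProveTruth", True)],
        ("?s", "rdf:type", "Hearsay"),
    ),
]

# Hypothetical ABox populated by stages 1 and 2.
abox = {
    ("s1", "rdf:type", "Statement"),
    ("s1", "madeOutOfCourt", True),
    ("s1", "offeredToProveTruth", True),
    ("s2", "rdf:type", "Statement"),
    ("s2", "madeOutOfCourt", True),
    ("s2", "offeredToProveTruth", False),
}
closure = forward_chain(abox, RULES)
hearsay = sorted(s for s, p, o in closure if p == "rdf:type" and o == "Hearsay")
```

Here `s1` is derived as `Hearsay` while `s2`, which is not offered to prove the truth, is not. The same ABox always yields the same conclusion, and each derived triple can be traced to the facts that matched the rule body, which is the auditability the verification stage is meant to provide.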

The generated ABox integrates with existing Semantic Web tooling such as Protégé and Apache Jena, enabling users to visualize, query (via SPARQL), and extend the knowledge base. This interoperability addresses a common limitation of pure LLM-based pipelines, whose outputs are often opaque and difficult to audit or reuse.
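Interoperability rests on writing the ABox in a standard RDF syntax. A minimal sketch that serializes assertions as N-Triples, a line-based W3C RDF format that toolkits such as Apache Jena can parse. The `http://example.org/hearsay#` namespace is hypothetical, and so is the convention that string objects are individuals or classes while bool and int objects are typed literals.

```python
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
XSD = "http://www.w3.org/2001/XMLSchema#"
EX = "http://example.org/hearsay#"  # hypothetical namespace for this sketch


def iri(name: str) -> str:
    """Expand 'rdf:type' or a local name into an N-Triples IRI term."""
    return f"<{RDF_TYPE}>" if name == "rdf:type" else f"<{EX}{name}>"


def literal(value) -> str:
    """Render a bool or int as a typed N-Triples literal."""
    if isinstance(value, bool):  # test bool first: bool is a subclass of int
        return f'"{str(value).lower()}"^^<{XSD}boolean>'
    if isinstance(value, int):
        return f'"{value}"^^<{XSD}integer>'
    raise TypeError(f"unsupported literal type: {type(value).__name__}")


def to_ntriples(abox) -> str:
    """Serialize (subject, predicate, object) tuples as N-Triples text.

    Convention of this sketch: string objects are IRIs (individuals or
    classes); bool and int objects are typed literals.
    """
    lines = []
    for s, p, o in sorted(abox, key=repr):  # deterministic output order
        obj = iri(o) if isinstance(o, str) else literal(o)
        lines.append(f"{iri(s)} {iri(p)} {obj} .")
    return "\n".join(lines) + "\n"


abox = {
    ("s1", "rdf:type", "Statement"),
    ("s1", "madeOutOfCourt", True),
    ("s1", "madeBy", "alice"),
}
doc = to_ntriples(abox)
print(doc, end="")
```

After loading the output into a SPARQL-capable store, a query such as `PREFIX ex: <http://example.org/hearsay#> SELECT ?s WHERE { ?s ex:madeBy ex:alice }` would return `ex:s1`.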

Key contributions of the work are: (i) a concrete prompting strategy that turns an LLM into an ontology-population engine; (ii) the coupling of this engine with a SWRL reasoner so that rules are applied deterministically and consistently; (iii) empirical evidence across three domains and eleven models that the combined approach yields statistically significant performance improvements; and (iv) a demonstration of practical integration with standard Semantic Web tooling, positioning the framework for richer inference patterns than simpler formalisms can express.

The authors conclude that combining LLMs with symbolic reasoning can satisfy both the flexibility and the rigor requirements of domains where decisions must be justifiable and traceable. Future directions include extending the framework to more complex rule constructs (e.g., non-monotonic reasoning, rule priorities), applying it to streaming data sources, and automating TBox evolution to accommodate emerging concepts without manual re-engineering.
