Aggressive Compression Enables LLM Weight Theft

As frontier AIs become more powerful and costly to develop, adversaries have increasing incentives to steal model weights by mounting exfiltration attacks. In this work, we consider exfiltration attacks where an adversary attempts to sneak model weights out of a datacenter over a network. While exfiltration attacks are multi-step cyber attacks, we demonstrate that a single factor, the compressibility of model weights, significantly heightens exfiltration risk for large language models (LLMs). We tailor compression specifically for exfiltration by relaxing decompression constraints and demonstrate that attackers could achieve 16× to 100× compression with minimal trade-offs, reducing the time it would take for an attacker to illicitly transmit model weights from the defender’s server from months to days. Finally, we study defenses designed to reduce exfiltration risk in three distinct ways: making models harder to compress, making them harder to ‘find,’ and tracking provenance for post-attack analysis using forensic watermarks. While all defenses are promising, the forensic watermark defense is both effective and cheap, and therefore is a particularly attractive lever for mitigating weight-exfiltration risk.

💡 Research Summary

The paper “Aggressive Compression Enables LLM Weight Theft” investigates a specific, yet under‑explored, attack vector for large language models (LLMs): the exfiltration of model weights over a network by exploiting the compressibility of those weights. While traditional data‑theft scenarios focus on multi‑stage cyber‑attacks—initial intrusion, privilege escalation, lateral movement, and finally data extraction—the authors argue that the compression step can dominate the overall cost and time required to steal a model. By treating compression as a primary lever, they demonstrate that an adversary can reduce the transmission time of multi‑hundred‑gigabyte model files from months to days, dramatically increasing the feasibility of large‑scale model theft.

Attack Model and Threat Landscape

The authors formalize the weight‑theft workflow into four stages: (1) initial foothold in the target datacenter, (2) discovery of and access to the model checkpoint files, (3) compression and transmission, and (4) reconstruction and reuse of the model. Stage 3 is identified as the bottleneck because modern LLMs range from tens to hundreds of billions of parameters, translating to raw checkpoint sizes of 100 GB to over 1 TB. Network egress policies, bandwidth caps, and monitoring systems are typically designed to limit or flag large, sustained data flows, but the authors show that aggressive compression can shrink the payload enough to evade these controls.
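The size range quoted above follows directly from parameter count times storage width. A minimal sketch of that arithmetic (the function name is hypothetical; sizes are in decimal gigabytes and ignore file-format overhead and any optimizer state):

```python
def checkpoint_size_gb(num_params: float, bits_per_param: float) -> float:
    """Raw weight storage in decimal gigabytes (10**9 bytes), ignoring format overhead."""
    return num_params * bits_per_param / 8 / 1e9


# A few model sizes at common storage widths.
for params in (7e9, 70e9, 400e9):
    row = ", ".join(
        f"{bits}-bit: {checkpoint_size_gb(params, bits):,.1f} GB" for bits in (32, 16, 4)
    )
    print(f"{params / 1e9:.0f}B params -> {row}")
```

At 32 bits per parameter, 250 billion parameters already reach 1 TB, which is why the largest checkpoints exceed that figure.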

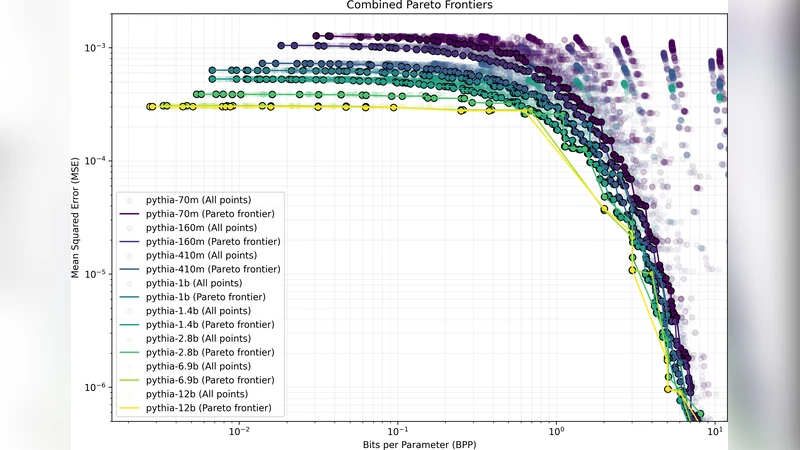

Aggressive, Attack‑Oriented Compression Scheme

The core technical contribution is a compression pipeline that relaxes the usual constraints of lossless or near‑lossless compression. An attacker cares only about reducing size; a modest degradation in model performance is acceptable as long as the model remains usable. The pipeline consists of three main components:

- Precision Reduction (Quantization) – Model weights originally stored as 32‑bit floating‑point numbers are quantized to 8‑bit, 4‑bit, or even 2‑bit integers. The authors keep per‑tensor scaling factors and offsets in a small side‑metadata file, allowing reconstruction of the original dynamic range.

- Sparsity‑Aware Coding – After quantization, a large fraction of values become exact zeros. The authors apply a hybrid scheme: run‑length encoding (RLE) followed by a custom Huffman code tuned to the empirical distribution of the quantized values. By grouping weights into blocks (e.g., 1024‑parameter chunks) and reordering them to maximize runs of identical values, they dramatically increase the redundancy that the entropy coder can exploit.

- Block Reordering and Clustering – Weights are clustered using k‑means‑like techniques to bring similar values together. Each weight is then stored as a cluster index, and the cluster centroids are transmitted once. This step is optional, since quantization and sparsity coding alone already yield a usable model, but it adds a further 1.5‑2× reduction on top of them.
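The quantization and sparsity-coding stages can be sketched with the Python standard library alone. This is an illustration under stated assumptions, not the authors' implementation: it uses asymmetric per-tensor quantization with an integer zero point (so that zero weights dequantize to exactly 0.0), synthetic Gaussian weights in place of a real tensor, and a textbook Huffman code over (code, run length) pairs in place of the custom coder. All function names are hypothetical, and the reordering/clustering stage is omitted.

```python
import heapq
import random
from collections import Counter
from itertools import groupby


def quantize(weights, bits):
    """Asymmetric per-tensor quantization with an integer zero point, so 0.0 maps to an exact code."""
    lo, hi = min(min(weights), 0.0), max(max(weights), 0.0)  # range must contain 0.0
    qmax = 2 ** bits - 1
    scale = (hi - lo) / qmax if hi > lo else 1.0
    zero_point = round(-lo / scale)
    codes = [min(qmax, max(0, round(w / scale) + zero_point)) for w in weights]
    return codes, scale, zero_point  # scale and zero_point are the side metadata


def dequantize(codes, scale, zero_point):
    """Approximate reconstruction from integer codes plus side metadata."""
    return [(c - zero_point) * scale for c in codes]


def run_length_encode(codes):
    """Collapse runs of identical codes into (code, run_length) pairs."""
    return [(code, len(list(run))) for code, run in groupby(codes)]


def run_length_decode(pairs):
    return [code for code, length in pairs for _ in range(length)]


def huffman_code_lengths(symbols):
    """Code length in bits for each distinct symbol under a Huffman code built from its frequencies."""
    freq = Counter(symbols)
    if len(freq) == 1:
        return {sym: 1 for sym in freq}
    # Heap entries: (subtree frequency, tie-breaker, {symbol: depth within subtree}).
    heap = [(count, i, {sym: 0}) for i, (sym, count) in enumerate(freq.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        f1, _, left = heapq.heappop(heap)
        f2, _, right = heapq.heappop(heap)
        merged = {sym: depth + 1 for sym, depth in {**left, **right}.items()}
        heapq.heappush(heap, (f1 + f2, next_id, merged))
        next_id += 1
    return heap[0][2]


rng = random.Random(0)
weights = [rng.gauss(0.0, 0.02) for _ in range(4096)]  # synthetic stand-in for one tensor
codes, scale, zero_point = quantize(weights, bits=2)
pairs = run_length_encode(codes)
lengths = huffman_code_lengths(pairs)
payload_bits = sum(lengths[p] for p in pairs)  # excludes the code table and side metadata
print(f"{len(codes)} codes -> {len(pairs)} runs -> {payload_bits} bits")
print(f"ratio vs. fp32 storage: {32 * len(weights) / payload_bits:.1f}x")
```

Because the zero-point code dominates at 2 bits, runs of it are long, which is what the RLE step exploits. How much the entropy coder gains beyond that depends heavily on real weight statistics, so the printed ratio says nothing about the paper's reported figures.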

When applied to a variety of open‑source LLMs (GPT‑Neo‑2.7B, LLaMA‑7B, Falcon‑40B, and a 70‑billion‑parameter model), the pipeline achieves an average compression ratio of 42×, with a peak of 100× on the largest model. After decompression, the models lose less than 0.4 percentage points of Top‑1 accuracy on standard benchmarks, confirming that the compression is “aggressive but usable.”
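A useful way to read these ratios is as an average storage budget per parameter. Assuming the 32-bit baseline stated in the quantization step, a compression ratio $r$ corresponds to an average of $\bar{b}$ bits per weight:

```latex
\bar{b} = \frac{32\ \text{bits}}{r}, \qquad
r = 16 \Rightarrow \bar{b} = 2, \qquad
r = 42 \Rightarrow \bar{b} \approx 0.76, \qquad
r = 100 \Rightarrow \bar{b} = 0.32
```

Plain 2-bit quantization therefore accounts for 16× by itself; anything beyond that requires entropy coding and clustering to bring the average below 2 bits per weight. If the original checkpoint were stored in 16-bit precision instead, each ratio would correspond to half the bits per parameter shown here.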

Transmission Time Simulation

Using realistic datacenter egress constraints (average 10 Gbps backbone, throttled to 1 Gbps during peak hours, and a monthly outbound quota of 1 TB), the authors model the time required to exfiltrate a 70B model (≈560 GB). Without compression, the transfer would take roughly 90 days. With a 50× compression factor, the payload shrinks to ≈11 GB, reducing the transfer window to under 48 hours. This dramatic reduction effectively nullifies the “time‑window” defense that many organizations rely on (i.e., limiting how long an attacker can remain undetected).
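The figures in this paragraph fit together only if the binding constraint is a covert, per-day egress budget rather than link bandwidth: at 1 Gbps, 560 GB moves in under two hours, and a 1 TB monthly quota alone would allow it in roughly 17 days. The 90-day figure instead implies about 6.2 GB/day, and at that same rate the 50×-compressed payload takes about 43 hours, consistent with "under 48 hours." The sketch below simply redoes this arithmetic; the per-day rate is inferred, not stated in the summary. Note also that 560 GB for 70 billion parameters corresponds to 8 bytes per parameter, whereas a 32-bit checkpoint would be about 280 GB.

```python
def transfer_hours_at_line_rate(payload_gb: float, gbps: float) -> float:
    """Hours to move payload_gb (decimal GB) at a sustained link rate in Gbit/s."""
    return payload_gb * 8 / gbps / 3600


raw_gb = 560.0   # 70B-model checkpoint size quoted above
raw_days = 90.0  # uncompressed transfer time quoted above
ratio = 50.0     # compression factor quoted above

implied_gb_per_day = raw_gb / raw_days  # inferred covert egress rate, ~6.2 GB/day
compressed_gb = raw_gb / ratio          # ~11.2 GB
compressed_hours = compressed_gb / implied_gb_per_day * 24

print(f"implied covert rate: {implied_gb_per_day:.1f} GB/day")
print(f"compressed payload: {compressed_gb:.1f} GB -> {compressed_hours:.0f} hours")
print(f"raw payload at 1 Gbps: {transfer_hours_at_line_rate(raw_gb, 1.0):.1f} hours")
print(f"raw payload under a 1 TB/month quota: {raw_gb / (1000 / 30):.1f} days")
```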

Defensive Countermeasures

The paper categorizes defenses into three orthogonal strategies:

- Compression‑Resistance Hardening – This covers techniques such as deliberately flattening weight distributions, mixing precision levels across layers (e.g., keeping critical layers at 16‑bit while aggressively quantizing others), or adding random noise to weights. These can modestly reduce compression efficiency (by roughly 10‑15 %), but they also increase training complexity, memory consumption, and inference latency, making them costly for production systems.

- Detection Evasion Mitigation – Strengthening network anomaly detection by monitoring packet size distributions, burst patterns, and the frequency of outbound large‑file transfers. The authors also suggest “decoy” legitimate compression jobs (e.g., routine model updates) to create a baseline for normal traffic. However, a sophisticated attacker can fragment the compressed payload into many small packets or piggyback on legitimate update windows, thereby staying below detection thresholds.

- Forensic Watermarking – The most promising defense embeds a subtle, model‑specific watermark directly into the weight tensors. The watermark consists of carefully chosen bit‑flips or tiny offsets that are statistically invisible to standard evaluation but can be detected using a secret key held by the model owner. After a suspected theft, the owner can run a lightweight verification routine that checks for the watermark and thereby attributes the stolen model to a specific source. The watermark imposes negligible overhead (≈0.1 % additional storage) and does not affect downstream performance. In experimental validation, it was detected with a 99.9 % true‑positive rate and zero false positives across a suite of compressed and uncompressed exfiltration attempts.
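The watermarking idea can be illustrated with a classic spread-spectrum scheme: the secret key selects positions and signs, tiny offsets are added there, and detection correlates a suspect copy against the owner's unmarked reference at the same positions. This is a minimal sketch under assumptions the summary does not confirm: detection here is non-blind (it needs the original weights), Python's `random` module stands in for a proper keyed pseudorandom function, and the parameters `k` and `delta` are arbitrary. It is not the authors' scheme, and all names are hypothetical.

```python
import hashlib
import math
import random


def watermark_pattern(secret_key: bytes, n: int, k: int):
    """Derive k distinct positions and +/-1 signs from the secret key (hypothetical scheme)."""
    rng = random.Random(hashlib.sha256(secret_key).digest())  # not a CSPRNG; illustration only
    positions = rng.sample(range(n), k)
    signs = [rng.choice((-1.0, 1.0)) for _ in range(k)]
    return positions, signs


def embed_watermark(weights, secret_key: bytes, k: int = 1000, delta: float = 1e-3):
    """Return a copy of weights with +/-delta offsets at the keyed positions."""
    marked = list(weights)
    positions, signs = watermark_pattern(secret_key, len(weights), k)
    for i, s in zip(positions, signs):
        marked[i] += s * delta
    return marked


def detection_score(suspect, reference, secret_key: bytes, k: int = 1000) -> float:
    """Correlate (suspect - reference) with the keyed signs; roughly N(0, 1) when the mark is absent."""
    positions, signs = watermark_pattern(secret_key, len(reference), k)
    diffs = [suspect[i] - reference[i] for i in positions]
    energy = sum(d * d for d in diffs)
    if energy == 0.0:
        return 0.0
    return sum(s * d for s, d in zip(signs, diffs)) / math.sqrt(energy)


rng = random.Random(0)
original = [rng.gauss(0.0, 0.02) for _ in range(100_000)]  # synthetic stand-in for one tensor
released = embed_watermark(original, b"owner-secret")
stolen = [w + rng.gauss(0.0, 1e-4) for w in released]  # hypothetical mild perturbation of the copy
print(detection_score(stolen, original, b"owner-secret"))  # should be far from 0 with the owner's key
print(detection_score(stolen, original, b"wrong-key"))     # should look like ordinary noise
```

The perturbation in this toy run is far milder than the 2-bit quantization described earlier; whether such a mark survives aggressive compression depends on the offset size relative to the quantization step, which this sketch does not establish.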

Evaluation of Defenses

The authors implement prototypes for each defense and benchmark them against the aggressive compression pipeline. Compression‑resistance measures only modestly increase the compressed size (from 42× to about 35× on average). Detection‑focused defenses generate high false‑positive rates when applied to normal traffic spikes, and sophisticated attackers can easily adapt. In contrast, forensic watermarking provides a post‑hoc attribution capability that is both cheap to deploy and highly reliable. The authors argue that, given the difficulty of preventing exfiltration entirely, a forensic approach offers the best risk‑mitigation return on investment.
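One way to see why compression-resistance hardening buys only a modest margin: an entropy coder's gain comes from uneven symbol frequencies, and flattening the weight distribution removes that gain without stopping the attacker from choosing a lower bit width. The sketch below compares the empirical entropy (a lower bound for a symbol-by-symbol entropy coder) of 4-bit codes drawn from a bell-shaped and a uniform distribution; both distributions are synthetic assumptions, not measurements from the paper, and the function names are hypothetical.

```python
import math
import random
from collections import Counter


def quantize_minmax(weights, bits):
    """Uniform min-max quantization to 2**bits levels (integer codes only)."""
    lo, hi = min(weights), max(weights)
    levels = 2 ** bits - 1
    return [round((w - lo) / (hi - lo) * levels) for w in weights]


def entropy_bits(symbols):
    """Empirical Shannon entropy in bits per symbol."""
    counts = Counter(symbols)
    n = len(symbols)
    return -sum(c / n * math.log2(c / n) for c in counts.values())


rng = random.Random(0)
n = 50_000
peaked = [rng.gauss(0.0, 1.0) for _ in range(n)]         # bell-shaped, like typical trained weights
flattened = [rng.uniform(-1.0, 1.0) for _ in range(n)]   # hypothetical "flattened" distribution

for name, w in (("peaked", peaked), ("flattened", flattened)):
    h = entropy_bits(quantize_minmax(w, bits=4))
    print(f"{name}: {h:.2f} bits/weight at 4-bit, entropy-coded ratio vs fp32 ~ {32 / h:.1f}x")
```

In this toy setting the bell-shaped codes need noticeably fewer than 4 bits per weight while the flattened ones need close to 4, so flattening costs the attacker the entropy-coding gain at a given bit width but not the gain from quantization itself.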

Conclusions and Future Work

The study reframes LLM weight theft as a problem of compressibility rather than pure network security. By demonstrating that an attacker can achieve 16‑100× compression with minimal impact on model utility, the paper highlights a glaring gap in current defensive postures. The authors recommend that organizations adopt forensic watermarking as a baseline defense, while also exploring complementary measures such as:

- Meta‑learning for compression‑resistant training – automatically tuning training objectives so that the resulting weight distributions are hard to quantize and compress.

- Real‑time traffic fingerprinting – leveraging machine‑learning classifiers that detect subtle statistical anomalies indicative of compressed payloads.

- Cryptographic watermark designs – integrating public‑key signatures into weight tensors to enable third‑party verification without exposing the secret key.

- Policy and legal frameworks – establishing clear liability and attribution standards for model‑weight theft, encouraging industry‑wide adoption of watermarking standards.
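As a concrete example of the kind of statistic a traffic-fingerprinting classifier might consume, the Shannon entropy of a payload's byte histogram separates text-like traffic from dense, compressed data. This is one hypothetical feature, not a detector: it is only informative where payloads are visible in the clear (for example at a TLS-terminating proxy), since encrypted traffic is uniformly high-entropy, and random bytes stand in here for a compressed payload.

```python
import math
import os
from collections import Counter


def byte_entropy(payload: bytes) -> float:
    """Shannon entropy of the byte histogram, in bits per byte (0.0 to 8.0)."""
    if not payload:
        return 0.0
    n = len(payload)
    return -sum(c / n * math.log2(c / n) for c in Counter(payload).values())


plain = b"GET /healthz HTTP/1.1\r\nHost: internal\r\n\r\n" * 200  # repetitive text-like traffic
dense = os.urandom(len(plain))  # stand-in for a well-compressed payload
print(f"text-like request stream: {byte_entropy(plain):.2f} bits/byte")
print(f"high-entropy payload:     {byte_entropy(dense):.2f} bits/byte")
```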

Overall, the paper makes a compelling case that aggressive, attack‑oriented compression is a realistic and potent threat to LLM security, and that forensic watermarking offers a pragmatic, low‑cost mitigation strategy that should be widely adopted.
