Improving Variational Autoencoder using Random Fourier Transformation: An Aviation Safety Anomaly Detection Case-Study

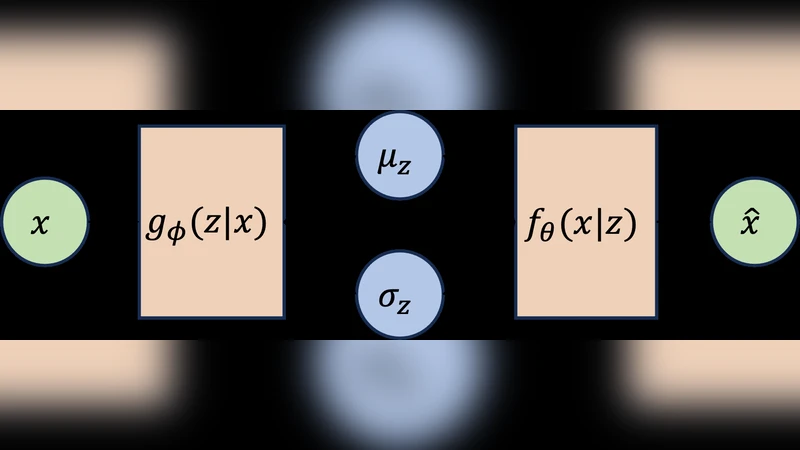

In this study, we focus on the training process and inference improvements of deep neural networks (DNNs), specifically Autoencoders (AEs) and Variational Autoencoders (VAEs), using Random Fourier Transformation (RFT). We further explore the role of RFT in model training behavior using Frequency Principle (F-Principle) analysis and show that models with RFT tend to learn low-frequency and high-frequency features at the same time, whereas conventional DNNs start from low frequencies and gradually learn (if successful) high-frequency features. We focus on reconstruction-based anomaly detection using autoencoders and variational autoencoders and investigate the role of RFT. We also introduce a trainable variant of RFT that uses the existing computation graph to train the expansion of RFT instead of leaving it random. We showcase our findings with two low-dimensional synthetic datasets for data representation, and an aviation safety dataset, called Dashlink, for high-dimensional reconstruction-based anomaly detection. The results indicate the superiority of models with Fourier transformation over their conventional counterparts, and remain inconclusive regarding the benefits of using the trainable Fourier transformation in contrast to the random variant.

💡 Research Summary

This paper investigates how Random Fourier Transformation (RFT) can be employed to improve both the training dynamics and inference performance of deep neural networks (DNNs) used for reconstruction‑based anomaly detection, focusing specifically on Autoencoders (AEs) and Variational Autoencoders (VAEs). The authors begin by revisiting the “Frequency Principle” (F‑Principle), which states that conventional DNNs tend to learn low‑frequency components of a target function first and only later acquire higher‑frequency details. While this progressive learning can be beneficial for smooth functions, it hampers the rapid detection of subtle, high‑frequency anomalies that are common in high‑dimensional safety‑critical data.
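The F‑Principle can be made concrete by comparing a model's output with its target frequency by frequency. The sketch below is only an illustration and is not taken from the paper: it assumes a 1‑D target sampled on a uniform grid, and the helper name `spectral_relative_error` and the toy "prediction" (a stand‑in for a partially trained network that has captured only the low‑frequency component) are hypothetical.

```python
import numpy as np

def spectral_relative_error(target, prediction, freq_indices):
    """Relative error between DFT coefficients at the chosen frequency indices."""
    t_hat = np.fft.rfft(target)
    p_hat = np.fft.rfft(prediction)
    return np.abs(t_hat[freq_indices] - p_hat[freq_indices]) / np.abs(t_hat[freq_indices])

# Uniform grid on [0, 1) so that integer frequencies align with DFT bins.
n = 256
t = np.arange(n) / n

# Target with one low-frequency (k=1) and one high-frequency (k=10) component.
target = np.sin(2 * np.pi * 1 * t) + np.sin(2 * np.pi * 10 * t)

# Hypothetical stand-in for an early-training model output:
# the low-frequency component is fitted, the high-frequency one is not.
prediction = np.sin(2 * np.pi * 1 * t)

errors = spectral_relative_error(target, prediction, [1, 10])
print("relative error at k=1 and k=10:", errors)
```

Tracking these per‑frequency errors over training epochs would show whether low‑frequency errors fall before high‑frequency ones (the conventional behavior) or whether both fall together.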

To address this limitation, the paper proposes to prepend a random Fourier feature mapping to the input data. The mapping is defined as φ(x)=√(2/D)·cos(ωᵀx+b), where ω is drawn from a Gaussian distribution, b from a uniform distribution, and D denotes the number of random features. This transformation approximates a shift‑invariant kernel (e.g., RBF) with an explicit D‑dimensional feature map, so that the inner product φ(x)ᵀφ(y) approximates the kernel value k(x, y). It thereby enriches the representation with a broad spectrum of frequencies before the data reaches the autoencoder. The authors explore two variants: (1) a purely random RFT where ω and b remain fixed after initialization, and (2) a trainable RFT in which ω and b are treated as learnable parameters and updated via back‑propagation. The trainable version leverages the existing computation graph, requiring only modest code changes, and in principle allows the model to adapt the frequency basis to the specific data distribution.
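The mapping above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names `make_rff` and `rff_map`, the bandwidth parameter `sigma`, and the choice of b ~ Uniform[0, 2π] (the standard choice for random Fourier features) are not specified in the summary. The sketch also checks numerically that inner products of the features approximate an RBF kernel.

```python
import numpy as np

def make_rff(input_dim, num_features, sigma=1.0, seed=0):
    """Sample fixed random frequencies and phases (assumed distributions)."""
    rng = np.random.default_rng(seed)
    # omega ~ N(0, 1/sigma^2) per entry corresponds to an RBF kernel of bandwidth sigma.
    omega = rng.normal(0.0, 1.0 / sigma, size=(input_dim, num_features))
    # b ~ Uniform[0, 2*pi]
    b = rng.uniform(0.0, 2.0 * np.pi, size=num_features)
    return omega, b

def rff_map(x, omega, b):
    """phi(x) = sqrt(2/D) * cos(omega^T x + b), applied row-wise to x."""
    num_features = omega.shape[1]
    return np.sqrt(2.0 / num_features) * np.cos(x @ omega + b)

# Toy check: phi(x)^T phi(y) should be close to exp(-||x - y||^2 / (2 sigma^2)).
sigma = 1.0
rng = np.random.default_rng(1)
x = rng.normal(size=(5, 3))

omega, b = make_rff(input_dim=3, num_features=20000, sigma=sigma)
z = rff_map(x, omega, b)

approx_kernel = z @ z.T
sq_dists = ((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=-1)
exact_kernel = np.exp(-sq_dists / (2.0 * sigma ** 2))

max_err = np.abs(approx_kernel - exact_kernel).max()
print("max kernel approximation error:", max_err)
```

In the random variant, `omega` and `b` stay fixed after sampling and `z` is fed to the autoencoder in place of `x`. The trainable variant would instead register them as parameters in a deep-learning framework so that gradients flow through the cosine.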

A series of experiments is conducted to evaluate the impact of these transformations. First, two low‑dimensional synthetic datasets (a mixture of 2‑D Gaussians and a concentric‑circle pattern) are used to visualize representation quality and learning dynamics. In both cases, models equipped with RFT achieve clearer cluster separation and lower reconstruction error than their vanilla counterparts, even when the latent dimensionality is kept identical. Moreover, the loss curves reveal that RFT‑augmented models converge faster, supporting the hypothesis that simultaneous exposure to low‑ and high‑frequency components accelerates learning.

The core of the empirical study involves the Dashlink dataset, a real‑world aviation safety log containing thousands of sensor readings, event timestamps, and annotated anomalies (e.g., sensor failures, abnormal flight trajectories). This high‑dimensional dataset poses a realistic challenge for anomaly detection systems that must operate with limited false‑positive budgets. Four model configurations are compared: (a) standard AE, (b) standard VAE, (c) AE/VAE with Random RFT, and (d) AE/VAE with Trainable RFT. Performance is measured using ROC‑AUC, PR‑AUC, reconstruction mean‑squared error, and the classic precision‑recall trade‑off for anomaly detection.
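Reconstruction‑based anomaly detection scores each sample by how poorly the model reconstructs it, and ROC‑AUC then measures how well those scores rank anomalies above normal samples. The following is a minimal sketch of that evaluation step only. The helper names and the toy arrays are hypothetical, and `x_hat` stands in for the output of a trained AE or VAE, which is not implemented here.

```python
import numpy as np

def reconstruction_scores(x, x_hat):
    """Per-sample mean-squared reconstruction error, used as the anomaly score."""
    return np.mean((x - x_hat) ** 2, axis=1)

def roc_auc(scores, labels):
    """ROC-AUC as the probability that an anomaly outscores a normal sample.

    Ties between an anomaly score and a normal score count as one half.
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    pos = scores[labels]    # scores of annotated anomalies
    neg = scores[~labels]   # scores of normal samples
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return (greater + 0.5 * ties) / (pos.size * neg.size)

# Toy data: three normal samples reconstructed well, one anomaly reconstructed badly.
x = np.array([[0.0, 0.0], [1.0, 1.0], [2.0, 2.0], [3.0, 3.0]])
x_hat = np.array([[0.1, 0.0], [1.0, 0.9], [2.0, 2.0], [1.0, 1.0]])
labels = np.array([0, 0, 0, 1])  # 1 marks an annotated anomaly

scores = reconstruction_scores(x, x_hat)
auc = roc_auc(scores, labels)
print("scores:", scores, "ROC-AUC:", auc)
```

The pairwise comparison is quadratic in the number of samples. For datasets of realistic size, a rank‑based implementation or a library routine would be the more practical choice.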

Results show that both Random and Trainable RFT consistently outperform the baseline AE/VAE across all metrics. The average gain in ROC‑AUC ranges from 3 to 5 percentage points, while PR‑AUC improvements are slightly larger for rare anomaly classes, indicating better recall of subtle outliers. Notably, the Random RFT variant already captures most of the benefit, and the Trainable RFT does not provide a statistically significant additional boost, suggesting that the random sampling of frequencies is already sufficiently expressive for this task. However, the RFT‑enhanced models incur a modest computational overhead: training time increases by roughly 30 % and memory consumption scales linearly with the number of random features D.

The paper concludes with a critical discussion of limitations and future directions. First, the increased dimensionality of the Fourier feature space may be prohibitive for real‑time deployment; integrating sparse Fourier mappings or dimensionality‑reduction techniques could mitigate this issue. Second, the instability observed in training the Trainable RFT hints at potential over‑fitting or sensitivity to hyper‑parameters, warranting more robust regularization strategies. Third, the analysis of the F‑Principle remains largely qualitative; developing quantitative metrics to track frequency content during training would strengthen the theoretical claims. Finally, extending the approach to other safety‑critical domains such as medical imaging or cyber‑security, and exploring transfer learning scenarios where a pre‑trained Fourier‑augmented encoder is fine‑tuned on new anomaly detection tasks, are promising avenues.

In summary, the study demonstrates that embedding a Random Fourier Transformation before an autoencoder or variational autoencoder can fundamentally alter the learning dynamics—allowing simultaneous acquisition of low‑ and high‑frequency features—and leads to measurable improvements in reconstruction‑based anomaly detection on a high‑stakes aviation safety dataset. While the trainable variant does not yet show clear superiority, the overall findings open a new line of research on frequency‑aware neural architectures for reliable, high‑dimensional anomaly detection.