An Explainable Agentic AI Framework for Uncertainty-Aware and Abstention-Enabled Acute Ischemic Stroke Imaging Decisions

Artificial intelligence (AI) models have shown considerable potential in acute ischemic stroke (AIS) imaging, especially for lesion detection and segmentation on computed tomography (CT) and magnetic resonance imaging (MRI). Nevertheless, most existing approaches operate as black-box predictors. They produce deterministic outputs without exposing predictive uncertainty and without explicit protocols for rejecting a decision when a prediction is ambiguous. This deficiency raises considerable safety and trust concerns in high-stakes emergency radiology, where errors in automated decision-making can lead to adverse clinical consequences [1], [2]. In this paper, we introduce an explainable agentic AI framework for uncertainty-aware, abstention-based decision-making in AIS imaging, built on a multistage agentic pipeline. In this framework, a perception agent performs lesion-aware image analysis, an uncertainty estimation agent estimates predictive confidence at the slice level, and a decision agent dynamically decides whether to issue or withhold a prediction based on prescribed uncertainty thresholds. Previous stroke imaging frameworks have primarily aimed to improve segmentation or classification accuracy [3], [4]. Our framework instead explicitly emphasizes clinical safety, transparency, and decision-making aligned with human values. We assess the practicality and interpretability of the framework through qualitative, case-based examinations of typical stroke imaging scenarios. These examinations show that uncertainty-driven abstention naturally correlates with the presence of lesions, variations in image quality, and the specific anatomical definition being analyzed.
Furthermore, the system integrates an explanation mode that offers visual and structural justifications to support decision-making. This addresses a crucial limitation of existing uncertainty-aware medical imaging systems: the absence of actionable interpretability [5], [6]. This research does not claim to establish a high-performance benchmark. Instead, it presents agentic control, uncertainty awareness, and selective abstention as essential design principles for building safe and reliable medical imaging AI (MI-AI). Our results suggest that incorporating explicit stalling (abstention) behavior into agentic architectures could accelerate the development of clinically deployable AI systems for acute stroke intervention.

💡 Research Summary

The paper addresses a critical gap in acute ischemic stroke (AIS) imaging AI: the lack of uncertainty quantification and the inability to refuse a decision when confidence is low. While many recent works have pushed segmentation accuracy on CT and MRI, they remain black‑box predictors that output a deterministic mask or classification without indicating how reliable that output is. In an emergency radiology setting, such opacity can jeopardize patient safety and erode clinician trust. To remedy this, the authors propose an “explainable agentic AI framework” that explicitly incorporates uncertainty awareness and abstention capability into the decision pipeline.

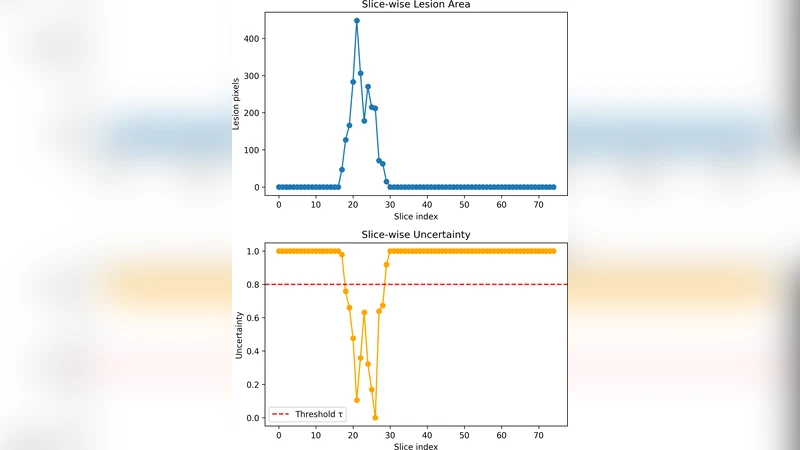

The framework is organized as a multistage pipeline of three autonomous agents:

1) Perception Agent – performs lesion‑aware image analysis using state‑of‑the‑art deep segmentation models (e.g., 3D U‑Net, Transformer‑Hybrid). It outputs slice‑wise probability maps rather than binary masks, preserving the full predictive distribution.

2) Uncertainty Estimation Agent – takes the probability maps and applies probabilistic inference techniques such as Bayesian neural networks, Monte‑Carlo Dropout, or deep ensembles. It computes a quantitative uncertainty score for each slice, typically expressed as predictive variance, entropy, or confidence interval width.

3) Decision Agent – compares the slice‑level uncertainty scores against pre‑defined safety thresholds. If the score is below the threshold, the system proceeds to deliver the segmentation and a concise report. If the score exceeds the threshold, the system automatically abstains, flags the case for human review, and activates an explanation mode.
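The three-agent flow can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation. The perception agent is stubbed out: each slice is assumed to arrive as T stochastic probability maps, for example from Monte-Carlo Dropout passes or ensemble members. The uncertainty score is the mean per-pixel binary entropy of the averaged map, which is one of the score types listed above. All function names, the 0.5 binarization cutoff, and the 0.3 threshold are hypothetical.

```python
import math
from dataclasses import dataclass
from statistics import fmean
from typing import List, Optional, Tuple

ProbMap = List[float]  # one slice, flattened per-pixel lesion probabilities in [0, 1]

def binary_entropy(p: float) -> float:
    """Entropy (nats) of a Bernoulli(p) pixel prediction; 0 at p = 0 or 1."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log(p) + (1.0 - p) * math.log(1.0 - p))

def estimate_uncertainty(samples: List[ProbMap]) -> Tuple[ProbMap, float]:
    """Uncertainty agent (sketch): average T stochastic maps of one slice and
    score the slice by the mean per-pixel entropy of the averaged map."""
    mean_map = [fmean(pixel) for pixel in zip(*samples)]
    score = fmean(binary_entropy(p) for p in mean_map)
    return mean_map, score

@dataclass
class SliceDecision:
    index: int
    score: float
    abstain: bool
    mask: Optional[List[int]]  # binary lesion mask, or None when abstaining

def decide(index: int, mean_map: ProbMap, score: float,
           threshold: float, cutoff: float = 0.5) -> SliceDecision:
    """Decision agent (sketch): withhold the slice above the threshold, else binarize it."""
    if score > threshold:
        return SliceDecision(index, score, True, None)
    return SliceDecision(index, score, False, [int(p >= cutoff) for p in mean_map])

def run_pipeline(study: List[List[ProbMap]], threshold: float) -> List[SliceDecision]:
    """study[i] holds the T stochastic maps the (stubbed) perception agent produced for slice i."""
    decisions = []
    for i, samples in enumerate(study):
        mean_map, score = estimate_uncertainty(samples)
        decisions.append(decide(i, mean_map, score, threshold))
    return decisions

if __name__ == "__main__":
    study = [
        [[0.9, 0.1], [1.0, 0.0]],  # slice 0: the two passes roughly agree
        [[0.4, 0.6], [0.6, 0.4]],  # slice 1: the two passes disagree
    ]
    for d in run_pipeline(study, threshold=0.3):
        print(d.index, round(d.score, 3), "ABSTAIN" if d.abstain else d.mask)
    # 0 0.199 [1, 0]
    # 1 0.693 ABSTAIN
```

Scoring the averaged map captures disagreement between stochastic passes. Two confident but contradictory passes average to 0.5, which is the maximum-entropy value. Predictive variance across the samples would be an equally valid drop-in score.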

The explanation mode is a key novelty. When abstention occurs, the framework generates visual heat‑maps that highlight image regions contributing most to the high uncertainty (e.g., motion artifacts, low contrast, ambiguous lesion borders). It also produces a textual summary that contextualizes the uncertainty in clinical terms, such as “low signal‑to‑noise ratio in the basal ganglia region may impair lesion delineation.” This dual visual‑textual justification enables radiologists to quickly understand why the AI withheld a decision and to make an informed manual assessment.
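A simplified version of the explanation mode can be sketched as follows. The heat map here is assumed to be the per-pixel predictive entropy normalized by its maximum value (ln 2). That is a plain uncertainty map, not a learned attribution method. The textual summary comes from a fixed template applied to a hypothetical region-label map, such as one produced by atlas registration. The function names, region labels, and wording are illustrative and do not come from the paper.

```python
import math
from collections import defaultdict
from typing import Dict, List

Grid = List[List[float]]

def pixel_entropy(p: float) -> float:
    """Binary entropy (nats) of one pixel's lesion probability."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log(p) + (1.0 - p) * math.log(1.0 - p))

def uncertainty_heatmap(prob_map: Grid) -> Grid:
    """Per-pixel entropy scaled to [0, 1] by the maximum binary entropy, ln 2."""
    return [[pixel_entropy(p) / math.log(2) for p in row] for row in prob_map]

def region_uncertainty(heat: Grid, labels: List[List[str]]) -> Dict[str, float]:
    """Mean heat-map value per (hypothetical) anatomical region label."""
    totals: Dict[str, float] = defaultdict(float)
    counts: Dict[str, int] = defaultdict(int)
    for heat_row, label_row in zip(heat, labels):
        for h, label in zip(heat_row, label_row):
            totals[label] += h
            counts[label] += 1
    return {label: totals[label] / counts[label] for label in totals}

def explain(prob_map: Grid, labels: List[List[str]]) -> str:
    """Template-based textual justification naming the most uncertain region."""
    scores = region_uncertainty(uncertainty_heatmap(prob_map), labels)
    worst = max(scores, key=scores.get)
    return (f"Abstained: highest mean uncertainty ({scores[worst]:.2f}) in the "
            f"{worst} region; lesion delineation there may be unreliable.")

if __name__ == "__main__":
    probs = [[0.50, 0.50], [0.99, 0.02]]
    labels = [["basal ganglia", "basal ganglia"], ["cortex", "cortex"]]
    print(explain(probs, labels))
    # Abstained: highest mean uncertainty (1.00) in the basal ganglia region; lesion delineation there may be unreliable.
```

In practice the normalized grid returned by `uncertainty_heatmap` would be overlaid on the image. Image-derived cues such as signal-to-noise ratio or motion estimates would need to be computed separately before the summary could mention them.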

The authors do not claim state‑of‑the‑art segmentation performance; instead, they focus on safety‑oriented evaluation. Through qualitative case studies, they show that uncertainty‑driven abstention correlates with clinically relevant factors: small or faint lesions, degraded image quality, and variations in anatomical definitions (e.g., core versus penumbra). In the cases examined, the system withholds a decision under these conditions, thereby preventing potentially harmful automated outputs.

Technical insights include:

- Modular Agentic Architecture – each agent can be upgraded independently (e.g., swapping a newer transformer‑based segmenter without redesigning the uncertainty module). This promotes maintainability and future scalability.

- Slice‑Level Granularity – uncertainty is computed per axial slice, allowing fine‑grained control. A single low‑confidence slice can trigger abstention for the entire study, mirroring clinical practice where a dubious slice prompts a full review.

- Threshold Calibration – the paper discusses how safety thresholds can be set empirically based on retrospective data, balancing false‑positive abstentions against missed detections.

- Human‑Centric Design – by providing actionable explanations, the framework addresses a known limitation of many uncertainty‑aware systems that merely output a confidence score without guidance on how to act on it.
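The slice-level abstention rule and the empirical threshold calibration described above can be combined in a short sketch. It assumes a hypothetical retrospective set in which each study carries per-slice uncertainty scores. Each study also carries a flag recording whether the model's automated output agreed with the reference read. The cost weights are illustrative placeholders, not values from the paper: an unnecessary abstention costs 1 and an accepted error costs 5. Exhaustive search over the observed maximum scores is only one simple calibration strategy.

```python
from dataclasses import dataclass
from typing import List, Sequence

@dataclass
class RetrospectiveStudy:
    slice_scores: List[float]  # per-slice uncertainty scores (assumed nonnegative)
    model_correct: bool        # did the automated output agree with the reference read?

def study_abstains(slice_scores: Sequence[float], threshold: float) -> bool:
    """Slice-level rule: a single slice above the threshold defers the whole study."""
    return max(slice_scores) > threshold

def calibration_cost(studies: Sequence[RetrospectiveStudy], threshold: float,
                     cost_abstain: float = 1.0, cost_miss: float = 5.0) -> float:
    """Illustrative cost: unnecessary abstentions vs. errors accepted without review."""
    cost = 0.0
    for s in studies:
        abstain = study_abstains(s.slice_scores, threshold)
        if abstain and s.model_correct:
            cost += cost_abstain  # deferred a case the model got right
        elif not abstain and not s.model_correct:
            cost += cost_miss     # let an incorrect output through
    return cost

def calibrate_threshold(studies: Sequence[RetrospectiveStudy],
                        cost_abstain: float = 1.0, cost_miss: float = 5.0) -> float:
    """Search the thresholds at which study decisions can change (0 and each
    study's maximum slice score) and return the lowest-cost one, preferring
    the lower, more conservative threshold on ties."""
    candidates = {0.0} | {max(s.slice_scores) for s in studies}
    return min(candidates,
               key=lambda t: (calibration_cost(studies, t, cost_abstain, cost_miss), t))

if __name__ == "__main__":
    retrospective = [
        RetrospectiveStudy([0.10, 0.20], True),
        RetrospectiveStudy([0.15, 0.60], False),
        RetrospectiveStudy([0.30, 0.25], True),
        RetrospectiveStudy([0.70], True),
    ]
    print(calibrate_threshold(retrospective))  # 0.3
```

Weighting accepted errors more heavily than unnecessary abstentions pushes the chosen threshold down toward more deferral, consistent with the safety-first emphasis. Multi-center calibration would repeat this search on pooled or per-site data.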

The broader contribution is a shift from “accuracy‑first” to “safety‑first” design principles for medical imaging AI. By embedding explicit stalling behavior (abstention) and transparent reasoning, the framework aligns AI actions with human values and regulatory expectations for high‑risk domains. The authors argue that such principles are essential for moving AI from research prototypes to clinically deployable tools in acute stroke intervention.

Future work suggested includes systematic threshold optimization across multi‑center datasets, quantitative validation of the explanation quality (e.g., clinician satisfaction scores), and integration into real‑time emergency department workflows to assess impact on treatment timelines and patient outcomes.
