📝 Original Info

- Title: FedHypeVAE: Federated Learning with Hypernetwork Generated Conditional VAEs for Differentially Private Embedding Sharing

- ArXiv ID: 2601.00785

- Date: 2026-01-02

- Authors: Sunny Gupta, Amit Sethi (Indian Institute of Technology Bombay, Mumbai, India)

📝 Abstract

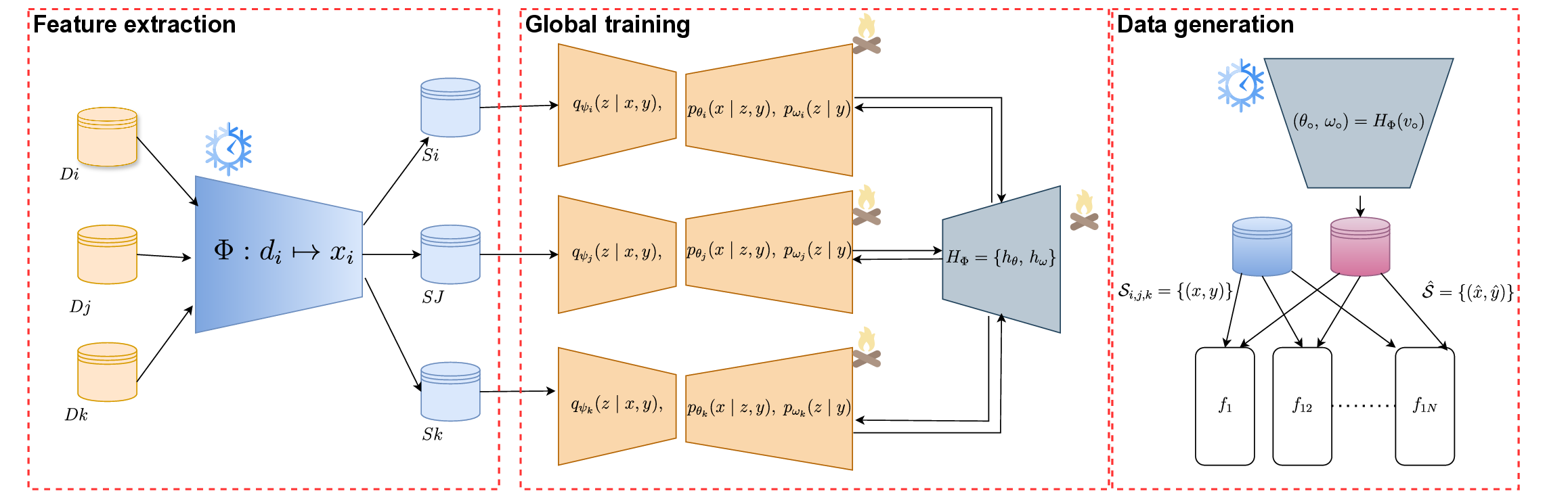

Federated data sharing promises utility without centralizing raw data, yet existing embedding-level generators struggle under non-IID client heterogeneity and provide limited formal protection against gradient leakage. We propose FedHypeVAE, a differentially private, hypernetwork-driven framework for synthesizing embedding-level data across decentralized clients. Building on a conditional VAE backbone, we replace the single global decoder and fixed latent prior with client-aware decoders and class-conditional priors generated by a shared hypernetwork from private, trainable client codes. This bi-level design personalizes the generative layer, rather than the downstream model, while decoupling local data from communicated parameters. The shared hypernetwork is optimized under differential privacy, ensuring that only noise-perturbed, clipped gradients are aggregated across clients. A local MMD alignment between real and synthetic embeddings and a Lipschitz regularizer on hypernetwork outputs further enhance stability and distributional coherence under non-IID conditions. After training, a neutral meta-code enables domain-agnostic synthesis, while mixtures of meta-codes provide controllable multi-domain coverage. FedHypeVAE unifies personalization, privacy, and distribution alignment at the generator level, establishing a principled foundation for privacy-preserving data synthesis in federated settings. Code: github.com/sunnyinAI/FedHypeVAE
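The abstract mentions a local MMD alignment between real and synthetic embeddings. As a rough illustration of what such a term measures, the sketch below computes a biased squared-MMD estimate with a Gaussian (RBF) kernel in NumPy. The kernel choice, the `bandwidth` value, the function names, and the toy data are all assumptions made for illustration; the paper's actual alignment loss is not specified in this excerpt and may differ.

```python
import numpy as np

def rbf_kernel(a, b, bandwidth):
    """Gaussian kernel matrix between the rows of a and the rows of b."""
    # Pairwise squared Euclidean distances, clamped at 0 against round-off.
    sq = (a ** 2).sum(axis=1)[:, None] + (b ** 2).sum(axis=1)[None, :] - 2.0 * a @ b.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * bandwidth ** 2))

def mmd2_biased(real, synth, bandwidth=1.0):
    """Biased (V-statistic) estimate of squared MMD between two samples."""
    k_rr = rbf_kernel(real, real, bandwidth).mean()
    k_ss = rbf_kernel(synth, synth, bandwidth).mean()
    k_rs = rbf_kernel(real, synth, bandwidth).mean()
    return k_rr + k_ss - 2.0 * k_rs

# Toy stand-ins for embeddings (hypothetical data, not from the paper).
rng = np.random.default_rng(0)
real = rng.normal(0.0, 1.0, size=(200, 16))
close = rng.normal(0.0, 1.0, size=(200, 16))  # same distribution as `real`
far = rng.normal(1.5, 1.0, size=(200, 16))    # mean-shifted distribution

mmd_close = mmd2_biased(real, close, bandwidth=4.0)
mmd_far = mmd2_biased(real, far, bandwidth=4.0)
```

In this toy setup `mmd_far` comes out larger than `mmd_close`, which is the behavior an alignment penalty relies on: synthetic embeddings whose distribution drifts from the real ones incur a larger loss.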


📄 Full Content

FEDHYPEVAE: FEDERATED LEARNING WITH HYPERNETWORK-GENERATED CONDITIONAL VAES FOR DIFFERENTIALLY-PRIVATE EMBEDDING SHARING

Sunny Gupta, Amit Sethi

Indian Institute of Technology Bombay

Mumbai, India

{sunnygupta, asethi}@iitb.ac.in

ABSTRACT

Federated data sharing promises utility without centralizing raw data, yet existing embedding-level generators struggle under non-IID client heterogeneity and provide limited formal protection against gradient leakage. We propose FedHypeVAE, a differentially private, hypernetwork-driven framework for synthesizing embedding-level data across decentralized clients. Building on a conditional VAE backbone, we replace the single global decoder and fixed latent prior with client-aware decoders and class-conditional priors generated by a shared hypernetwork from private, trainable client codes. This bi-level design personalizes the generative layer, rather than the downstream model, while decoupling local data from communicated parameters.

The shared hypernetwork is optimized under differential privacy, ensuring that only noise-perturbed, clipped gradients are aggregated across clients. A local MMD alignment between real and synthetic embeddings and a Lipschitz regularizer on hypernetwork outputs further enhance stability and distributional coherence under non-IID conditions. After training, a neutral meta-code enables domain-agnostic synthesis, while mixtures of meta-codes provide controllable multi-domain coverage. FedHypeVAE unifies personalization, privacy, and distribution alignment at the generator level, establishing a principled foundation for privacy-preserving data synthesis in federated settings. Code: github.com/sunnyinAI/FedHypeVAE

Keywords: Federated Learning · Privacy · Gradient Inversion
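The abstract states that only noise-perturbed, clipped gradients are aggregated across clients. A minimal sketch of that general pattern (L2 clipping followed by Gaussian noise, then server-side averaging) is given below. It assumes noise is added on the client before transmission and uses hypothetical names (`clip_norm`, `noise_multiplier`, `privatize`, `aggregate`); the paper's exact mechanism, noise placement, and privacy accounting are not given in this excerpt, and the sketch performs no accounting of the resulting privacy budget.

```python
import numpy as np

def clip_by_l2_norm(grad, clip_norm):
    """Scale grad so that its L2 norm is at most clip_norm."""
    norm = np.linalg.norm(grad)
    scale = min(1.0, clip_norm / (norm + 1e-12))
    return grad * scale

def privatize(grad, clip_norm, noise_multiplier, rng):
    """Client side (assumed): clip, then add Gaussian noise scaled to clip_norm."""
    clipped = clip_by_l2_norm(grad, clip_norm)
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=grad.shape)
    return clipped + noise

def aggregate(private_grads):
    """Server side: average the already-privatized client gradients."""
    return np.mean(np.stack(private_grads), axis=0)

# Hypothetical example with three clients and a 4-dimensional gradient.
rng = np.random.default_rng(0)
client_grads = [rng.normal(size=4) * s for s in (0.5, 5.0, 50.0)]
private = [privatize(g, clip_norm=1.0, noise_multiplier=1.0, rng=rng)
           for g in client_grads]
update = aggregate(private)
```

Clipping bounds each client's contribution, so the noise scale can be tied to `clip_norm` regardless of how large the raw gradient was.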

Introduction

Deep Neural Networks (DNNs) have driven remarkable progress in medical imaging, yet their widespread clinical deployment remains constrained by limited data availability and stringent privacy requirements [1, 2]. Medical datasets are often siloed across institutions, while the low prevalence of certain diseases further restricts access to diverse, high-quality training data [3]. Although collaborative data sharing could mitigate these challenges, strict regulatory frameworks such as HIPAA and GDPR render centralized dataset aggregation infeasible.

To address these limitations, Federated Learning (FL) [4] has emerged as a distributed paradigm that enables multiple institutions to collaboratively train models without exposing raw data. The classical FedAvg algorithm [4] aggregates model updates from clients to construct a global model, ensuring that sensitive data remain within institutional boundaries. However, FL faces several persistent challenges. Communication overhead is substantial, especially with high-capacity architectures such as Vision Transformers (ViTs) [5], and performance often degrades under non-IID client distributions. Recent efforts to improve efficiency through lightweight architectures [6, 7] have reduced transmission cost but at the expense of robustness and diagnostic fidelity.
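For readers unfamiliar with FedAvg, the aggregation step can be sketched as a sample-size-weighted average of client parameters. The dictionary layout, the function name `fedavg`, and the toy values below are illustrative assumptions, not code from the paper.

```python
import numpy as np

def fedavg(client_params, client_sizes):
    """Average client parameter dicts, weighted by local dataset size."""
    sizes = np.asarray(client_sizes, dtype=float)
    weights = sizes / sizes.sum()
    keys = client_params[0].keys()
    return {
        k: sum(w * p[k] for w, p in zip(weights, client_params))
        for k in keys
    }

# Two hypothetical clients holding 100 and 300 samples respectively.
client_params = [
    {"w": np.array([1.0, 1.0]), "b": np.array([0.0])},
    {"w": np.array([4.0, 4.0]), "b": np.array([2.0])},
]
global_params = fedavg(client_params, client_sizes=[100, 300])
```

Because every round ships a full set of parameters per client, the communication cost grows with model size, which is the overhead the paragraph above refers to.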

An emerging alternative is synthetic data sharing, where generative models produce privacy-preserving surrogate datasets instead of transmitting model updates [8, 9]. Such methods reduce communication burden and improve cross-domain applicability. While Generative Adversarial Networks (GANs) [10] and diffusion models [11] achieve high-fidelity synthesis, they remain unstable or computationally expensive for federated environments. In contrast, Variational Autoencoders (VAEs) and their conditional extensions (CVAEs) offer stable, likelihood-based training and computational efficiency, albeit at the cost of reduced perceptual sharpness. Recent work [12] demonstrated that generating data in embedding space rather than image space can preserve task-relevant information while mitigating privacy leakage.

arXiv:2601.00785v1 [cs.LG] 2 Jan 2026

This embedding-level paradigm is strengthened by the advent of foundation encoders such as DINOv2 [13], which provide compact, semantically rich representations that generalize across imaging domains [14]. Training CVAEs on such embeddings enables the generative model to capture diagnostic features efficiently while reducing redundancy and risk of reconstruction-based attacks.
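As background, the likelihood-based objective usually associated with a CVAE is the conditional evidence lower bound shown below. The notation is an assumption for illustration: $x$ is an embedding, $y$ its class label, $z$ the latent variable, $q_\phi$ the encoder, $p_\theta$ the decoder, and $p_\psi(z \mid y)$ a class-conditional prior. The exact loss used by FedHypeVAE is not given in this excerpt and may include further terms.

```latex
\log p_\theta(x \mid y) \;\ge\;
\mathbb{E}_{q_\phi(z \mid x, y)}\big[\log p_\theta(x \mid z, y)\big]
\;-\; \mathrm{KL}\big(q_\phi(z \mid x, y) \,\|\, p_\psi(z \mid y)\big)
```

The first term rewards accurate reconstruction of the embedding, and the KL term keeps the approximate posterior close to the prior so that sampling $z$ from the prior yields plausible embeddings.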

Despite these advances, two fundamental challenges persist. First, existing federated generative frameworks lack the ability to adapt to client-specific heterogeneity, leading to degraded performance under non-IID distributions. Second, formal privacy guarantees are rarely incorporated, with most prior methods relying on heuristic noise injection rather than certified Differential Privacy (DP). Addressing these limitations requires a framework capable of personalized, differentially-private generative modeling that remains consistent and generalizable across diverse clinical domains.

To this end, we propose FedHypeVAE—a Federated Hypernetwork-Generated Conditional

…(Full text truncated)…
