📝 Original Info

- Title: Engineering Attack Vectors and Detecting Anomalies in Additive Manufacturing

- ArXiv ID: 2601.00384

- Date: 2026-01-01

- Authors: Md Mahbub Hasan, Marcus Sternhagen, Krishna Chandra Roy

📝 Abstract

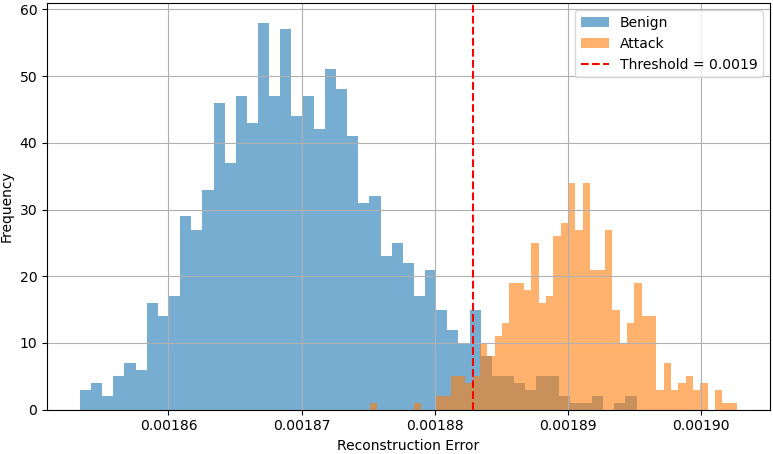

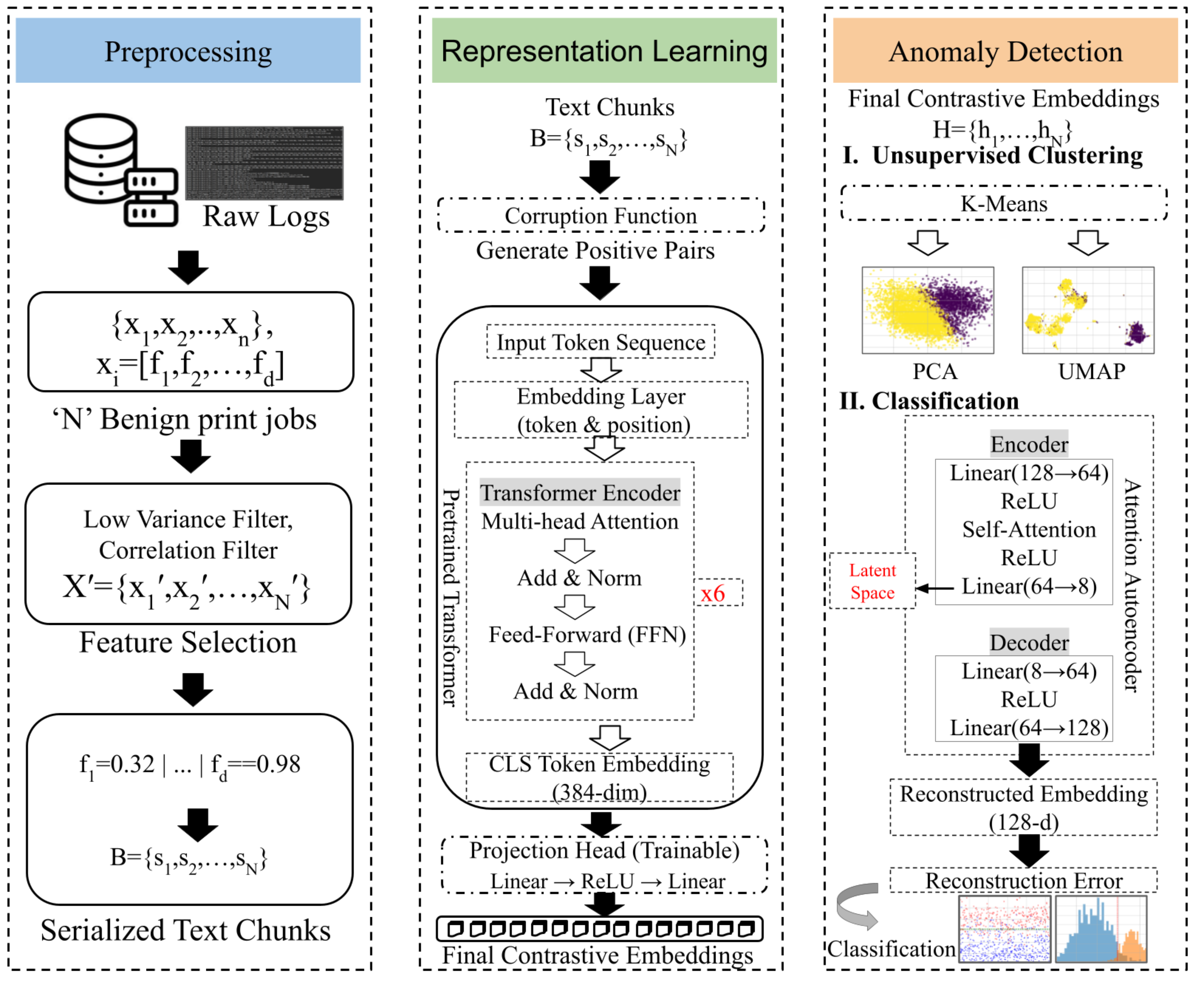

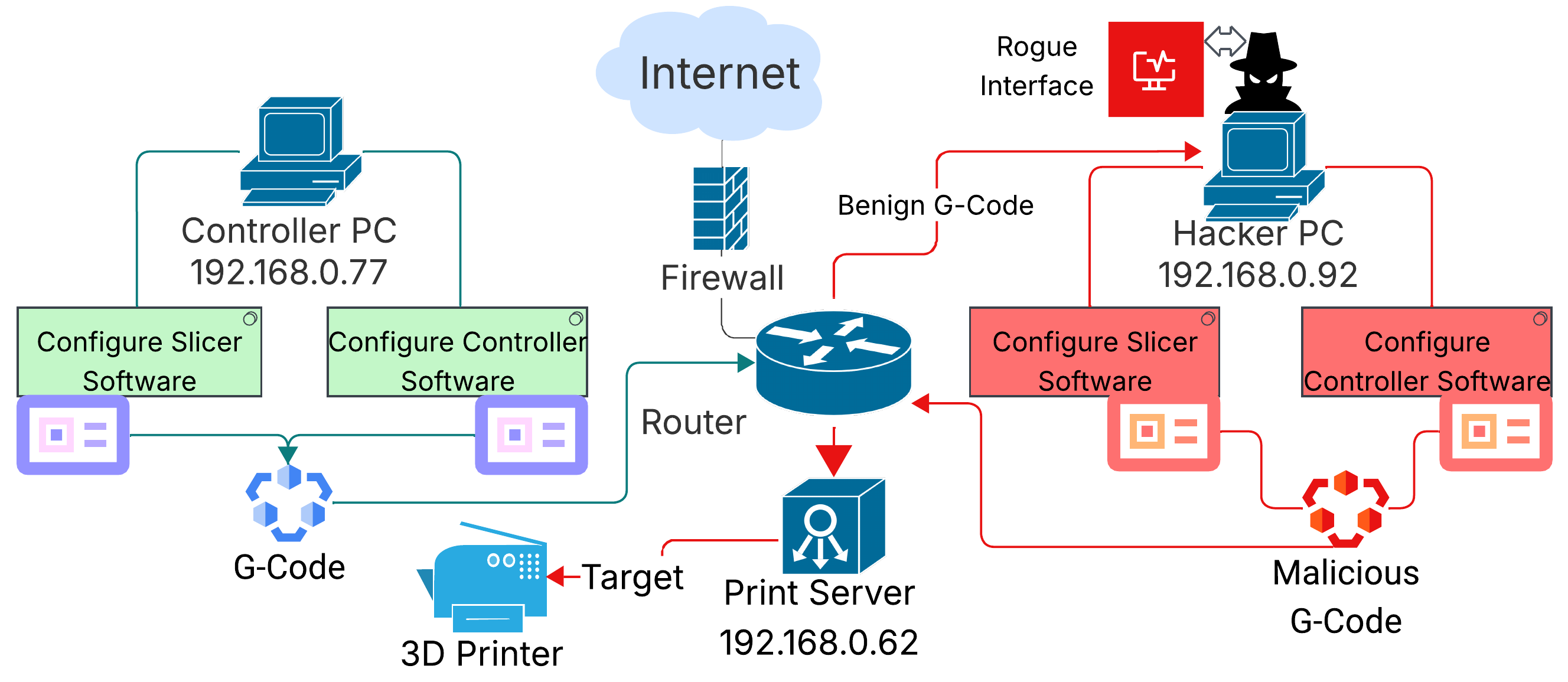

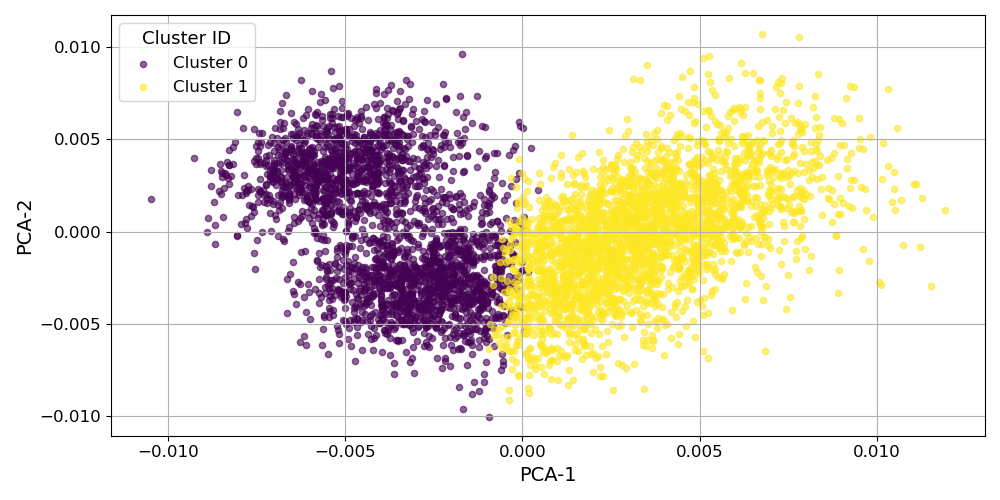

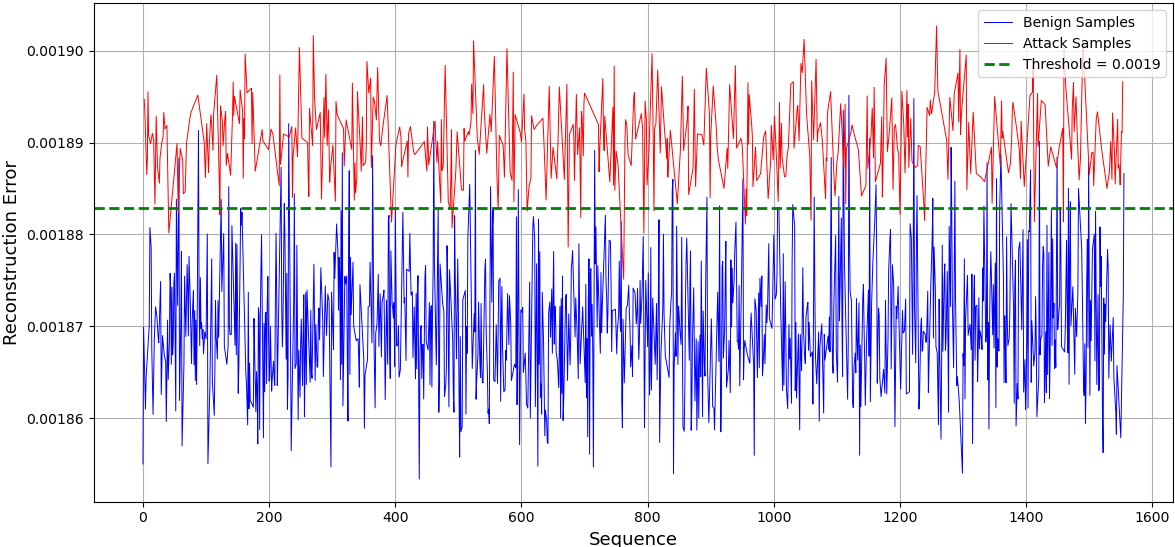

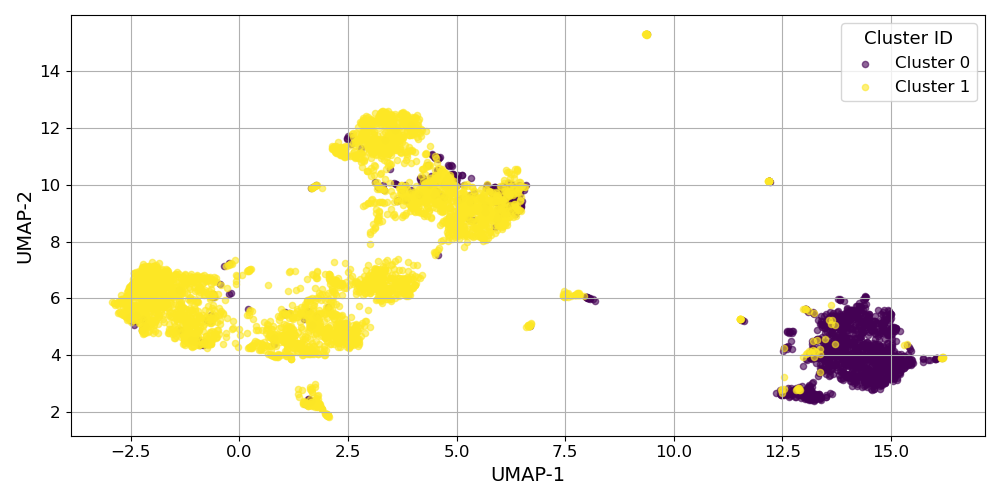

Additive manufacturing (AM) is rapidly integrating into critical sectors such as aerospace, automotive, and healthcare. However, this cyber-physical convergence introduces new attack surfaces, especially at the interface between computer-aided design (CAD) and machine execution layers. In this work, we investigate targeted cyberattacks on two widely used fused deposition modeling (FDM) systems: Creality's flagship K1 Max and the Creality Ender 3. Our threat model is a multi-layered Man-in-the-Middle (MitM) intrusion in which the adversary intercepts and manipulates G-code files as they are uploaded from the user interface to the printer firmware. This intrusion chain enables several stealthy sabotage scenarios that conventional slicer software and runtime interfaces fail to detect, yielding structurally defective yet externally plausible printed parts. To counter these threats, we propose an unsupervised Intrusion Detection System (IDS) that analyzes structured machine logs generated during live printing. Our defense uses a frozen Transformer-based encoder (a BERT variant) to extract semantic representations of system behavior, followed by a contrastively trained projection head that learns anomaly-sensitive embeddings. A clustering-based approach and a self-attention autoencoder are then used for classification. Experimental results demonstrate that our approach effectively distinguishes between benign and compromised executions.
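The clustering-based classification stage can be illustrated with a small sketch. The paper does not specify the clustering algorithm or decision rule here, so everything below is an assumption: k-means fitted on benign-only embeddings, an anomaly score equal to the distance to the nearest benign centroid, and a threshold of 1.1 times the largest benign training score. The 2-D vectors are toy stand-ins for projection-head outputs, the function names are hypothetical, and the self-attention autoencoder stage is not shown.

```python
import math
import random

Vector = list[float]


def dist(a: Vector, b: Vector) -> float:
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def kmeans(points: list[Vector], k: int, iters: int = 50, seed: int = 0) -> list[Vector]:
    """Plain Lloyd's k-means over benign embeddings; returns k centroids."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        clusters: list[list[Vector]] = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist(p, centroids[i]))
            clusters[nearest].append(p)
        for i, members in enumerate(clusters):
            if members:  # an empty cluster keeps its previous centroid
                centroids[i] = [sum(col) / len(members) for col in zip(*members)]
    return centroids


def anomaly_score(p: Vector, centroids: list[Vector]) -> float:
    """Distance from an embedding to its nearest benign centroid."""
    return min(dist(p, c) for c in centroids)


def fit_detector(benign: list[Vector], k: int = 2, margin: float = 1.1) -> tuple[list[Vector], float]:
    """Cluster benign embeddings and derive a distance threshold.

    Assumed rule: threshold = margin x the largest benign training score.
    """
    centroids = kmeans(benign, k)
    threshold = margin * max(anomaly_score(p, centroids) for p in benign)
    return centroids, threshold


def is_anomalous(p: Vector, centroids: list[Vector], threshold: float) -> bool:
    """Flag an execution whose embedding lies beyond the benign threshold."""
    return anomaly_score(p, centroids) > threshold


# Toy 2-D vectors standing in for projection-head outputs of benign print logs.
benign = [[0.0, 0.0], [0.2, 0.1], [0.1, -0.1], [5.0, 5.0], [5.1, 4.9], [4.9, 5.2]]
centroids, threshold = fit_detector(benign, k=2)
print(is_anomalous([0.1, 0.1], centroids, threshold))    # False: near a benign cluster
print(is_anomalous([10.0, -10.0], centroids, threshold))  # True: far from every cluster
```

Fitting only on benign runs keeps the detector unsupervised, consistent with the setting described in the abstract; the margin trades false alarms against missed detections and would need tuning on real logs.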


📄 Full Content

Engineering Attack Vectors and Detecting Anomalies in Additive Manufacturing⋆

Md Mahbub Hasan¹ [0009-0002-2226-6494], Marcus Sternhagen¹ [0009-0001-5605-6631], and Krishna Chandra Roy¹ [0000-0001-9388-8042]

New Mexico Institute of Mining and Technology

{mdmahbub.hasan,marcus.sternhagen}@student.nmt.edu, krishna.roy@nmt.edu


Keywords: Cyber-Physical Systems · Intrusion Detection System (IDS) · 3D Printer Attacks · Additive Manufacturing · Anomaly Detection.

1 Introduction

Additive Manufacturing (AM), commonly known as 3D printing, has revolutionized modern manufacturing by enabling the rapid prototyping and production of complex components with minimal material waste [1, 2]. Its integration into safety-critical domains such as aerospace, healthcare, automotive, and defense has made the underlying infrastructure of AM systems a prime target for cyber-physical threats [3, 4]. With the proliferation of network-connected 3D printers [5], adversaries can exploit attack vectors anywhere along the digital thread, from CAD design and STL/G-code translation through network communication to firmware execution, potentially sabotaging the mechanical integrity, dimensional accuracy, or intellectual property (IP) [6] of printed parts [7, 8].

Recent studies have demonstrated the feasibility and consequences of attacks at various stages of the AM pipeline. These include manipulation of CAD/STL files [9], malicious firmware modifications [10, 11], G-code-level sabotage [12], and side-channel IP leakage through power, acoustic, and magnetic emissions [6, 8, 13, 14]. Meanwhile, attack detection frameworks have been proposed using process monitoring [15, 16], statistical modeling [7], and analog emission analysis [4]. Despite these efforts, most prior approaches [17] rely on predefined attacker models or static analysis, or require access to a golden reference model (STL). Hence, a significant gap persists in detecting real-time, stealthy attacks that operate directly at the G-code level. G-code is the machine-readable instruction set that slicer software generates from a CAD model and that the printer executes during fabrication. Once a G-code file is uploaded, the pipeline implicitly trusts it, and this assumption of trust creates a critical vulnerability. Unlike STL manipulations, G-code-level attacks can subtly alter toolpaths, extrusion volumes, or print timing without triggering visual inspection or violating basic geometry constraints.

⋆ Supported by NSF (National Science Foundation).

arXiv:2601.00384v1 [cs.CR] 1 Jan 2026
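The point that a G-code edit can change extrusion without touching geometry can be checked with a few lines of parsing. The sketch below assumes relative extrusion (M83) and uses a hand-written toy program rather than real slicer output; it compares the XY toolpath and the total extruded filament of an original file and a tampered copy whose E values were lowered. The helper names are illustrative and not taken from the paper.

```python
import re

MOVE = re.compile(r"^G[01]\b")               # linear moves: G0 (travel) / G1 (print)
AXIS = re.compile(r"([XYZE])(-?\d*\.?\d+)")  # axis letter followed by a number


def parse_moves(gcode: str) -> list[dict[str, float]]:
    """Return the axis words of every G0/G1 move, with ';' comments stripped."""
    moves = []
    for raw in gcode.splitlines():
        line = raw.split(";", 1)[0].strip().upper()
        if MOVE.match(line):
            moves.append({axis: float(v) for axis, v in AXIS.findall(line)})
    return moves


def xy_path(gcode: str) -> list[tuple]:
    """Sequence of XY targets: what a geometry-only check would compare."""
    return [(m.get("X"), m.get("Y")) for m in parse_moves(gcode)]


def total_extrusion(gcode: str) -> float:
    """Total filament fed, assuming relative extrusion (M83): each E is an increment."""
    return sum(m.get("E", 0.0) for m in parse_moves(gcode))


original = """M83 ; relative extrusion
G1 X0 Y0 F1800
G1 X20 Y0 E0.80
G1 X20 Y20 E0.80
G1 X0 Y20 E0.80"""
# Hypothetical tampered copy: identical toolpath, 70% of the filament.
tampered = original.replace("E0.80", "E0.56")

print(xy_path(original) == xy_path(tampered))  # True
print(round(total_extrusion(original), 2), round(total_extrusion(tampered), 2))  # 2.4 1.68
```

A check that compares only geometry would accept the tampered file, even though it deposits 30% less material.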

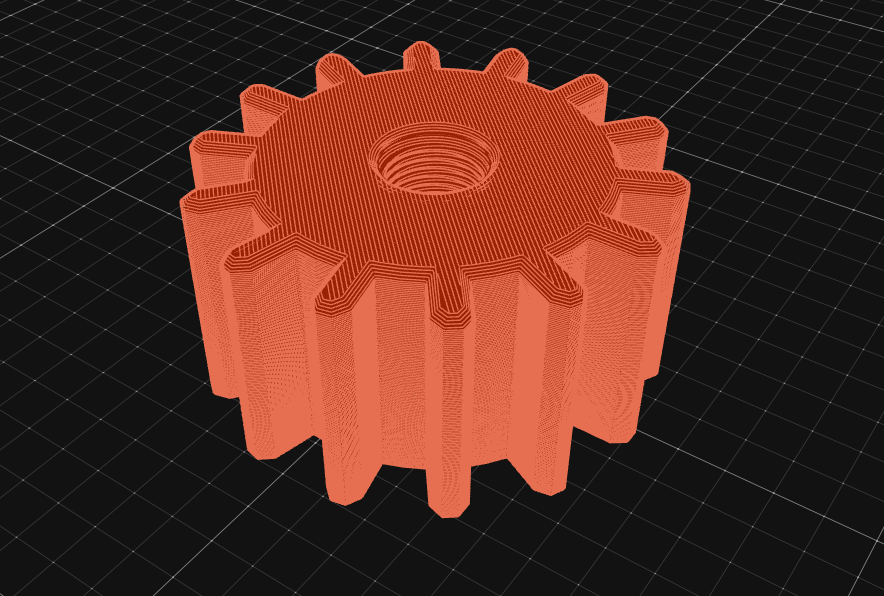

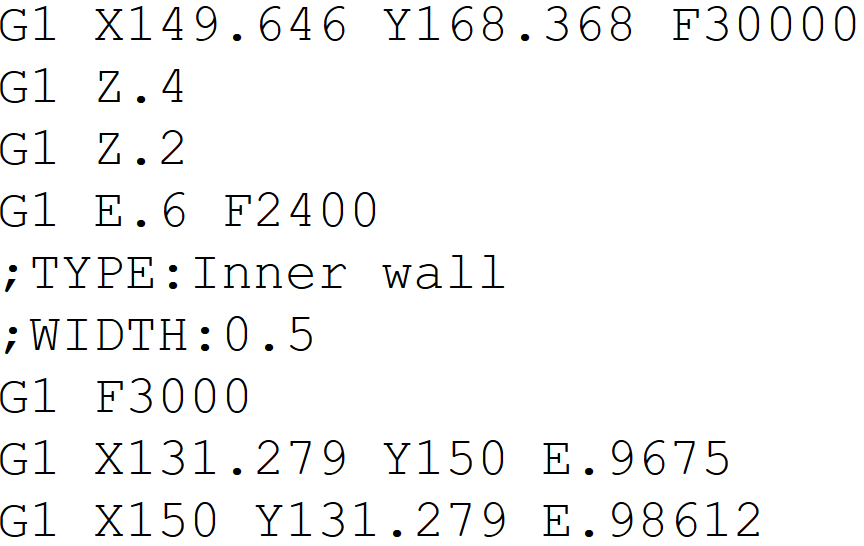

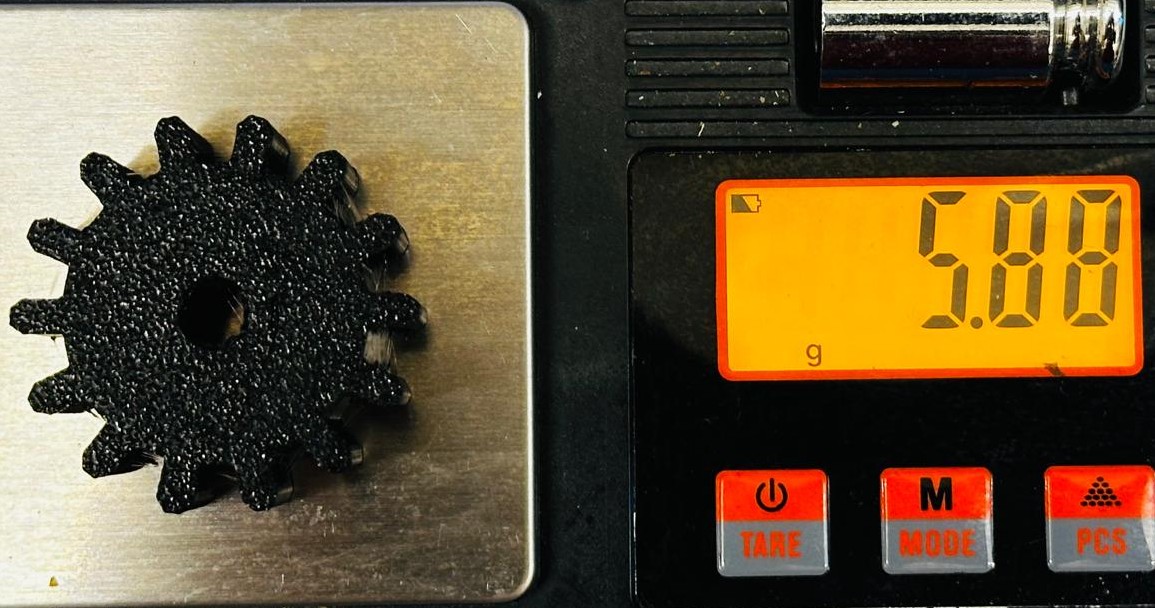

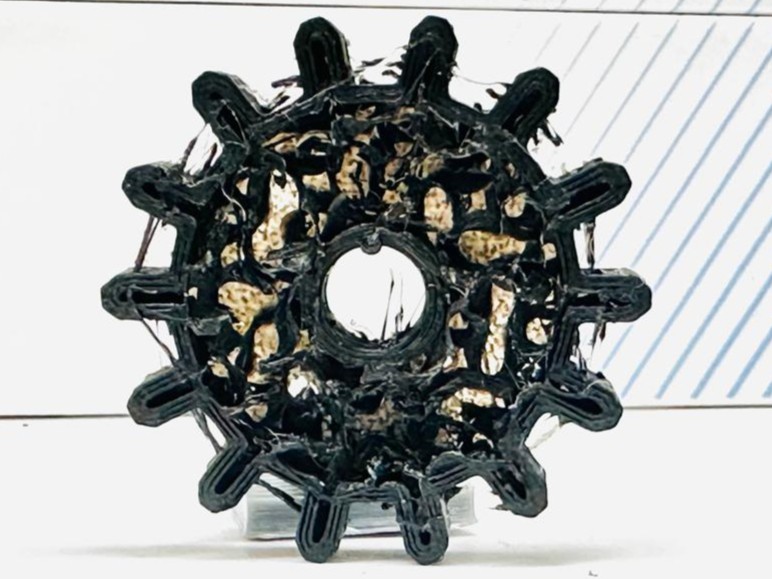

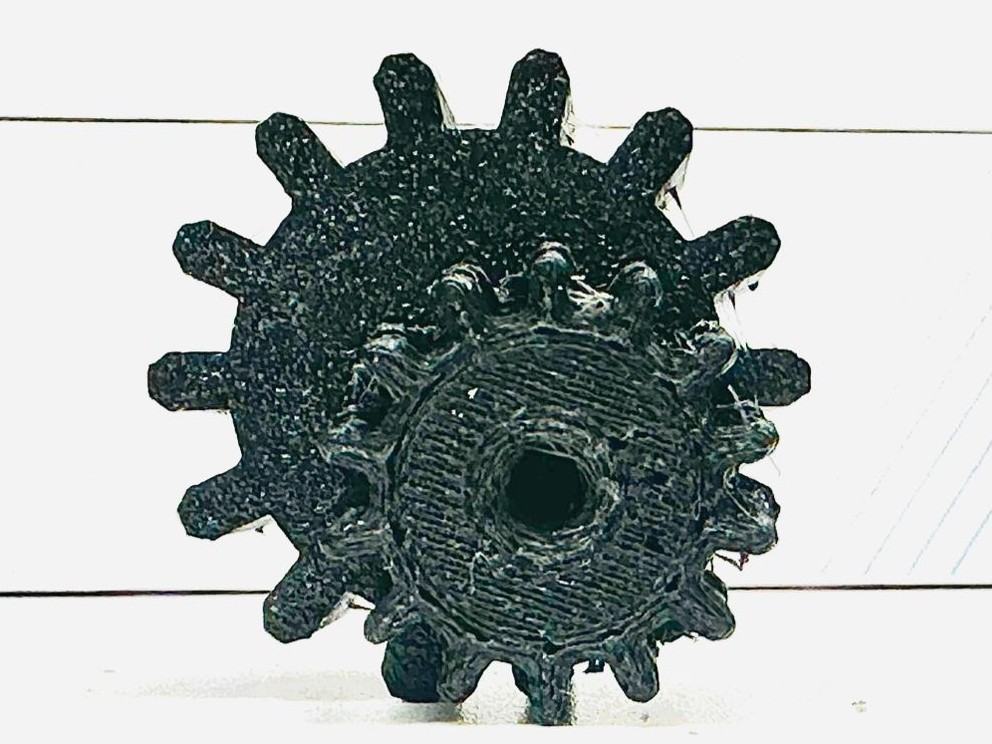

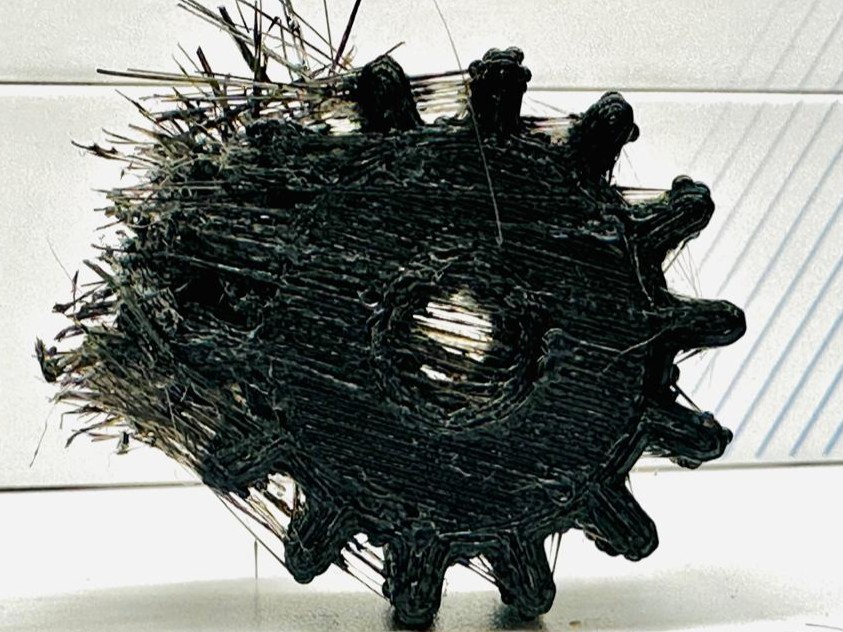

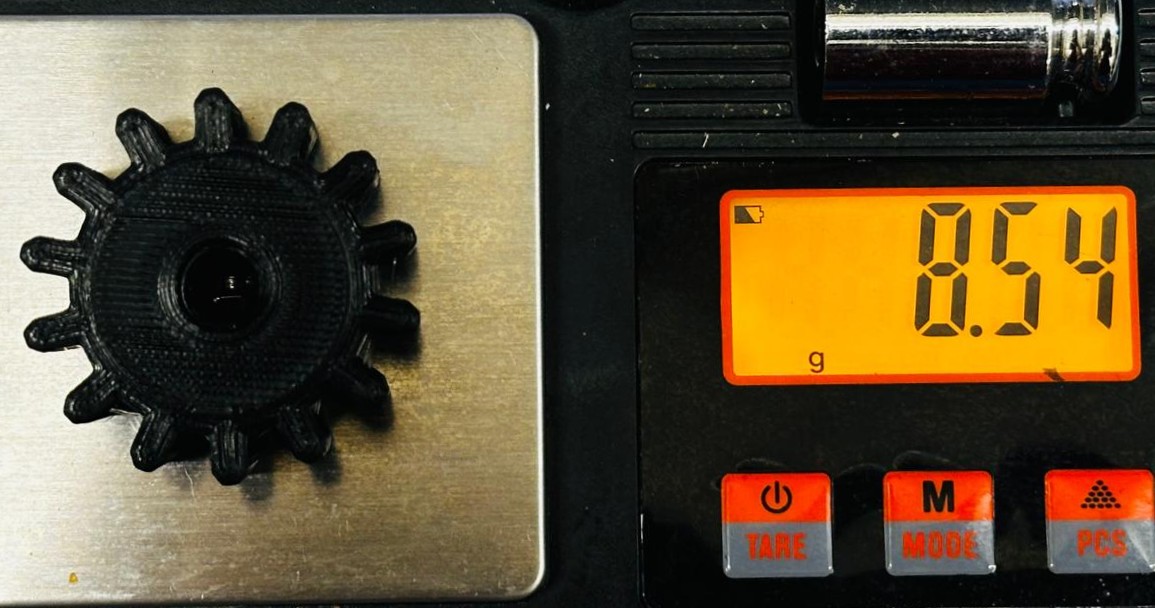

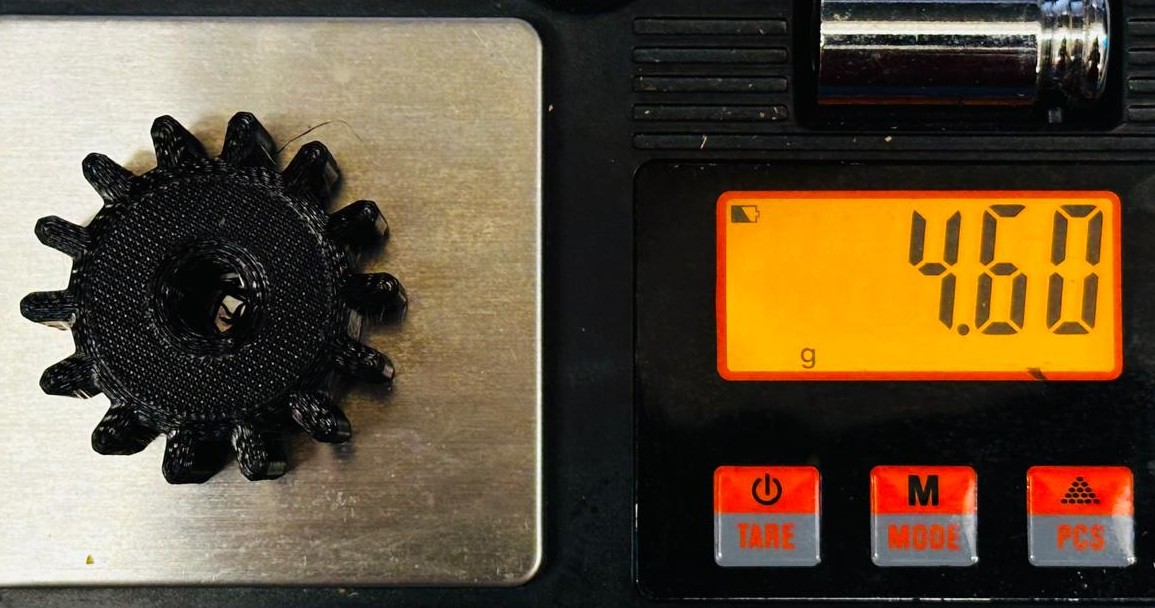

In this work, we explore stealthy G-code manipulation strategies that by-

pass traditional STL-based validation and compromise the final print without

overt disruption. We identify three strategies that exploit realistic threat models

in networked 3D printing setups, Deferred Print Exploit, Access-Jammed

G-code Swap, and Execution-Phase Tampering. Each of these approaches

was implemented in a realistic threat model, such as Under-extrusion, Over-

extrusion, Noisy G-code Injection, Dimensionality Change, and Internal Cavity

Insertion. Assuming the adversary has access to the printer’s file system or con-

trol interface (e.g., compromised print servers, remote access tools, or insider

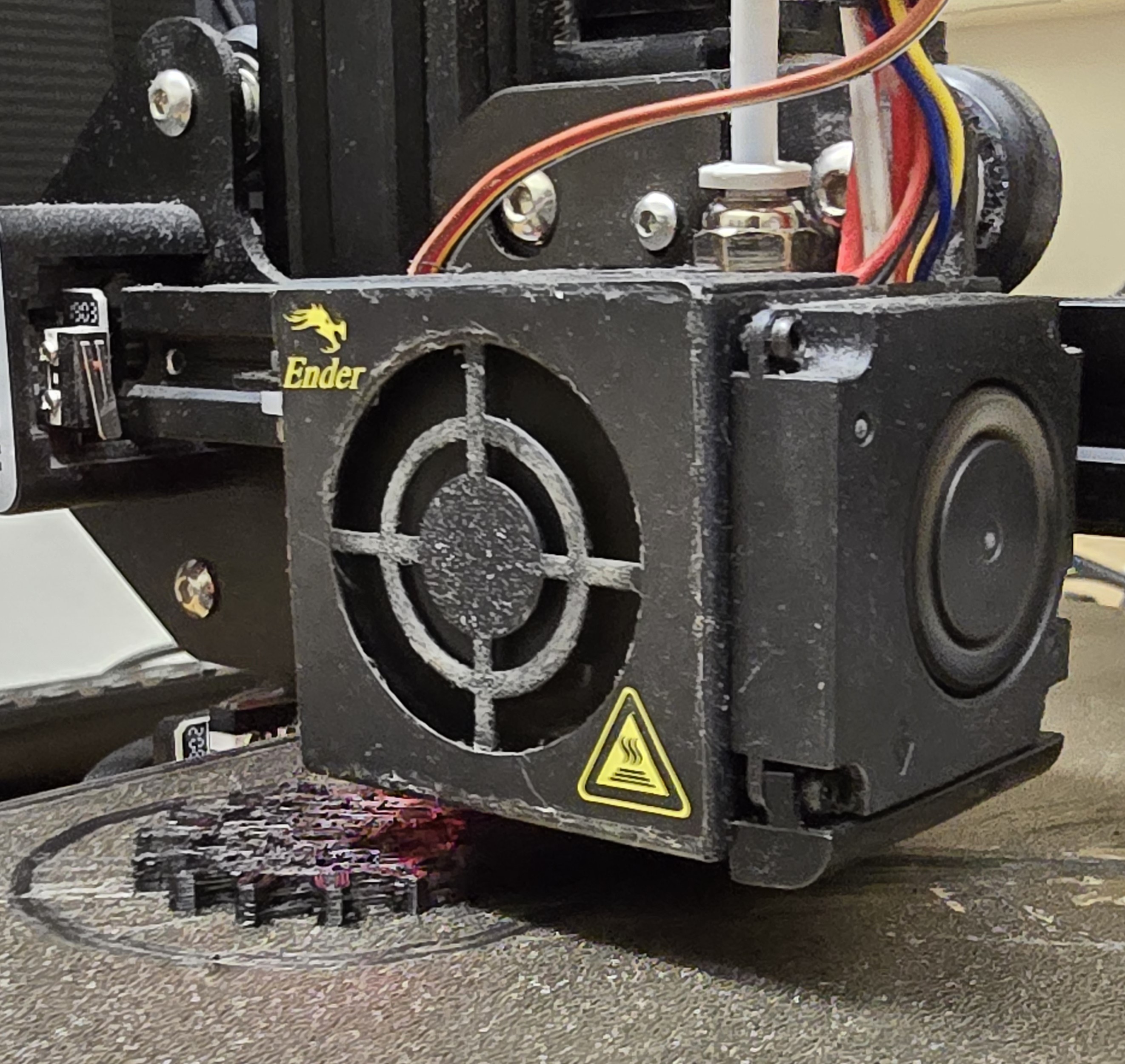

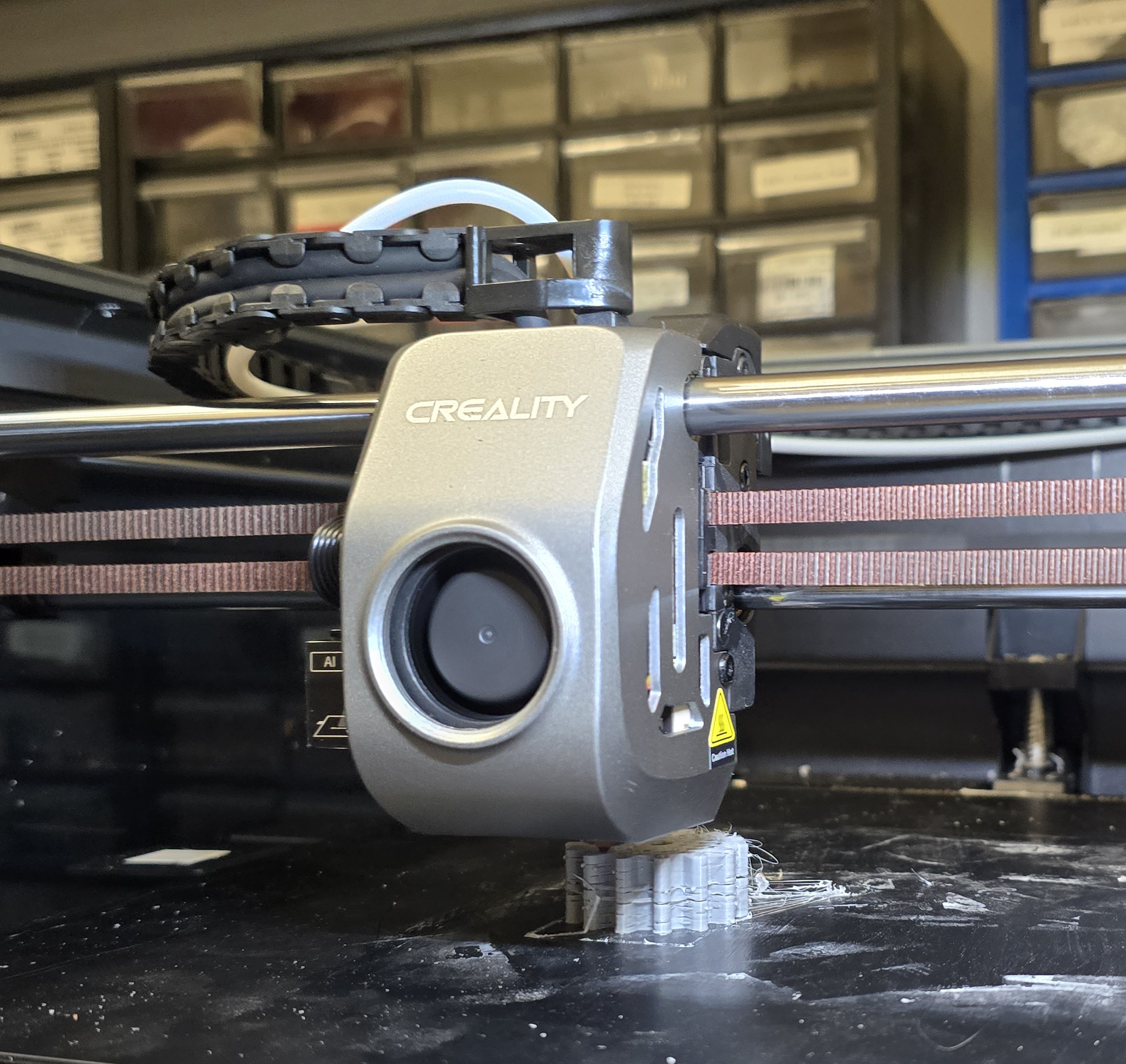

threats). We conducted experiments on two widely used FDM platforms, Cre-

ality K1 Max and Creality Ender 3, with distinct system architectures (Klipper

and OctoPrint, respectively), demonstrating the feasibility and cross-platform

generalizability of these threats.
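Some of the listed payloads leave distinct fingerprints in the G-code itself. The sketch below summarizes each layer by its extrusion total and XY footprint and compares a received file against a trusted reference. It assumes absolute XYZ positioning, relative extrusion (M83), layers identified by Z height, and a 2% relative tolerance, all chosen purely for illustration; the function names are hypothetical. Note that it needs a golden reference, which is exactly the dependency the authors identify as a limitation of prior approaches; their proposed IDS works from machine logs instead.

```python
import re

MOVE = re.compile(r"^G[01]\b")
AXIS = re.compile(r"([XYZE])(-?\d*\.?\d+)")


def layer_summary(gcode: str) -> dict[float, dict[str, float]]:
    """Per-layer extrusion total and XY footprint (width, depth).

    Assumes absolute XYZ (G90), relative extrusion (M83), and that a layer
    is identified by the current Z height.
    """
    z, layers = 0.0, {}
    for raw in gcode.splitlines():
        line = raw.split(";", 1)[0].strip().upper()
        if not MOVE.match(line):
            continue
        words = {axis: float(v) for axis, v in AXIS.findall(line)}
        z = words.get("Z", z)
        if words.get("E", 0.0) <= 0 or "X" not in words or "Y" not in words:
            continue  # only extruding moves with explicit XY endpoints count
        acc = layers.setdefault(z, {"E": 0.0, "xs": [], "ys": []})
        acc["E"] += words["E"]
        acc["xs"].append(words["X"])
        acc["ys"].append(words["Y"])
    return {
        z: {"E": acc["E"],
            "width": max(acc["xs"]) - min(acc["xs"]),
            "depth": max(acc["ys"]) - min(acc["ys"])}
        for z, acc in layers.items()
    }


def compare_layers(reference: str, received: str, tol: float = 0.02) -> list[tuple[float, str]]:
    """List (z, finding) pairs where the received file deviates from the reference."""
    ref, got = layer_summary(reference), layer_summary(received)
    findings = []
    for z in sorted(set(ref) | set(got)):
        if z not in ref or z not in got:
            findings.append((z, "layer missing or added"))
            continue
        r, g = ref[z], got[z]
        if abs(g["E"] - r["E"]) > tol * r["E"]:
            findings.append((z, "extrusion changed"))
        if any(abs(g[k] - r[k]) > tol * r[k] for k in ("width", "depth")):
            findings.append((z, "footprint changed"))
    return findings


def square(z: float, side: float, e: float) -> str:
    """Toy layer: one extruded square perimeter of the given side at height z."""
    return "\n".join([
        f"G1 Z{z} F600",
        "G1 X0 Y0 F1800",
        f"G1 X{side} Y0 E{e}",
        f"G1 X{side} Y{side} E{e}",
        f"G1 X0 Y{side} E{e}",
        f"G1 X0 Y0 E{e}",
    ])


reference = "\n".join(["M83", square(0.2, 20, 0.8), square(0.4, 20, 0.8)])
shrunk = "\n".join(["M83", square(0.2, 20, 0.8), square(0.4, 19, 0.8)])   # dimensionality change
starved = "\n".join(["M83", square(0.2, 20, 0.8), square(0.4, 20, 0.6)])  # under-extrusion

print(compare_layers(reference, shrunk))   # [(0.4, 'footprint changed')]
print(compare_layers(reference, starved))  # [(0.4, 'extrusion changed')]
```

In this toy setting a dimensionality change surfaces as a footprint change and under-extrusion as an extrusion change; a payload that only removes interior material would likely leave the outer footprint intact and show up, if at all, only in the extrusion total.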

A central challenge li

…(Full text truncated)…
