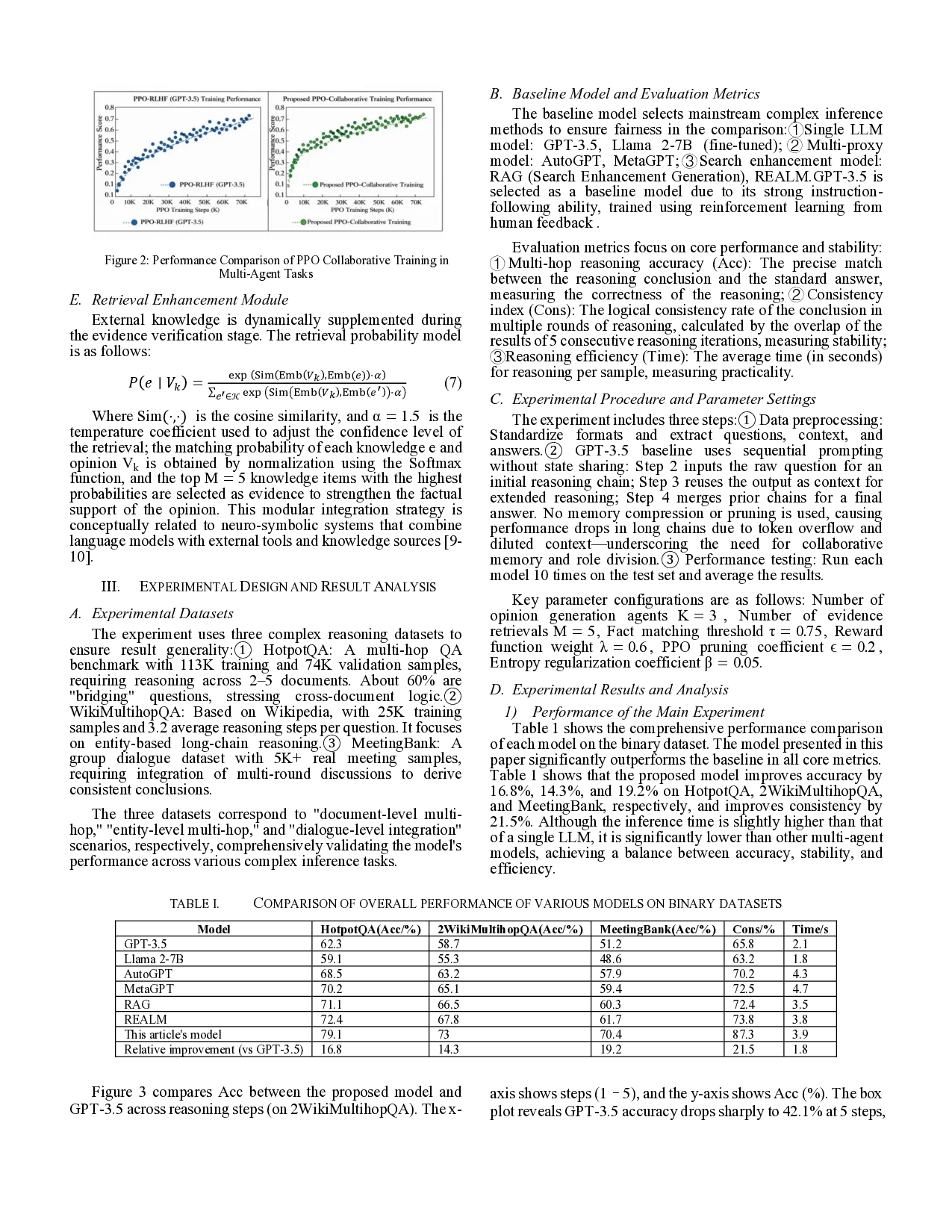

This study proposes a group deliberation multi-agent dialogue model to optimize the limitations of single-language models for complex reasoning tasks. The model constructs a threelevel role division architecture of "generation -verificationintegration." An opinion-generating agent produces differentiated reasoning perspectives, an evidence-verifying agent matches external evidence and quantifies the support of facts, and a consistency-arbitrating agent integrates logically coherent conclusions. A self-game mechanism is incorporated to expand the reasoning path, and a retrieval enhancement module supplements dynamic knowledge. A composite reward function is designed, and an improved proximal strategy is used to optimize collaborative training. Experiments show that the model improves multi-hop reasoning accuracy by 16.8%, 14.3%, and 19.2% on the HotpotQA, 2WikiMultihopQA, and MeetingBank datasets, respectively, and improves consistency by 21.5%. Its reasoning efficiency surpasses mainstream multi-agent models, achieving a balance between accuracy, stability, and efficiency, providing an efficient technical solution for complex reasoning.

Deep Dive into Arxiv 2512.24613.

This study proposes a group deliberation multi-agent dialogue model to optimize the limitations of single-language models for complex reasoning tasks. The model constructs a threelevel role division architecture of “generation -verificationintegration.” An opinion-generating agent produces differentiated reasoning perspectives, an evidence-verifying agent matches external evidence and quantifies the support of facts, and a consistency-arbitrating agent integrates logically coherent conclusions. A self-game mechanism is incorporated to expand the reasoning path, and a retrieval enhancement module supplements dynamic knowledge. A composite reward function is designed, and an improved proximal strategy is used to optimize collaborative training. Experiments show that the model improves multi-hop reasoning accuracy by 16.8%, 14.3%, and 19.2% on the HotpotQA, 2WikiMultihopQA, and MeetingBank datasets, respectively, and improves consistency by 21.5%. Its reasoning efficiency surpasses mainst

In real-world scenarios of complex reasoning tasks (such as multi-hop question answering and group decision-making), multi-agent collaboration is a core requirement for overcoming the bottleneck of single-model reasoning depth. These tasks require integrating multi-dimensional information and verifying multi-source facts. Prior work has shown that multiagent interaction such as debate can improve factuality and reasoning robustness, implicitly addressing failure modes of single-model reasoning. Furthermore, factual accuracy relies on pre-trained knowledge, making it difficult to dynamically supplement external information, resulting in insufficient stability and reliability in complex tasks. This study proposes a group deliberation multi-agent dialogue model: constructing a collaborative reasoning closed loop through role-based LLM agents (viewpoint generation, evidence verification, consistency arbitration), introducing a self-game mechanism to generate multi-path reasoning chains to expand perspectives, combining a retrieval enhancement module to dynamically supplement external knowledge to strengthen factual accuracy, and designing a reward model based on factual consistency and logical coherence, using a proximal strategy optimization to achieve multi-agent collaborative training. Multi-agent reinforcement learning has been extensively studied across a wide range of collaborative decision-making tasks.

This model constructs a three-level collaborative architecture of “generation -verification -integration” (Figure 1), following the emerging paradigm of role-based multi-agent language model systems [1][2]. Through the division of labor and cooperation among LLM agents with differentiated functions, the architecture enables structured collaboration, similar to recent communicative agent frameworks [3]. The architecture starts with task input, and through a closed-loop process of opinion generation, evidence verification, and consistency arbitration, it outputs reasoning results that are diverse, factual, and logical. n Figure 1, arrows from each agent to the “Task Input” block indicate persistent read-only access, not reverse data flow. Each agent relies on the full task input throughout reasoning: the Viewpoint Generation Agent uses it to guide diverse trajectories, the Evidence Verification Agent aligns retrieved facts with the original context, and the Consistency Arbitration Agent ensures semantic coherence in final outputs. This context-preserving design maintains factual grounding and consistency in multiagent collaboration.

The core function of this agent is to generate differentiated reasoning viewpoints based on the task input, avoiding the limitations of a single perspective. The generation of multiple differentiated viewpoints helps mitigate single-path reasoning bias, which is consistent with findings from self-consistency based reasoning methods [4]. Its generation process introduces a diversity constraint mechanism, mathematically expressed as:

Wherein, LLM V represents a dedicated LLM for opinion generation (such as a fine-tuned Llama 2), ω k is the viewpoint weight vector, following a multivariate normal distribution with mean μ and covariance matrix Σ, used to control the direction of opinion differentiation; This distribution is used for three reasons. First, the multivariate normal distribution offers a continuous, symmetric space around the reasoning center μ, supporting diverse yet coherent viewpoint generation without directional bias. Second, its covariance matrix Σ allows control over inter-factor correlations, enabling structured variations across reasoning dimensions. Third, Gaussian parameters align well with gradient-based learning and self-game updates, ensuring stable exploration and convergence. Thus, the normal distribution acts as an effective inductive bias balancing diversity, control, and stability, rather than assuming a fixed probabilistic form. Here, Emb(Q) is the task input embedding, ⊙ denotes element-wise multiplication, and weight modulation lets each Vk attend to different reasoning aspects. The selfgame mechanism explores varied reasoning paths, akin to treestructured deliberation.

This agent is responsible for matching factual evidence with each candidate opinion V k and verifying its reasonableness. This retrieval-enhanced verification process is inspired by retrieval-augmented generation frameworks that integrate external knowledge to improve factual grounding in language models [5]. Its core function is to calculate the factual matching degree between the opinion and the evidence:

In the formula, ℰ k is the set of evidence related to V k retrieved from the external knowledge base 𝒦 , |ℰ k |represents the number of evidence (the first 5 are taken by default); Emb(⋅) is the embedding function based on Sentence-BERT, Tr(⋅) represents the matrix trace operation, ‖ ⋅ ‖ F is the Frobenius norm, which is essentially an improved cosine similarity calculation,

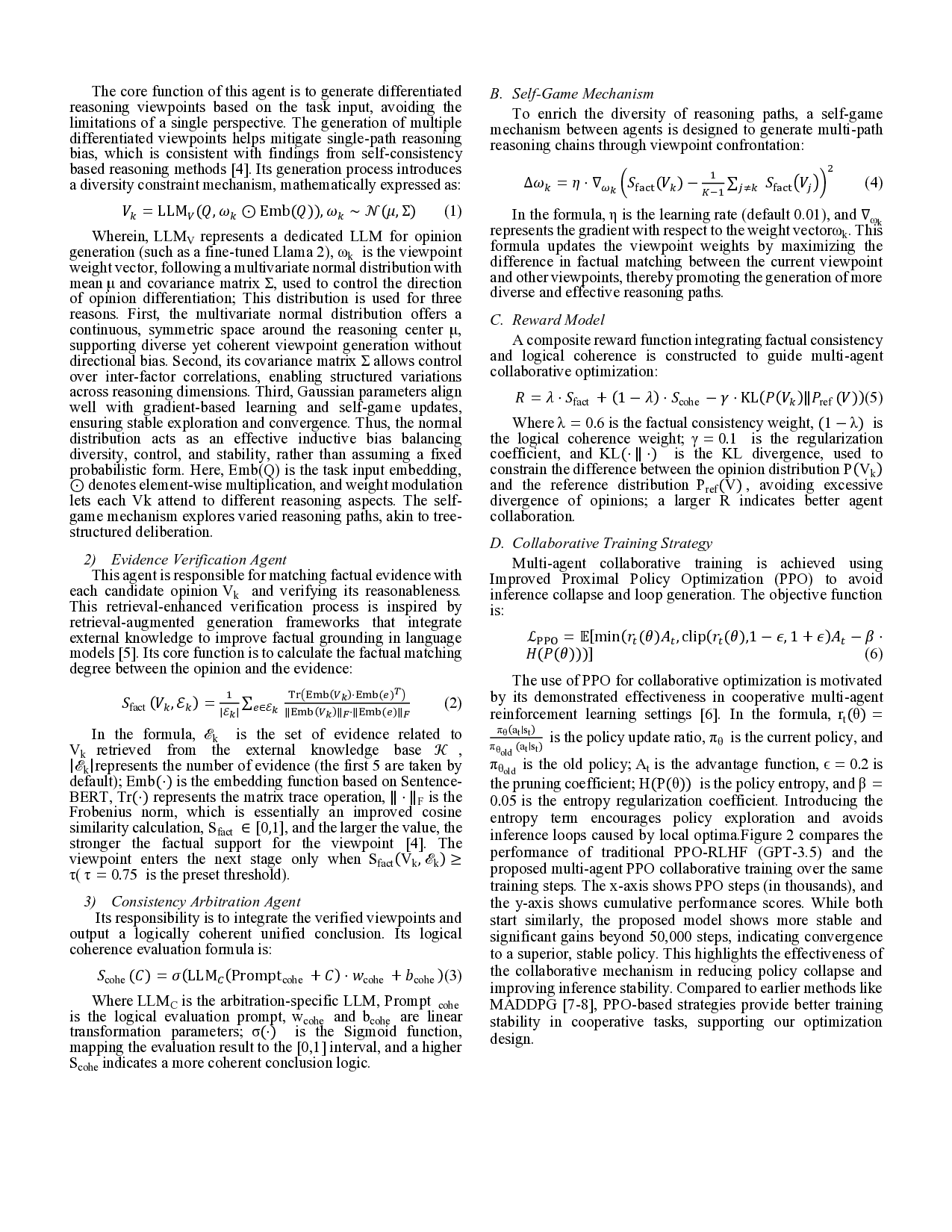

…(Full text truncated)…

This content is AI-processed based on ArXiv data.