Explicit Abstention Knobs for Predictable Reliability in Video Question Answering

High-stakes deployment of vision-language models (VLMs) requires selective prediction, where systems abstain when uncertain rather than risk costly errors. We investigate whether confidence-based abstention provides reliable control over error rates …
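As an illustration of what confidence-based abstention means in practice, the following minimal sketch answers only when a confidence score clears a threshold and reports the resulting coverage and selective risk (error rate on answered questions). The function name `selective_metrics`, the example scores, and the thresholds are hypothetical and chosen for illustration; they are not taken from the paper.

```python
from typing import Sequence, Tuple


def selective_metrics(
    confidences: Sequence[float],
    correct: Sequence[bool],
    threshold: float,
) -> Tuple[float, float]:
    """Return (coverage, selective_risk) for a confidence threshold.

    The system answers a question only if its confidence >= threshold;
    otherwise it abstains. Coverage is the fraction of questions answered,
    and selective risk is the error rate among the answered questions.
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    if not confidences:
        return 0.0, 0.0

    # Keep the correctness flags of the questions that were answered.
    answered = [ok for conf, ok in zip(confidences, correct) if conf >= threshold]
    coverage = len(answered) / len(confidences)
    if not answered:
        # Abstaining on everything: no answered questions, so no errors made.
        return coverage, 0.0
    risk = 1.0 - sum(answered) / len(answered)
    return coverage, risk


# Hypothetical scores for four questions (illustrative only).
confs = [0.95, 0.80, 0.60, 0.40]
right = [True, True, False, False]

for t in (0.5, 0.7):
    cov, risk = selective_metrics(confs, right, t)
    print(f"threshold={t:.1f} coverage={cov:.2f} selective_risk={risk:.2f}")
```

Raising the threshold in this toy example trades coverage for lower selective risk; whether that trade-off stays predictable on real data is the question the abstract raises.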

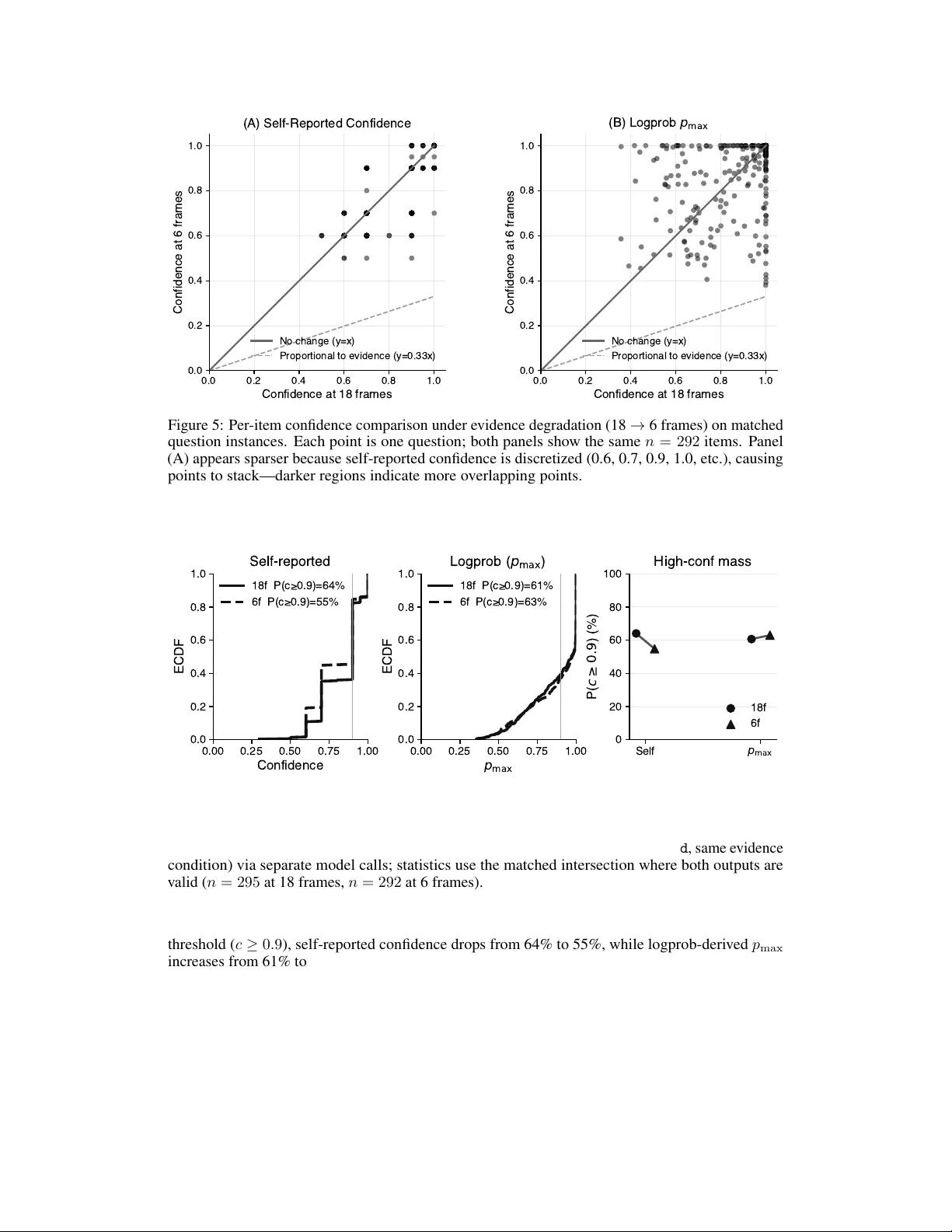

Authors: Jorge Ortiz