Semi-overlapping Multi-bandit Best Arm Identification for Sequential Support Network Learning

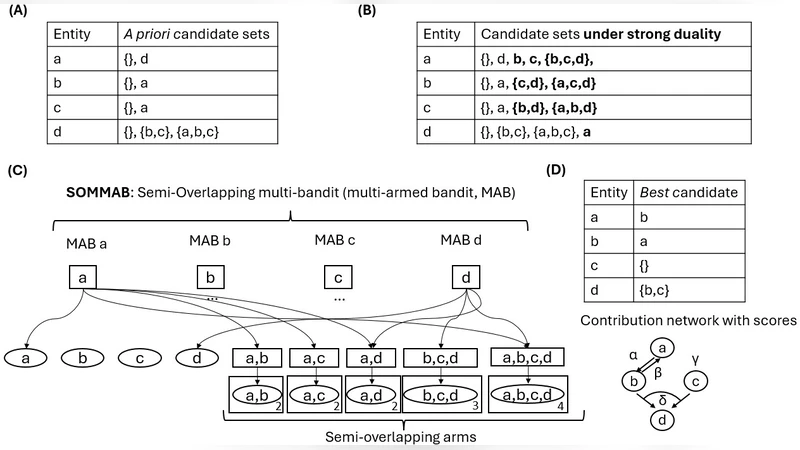

Many modern AI and ML problems require evaluating partners’ contributions through shared yet asymmetric, computationally intensive processes and the simultaneous selection of the most beneficial candidates. Sequential approaches to these problems can be unified under a new framework, Sequential Support Network Learning (SSNL), in which the goal is to select the most beneficial candidate set of partners for all participants using trials; that is, to learn a directed graph that represents the highest-performing contributions. We demonstrate that a new pure-exploration model, the semi-overlapping multi-(multi-armed) bandit (SOMMAB), in which a single evaluation provides distinct feedback to multiple bandits due to structural overlap among their arms, can be used to learn a support network from sparse candidate lists efficiently. We develop a generalized GapE algorithm for SOMMABs and derive new exponential error bounds that improve the best known constant in the exponent for multi-bandit best-arm identification. The bounds scale linearly with the degree of overlap, revealing significant sample-complexity gains arising from shared evaluations. From an application point of view, this work provides a theoretical foundation and improved performance guarantees for sequential learning tools for identifying support networks from sparse candidates in multiple learning problems, such as in multi-task learning (MTL), auxiliary task learning (ATL), federated learning (FL), and in multi-agent systems (MAS).

💡 Research Summary

The paper introduces a unified theoretical framework called Sequential Support Network Learning (SSNL) for problems where multiple participants must evaluate asymmetric, computationally intensive contributions while simultaneously selecting the most beneficial set of partners. The goal of SSNL is to learn a directed support graph that captures the highest‑performing contributions across all participants. To address this, the authors propose a novel pure‑exploration model named the semi‑overlapping multi‑(multi‑armed) bandit (SOMMAB). In a SOMMAB, a single trial can provide distinct feedback to several bandits because the arms of those bandits structurally overlap. This overlap reflects real‑world scenarios such as multi‑task learning (MTL) where several tasks share auxiliary tasks, federated learning (FL) where many clients update a common model, and multi‑agent systems (MAS) where agents observe the same environment.
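The overlap structure can be illustrated with a small sketch. Everything here is a hypothetical toy instance, not the paper's notation: the participants `A`, `B`, `C`, the `OVERLAP` map, and the `record` helper are assumptions chosen to show one evaluation delivering a different reward to an arm in each of two bandits.

```python
from collections import defaultdict

# Hypothetical overlap map: each evaluation feeds one arm in each of two bandits.
# A key (bandit, arm) reads as "participant `bandit` considers partner `arm`".
OVERLAP = {
    "train_A_with_B": [("A", "B"), ("B", "A")],
    "train_A_with_C": [("A", "C"), ("C", "A")],
    "train_B_with_C": [("B", "C"), ("C", "B")],
}

counts = defaultdict(int)    # (bandit, arm) -> number of observations
means = defaultdict(float)   # (bandit, arm) -> running mean reward


def record(evaluation, rewards):
    """Apply one evaluation's distinct rewards to every arm it overlaps."""
    for key in OVERLAP[evaluation]:
        counts[key] += 1
        means[key] += (rewards[key] - means[key]) / counts[key]


# One trial, two different rewards: A gains a lot from B, B gains little from A.
record("train_A_with_B", {("A", "B"): 0.9, ("B", "A"): 0.2})
```

A single call to `record` advances the statistics of two bandits, which is the source of the sample savings the summary attributes to shared evaluations.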

The core algorithmic contribution is a generalized Gap‑based Exploration (GapE) procedure adapted to SOMMABs. Traditional GapE concentrates pulls on arms whose estimated gap to the best arm is small relative to their remaining uncertainty, but it assumes bandits whose arms do not overlap. The generalized version simultaneously updates gap estimates for all bandits using the shared observations, thereby exploiting overlap to reduce uncertainty more quickly. At each round the algorithm selects the most uncertain arm, pulls it, and propagates the resulting observation to every bandit that contains that arm. Arms that become statistically unlikely to be optimal are eliminated from all bandits at once.
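A minimal sketch of a gap-based selection loop with shared observations follows. It is not the paper's algorithm: it uses a GapE-style index (negative estimated gap plus an exploration bonus) instead of explicit elimination, and the rule for scoring an evaluation (the largest index among the arms it feeds) is an assumption made for illustration. The names `gape_overlap`, `EVALS`, `TRUE_MEANS`, and the constants `a` and `b` are hypothetical.

```python
import math
import random


def gape_overlap(evals, sample, budget, a=1.0, b=1.0):
    """evals: evaluation -> [(bandit, arm)]; sample(ev) -> {(bandit, arm): reward}."""
    keys = sorted({k for arms in evals.values() for k in arms})
    count = {k: 0 for k in keys}
    mean = {k: 0.0 for k in keys}

    def update(ev):
        # One evaluation yields a distinct reward for every arm it feeds.
        rewards = sample(ev)
        for k in evals[ev]:
            count[k] += 1
            mean[k] += (rewards[k] - mean[k]) / count[k]

    def index(k):
        # Negative estimated gap to the best rival in the same bandit,
        # plus a bonus that shrinks as the arm is observed more often.
        rival = max(mean[j] for j in keys if j[0] == k[0] and j != k)
        return -abs(rival - mean[k]) + b * math.sqrt(a / count[k])

    for ev in evals:  # initialization: run every evaluation once
        update(ev)
    for _ in range(budget - len(evals)):
        # Assumed rule: score an evaluation by the best index among its arms.
        update(max(evals, key=lambda ev: max(index(k) for k in evals[ev])))

    bandits = sorted({k[0] for k in keys})
    return {m: max((k for k in keys if k[0] == m), key=mean.get)[1] for m in bandits}


# Hypothetical instance: three participants, each choosing one partner.
EVALS = {
    "AB": [("A", "B"), ("B", "A")],
    "AC": [("A", "C"), ("C", "A")],
    "BC": [("B", "C"), ("C", "B")],
}
TRUE_MEANS = {("A", "B"): 0.9, ("A", "C"): 0.5, ("B", "A"): 0.2,
              ("B", "C"): 0.7, ("C", "A"): 0.6, ("C", "B"): 0.4}

rng = random.Random(0)
noisy = lambda ev: {k: rng.gauss(TRUE_MEANS[k], 0.1) for k in EVALS[ev]}
recommended = gape_overlap(EVALS, noisy, budget=60)
```

The returned dictionary maps each participant to its recommended partner, that is, one outgoing edge per node of the learned support graph. The sketch assumes every bandit has at least two arms, since the gap is computed against a rival arm.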

The authors derive two main theoretical results. First, they prove an exponential error bound that improves the best known constant in the exponent for multi‑bandit best‑arm identification. Specifically, for total error probability \(\delta\), …
