📝 Original Info

- Title: The Agentic Leash: Extracting Causal Feedback Fuzzy Cognitive Maps with LLMs

- ArXiv ID: 2601.00097

- Date: 2025-12-31

- Authors: Akash Kumar Panda, Olaoluwa Adigun, Bart Kosko

📝 Abstract

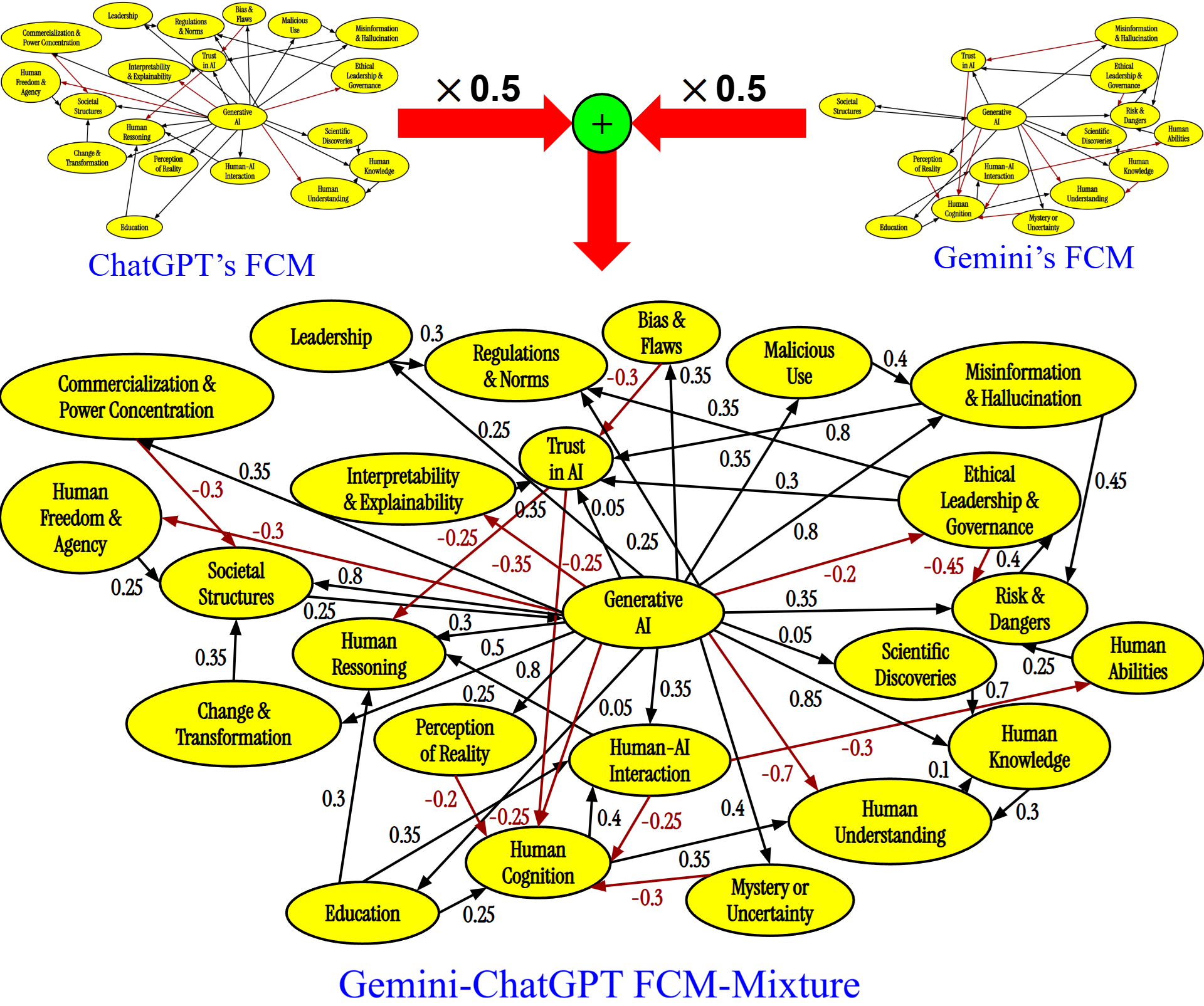

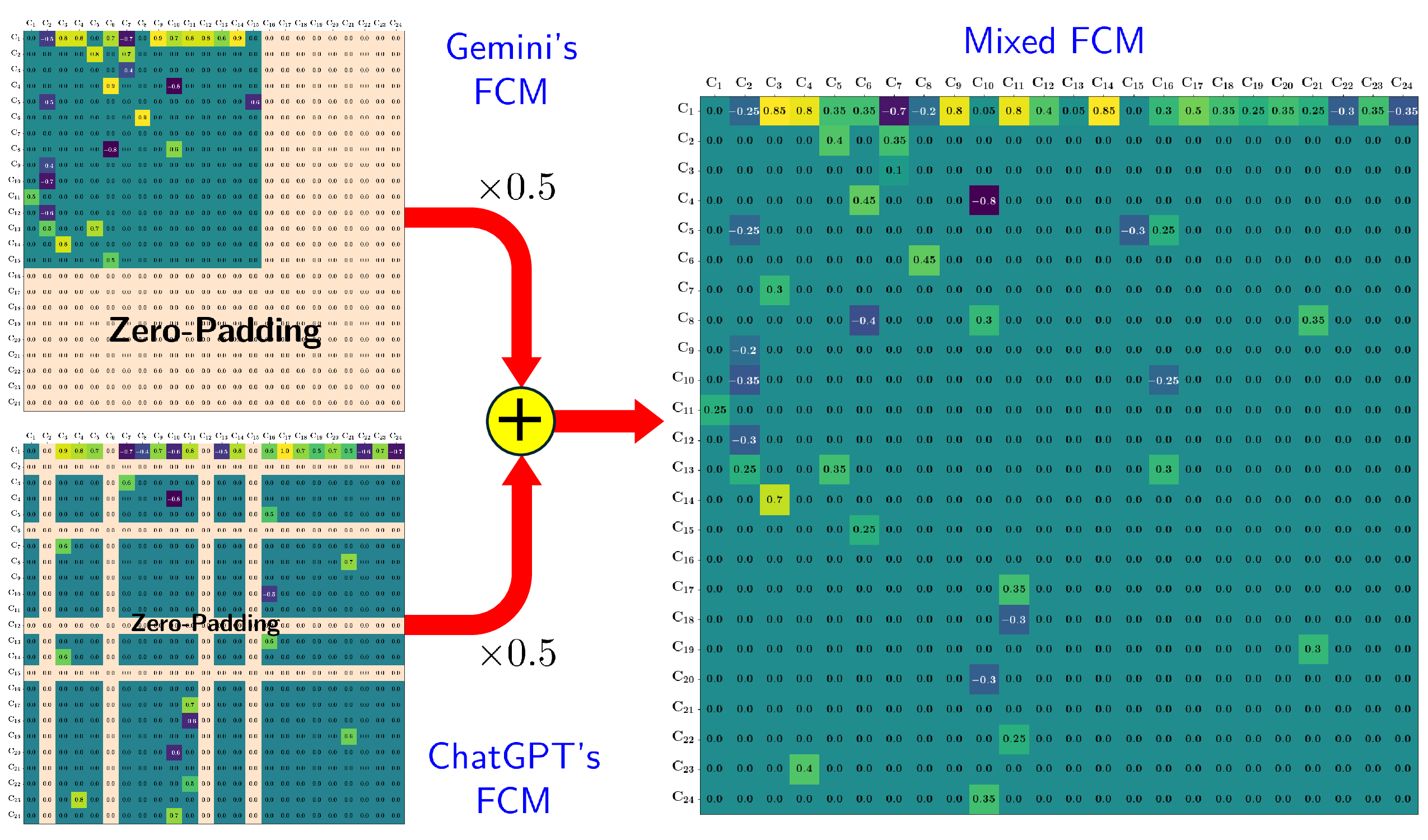

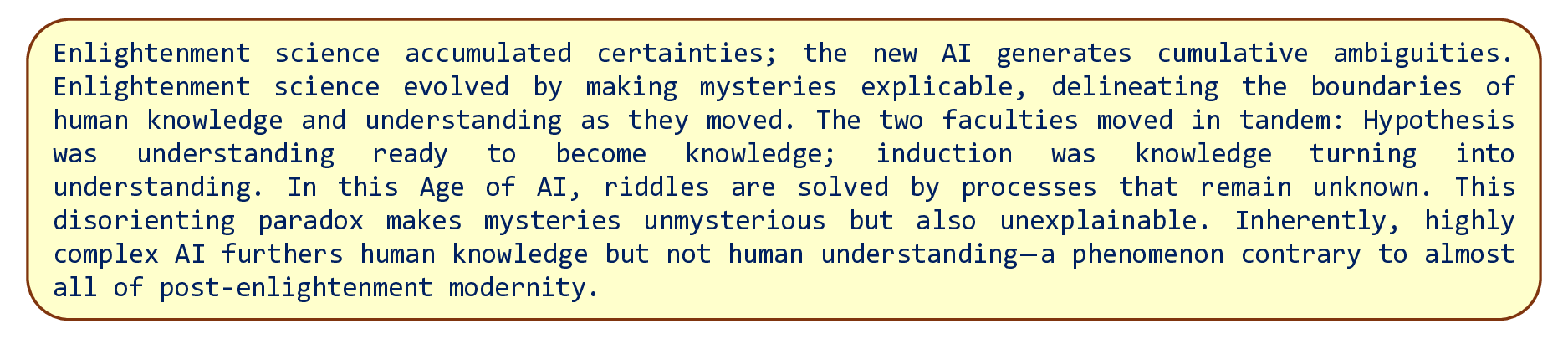

We design a large-language-model (LLM) agent that extracts causal feedback fuzzy cognitive maps (FCMs) from raw text. The causal learning or extraction process is agentic both because of the LLM's semiautonomy and because ultimately the FCM dynamical system's equilibria drive the LLM agents to fetch and process causal text. The fetched text can in principle modify the adaptive FCM causal structure and so modify the source of its quasi-autonomy: its equilibrium limit cycles and fixed-point attractors. This bidirectional process endows the evolving FCM dynamical system with a degree of autonomy while still staying on its agentic leash. We show in particular that a sequence of three finely tuned system instructions guides an LLM agent as it systematically extracts key nouns and noun phrases from text, as it extracts FCM concept nodes from among those nouns and noun phrases, and then as it extracts or infers partial or fuzzy causal edges between those FCM nodes. We test this FCM generation on a recent essay about the promise of AI from the late diplomat and political theorist Henry Kissinger and his colleagues. This three-step process produced FCM dynamical systems that converged to the same equilibrium limit cycles as did the human-generated FCMs even though the human-generated FCM differed in the number of nodes and edges. A final FCM mixed generated FCMs from separate Gemini and ChatGPT LLM agents. The mixed FCM absorbed the equilibria of its dominant mixture component but also created new equilibria of its own to better approximate the underlying causal dynamical system.


📄 Full Content

The Agentic Leash: Extracting Causal Feedback Fuzzy Cognitive Maps with Mixed Large Language Models

Akash Kumar Panda1, Olaoluwa Adigun2, and Bart Kosko3

1 University of Southern California, Los Angeles, CA 90007, USA

akashpan@usc.edu

2 Florida International University, Miami, FL 33199, USA

olaadigu@fiu.edu

3 University of Southern California, Los Angeles, CA 90007, USA

kosko@usc.edu

Abstract. We design a large-language-model (LLM) agent that extracts causal feedback fuzzy cognitive maps (FCMs) from raw text. The causal learning or extraction process is agentic both because of the LLM’s semi-autonomy and because ultimately the FCM dynamical system’s equilibria drive the LLM agents to fetch and process causal text. The fetched text can in principle modify the adaptive FCM causal structure and so modify the source of its quasi-autonomy: its equilibrium limit cycles and fixed-point attractors. This bidirectional process endows the evolving FCM dynamical system with a degree of autonomy while still staying on its agentic leash. We show in particular that a sequence of three finely tuned system instructions guides an LLM agent as it systematically extracts key nouns and noun phrases from text, as it extracts FCM concept nodes from among those nouns and noun phrases, and then as it extracts or infers partial or fuzzy causal edges between those FCM nodes. We test this FCM generation on a recent essay about the promise of AI from the late diplomat and political theorist Henry Kissinger and his colleagues. This three-step process produced FCM dynamical systems that converged to the same equilibrium limit cycles as did the human-generated FCMs even though the human-generated FCM differed in the number of nodes and edges. A final FCM mixed generated FCMs from separate Gemini and ChatGPT LLM agents. The mixed FCM absorbed the equilibria of its dominant mixture component but also created new equilibria of its own to better approximate the underlying causal dynamical system.

Keywords: fuzzy cognitive maps · causal reasoning · agentic LLMs

1 The Agentic Leash: Growing Fuzzy Cognitive Map Dynamical Systems from Text

We show how agentic passes through structured LLM agents can grow causal feedback fuzzy cognitive maps (FCMs) from sampled text documents.
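The three passes named in the abstract (nouns and noun phrases, then concept nodes, then fuzzy causal edges) can be sketched as a small pipeline. This is only an illustration under stated assumptions: the three instruction strings are hypothetical placeholders and not the paper's tuned system instructions, the `llm` callable and its JSON reply format are assumptions, and `stub_llm` returns made-up values (including the edge weights) so the sketch runs without any model.

```python
import json
from typing import Callable, Dict, List, Tuple

# Hypothetical placeholder instructions; the paper's tuned prompts are not shown here.
NOUN_PROMPT = "List the key nouns and noun phrases in the text as a JSON array of strings."
NODE_PROMPT = "From the candidate phrases, return the FCM concept nodes as a JSON array of strings."
EDGE_PROMPT = ("For the concept nodes, return causal edges as a JSON array of "
               "[cause, effect, weight] triples with weight in [-1, 1].")

# Assumed interface: (system_instruction, user_content) -> reply text.
LLM = Callable[[str, str], str]
Edges = Dict[Tuple[str, str], float]


def extract_fcm(text: str, llm: LLM) -> Tuple[List[str], Edges]:
    """Run the three passes in order and return (nodes, signed fuzzy edges)."""
    # Pass 1: candidate nouns and noun phrases.
    nouns = json.loads(llm(NOUN_PROMPT, text))
    # Pass 2: concept nodes chosen from among those candidates.
    nodes = json.loads(llm(NODE_PROMPT, json.dumps({"text": text, "candidates": nouns})))
    # Pass 3: partial (fuzzy) causal edges between the chosen nodes.
    raw_edges = json.loads(llm(EDGE_PROMPT, json.dumps({"text": text, "nodes": nodes})))

    edges: Edges = {}
    for cause, effect, weight in raw_edges:
        # Keep only edges whose endpoints are known nodes; clip weights to [-1, 1].
        if cause in nodes and effect in nodes:
            edges[(cause, effect)] = max(-1.0, min(1.0, float(weight)))
    return nodes, edges


def stub_llm(system: str, user: str) -> str:
    """Canned replies standing in for a real model call (all values are made up)."""
    if system == NOUN_PROMPT:
        return json.dumps(["Human-AI Interaction", "Human Cognition", "newspaper"])
    if system == NODE_PROMPT:
        return json.dumps(["Human-AI Interaction", "Human Cognition"])
    return json.dumps([
        ["Human-AI Interaction", "Human Cognition", -0.6],
        ["Human Cognition", "Human-AI Interaction", 0.7],
        ["Human Cognition", "newspaper", 0.5],  # dropped: "newspaper" is not a node
    ])


nodes, edges = extract_fcm("sample text", stub_llm)
print(nodes)
print(edges)
```

Passing the model as a plain callable keeps the sketch independent of any vendor API, so separate agents could in principle be swapped in without changing the pipeline.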

arXiv:2601.00097v2 [cs.AI] 14 Jan 2026


These causal FCM feedback dynamical systems form local fuzzy or partial causal rules from the sampled documents. This local causal structure in turn defines global equilibrium limit cycles that serve as scenario-like answers to causal what-if questions. They also define the very source of the FCM dynamical system’s agency: its evolving equilibrium limit cycles. This differs from ordinary feedforward agentic systems whose agency resides only in programmed commands. Mixing FCMs can give both richer learned causal knowledge bases and richer global equilibria. The extraction process is agentic [1] or partially autonomous because the FCM’s evolving global equilibria command the LLM agents to fetch and process further text that then tends to change the commanding FCM equilibria. Related work uses an autoencoder-like mapping to convert FCMs to text and continue the reverberatory process [13]. This bidirectional process keeps the FCM’s LLM agents on a type of flexible agentic leash.
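The paragraph above says that mixing FCMs can enrich both the causal knowledge base and the equilibria, but this section does not spell out the mixing rule. A minimal sketch, assuming a convex combination of edge weights over the union of the node sets (an edge absent from one FCM counts as weight 0 there), might look as follows. The function name `mix_fcms`, the node labels, and all numbers are hypothetical.

```python
from typing import Dict, List, Tuple

Edges = Dict[Tuple[str, str], float]


def mix_fcms(fcms: List[Edges], weights: List[float]) -> Edges:
    """Convex mixture of FCM edge maps (assumed rule, not taken from the paper).

    Each FCM is a dict from (cause, effect) to a signed weight. An edge that
    one FCM lacks contributes 0 from that FCM, which mimics zero-padding the
    adjacency matrices to a common node set before averaging.
    """
    if not fcms or len(fcms) != len(weights):
        raise ValueError("need one non-negative weight per FCM")
    total = sum(weights)
    if total <= 0 or any(w < 0 for w in weights):
        raise ValueError("weights must be non-negative and sum to a positive value")

    mixed: Edges = {}
    for fcm, w in zip(fcms, weights):
        share = w / total  # normalize so the mixing weights sum to 1
        for edge, value in fcm.items():
            mixed[edge] = mixed.get(edge, 0.0) + share * value
    return mixed


# Two made-up FCMs that overlap on the edge A -> B.
fcm_a = {("A", "B"): 0.8, ("B", "A"): -0.4}
fcm_b = {("A", "B"): 0.4, ("B", "C"): 1.0}

mixed = mix_fcms([fcm_a, fcm_b], [0.75, 0.25])
print(mixed)
```

With unequal mixing weights the heavier component dominates shared edges, while edges unique to the lighter component still enter the mixture at reduced strength.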

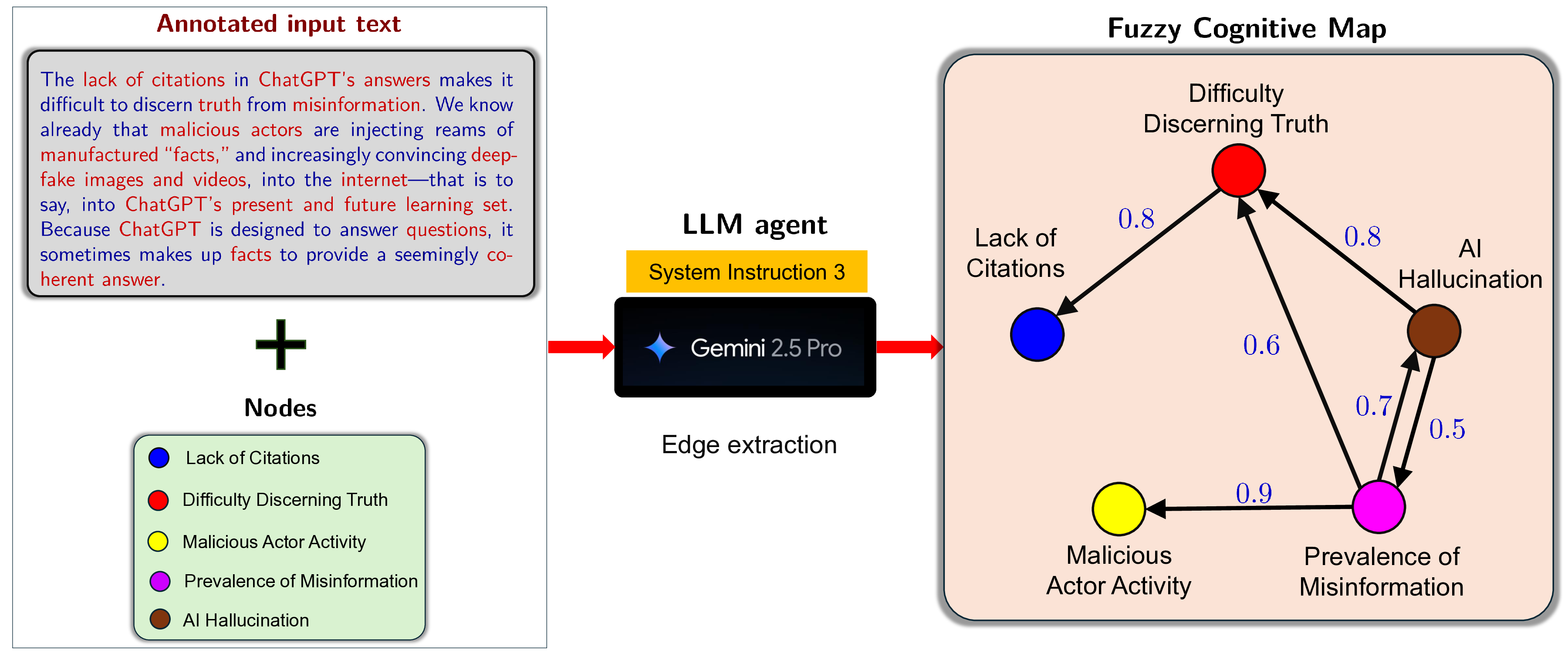

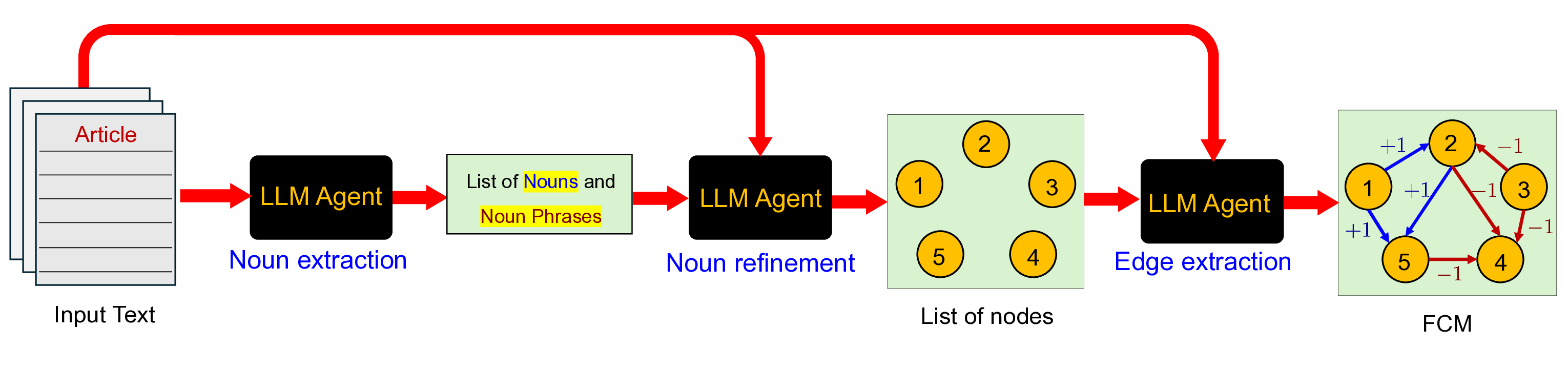

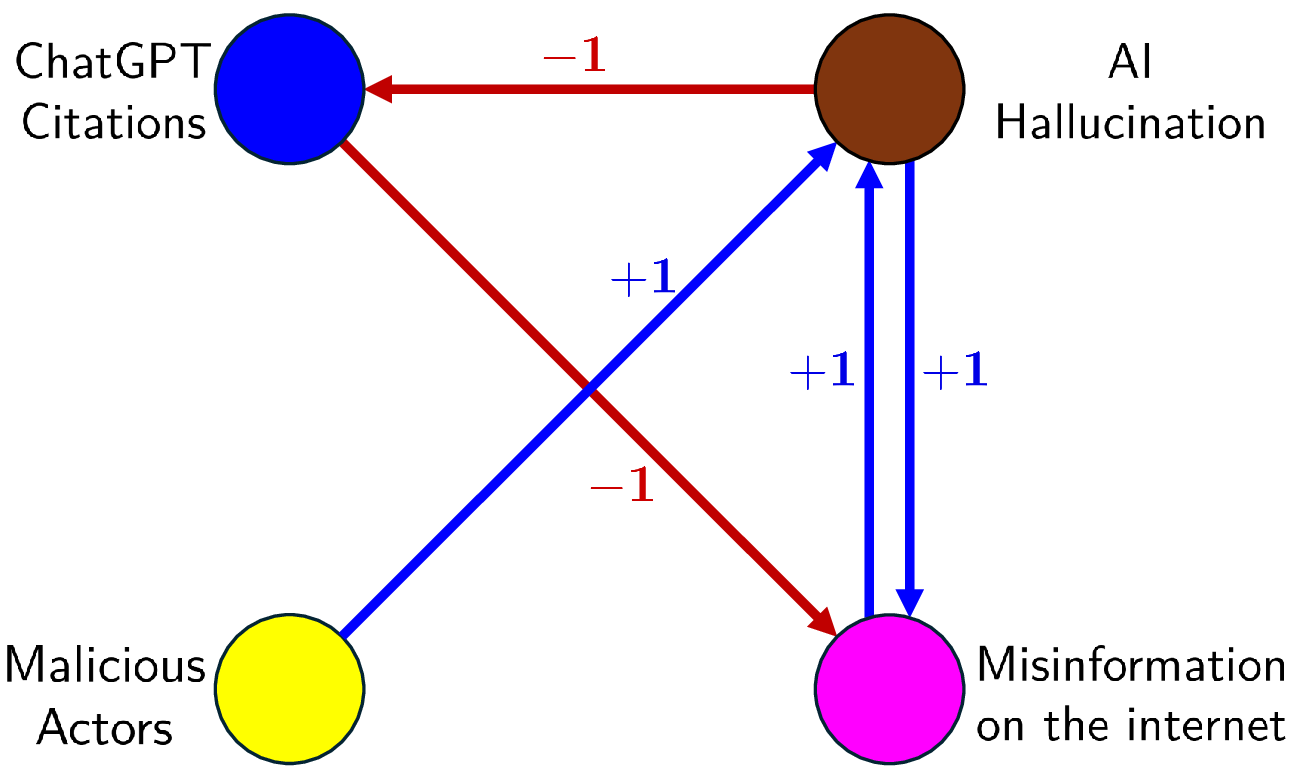

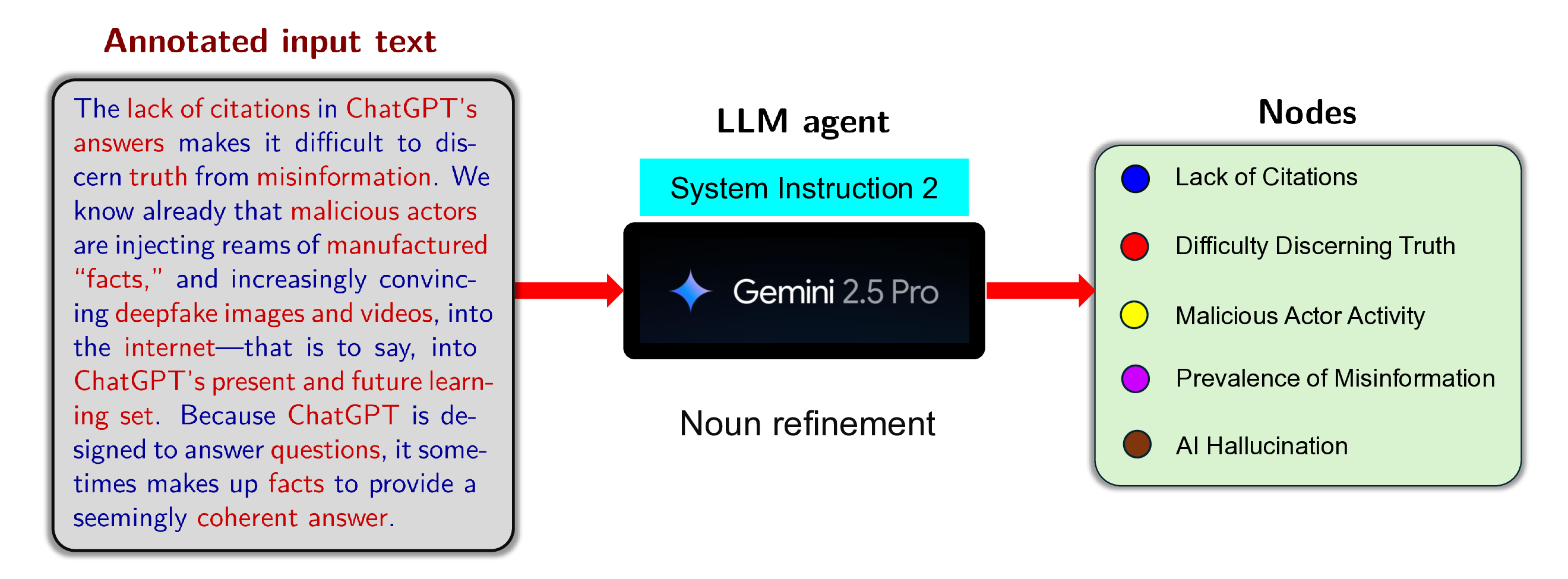

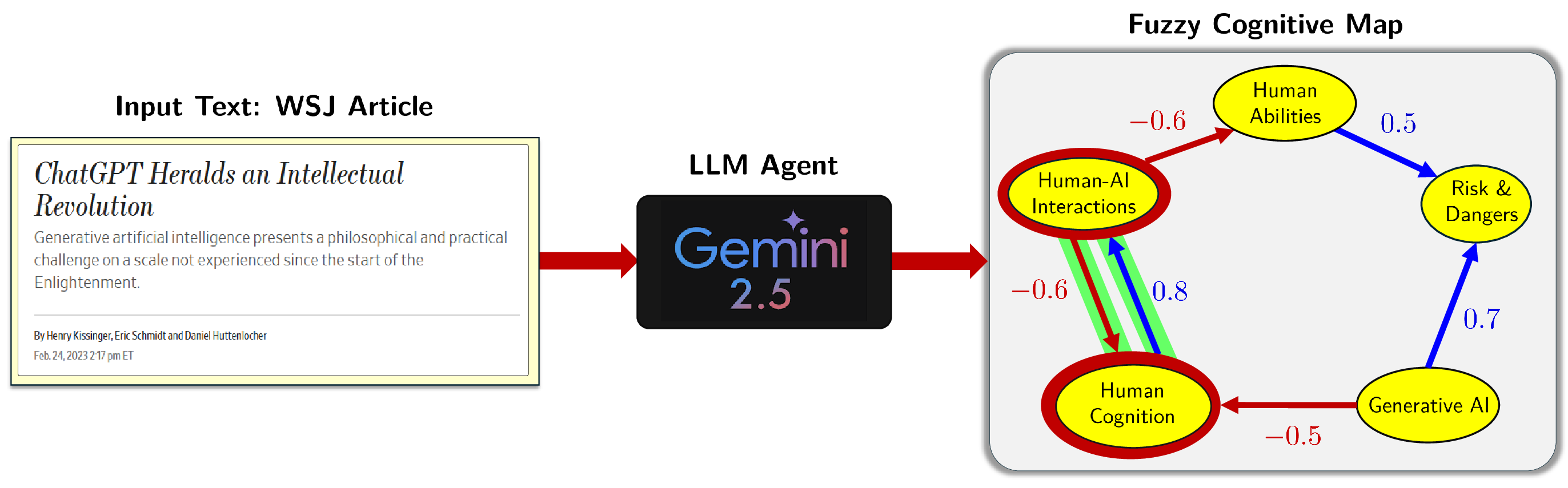

Fig. 1: A Large Language Model (LLM) extracts causal variables and their causal relationships out of a Wall Street Journal article from Henry Kissinger and colleagues about the promise of AI and then creates a Fuzzy Cognitive Map (FCM). The figure shows only 5 out of the 15 AI-extracted nodes and the directed weighted edges that connect them. The positive edges are in blue and the negative edges are in red. The figure highlights one of many feedback loops in the FCM in green. In this case: Growth of Human Cognition increases Human-AI Interactions but an increase in Human-AI Interactions decreases Human Cognition.

Figure 1 shows a feedback causal sub-network of the complete 15-node FCM in Figure 2. An LLM agent grew the complete FCM from a recent AI article titled “ChatGPT Heralds an Intellectual Revolution” by Henry Kissinger et al. in the Wall Street Journal [8]. The green highlight shows just one embedded feedback loop that traces the causal flow from Human-AI Interaction to Human Cognition and then back to itself: Human-AI Interaction →(−) Human Cognition →(+) Human-AI Interaction. Here “→(−)” denotes a negative edge and “→(+)” denotes a positive edge. Even this smaller 5-node sub-FCM encodes equilibrium limit cycles that can serve as answers to what-if questions. Figure 7 below shows this process for a simpler FCM.
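To see how a single negative feedback loop can already produce a limit cycle, the two-node loop above can be simulated directly. This is a toy sketch under assumptions that are not from the paper: the edge signs follow the Figure 1 caption, but the unit magnitudes, the bipolar states in {-1, +1}, and the synchronous sign-threshold update (a node keeps its value when its input is exactly 0) are illustrative choices.

```python
from typing import Dict, List, Tuple

NODES = ["Human-AI Interaction", "Human Cognition"]

# Signs follow the Figure 1 loop; the unit magnitudes are an assumption.
EDGES: Dict[Tuple[str, str], float] = {
    ("Human-AI Interaction", "Human Cognition"): -1.0,  # negative edge
    ("Human Cognition", "Human-AI Interaction"): 1.0,   # positive edge
}

State = Tuple[int, ...]


def step(state: State) -> State:
    """One synchronous update with a sign threshold on each node's causal input."""
    nxt: List[int] = []
    for j, target in enumerate(NODES):
        total = sum(
            state[i] * EDGES.get((source, target), 0.0)
            for i, source in enumerate(NODES)
        )
        # Positive input turns the node on, negative turns it off, zero keeps it.
        nxt.append(1 if total > 0 else -1 if total < 0 else state[j])
    return tuple(nxt)


def find_limit_cycle(start: State) -> List[State]:
    """Iterate until a state repeats and return the repeating block of states."""
    first_seen: Dict[State, int] = {}
    path: List[State] = []
    state = start
    while state not in first_seen:
        first_seen[state] = len(path)
        path.append(state)
        state = step(state)
    return path[first_seen[state]:]


# What-if question: interaction is high (+1) while cognition is low (-1).
cycle = find_limit_cycle((1, -1))
for s in cycle:
    print(dict(zip(NODES, s)))
```

Under these assumptions the loop never settles to a fixed point: the state visits four patterns in turn and then repeats, which is the kind of equilibrium limit cycle that can be read as a scenario-like answer to the what-if input.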

These learned FCM knowledge graphs consist of local causal rules. The rules give an immediate local form of interpretability or explainable AI (XAI) [2,4,6,


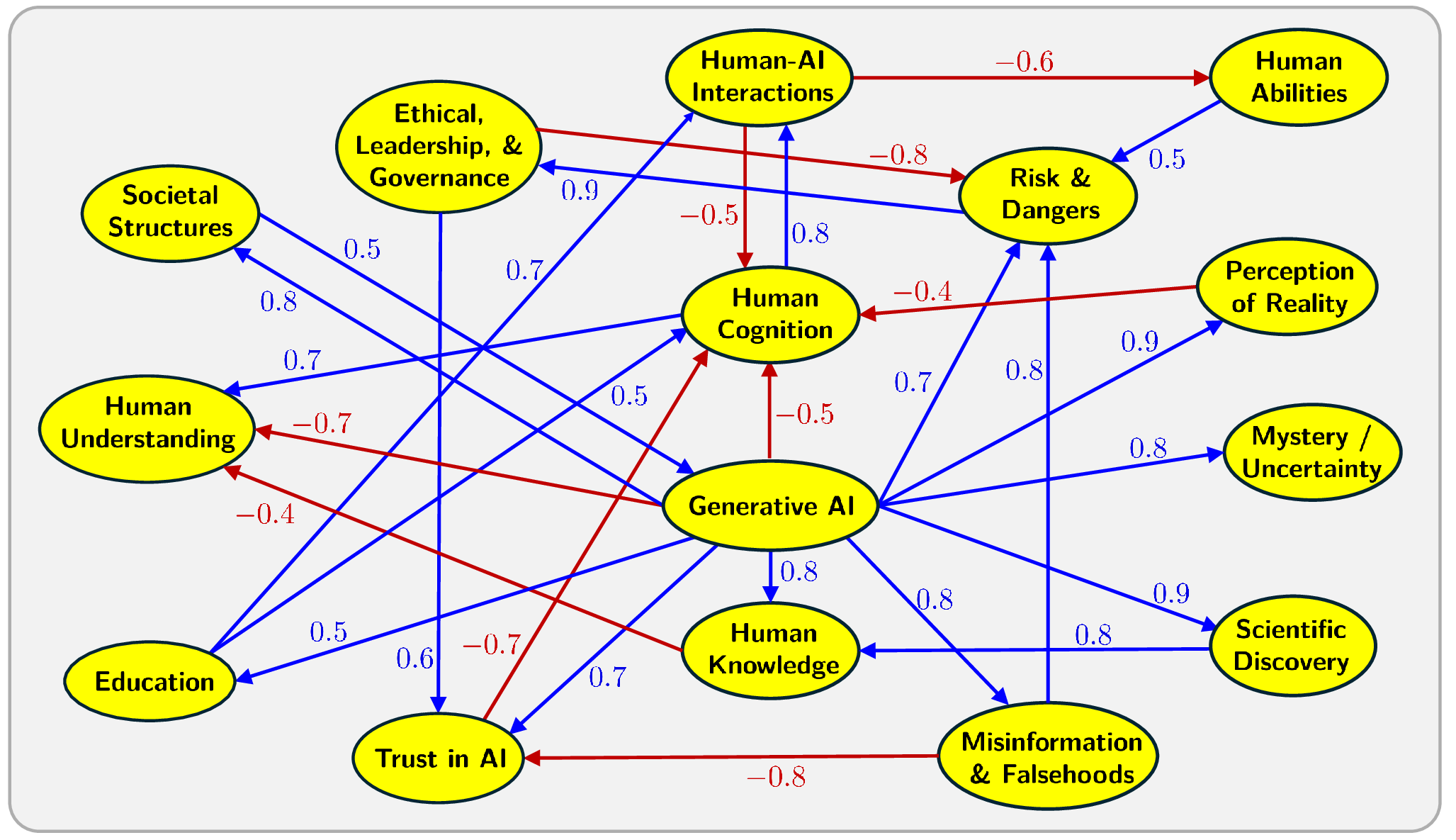

Fig. 2: A 15-node FCM extracted by the LLM from the WSJ article titled “ChatGPT Heralds an

Intellectual Revolution” by Henry Kissi

…(Full text truncated)…
