Learning to Anchor Visual Odometry: KAN-Based Pose Regression for Planetary Landing

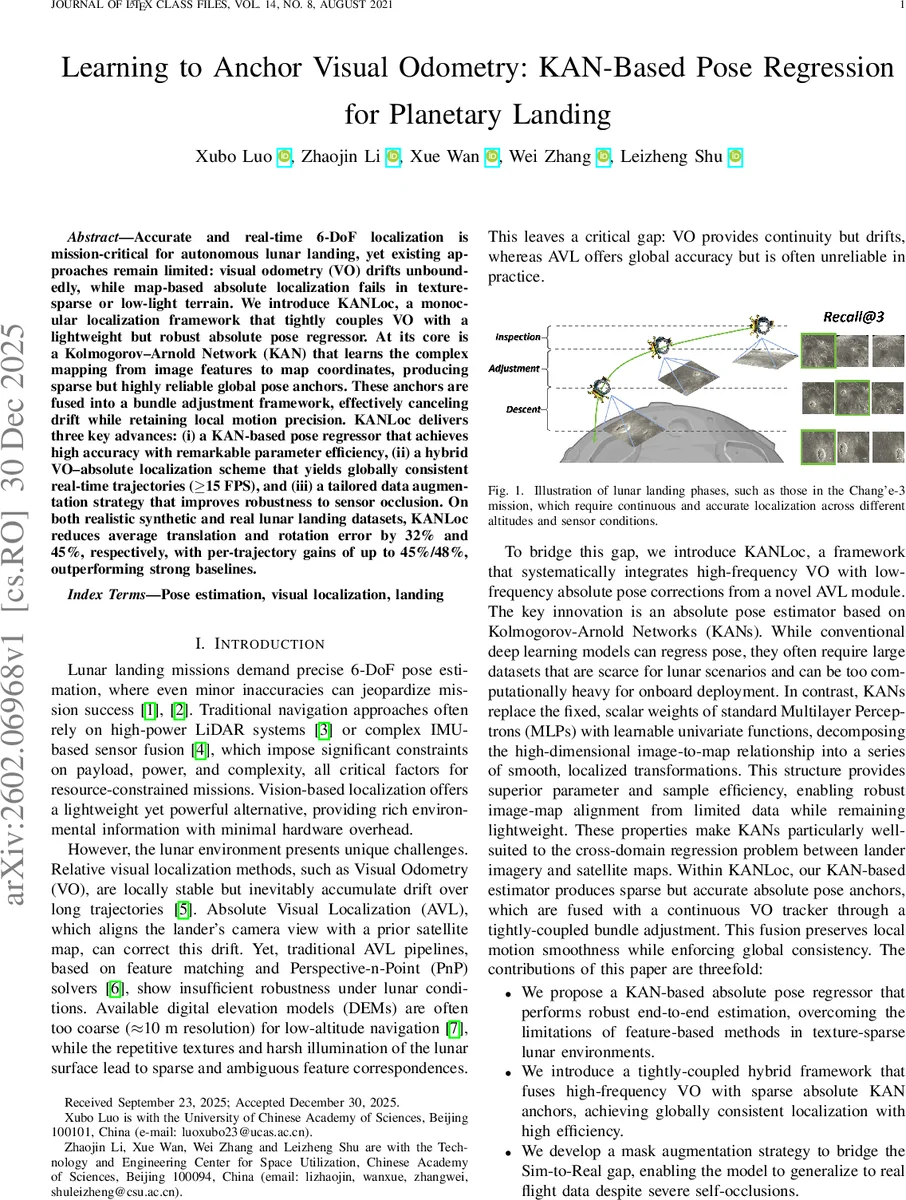

Accurate and real-time 6-DoF localization is mission-critical for autonomous lunar landing, yet existing approaches remain limited: visual odometry (VO) drifts unboundedly, while map-based absolute localization fails in texture-sparse or low-light terrain. We introduce KANLoc, a monocular localization framework that tightly couples VO with a lightweight but robust absolute pose regressor. At its core is a Kolmogorov-Arnold Network (KAN) that learns the complex mapping from image features to map coordinates, producing sparse but highly reliable global pose anchors. These anchors are fused into a bundle adjustment framework, effectively canceling drift while retaining local motion precision. KANLoc delivers three key advances: (i) a KAN-based pose regressor that achieves high accuracy with remarkable parameter efficiency, (ii) a hybrid VO-absolute localization scheme that yields globally consistent real-time trajectories (≥15 FPS), and (iii) a tailored data augmentation strategy that improves robustness to sensor occlusion. On both realistic synthetic and real lunar landing datasets, KANLoc reduces average translation and rotation error by 32% and 45%, respectively, with per-trajectory gains of up to 45%/48%, outperforming strong baselines.

💡 Research Summary

The paper addresses real‑time 6‑DoF localization for autonomous lunar landings, where traditional visual odometry (VO) suffers from unbounded drift and map‑based absolute visual localization (AVL) fails in texture‑poor or low‑light terrain. The authors propose KANLoc, a monocular localization framework that tightly couples high‑frequency VO with a lightweight yet robust absolute pose regressor based on Kolmogorov‑Arnold Networks (KAN). A KAN replaces the scalar weights and fixed activation functions of a conventional multilayer perceptron with learnable univariate spline functions on the network's edges, which lets a compact model approximate the complex mapping from image features to map coordinates with far fewer parameters.
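To make the "learnable univariate function per edge" idea concrete, here is a minimal sketch of a single KAN-style layer in plain Python. It is not the authors' implementation: the class name `ToyKANLayer`, the input range of [-1, 1], the random initialization, and the use of piecewise-linear (degree-1) basis functions instead of higher-order B-splines are all assumptions made for brevity.

```python
import random

def hat_basis(x, grid_size=5, lo=-1.0, hi=1.0):
    """Evaluate grid_size + 1 piecewise-linear basis functions at x.

    Assumption: a uniform grid on [lo, hi]; inputs outside it are clamped.
    """
    h = (hi - lo) / grid_size
    x = min(max(x, lo), hi)
    return [max(0.0, 1.0 - abs(x - (lo + k * h)) / h)
            for k in range(grid_size + 1)]

class ToyKANLayer:
    """Each (output j, input i) edge owns its own learnable 1-D function."""

    def __init__(self, n_in, n_out, grid_size=5, seed=0):
        rng = random.Random(seed)
        self.grid_size = grid_size
        # coeffs[j][i][k] is coefficient k of the edge function phi_{j,i}.
        self.coeffs = [[[rng.uniform(-0.1, 0.1) for _ in range(grid_size + 1)]
                        for _ in range(n_in)]
                       for _ in range(n_out)]

    def edge(self, j, i, x):
        """phi_{j,i}(x): a weighted sum of the basis functions."""
        basis = hat_basis(x, self.grid_size)
        return sum(c * b for c, b in zip(self.coeffs[j][i], basis))

    def forward(self, xs):
        """Output j is the plain sum of its edge functions; no extra activation."""
        return [sum(self.edge(j, i, x) for i, x in enumerate(xs))
                for j in range(len(self.coeffs))]

layer = ToyKANLayer(n_in=3, n_out=2)
print(layer.forward([0.1, -0.5, 0.9]))
```

In this toy version each edge carries `grid_size + 1` coefficients, so a layer has `n_in * n_out * (grid_size + 1)` parameters. The expressive power comes from the shape of the edge functions rather than from layer width.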

The system works as follows: (1) For each incoming camera frame, DINO‑v2 descriptors are extracted and used to retrieve the top‑K (K=5) candidate map tiles by cosine similarity. (2) A learned gating mechanism blends the camera and map descriptors into a fused representation that is fed to a three‑layer KAN regressor (grid size 5). The KAN outputs a 6‑DoF pose: rotation is predicted in a 6D representation and then orthonormalized, while translation is regressed directly. A simple confidence term combined with the retrieval similarity scores selects the best pose anchor. (3) Concurrently, a classic ORB‑based monocular VO runs on the CPU, providing high‑frequency relative motion estimates and triangulated 3D landmarks. (4) The absolute pose anchors enter a tightly coupled bundle adjustment (BA), formulated as a pose‑graph optimization, as prior factors. The Sim(3) similarity transform that aligns the VO frame to the global world frame is optimized jointly with the camera poses in g2o, using Huber kernels and χ² gating (τ≈16.81) to reject outliers.
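Three of the building blocks named in these steps can be sketched in a few lines of dependency-free Python: top‑K retrieval by cosine similarity, orthonormalization of a 6D rotation output via Gram-Schmidt, and a χ² gate on an anchor residual. This is an illustrative sketch only. The function names, the diagonal noise model in the gate, and the toy inputs are assumptions, and the paper's actual gate operates inside g2o on the full residual.

```python
import math

CHI2_GATE = 16.81  # threshold quoted in the summary (tau ≈ 16.81)

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def _unit(v):
    n = math.sqrt(_dot(v, v))  # assumes v is not the zero vector
    return [x / n for x in v]

def top_k_tiles(query, tiles, k=5):
    """Return (cosine similarity, tile index) pairs for the k best tiles."""
    q = _unit(query)
    scored = [(_dot(q, _unit(t)), i) for i, t in enumerate(tiles)]
    return sorted(scored, reverse=True)[:k]

def rotation_from_6d(v):
    """Gram-Schmidt on two 3-vectors; the third column is their cross product.

    Assumes the two 3-vectors in v are not parallel.
    """
    a1, a2 = v[:3], v[3:6]
    b1 = _unit(a1)
    proj = _dot(b1, a2)
    b2 = _unit([a2[i] - proj * b1[i] for i in range(3)])
    b3 = [b1[1] * b2[2] - b1[2] * b2[1],
          b1[2] * b2[0] - b1[0] * b2[2],
          b1[0] * b2[1] - b1[1] * b2[0]]
    # Rows of the 3x3 matrix whose columns are b1, b2, b3.
    return [[b1[i], b2[i], b3[i]] for i in range(3)]

def passes_chi2_gate(residual, sigmas, tau=CHI2_GATE):
    """Accept an anchor if its squared, noise-normalized residual is <= tau.

    Assumption: independent noise per pose component (diagonal covariance).
    """
    d2 = sum((r / s) ** 2 for r, s in zip(residual, sigmas))
    return d2 <= tau

tiles = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(top_k_tiles([1.0, 0.0], tiles, k=2))
print(rotation_from_6d([2.0, 0.0, 0.0, 1.0, 3.0, 0.0]))
print(passes_chi2_gate([3.0] * 6, [1.0] * 6))
```

The value 16.81 corresponds to the 99% quantile of a χ² distribution with 6 degrees of freedom, which matches the dimension of a 6‑DoF pose residual.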

To bridge the sim‑to‑real gap, the authors employ a mask‑based data augmentation strategy that randomly occludes parts of the input images, mimicking sensor occlusions caused by landing hardware or dust. This improves the KAN regressor’s robustness to partial visibility.
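A mask-based augmentation of this kind can be sketched as follows. The summary does not specify the mask shape, size, or fill value, so the single rectangular mask, the `max_frac` limit, and the zero fill below are all assumptions. The image is a plain list of rows to keep the example free of dependencies.

```python
import random

def random_mask(image, max_frac=0.3, fill=0, rng=None):
    """Return a copy of `image` with one random rectangle set to `fill`.

    Assumptions: one axis-aligned rectangle per call, each side at most
    max_frac of the image size, and at least one pixel masked.
    """
    rng = rng or random.Random()
    h, w = len(image), len(image[0])
    mask_h = rng.randint(1, max(1, int(h * max_frac)))
    mask_w = rng.randint(1, max(1, int(w * max_frac)))
    top = rng.randint(0, h - mask_h)
    left = rng.randint(0, w - mask_w)
    out = [row[:] for row in image]  # leave the input untouched
    for r in range(top, top + mask_h):
        for c in range(left, left + mask_w):
            out[r][c] = fill
    return out

image = [[1] * 10 for _ in range(10)]
augmented = random_mask(image, rng=random.Random(0))
for row in augmented:
    print("".join(str(v) for v in row))
```

Passing a seeded `random.Random` makes the augmentation reproducible during debugging, while training would typically draw a fresh mask for every sample.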

Experiments are conducted on a high‑fidelity synthetic lunar dataset generated with Unreal Engine and AirSim (four trajectories of 1.8–2 km, with illumination levels of 0.2–1.0 and slopes up to 20°) and on real Chang’e‑3 landing imagery. All competing methods, including state‑of‑the‑art VO (ORB‑SLAM2/3, DPVO, NeRF‑VO, LEAP‑VO) and AVL (HLoc, Reloc3r, PoseDiffusion, Direct‑PoseNet) baselines, are retrained under a unified protocol (Adam, learning rate 1e‑3, 60 epochs, batch size 32). KANLoc achieves an average translation error of 7.50 m and an average rotation error of 7.78°, outperforming the next‑best VO method (ORB‑SLAM3, 11.03 m / 13.9°) by 32% and 45%, respectively, and surpassing the best learning‑based AVL method by over 30%. Notably, KANLoc maintains its performance under low illumination and on steep terrain, where other methods degrade sharply. Runtime analysis shows that the VO thread runs at ~30 FPS on a CPU, while KAN inference runs at up to 20 Hz on an RTX 4090, giving an overall system speed of ≥15 FPS, which satisfies the real‑time constraint for landing.
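The reported improvements can be checked against the averages quoted above with a one-line relative-reduction formula. The helper name `relative_reduction` is hypothetical, and the inputs are only the rounded figures given in this summary.

```python
def relative_reduction(baseline, ours):
    """Percentage by which `ours` lowers the error relative to `baseline`."""
    return 100.0 * (baseline - ours) / baseline

translation = relative_reduction(11.03, 7.50)  # ORB-SLAM3 vs. KANLoc, metres
rotation = relative_reduction(13.9, 7.78)      # ORB-SLAM3 vs. KANLoc, degrees

print(f"translation: {translation:.1f}%")  # translation: 32.0%
print(f"rotation: {rotation:.1f}%")        # rotation: 44.0%
```

The translation figure reproduces the stated 32%. The rotation figure comes out at about 44%, slightly below the stated 45%. The small gap may be due to rounding of the quoted averages or to a different averaging scheme, which the summary does not specify.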

In summary, KANLoc demonstrates that a parameter‑efficient KAN can serve as a powerful absolute pose regressor even with limited lunar training data, and that tightly coupled integration of sparse absolute anchors with high‑frequency VO via bundle adjustment can effectively eliminate drift while preserving local motion fidelity. The approach offers a practical solution for resource‑constrained planetary landers. Future work could extend the deterministic KAN to probabilistic outputs and explore multi‑anchor fusion strategies to further enhance robustness.
