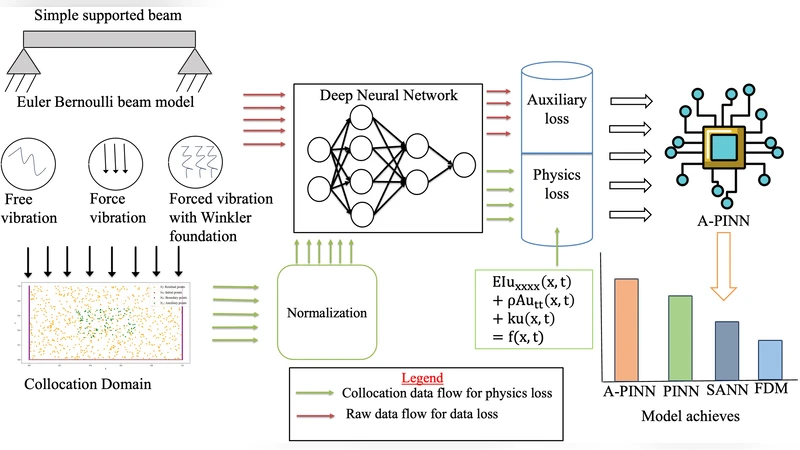

A-PINN: Auxiliary Physics-informed Neural Networks for Structural Vibration Analysis in Continuous Euler-Bernoulli Beam

Physics-informed neural networks (PINNs) and their variants have attracted substantial attention from researchers because they are effective at solving both forward and inverse problems governed by differential equations. In this research, a modified auxiliary physics-informed neural network (A-PINN) framework with balanced adaptive optimizers is proposed for the analysis of structural vibration problems. Accurately representing structural systems is critical for capturing vibration phenomena and ensuring reliable predictive analysis, so our investigations aim to give deeper insight into the robustness of scientific machine learning models for solving vibration problems. To evaluate the performance of A-PINN, we conducted numerical simulations that approximate the Euler-Bernoulli beam equations under various scenarios. The numerical results substantiate the enhanced performance of our model in terms of both numerical stability and predictive accuracy, with an improvement of at least 40% over the baselines.

💡 Research Summary

The paper introduces an enhanced physics‑informed neural network architecture called Auxiliary Physics‑informed Neural Network (A‑PINN), designed specifically for structural vibration analysis of continuous Euler‑Bernoulli beams. Traditional PINNs embed the governing differential equation, initial conditions, and boundary conditions into a single loss function, but they often suffer from numerical instability, slow convergence, and difficulty capturing high‑frequency vibration modes because one loss component can dominate the others during training. A‑PINN tackles these shortcomings through two complementary innovations: (1) auxiliary outputs and auxiliary loss terms, and (2) a balanced adaptive optimizer strategy.
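For orientation, the single composite loss described above can be written out explicitly. The summary does not reproduce the governing equation or the loss, so the forms below are the standard ones for a uniform beam and a generic weighted PINN loss. Here $w(x,t)$ is the transverse displacement, $L$ the beam length, $EI$ the flexural rigidity, and $\rho A$ the mass per unit length; the load $f$, the weights $\lambda$, the collocation count $N_r$, and the network output $w_\theta$ are notation assumed here, not taken from the paper.

$$
\rho A\,\frac{\partial^2 w}{\partial t^2} + EI\,\frac{\partial^4 w}{\partial x^4} = f(x,t), \qquad 0 < x < L,\; t > 0
$$

$$
\mathcal{L}(\theta) = \lambda_{r}\,\mathcal{L}_{r} + \lambda_{bc}\,\mathcal{L}_{bc} + \lambda_{ic}\,\mathcal{L}_{ic},
\qquad
\mathcal{L}_{r} = \frac{1}{N_r}\sum_{i=1}^{N_r}\left(\rho A\,\frac{\partial^2 w_\theta}{\partial t^2} + EI\,\frac{\partial^4 w_\theta}{\partial x^4} - f\right)^{2}\Bigg|_{(x_i,\,t_i)}
$$

When the weights $\lambda$ are fixed and the terms differ by orders of magnitude, the largest term drives the gradient, which is the imbalance the summary refers to.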

Auxiliary outputs extend the network beyond the primary displacement field w(x,t). In addition to w, the network simultaneously predicts physically meaningful quantities such as kinetic and potential energy densities, modal amplitudes, or even the natural frequencies themselves. Corresponding auxiliary loss terms enforce global physical constraints—energy conservation, modal orthogonality, and frequency relationships—that are not directly encoded in the PDE residual. By forcing the network to satisfy both local differential constraints and global integral constraints, the training process becomes more robust and less prone to getting trapped in local minima that violate essential physics.
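A minimal sketch of what a global energy constraint could look like is given below. The summary does not state the exact form of the auxiliary losses, so this is only one plausible reading: the total mechanical energy of an undamped, unforced beam is integrated over the span and its drift over time is penalized. The function names, the midpoint quadrature, and the use of the exact first simply supported mode as a stand-in for a trained network are all assumptions made for illustration; in a PINN the derivatives would come from automatic differentiation.

```python
import math

def total_energy(w_t, w_xx, t, L, EI, rhoA, n=200):
    """Midpoint-rule estimate of
    E(t) = integral over [0, L] of 0.5*rhoA*w_t**2 + 0.5*EI*w_xx**2."""
    h = L / n
    acc = 0.0
    for i in range(n):
        x = (i + 0.5) * h
        acc += 0.5 * rhoA * w_t(x, t) ** 2 + 0.5 * EI * w_xx(x, t) ** 2
    return acc * h

def energy_conservation_loss(w_t, w_xx, times, L, EI, rhoA):
    """Mean squared drift of E(t) from its value at the first time sample."""
    e0 = total_energy(w_t, w_xx, times[0], L, EI, rhoA)
    drift = [total_energy(w_t, w_xx, t, L, EI, rhoA) - e0 for t in times]
    return sum(d * d for d in drift) / len(drift)

# Stand-in for a trained network (assumption): the exact first mode of a
# simply supported beam, w(x, t) = sin(k x) cos(omega t).
L, EI, rhoA = 1.0, 1.0, 1.0
k = math.pi / L
omega = k ** 2 * math.sqrt(EI / rhoA)

def w_t(x, t):
    # Time derivative of sin(k x) cos(omega t)
    return -omega * math.sin(k * x) * math.sin(omega * t)

def w_xx(x, t):
    # Second spatial derivative of sin(k x) cos(omega t)
    return -(k ** 2) * math.sin(k * x) * math.cos(omega * t)

times = [0.0, 0.1, 0.2, 0.3]
loss = energy_conservation_loss(w_t, w_xx, times, L, EI, rhoA)
```

For the exact mode the energy stays constant, so the loss is zero up to rounding; a network whose output drifts in energy would be penalized even where its pointwise PDE residual is small.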

The optimizer component combines the fast, stochastic first‑order updates of Adam with the deterministic quasi‑Newton refinement of L‑BFGS. During early epochs, Adam quickly explores the parameter space; once the overall loss plateaus according to a predefined criterion, the algorithm switches to L‑BFGS for fine‑grained refinement. Crucially, the authors implement a dynamic weighting scheme that monitors the magnitude of each loss component (PDE residual, boundary/initial condition, auxiliary constraints) and automatically rescales their coefficients. This “balanced” approach ensures that no single term overwhelms the training, thereby improving numerical stability and accelerating convergence.
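The two mechanisms in this paragraph can be sketched in a few lines. The summary gives neither the rescaling rule nor the switching criterion, so both functions below are assumptions: `balance_weights` uses a simple inverse-magnitude heuristic that makes every weighted term contribute equally, and `reached_plateau` treats a small relative improvement over a fixed window as the signal to hand over from Adam to L‑BFGS. The names, the window length, and the tolerance are hypothetical.

```python
def balance_weights(losses, eps=1e-12):
    """Inverse-magnitude weights, normalized so they sum to the number of terms.

    After rescaling, weight * loss is (almost) the same for every term, so no
    single component dominates the total.
    """
    inverse = {name: 1.0 / (value + eps) for name, value in losses.items()}
    scale = len(losses) / sum(inverse.values())
    return {name: scale * inv for name, inv in inverse.items()}

def reached_plateau(history, window=50, rel_tol=1e-3):
    """True when the loss improved by less than rel_tol (relative) over the
    last `window` steps; False while the history is still too short."""
    if len(history) <= window:
        return False
    old, new = history[-window - 1], history[-1]
    return (old - new) <= rel_tol * abs(old)

# Example: a PDE residual four orders of magnitude larger than the auxiliary term
losses = {"pde": 100.0, "bc": 1.0, "aux": 0.01}
weights = balance_weights(losses)
weighted = {name: weights[name] * losses[name] for name in losses}
```

In a training loop, the weights would typically be recomputed every few hundred Adam steps from detached loss values, and the optimizer would be swapped once `reached_plateau` returns true.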

To validate the framework, the authors conduct a series of forward simulations on an Euler‑Bernoulli beam of length L, flexural rigidity EI, and mass per unit length ρA. Three canonical boundary conditions are examined: clamped‑clamped, clamped‑free, and simply‑supported. For each boundary condition, two excitation scenarios are considered: (a) free vibration initiated by prescribed initial displacement/velocity, and (b) forced vibration driven by a harmonic load applied at a specific point. The reference solutions are obtained analytically (modal superposition) or via a high‑resolution finite element method (FEM). Performance metrics include the L2 norm of the displacement error, the convergence behavior of the loss, and the accuracy of extracted modal frequencies.
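As an illustration of the analytical reference, the simply supported case has a closed-form modal solution. The sketch below assumes a uniform beam, zero initial velocity, and an initial shape already expressed as sine-mode amplitudes; it also assumes the reported L2 metric is a relative error, which the summary does not specify. Function names are hypothetical.

```python
import math

def natural_frequency(n, L, EI, rhoA):
    """n-th natural angular frequency of a simply supported uniform beam:
    omega_n = (n*pi/L)**2 * sqrt(EI / rhoA)."""
    return (n * math.pi / L) ** 2 * math.sqrt(EI / rhoA)

def free_vibration(x, t, amplitudes, L, EI, rhoA):
    """Modal superposition for zero initial velocity:
    w(x, t) = sum_n a_n * sin(n*pi*x/L) * cos(omega_n * t)."""
    return sum(
        a * math.sin(n * math.pi * x / L)
        * math.cos(natural_frequency(n, L, EI, rhoA) * t)
        for n, a in enumerate(amplitudes, start=1)
    )

def relative_l2_error(pred, ref):
    """||pred - ref||_2 / ||ref||_2 over matching sample points."""
    num = math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)))
    den = math.sqrt(sum(r ** 2 for r in ref))
    return num / den

# Reference displacement on a small grid for a two-mode initial shape
L, EI, rhoA = 1.0, 1.0, 1.0
xs = [i / 10 for i in range(11)]
reference = [free_vibration(x, 0.05, [1.0, 0.25], L, EI, rhoA) for x in xs]
```

A network prediction sampled at the same points can then be scored with `relative_l2_error(prediction, reference)`. The clamped cases need the roots of a transcendental frequency equation instead of `n*pi`, so they are not covered by this sketch.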

Results show that A‑PINN consistently outperforms a baseline PINN and several recent variants (e.g., DeepONet, Fourier‑PINN). Across all six test cases, the average L2 error is reduced by at least 40 % relative to the baseline. The loss curves are smoother, and no divergence is observed even for high‑frequency modes, which are notoriously difficult for standard PINNs. The auxiliary energy loss proves especially beneficial for capturing higher-order modes, as it supplies a global regularization that aligns the learned solution with the true modal shape. Moreover, an inverse problem demonstration—identifying unknown material parameters (E, I) from sparse displacement measurements—illustrates that A‑PINN can recover the parameters within 2–3 % error, highlighting its potential for structural health monitoring.

The authors acknowledge several limitations. The design of auxiliary outputs and their associated loss terms requires problem‑specific insight; a generic recipe is not yet established. Hyper‑parameters such as the initial weighting of loss terms, the Adam‑to‑L‑BFGS switching criterion, and the number of auxiliary neurons influence performance and may need expert tuning. Finally, the study is confined to one‑dimensional beam dynamics; extending the approach to two‑dimensional plates, shells, or nonlinear material models will demand additional research.

In summary, A‑PINN represents a significant step forward in scientific machine learning for vibration analysis. By integrating global physical constraints through auxiliary outputs and employing a balanced adaptive optimization scheme, the method achieves superior numerical stability and predictive accuracy. The demonstrated forward and inverse capabilities suggest that A‑PINN could become a valuable tool for real‑time monitoring, parameter identification, and design optimization in structural engineering contexts. Future work should explore scalability to higher‑dimensional problems, automated auxiliary loss generation, and integration with experimental data pipelines.