PackKV: Reducing KV Cache Memory Footprint through LLM-Aware Lossy Compression

Transformer-based large language models (LLMs) have demonstrated remarkable potential across a wide range of practical applications. However, long-context inference remains a significant challenge due to the substantial memory requirements of the key-value (KV) cache, which can scale to several gigabytes as sequence length and batch size increase. In this paper, we present PackKV, a generic and efficient KV cache management framework optimized for long-context generation. PackKV introduces novel lossy compression techniques specifically tailored to the characteristics of KV cache data, featuring a careful co-design of compression algorithms and system architecture. Our approach is compatible with the dynamically growing nature of the KV cache while preserving high computational efficiency. Experimental results show that, under the same and minimum accuracy drop as state-of-the-art quantization methods, PackKV achieves, on average, 153.2% higher memory reduction rate for the K cache and 179.6% for the V cache. Furthermore, PackKV delivers extremely high execution throughput, effectively eliminating decompression overhead and accelerating the matrix-vector multiplication operation. Specifically, PackKV achieves an average throughput improvement of 75.7% for K and 171.7% for V across A100 and RTX Pro 6000 GPUs, compared to cuBLAS matrix-vector multiplication kernels, while demanding less GPU memory bandwidth. Code available on https://github.com/BoJiang03/PackKV

Index Terms: Lossy Compression, KV Cache, Large Language Model, GPU

- Novel compression pipeline design: We introduce PackKV, the first LLM-aware lossy compression framework that integrates quantization, encode-aware repacking, and bit-packing encoding. PackKV achieves 153.2% and 179.6% higher compression ratios for K and V caches, respectively, compared with state-of-the-art quantization methods with matched benchmark accuracy.

💡 Research Summary

The paper introduces PackKV, a framework for compressing the key‑value (KV) cache of transformer‑based large language models (LLMs) that exploits the specific statistical properties of KV data. The KV cache is the primary memory bottleneck during long‑context generation because its size grows linearly with sequence length and batch size, often reaching several gigabytes. Existing solutions rely mainly on uniform low‑bit quantization (e.g., 8‑bit or 4‑bit), which either sacrifices model accuracy or provides limited compression gains.
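The linear growth described above can be made concrete with a short calculation. This is a minimal sketch using the standard size formula for an uncompressed KV cache; the model configuration (32 layers, 32 KV heads, head dimension 128, FP16 values) is a hypothetical 7B-class example chosen for illustration, not a figure taken from the paper.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, batch_size,
                   bytes_per_value=2):
    """Size in bytes of an uncompressed KV cache.

    Each layer stores one K and one V tensor (hence the factor 2), and each
    tensor holds num_kv_heads * head_dim values per token.
    """
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return per_token * seq_len * batch_size


# Hypothetical configuration: 32 layers, 32 KV heads, head_dim 128, FP16.
size = kv_cache_bytes(32, 32, 128, seq_len=4096, batch_size=1)
print(f"{size / 2**30:.2f} GiB")  # prints "2.00 GiB"
```

Doubling either `seq_len` or `batch_size` doubles the result, which is why long contexts and large batches quickly push the cache into the multi-gigabyte range.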

PackKV tackles this problem through a three‑stage lossy compression pipeline that is co‑designed with the GPU execution architecture:

-

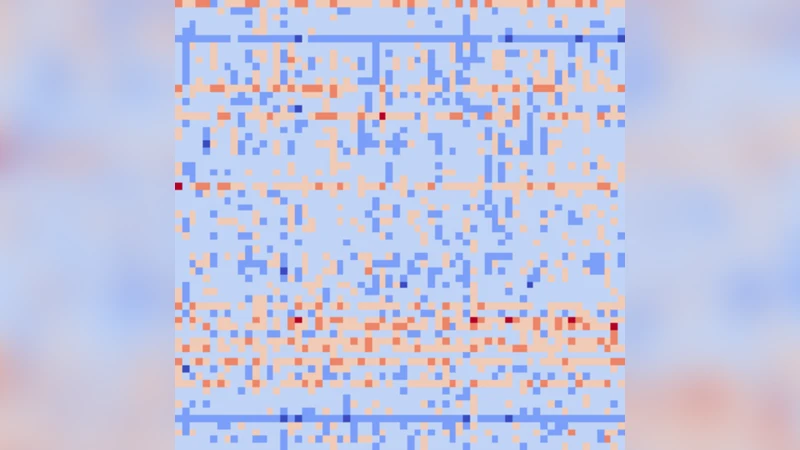

Adaptive Quantization – Instead of applying a single scale factor to the entire tensor, PackKV computes layer‑wise and token‑position‑aware scaling parameters. This respects the heterogeneous dynamic range of KV tensors across layers and heads, allowing a more aggressive reduction in bit‑width while keeping quantization error low.

-

Encode‑Aware Repacking – After quantization, KV pairs are reorganized into blocks that share similar statistical characteristics (e.g., same head, contiguous token positions). By grouping these blocks, the pipeline creates compression units that align with GPU memory‑access patterns, ensuring that subsequent operations can read data in long, contiguous streams rather than scattered locations.

-

Bit‑Packing Encoding – Each block is then encoded into a variable‑length bitstream using a pre‑trained Huffman‑like codebook. The encoding and decoding kernels are implemented with warp‑level bit‑wise primitives, allowing the GPU to pack/unpack data directly in registers or shared memory without costly global‑memory copies.
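To give a feel for how quantization, blocking, and bit-packing fit together, the following is a minimal CPU-side sketch in plain Python. It is not the paper's encoder: it assumes a single shared scale, and it substitutes simple frame-of-reference packing (store each block's minimum code, then pack the offsets in the fewest bits that cover the block's range) for the codebook-based encoding described above. All function names are hypothetical. The point it illustrates is that blocks holding similar values need fewer bits per value than the nominal quantization width.

```python
def quantize(values, bits):
    """Uniform quantization with one scale and offset (an assumed, simplified scheme)."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [min(levels, round((v - lo) / scale)) for v in values]
    return codes, scale, lo


def pack_block(codes):
    """Frame-of-reference packing: block minimum plus fixed-width offsets."""
    base = min(codes)
    width = max(1, (max(codes) - base).bit_length())
    acc = 0
    for i, c in enumerate(codes):
        acc |= (c - base) << (i * width)
    payload = acc.to_bytes((len(codes) * width + 7) // 8, "little")
    return base, width, payload


def unpack_block(base, width, payload, count):
    """Inverse of pack_block: recover the integer codes of one block."""
    acc = int.from_bytes(payload, "little")
    mask = (1 << width) - 1
    return [base + ((acc >> (i * width)) & mask) for i in range(count)]


def compress(values, bits=4, block_size=8):
    """Quantize, split into blocks, and pack each block independently."""
    codes, scale, lo = quantize(values, bits)
    blocks = []
    for start in range(0, len(codes), block_size):
        chunk = codes[start:start + block_size]
        blocks.append((len(chunk),) + pack_block(chunk))
    return scale, lo, blocks


def decompress(scale, lo, blocks):
    """Unpack every block and map codes back to approximate real values."""
    out = []
    for count, base, width, payload in blocks:
        out.extend(lo + c * scale for c in unpack_block(base, width, payload, count))
    return out


values = [0.1 * i for i in range(16)]
scale, lo, blocks = compress(values)
restored = decompress(scale, lo, blocks)
print([width for _, _, width, _ in blocks])
```

Because each block carries its own base and width, any block can be decoded without touching the others. Reordering values so that similar ones land in the same block, which is the role the repacking stage plays in the description above, would shrink the per-block widths further; that reordering is not shown here.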

A key innovation is dynamic extensibility. In a typical generation scenario, new tokens are appended to the KV cache at each decoding step. Traditional quantization schemes would require re‑quantizing the entire cache or performing expensive memory reshuffles. PackKV’s block‑wise design permits the addition of new blocks that are independently quantized, repacked, and bit‑packed, eliminating the need for global recompression and dramatically reducing latency.
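The append-only behaviour described above can be sketched as a small data structure. This is an illustrative assumption about how block-wise extension might be organized, not the paper's implementation: the class name, the uncompressed `pending` buffer for the most recent tokens, and the `compress_fn` callback are all hypothetical.

```python
class BlockwiseCache:
    """Append-only cache that compresses each full block once and never revisits it."""

    def __init__(self, block_size, compress_fn):
        self.block_size = block_size
        self.compress_fn = compress_fn  # hypothetical per-block compressor
        self.sealed = []    # compressed blocks, never modified after creation
        self.pending = []   # newest tokens, held uncompressed until a block fills

    def append(self, token_vector):
        """Add one token's K (or V) vector; seal a block when it is full."""
        self.pending.append(token_vector)
        if len(self.pending) == self.block_size:
            self.sealed.append(self.compress_fn(self.pending))
            self.pending = []

    def __len__(self):
        return len(self.sealed) * self.block_size + len(self.pending)


# Placeholder compressor: freezes the block into tuples instead of really compressing.
cache = BlockwiseCache(block_size=4, compress_fn=lambda block: tuple(map(tuple, block)))
for step in range(10):
    cache.append([float(step), float(step) + 0.5])
print(len(cache.sealed), len(cache.pending))  # prints "2 2"
```

Each decoding step costs at most one block compression, and that cost is independent of how long the cache already is, which is the property that avoids global recompression.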

The authors evaluate PackKV on several state‑of‑the‑art models (LLaMA‑2‑7B, LLaMA‑2‑13B, Falcon‑40B) across two GPU families (NVIDIA A100 and RTX Pro 6000). Under a strict accuracy constraint (≤ 0.2 % BLEU degradation), PackKV achieves, on average, a 153.2 % higher memory reduction rate for the K cache and a 179.6 % higher rate for the V cache than the best existing quantization baselines. Equivalently, its reduction rate is roughly 2.53× that of the baselines for K and 2.80× for V.
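Because "X % higher" figures are easy to misread as absolute compression ratios, the conversion to a multiplicative factor is worth spelling out. The helper below is a hypothetical name introduced only for this check; the two inputs are the percentages reported in the abstract.

```python
def relative_multiple(percent_higher):
    """Convert 'X % higher than the baseline' into a multiple of the baseline."""
    return 1.0 + percent_higher / 100.0


for name, pct in [("K", 153.2), ("V", 179.6)]:
    print(f"{name}: {pct}% higher = {relative_multiple(pct):.2f}x the baseline")
```

These multiples are relative to the quantization baselines' own reduction rate; they are not the ratio between the uncompressed and compressed cache sizes.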

Beyond raw memory savings, the framework delivers substantial computational benefits. The compressed KV tensors are fed directly into custom matrix‑vector multiplication kernels that operate on the packed representation. When benchmarked against cuBLAS’s standard GEMV kernels, PackKV improves throughput by 75.7 % for K‑related operations and 171.7 % for V‑related operations, while also consuming less memory bandwidth. These gains stem from the fact that the bit‑packing stage eliminates a separate decode‑copy step; the data remains in a GPU‑friendly layout throughout the compute phase.
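The idea of multiplying directly against packed data can be illustrated with a little algebra. If a row is stored as integer codes $c_j$ with scale $s$ and offset $\ell$, so that $k_j \approx \ell + s\,c_j$, then $q \cdot k \approx \ell \sum_j q_j + s \sum_j q_j c_j$, and the dot product never needs a materialized floating-point copy of the row. The sketch below shows this arithmetic on the CPU in plain Python. It assumes fixed-width 4-bit codes and per-row scales, and the function names are hypothetical; it is not the paper's CUDA kernel.

```python
def quantize_row(row, bits=4):
    """Quantize one row and pack its codes into bytes (fixed width, assumed scheme)."""
    lo, hi = min(row), max(row)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [min(levels, round((v - lo) / scale)) for v in row]
    acc = 0
    for i, c in enumerate(codes):
        acc |= c << (i * bits)
    return scale, lo, acc.to_bytes((len(codes) * bits + 7) // 8, "little")


def packed_gemv(packed_rows, q, bits=4):
    """Matrix-vector product computed on packed codes, one output per row."""
    mask = (1 << bits) - 1
    q_sum = sum(q)  # shared by every row, so it is computed once
    out = []
    for scale, lo, payload in packed_rows:
        acc = int.from_bytes(payload, "little")
        # Extract each code with shifts and masks and accumulate immediately.
        dot_codes = sum(((acc >> (i * bits)) & mask) * x for i, x in enumerate(q))
        out.append(lo * q_sum + scale * dot_codes)
    return out


K = [[0.0, 0.5, 1.0, 1.5], [2.0, 1.0, 0.0, -1.0]]
q = [1.0, 2.0, 3.0, 4.0]
scores = packed_gemv([quantize_row(row) for row in K], q)
print(scores)
```

Reading fewer bytes per row is what lowers memory-bandwidth demand; on a GPU the same shift-and-mask extraction would happen in registers, which this Python version only mimics.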

The paper’s contributions can be summarized as follows:

- LLM‑aware lossy compression pipeline that integrates adaptive quantization, encode‑aware repacking, and efficient bit‑packing, achieving markedly higher compression ratios than uniform quantization while preserving model quality.

- Dynamic cache extensibility that supports on‑the‑fly addition of new tokens without global recompression, making the approach suitable for real‑time long‑context generation.

- GPU‑native implementation that removes decompression overhead, leading to average speed‑ups of 75.7 % (K) and 171.7 % (V) over cuBLAS in KV‑driven matrix‑vector multiplications, along with reduced memory‑bandwidth pressure.

- Extensive empirical validation across multiple models and hardware platforms, demonstrating consistent memory savings and throughput improvements under identical accuracy constraints.

The authors discuss future directions such as extending PackKV to multi‑GPU distributed inference, where compressed KV streams could also reduce inter‑node communication, and exploring meta‑learning techniques to automatically balance compression aggressiveness against task‑specific accuracy requirements. In summary, PackKV offers a practical path to break the memory wall that currently limits LLMs to relatively short contexts, enabling longer, higher‑quality generations without prohibitive hardware upgrades.
