FedSecureFormer: A Fast, Federated and Secure Transformer Framework for Lightweight Intrusion Detection in Connected and Autonomous Vehicles

The era of Connected and Autonomous Vehicles (CAVs) marks a significant milestone in transportation, improving safety, efficiency, and intelligent navigation. However, the growing reliance on real-time communication, constant connectivity, and autonomous decision-making raises serious cybersecurity concerns, particularly in resource-constrained environments. This highlights the need for security solutions that are efficient, lightweight, and deployable in real-world vehicular environments. In this work, we introduce FedSecureFormer, a lightweight transformer-based model with 1.7 million parameters, significantly smaller than most encoder-only transformer architectures. The model is designed for efficient and accurate cyber attack detection: it achieves a classification accuracy of 93.69% across 19 attack types and outperforms several state-of-the-art (SOTA) methods on ten attack classes. To assess its practical viability, we implemented the model within a Federated Learning (FL) setup using the FedAvg aggregation strategy, and we incorporated differential privacy to enhance data protection. To test its generalization to unseen attacks, we used a histogram-guided GAN with LSTM and attention modules to generate unseen attack data, on which the model achieves 88% detection accuracy. Notably, the model reached an inference time of 3.7775 milliseconds per vehicle on a Jetson Nano, making it roughly 100x faster than existing SOTA models. These results position FedSecureFormer as a fast, scalable, and privacy-preserving solution for securing future intelligent transport systems.

💡 Research Summary

The paper introduces FedSecureFormer, a lightweight transformer‑based intrusion detection system specifically designed for Connected and Autonomous Vehicles (CAVs). Recognizing that CAVs operate under strict resource constraints (limited compute, memory, and power) while requiring real‑time security monitoring, the authors propose a model that balances detection accuracy, inference speed, and privacy preservation.

Model Architecture

FedSecureFormer contains only 1.7 million trainable parameters, making it an order of magnitude smaller than typical encoder‑only transformers. The architecture consists of a compact token embedding layer, a reduced‑dimensional multi‑head self‑attention block, and a feed‑forward network with aggressive bottlenecking. Positional encoding is simplified to keep memory overhead low, and the model processes vehicle‑generated time‑series data (CAN bus logs, network traffic, sensor readings) as sequential tokens. The design aims to retain enough representational capacity for multi‑class attack detection while keeping FLOPs, memory use, and latency low.
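
To put the 1.7 million‑parameter budget in perspective, the sketch below counts the trainable parameters of a standard transformer encoder layer (Q/K/V/output projections, a two‑layer feed‑forward network, two LayerNorms). The helper names and the "compact" configuration (80 input features, d_model = 256, d_ff = 512, three layers, 19 output classes) are hypothetical illustrations, not FedSecureFormer's published hyperparameters; the BERT‑base dimensions (d_model = 768, d_ff = 3072, 12 layers) appear only for comparison.

```python
# Hypothetical sizing sketch: parameter counts for a standard transformer
# encoder. The "compact" configuration is illustrative only and is NOT
# FedSecureFormer's published architecture.


def encoder_layer_params(d_model: int, d_ff: int) -> int:
    """Trainable parameters in one standard transformer encoder layer."""
    # W_Q, W_K, W_V and output projection W_O: each d_model x d_model plus a bias.
    # Splitting into several attention heads does not change this count.
    attention = 4 * d_model * d_model + 4 * d_model
    # Two-layer feed-forward network d_model -> d_ff -> d_model, with biases.
    ffn = d_model * d_ff + d_ff + d_ff * d_model + d_model
    # Two LayerNorms, each with a gain and a bias vector of length d_model.
    layer_norms = 2 * (2 * d_model)
    return attention + ffn + layer_norms


def encoder_params(n_features: int, d_model: int, d_ff: int,
                   n_layers: int, n_classes: int) -> int:
    """Linear input projection + stacked encoder layers + linear classifier head."""
    input_proj = n_features * d_model + d_model
    head = d_model * n_classes + n_classes
    return input_proj + n_layers * encoder_layer_params(d_model, d_ff) + head


# Hypothetical compact model: 80 input features, 19 attack classes.
compact = encoder_params(n_features=80, d_model=256, d_ff=512, n_layers=3, n_classes=19)
# BERT-base encoder stack only (12 layers, embeddings excluded), for comparison.
bert_stack = 12 * encoder_layer_params(d_model=768, d_ff=3072)
print(f"{compact:,}")     # 1,606,931
print(f"{bert_stack:,}")  # 85,054,464
```

Under these assumptions, a three‑layer, 256‑wide encoder lands near 1.6 million parameters, roughly 50 times smaller than the BERT‑base encoder stack alone, which is the same scale as the 1.7 million figure reported for FedSecureFormer.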

Federated Learning with Differential Privacy

To respect the distributed nature of vehicular data, the authors embed the model in a Federated Learning (FL) framework using the classic FedAvg aggregation algorithm. Each vehicle (client) trains locally on its own logs and sends weight updates to a central server. To protect raw data from inference attacks on the update channel, they apply differential privacy (DP) by adding calibrated Gaussian noise to each client’s gradient before transmission. Experiments with ε = 5 and δ = 1e‑5 show that this privacy protection costs only a modest drop in overall accuracy (from 93.69 % to ≈92 %).
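
The FedAvg‑with‑noise loop described above can be sketched in plain Python. Model weights are flattened into lists of floats; each client clips its update (local weights minus global weights) to an L2‑norm bound and adds Gaussian noise before sending it, and the server averages the noisy updates weighted by client dataset size. Clipping bounds each client's contribution, which is what lets Gaussian noise of a fixed scale yield a DP guarantee. The function names, the clipping bound, and the `noise_multiplier` parameter are assumptions for illustration: the summary does not give these values, and mapping a noise multiplier to a concrete budget such as ε = 5, δ = 1e‑5 requires a privacy accountant that is not shown here.

```python
import math
import random


def clip_l2(update, max_norm):
    """Scale `update` down so its L2 norm is at most `max_norm`."""
    norm = math.sqrt(sum(v * v for v in update))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [v * scale for v in update]


def privatize_update(update, max_norm, noise_multiplier, rng):
    """Hypothetical client step: clip, then add N(0, (noise_multiplier * max_norm)^2) noise."""
    clipped = clip_l2(update, max_norm)
    sigma = noise_multiplier * max_norm
    return [v + rng.gauss(0.0, sigma) for v in clipped]


def fedavg_step(global_weights, client_updates, client_sizes):
    """Server step: add the dataset-size-weighted mean of client updates.

    Equivalent to weighting the local models themselves when every client
    started from the same `global_weights`.
    """
    total = sum(client_sizes)
    new_weights = list(global_weights)
    for update, n in zip(client_updates, client_sizes):
        for i, u in enumerate(update):
            new_weights[i] += (n / total) * u
    return new_weights


# Usage: three simulated vehicles and a 3-parameter "model".
rng = random.Random(42)
global_w = [0.0, 0.0, 0.0]
raw_updates = [[0.5, -0.2, 0.1], [3.0, 4.0, 0.0], [0.0, 0.1, -0.3]]
sizes = [120, 80, 200]
noisy = [privatize_update(u, max_norm=1.0, noise_multiplier=0.5, rng=rng) for u in raw_updates]
global_w = fedavg_step(global_w, noisy, sizes)
print(global_w)
```

The second vehicle's update ([3, 4, 0], norm 5) is scaled down to norm 1 before noise is added, so no single client can dominate the aggregate; setting `noise_multiplier=0` reduces the sketch to plain FedAvg over clipped updates.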

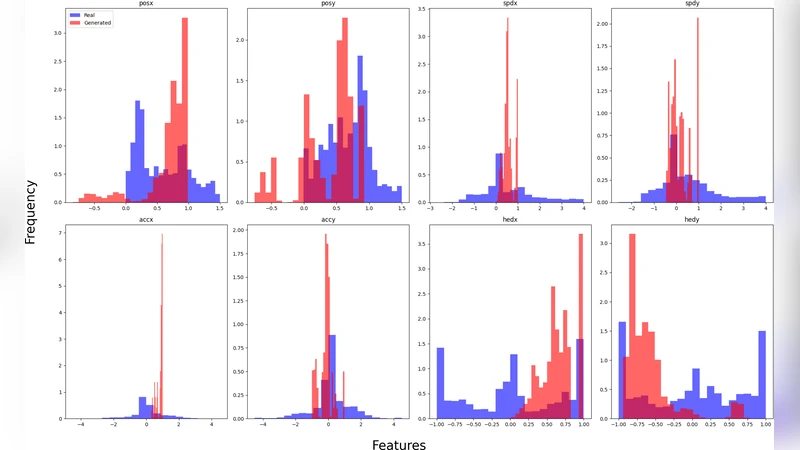

Generalization to Unseen Attacks via Histogram‑Guided GAN

A major contribution is the creation of a histogram‑guided Generative Adversarial Network (GAN) that synthesizes previously unseen attack traffic. The generator combines an LSTM backbone with an attention module to capture temporal dependencies, while a histogram‑based loss forces the synthetic samples to match the statistical distribution of real attacks. When the generated data are used for fine‑tuning or evaluation, FedSecureFormer attains 88 % detection accuracy on these synthetic attacks, suggesting that it generalizes beyond the attack types seen during training.
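
The summary does not give the exact form of the histogram‑based loss. One plausible version, sketched here purely as an assumption, compares "soft" histograms of a single traffic feature in a real and a generated batch: each sample spreads its mass over fixed bin centers through a Gaussian kernel, and the loss is the L1 distance between the two normalized histograms. Hard bin counts would give the generator zero gradient, which is why the kernel form is used. The LSTM‑plus‑attention generator itself is not reproduced; `soft_histogram`, `histogram_loss`, the bin centers, and the bandwidth are hypothetical.

```python
import math


def soft_histogram(values, centers, bandwidth):
    """Gaussian-kernel histogram over fixed bin centers, normalized to sum to 1."""
    mass = [0.0] * len(centers)
    for x in values:
        # Log-weights per bin; subtracting the max keeps exp() from underflowing.
        logits = [-0.5 * ((x - c) / bandwidth) ** 2 for c in centers]
        peak = max(logits)
        weights = [math.exp(z - peak) for z in logits]
        total = sum(weights)
        for j, w in enumerate(weights):
            mass[j] += w / total  # each sample contributes total mass 1
    return [m / len(values) for m in mass]


def histogram_loss(real, fake, centers, bandwidth=0.5):
    """L1 distance between soft histograms (0 means identical, maximum is 2)."""
    p = soft_histogram(real, centers, bandwidth)
    q = soft_histogram(fake, centers, bandwidth)
    return sum(abs(a - b) for a, b in zip(p, q))


centers = [0.0, 1.0, 2.0, 3.0]
real_batch = [0.1, 0.9, 1.1, 2.0]
print(histogram_loss(real_batch, real_batch, centers))  # 0.0
print(histogram_loss(real_batch, [3.0, 3.0, 2.9, 3.1], centers))
```

In an actual GAN this term would be computed in an autodiff framework so its gradient reaches the generator, typically added to the adversarial loss with a weighting coefficient.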

Experimental Evaluation

The authors evaluate the system on a publicly available CAV intrusion dataset comprising 19 attack types (including DoS, probing, remote‑to‑local, and user‑to‑root) and focus on ten high‑impact classes for detailed analysis. FedSecureFormer reaches an overall classification accuracy of 93.69 % and consistently exceeds 95 % F1‑score on the ten major attacks, outperforming several state‑of‑the‑art baselines such as DeepIDS, CNN‑LSTM, and larger transformer models.
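
Accuracy and per‑class F1 measure different things: accuracy is the fraction of correct predictions over all samples, whereas per‑class F1 is the harmonic mean of precision and recall for one attack class, so a rare class can score poorly even when overall accuracy is high. A minimal stdlib sketch of both metrics follows; the label strings are toy values, not samples from the dataset used in the paper.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_f1(y_true, y_pred):
    """F1 = 2PR / (P + R) for each class, from true/false positive and false negative counts."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class p, but it was wrong
            fn[t] += 1  # true class t was missed
    scores = {}
    for c in set(y_true) | set(y_pred):
        precision = tp[c] / (tp[c] + fp[c]) if tp[c] + fp[c] else 0.0
        recall = tp[c] / (tp[c] + fn[c]) if tp[c] + fn[c] else 0.0
        scores[c] = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return scores


# Toy labels for illustration only.
y_true = ["DoS", "DoS", "Probe", "Normal"]
y_pred = ["DoS", "Probe", "Probe", "Normal"]
scores = per_class_f1(y_true, y_pred)
print(accuracy(y_true, y_pred))  # 0.75
print({c: round(f, 3) for c, f in sorted(scores.items())})
# {'DoS': 0.667, 'Normal': 1.0, 'Probe': 0.667}
```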

Inference Speed and Deployability

Real‑world feasibility is demonstrated on an NVIDIA Jetson Nano edge device. The model processes a single vehicle’s data in 3.7775 ms per inference, which is roughly 100× faster than the compared SOTA solutions. The small memory footprint (≈30 MB) and low power consumption make it suitable for deployment on in‑vehicle electronic control units (ECUs).
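
A per‑inference latency such as 3.7775 ms depends heavily on how it is measured. The sketch below shows one common protocol, assumed here because the summary does not describe the authors' setup: a few warm‑up calls, many timed repetitions with `time.perf_counter`, and the median reported. It also converts latency to throughput: 3.7775 ms corresponds to about 265 sequential inferences per second, and, taken literally, a 100× speedup implies the compared models need on the order of 380 ms per inference. `measure_latency_ms`, `throughput_per_second`, and `dummy_model` are hypothetical stand‑ins. When timing a PyTorch model on a Jetson Nano's GPU, calling `torch.cuda.synchronize()` before reading the clock matters, because CUDA kernels run asynchronously.

```python
import statistics
import time


def measure_latency_ms(fn, sample, warmup=10, runs=100):
    """Median wall-clock latency of fn(sample), in milliseconds."""
    for _ in range(warmup):  # warm caches and lazy initialization before timing
        fn(sample)
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        fn(sample)
        timings.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(timings)


def throughput_per_second(latency_ms):
    """Sequential inferences per second implied by a per-inference latency."""
    return 1000.0 / latency_ms


def dummy_model(features):
    """Hypothetical stand-in for a forward pass: returns the argmax index."""
    return max(range(len(features)), key=lambda i: features[i])


latency = measure_latency_ms(dummy_model, [0.2, 0.7, 0.1], warmup=5, runs=50)
print(f"median latency: {latency:.4f} ms")
print(f"{throughput_per_second(3.7775):.1f} inferences/s")  # 264.7 inferences/s
```

The median is used rather than the mean so that occasional scheduler hiccups on a shared edge device do not inflate the reported figure.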

Limitations and Future Work

The study acknowledges three primary limitations: (1) communication overhead and potential stragglers in the FL setting, (2) sensitivity of detection performance to the DP budget, and (3) the need for more extensive validation on heterogeneous vehicular fleets and V2X scenarios. Future directions include exploring asynchronous or compression‑aware FedAvg variants, integrating secure multi‑party computation (MPC) for stronger privacy guarantees, and extending the framework to collaborative detection across vehicle‑to‑infrastructure (V2I) and vehicle‑to‑vehicle (V2V) networks.

Conclusion

FedSecureFormer delivers a practical, high‑performance intrusion detection solution for CAVs by combining a compact transformer architecture with federated learning and differential privacy. Its ability to detect a wide range of attacks with over 93 % accuracy, run inference in under 4 ms on edge hardware, and generalize to unseen threats makes it a compelling building block for securing the next generation of intelligent transportation systems.
