Coding With AI: From a Reflection on Industrial Practices to Future Computer Science and Software Engineering Education

Recent advances in large language models (LLMs) have introduced new paradigms in software development, including vibe coding, AI-assisted coding, and agentic coding, fundamentally reshaping how software is designed, implemented, and maintained. Prior research has primarily examined AI-based coding at the individual level or in educational settings, leaving industrial practitioners’ perspectives underexplored. This paper addresses this gap by investigating how LLM coding tools are used in professional practice, the associated concerns and risks, and the resulting transformations in development workflows, with particular attention to implications for computing education. We conducted a qualitative analysis of 57 curated YouTube videos published between late 2024 and 2025, capturing reflections and experiences shared by practitioners. Following a filtering and quality assessment process, the selected sources were analyzed to compare LLM-based and traditional programming, identify emerging risks, and characterize evolving workflows. Our findings reveal definitions of AI-based coding practices, notable productivity gains, and lowered barriers to entry. Practitioners also report a shift in development bottlenecks toward code review and concerns regarding code quality, maintainability, security vulnerabilities, ethical issues, erosion of foundational problem-solving skills, and insufficient preparation of entry-level engineers. Building on these insights, we discuss implications for computer science and software engineering education and argue for curricular shifts toward problem-solving, architectural thinking, code review, and early project-based learning that integrates LLM tools. This study offers an industry-grounded perspective on AI-based coding and provides guidance for aligning educational practices with rapidly evolving professional realities.

💡 Research Summary

This paper investigates how large language model (LLM)‑based coding tools are actually used by professional software engineers, what benefits and risks they perceive, and how these practices should reshape computer‑science and software‑engineering curricula. While prior work has largely examined AI‑assisted coding at the individual or classroom level, the authors fill a gap by analyzing 57 curated YouTube videos posted between late 2024 and 2025 that feature practitioners reflecting on their day‑to‑day workflows.

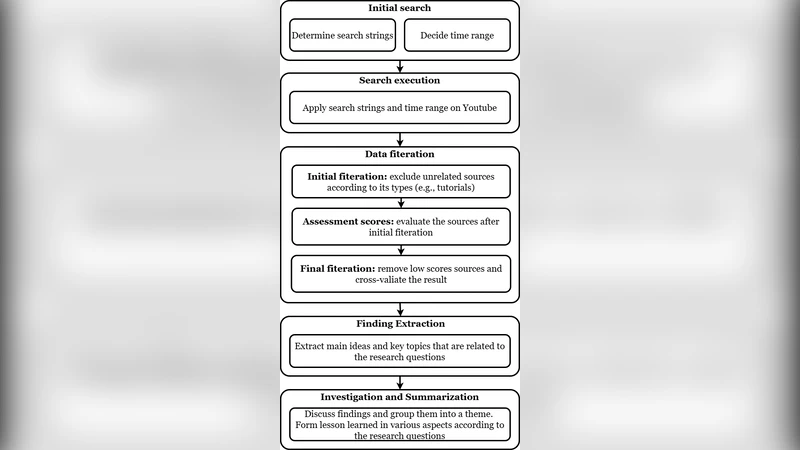

The authors first collected 312 videos using keyword queries such as “LLM coding,” “AI‑assisted development,” and “productivity.” They then applied a multi‑stage filtering process based on video length (≥10 minutes), view count (≥5 000), and source credibility (company channels, recognized experts). After a quality assessment, 57 videos representing a broad spectrum of domains—web, mobile, data pipelines, embedded systems—were retained. Transcripts were generated automatically and coded by two independent researchers using a hybrid of thematic coding and content analysis. Inter‑rater reliability (Cohen’s κ = 0.84) confirmed coding consistency. Twelve sub‑categories emerged, including definitions of AI‑based coding practices, productivity gains, emerging bottlenecks, and risk dimensions.
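The screening thresholds and the agreement statistic described above can be illustrated with a minimal Python sketch. It is not the authors' pipeline: the `Video` record, its `credible_source` flag, the sample videos, and the label lists are all hypothetical, and only the thresholds (at least 10 minutes, at least 5,000 views, a credible source) and the use of Cohen's κ come from the summary.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Video:
    title: str
    minutes: float
    views: int
    credible_source: bool  # hypothetical flag: company channel or recognized expert


def passes_filters(v: Video) -> bool:
    """Apply the thresholds quoted in the summary: >=10 min, >=5,000 views, credible source."""
    return v.minutes >= 10 and v.views >= 5000 and v.credible_source


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who each assign one label per item."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equally long, non-empty label lists")
    n = len(rater_a)
    # Observed agreement: share of items where both raters chose the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Made-up candidate videos, for illustration only.
videos = [
    Video("Agentic coding at work", 24.0, 18000, True),
    Video("Quick demo", 4.5, 90000, True),
    Video("Hobby stream", 35.0, 1200, False),
]
kept = [v.title for v in videos if passes_filters(v)]

# Made-up theme labels from two coders for six transcript segments.
coder_1 = ["productivity", "risk", "risk", "workflow", "productivity", "risk"]
coder_2 = ["productivity", "risk", "workflow", "workflow", "productivity", "risk"]
kappa = cohens_kappa(coder_1, coder_2)
```

In this toy data the coders agree on five of six segments, yet κ comes out lower than the raw agreement rate because part of that agreement is expected by chance. That correction is why κ is reported instead of simple percent agreement.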

Key findings can be grouped into four themes.

- New Coding Paradigms – Practitioners describe “vibe coding,” “AI‑assisted coding,” and “agentic coding” as collaborative workflows where the LLM acts as a co‑author, generating whole functions, scaffolding architectural skeletons, and even orchestrating test generation.

- Productivity Gains – Across the sample, engineers report a 30‑45 % reduction in development time, especially for repetitive tasks such as CRUD scaffolding, API stub creation, documentation synthesis, and unit‑test generation.

- Shifted Bottlenecks – The traditional pain points of algorithm design and debugging have moved downstream to code review, security validation, and integration testing. Because the LLM supplies rapid drafts, human engineers now spend more effort verifying correctness, maintainability, and compliance.

- Risks and Concerns – The study uncovers several intertwined threats:
  - (a) Code‑quality degradation – LLM output can contain non‑standard styles, unnecessary complexity, or hidden anti‑patterns that increase refactoring effort.
  - (b) Security vulnerabilities – models sometimes reproduce known CVE patterns or omit essential input sanitization.
  - (c) Ethical and legal issues – inadvertent copyright infringement, bias propagation, and opaque provenance of generated code.
  - (d) Erosion of fundamental problem‑solving skills – junior developers may over‑rely on prompts, limiting their algorithmic reasoning development.
  - (e) Insufficient preparation of entry‑level engineers – newcomers lacking LLM experience struggle with code comprehension and review.
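To make the input-sanitization concern in (b) concrete, here is a small, hypothetical Python sketch using the standard-library `sqlite3` module. It is not taken from the paper or from any video in the sample. The table, function names, and payload are invented to contrast a query built by string interpolation with a parameterized one, which is the kind of difference a reviewer of generated code would need to catch.

```python
import sqlite3

# In-memory database with two illustrative rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "admin"), ("bob", "dev")],
)


def find_user_unsafe(name):
    # Anti-pattern: user input is spliced directly into the SQL text.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(name):
    # Parameterized query: the driver binds the input strictly as a value.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


payload = "nobody' OR '1'='1"
leaked = find_user_unsafe(payload)  # injected condition matches every row
blocked = find_user_safe(payload)   # no user has this literal name
```

Both functions behave identically on benign input, so a quick functional test would not separate them. This illustrates why the practitioners' shift of effort toward review and security validation matters.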

From these observations, the authors derive concrete educational implications. First, curricula should pivot from a sole focus on algorithms and data structures toward “architectural thinking,” “system‑level design,” and “code‑review practices” that explicitly incorporate LLM tools. Second, project‑based learning should embed LLM‑augmented tasks, allowing students to experience both the productivity boost and the necessity of critical validation. Third, dedicated modules on AI ethics, intellectual‑property law, and secure coding are needed to sensitize future engineers to the legal and societal dimensions of AI‑generated software. Fourth, early industry exposure—through internships, co‑ops, or capstone projects that use real LLM assistants—can bridge the preparation gap for entry‑level engineers.

In conclusion, the paper provides the first large‑scale, industry‑grounded qualitative analysis of LLM‑based coding. It demonstrates that while LLMs deliver substantial efficiency gains, they also reconfigure the nature of software‑development bottlenecks and introduce new quality, security, and educational challenges. Aligning computer‑science education with these realities will require a systematic redesign of learning outcomes, assessment methods, and pedagogical tools, ensuring that graduates can harness AI assistants responsibly while retaining core problem‑solving and architectural competencies.
